Chapter 1

300 likes | 452 Views

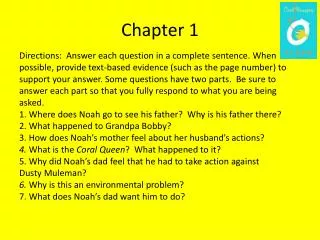

Chapter 1. Introduction to Clustering. Section 1.1. Introduction. Objectives. Introduce clustering and unsupervised learning. Explain the various forms of cluster analysis. Outline several key distance metrics used as estimates of experimental unit similarity. Course Overview. Definition.

Chapter 1

E N D

Presentation Transcript

Chapter 1 Introduction to Clustering

Section 1.1 Introduction

Objectives
• Introduce clustering and unsupervised learning.
• Explain the various forms of cluster analysis.
• Outline several key distance metrics used as estimates of experimental unit similarity.

Definition
• “Cluster analysis is a set of methods for constructing a (hopefully) sensible and informative classification of an initially unclassified set of data, using the variable values observed on each individual.”
• B. S. Everitt (1998), “The Cambridge Dictionary of Statistics”

Unsupervised Learning
• Learning without a priori knowledge about the classification of samples; learning without a teacher.
• Kohonen (1995), “Self-Organizing Maps”

Section 1.2 Types of Clustering

Objectives
• Distinguish between the two major classes of clustering methods:
  ◦ hierarchical clustering
  ◦ optimization (partitive) clustering.

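Hierarchical Clustering
[Figure: agglomerative and divisive hierarchical clustering over iterations 1 to 4]
• Agglomerative methods start with each observation in its own cluster and merge clusters at each iteration; divisive methods start with all observations in one cluster and split clusters at each iteration.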

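Propagation of Errors
[Figure: an error introduced at an early iteration is carried forward through iterations 1 to 4]
• Because a hierarchical merge or split is never undone, an error made at an early iteration propagates to every later iteration.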

Optimization (Partitive) Clustering
[Figure: in the initial state, "seeds" are placed among the observations; in the final state, each seed has moved from its old location to a new location]

Heuristic Search
1. Find an initial partition of the n objects into g groups.
2. Calculate the change in the error function produced by moving each observation from its own cluster to another group.
3. Make the change resulting in the greatest improvement in the error function.
4. Repeat steps 2 and 3 until no move results in improvement.
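The four-step heuristic search can be sketched in Python as a greedy exchange loop. This is a minimal, brute-force sketch, not the course's implementation: it assumes the error function is the total within-cluster sum of squared Euclidean distances to each cluster mean, and the names `sse`, `total_error`, and `heuristic_search` are hypothetical. It recomputes the full error for every candidate move, which is fine for small examples but slow for large n.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def sse(points: List[Point]) -> float:
    """Within-cluster sum of squared Euclidean distances to the cluster mean."""
    if not points:
        return 0.0
    n = len(points)
    center = [sum(coord) / n for coord in zip(*points)]
    return sum(sum((a - c) ** 2 for a, c in zip(p, center)) for p in points)


def total_error(data: Sequence[Point], labels: List[int], g: int) -> float:
    """Assumed error function: total within-cluster SSE over all g groups."""
    return sum(sse([p for p, lab in zip(data, labels) if lab == k]) for k in range(g))


def heuristic_search(data: Sequence[Point], labels: Sequence[int], g: int) -> List[int]:
    labels = list(labels)  # Step 1: start from the supplied initial partition.
    while True:
        current = total_error(data, labels, g)
        best_gain, best_move = 0.0, None
        # Step 2: evaluate moving each observation from its own group to every other group.
        for i, own in enumerate(labels):
            if labels.count(own) == 1:
                continue  # never empty a group
            for k in range(g):
                if k == own:
                    continue
                labels[i] = k
                gain = current - total_error(data, labels, g)
                labels[i] = own  # undo the trial move
                if gain > best_gain + 1e-12:
                    best_gain, best_move = gain, (i, k)
        # Step 4: stop when no move improves the error function.
        if best_move is None:
            return labels
        # Step 3: make the single move with the greatest improvement.
        i, k = best_move
        labels[i] = k


data = [(0.0,), (1.0,), (10.0,), (11.0,)]
print(heuristic_search(data, [0, 1, 0, 1], g=2))  # [0, 0, 1, 1]
```

Starting from a deliberately poor partition, the loop moves one observation per pass until the two tight groups {0, 1} and {10, 11} are recovered.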

Section 1.3 Similarity Metrics

Objectives
• Define similarity and what comprises a good measure of similarity.
• Describe a variety of similarity metrics.

What Is Similarity?
• Although the concept of similarity is fundamental to our thinking, it is often difficult to quantify precisely.
• Which is more similar to a duck: a crow or a penguin?
• The metric that you choose to operationalize similarity (for example, Euclidean distance or Pearson correlation) often affects the clusters you recover.

What Makes a Good Similarity Metric?
• The following principles have been identified as a foundation of any good similarity metric:
  ◦ symmetry: d(x,y) = d(y,x)
  ◦ non-identical distinguishability: if d(x,y) ≠ 0 then x ≠ y
  ◦ identical non-distinguishability: if d(x,y) = 0 then x = y
• Some popular similarity metrics (for example, correlation) fail to meet one or more of these criteria.
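The three principles can be spot-checked on a pair of profiles. The sketch below is illustrative only: it assumes a correlation-based dissimilarity of the form 1 - r (one common choice, not necessarily the one used in the course), and `check_principles`, `pearson`, `correlation_distance`, and `euclidean` are hypothetical helper names. The check on "non-identical distinguishability" uses its contrapositive, d(x,x) = 0.

```python
import math
from typing import Callable, Dict, Sequence

Vector = Sequence[float]


def pearson(x: Vector, y: Vector) -> float:
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def correlation_distance(x: Vector, y: Vector) -> float:
    # Assumed correlation-based dissimilarity: 0 when r = 1.
    return 1.0 - pearson(x, y)


def euclidean(x: Vector, y: Vector) -> float:
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def check_principles(d: Callable[[Vector, Vector], float], x: Vector, y: Vector,
                     tol: float = 1e-9) -> Dict[str, bool]:
    """Spot-check the three principles for metric d on one pair (x, y)."""
    same = list(x) == list(y)
    dxy = d(x, y)
    return {
        "symmetry": math.isclose(dxy, d(y, x), abs_tol=tol),
        # if d(x, y) != 0 then x != y, checked via its contrapositive d(x, x) == 0
        "non_identical_distinguishability": abs(d(x, x)) <= tol,
        # if d(x, y) == 0 then x == y
        "identical_non_distinguishability": same or abs(dxy) > tol,
    }


x, y = [1, 2, 3], [2, 4, 6]
print(check_principles(euclidean, x, y))
print(check_principles(correlation_distance, x, y))
```

Because y is a rescaled copy of x, the correlation distance is (numerically) zero even though x ≠ y, so it fails identical non-distinguishability; the Euclidean distance passes all three checks.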

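Euclidean Distance Similarity Metric
• Pythagorean theorem: the square of the hypotenuse is equal to the sum of the squares of the other two sides.
[Figure: right triangle from (0, 0) to the point (x1, x2), with legs of length x1 and x2]
• The Euclidean distance from (0, 0) to (x1, x2) is therefore √(x1² + x2²).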
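Generalizing the Pythagorean theorem to any number of coordinates gives the Euclidean distance between two observations. A minimal sketch (the function name `euclidean` is illustrative); Python 3.8+ also provides `math.dist`, which computes the same quantity.

```python
import math
from typing import Sequence


def euclidean(p: Sequence[float], q: Sequence[float]) -> float:
    """Square root of the summed squared coordinate differences."""
    if len(p) != len(q):
        raise ValueError("points must have the same number of coordinates")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


print(euclidean((0, 0), (3, 4)))  # 5.0
```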

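City Block Distance Similarity Metric
• City block (Manhattan) distance is the distance between two points measured along axes at right angles.
[Figure: path between the points (x1, x2) and (w1, w2) along the horizontal and vertical axes]
• For the points (x1, x2) and (w1, w2), the city block distance is |x1 - w1| + |x2 - w2|.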
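City block distance sums the absolute coordinate differences instead of squaring them. A minimal sketch with an illustrative function name:

```python
from typing import Sequence


def city_block(p: Sequence[float], q: Sequence[float]) -> float:
    """Sum of absolute coordinate differences (Manhattan distance)."""
    if len(p) != len(q):
        raise ValueError("points must have the same number of coordinates")
    return sum(abs(a - b) for a, b in zip(p, q))


print(city_block((1, 1), (4, 5)))  # 7
```

For the same two points the Euclidean distance is 5 (a 3-4-5 triangle), so the two metrics can rank pairs of observations differently.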

Correlation Similarity Metrics
[Figure: scatter plots of profiles for Tom, Jerry, and Marie, labeled Similar, Dissimilar, and No Similarity]

The Problem with Correlation

| Variable | Observation 1 | Observation 2 |
|---|---|---|
| x1 | 5 | 51 |
| x2 | 4 | 42 |
| x3 | 3 | 33 |
| x4 | 2 | 24 |
| x5 | 1 | 15 |
| Mean | 3 | 33 |
| Std. Dev. | 1.5811 | 14.2302 |

• The correlation between observations 1 and 2 is a perfect 1.0, but are the observations really similar?

Density Estimate Based Similarity Metrics
• Clusters can be seen as areas of increased observation density. Similarity is a function of the distance between the identified density bubbles (hyper-spheres).
[Figure: two density estimates, Density Estimate 1 (Cluster 1) and Density Estimate 2 (Cluster 2), with the distance between them labeled "similarity"]
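One simple way to make "distance between density bubbles" concrete is sketched below. This is an assumption for illustration, not the method used by SAS or the course: each cluster is summarized as a hypersphere centered at its mean with radius equal to its largest member-to-center distance, and the gap between sphere surfaces (0 if they touch or overlap) serves as the dissimilarity. The names `bubble` and `bubble_gap` are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, ...]


def bubble(points: Sequence[Point]) -> Tuple[Point, float]:
    """Summarize a cluster as a hypersphere: centroid plus largest distance to the centroid."""
    n = len(points)
    center = tuple(sum(c) / n for c in zip(*points))
    radius = max(math.dist(p, center) for p in points)
    return center, radius


def bubble_gap(a: Sequence[Point], b: Sequence[Point]) -> float:
    """Distance between the two sphere surfaces; smaller means more similar."""
    (ca, ra), (cb, rb) = bubble(a), bubble(b)
    return max(0.0, math.dist(ca, cb) - ra - rb)


cluster1 = [(0.0, 0.0), (2.0, 0.0)]
cluster2 = [(10.0, 0.0), (12.0, 0.0)]
print(bubble_gap(cluster1, cluster2))  # 8.0
```

Real density-based methods estimate density more carefully (for example, with kernels or nearest-neighbor counts); the hypersphere summary here only illustrates the idea of measuring similarity between bubbles rather than between individual points.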

Hamming Distance Similarity Metric
• Gene expression levels under 17 conditions (low = 0, high = 1), conditions 1 to 17 from left to right:
  ◦ Gene A: 0 1 1 0 0 1 0 0 1 0 0 1 1 1 0 0 1
  ◦ Gene B: 0 1 1 1 0 0 0 0 1 1 1 1 1 1 0 1 1
  ◦ Mismatch: 0 0 0 1 0 1 0 0 0 1 1 0 0 0 0 1 0
• D_H = number of mismatched conditions = 5
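The Hamming distance simply counts the positions at which two equal-length binary profiles differ. A minimal sketch using the Gene A and Gene B profiles from the slide, written as 17-character strings (the function name `hamming` is illustrative):

```python
def hamming(a: str, b: str) -> int:
    """Number of positions at which two equal-length profiles differ."""
    if len(a) != len(b):
        raise ValueError("profiles must have the same length")
    return sum(x != y for x, y in zip(a, b))


gene_a = "01100100100111001"  # 17 conditions, low = 0, high = 1
gene_b = "01110000111111011"
print(hamming(gene_a, gene_b))  # 5
```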

The DISTANCE Procedure
• General form of the DISTANCE procedure:

    PROC DISTANCE METHOD=method <options>;
       COPY variables;
       VAR level (variables < / option-list >);
    RUN;

• Both the PROC DISTANCE statement and the VAR statement are required.

Generating Distances (ch1s3d1)
• This demonstration illustrates how two distance metrics generated by the DISTANCE procedure affect cluster formation.
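The point of the demonstration, that the choice of metric changes which observations look closest, can be sketched outside SAS. The Python below is not the ch1s3d1 demonstration and does not reproduce PROC DISTANCE output; it builds a full pairwise distance matrix under a Euclidean and a city block metric (loosely analogous to the METHOD=EUCLID and METHOD=CITYBLOCK choices) for three made-up points. The helper names are hypothetical.

```python
import math
from typing import Callable, List, Sequence

Point = Sequence[float]
Metric = Callable[[Point, Point], float]


def euclid(p: Point, q: Point) -> float:
    return math.dist(p, q)


def cityblock(p: Point, q: Point) -> float:
    return sum(abs(a - b) for a, b in zip(p, q))


def distance_matrix(points: Sequence[Point], metric: Metric) -> List[List[float]]:
    """Full symmetric matrix of pairwise distances."""
    return [[metric(p, q) for q in points] for p in points]


def nearest_neighbor(matrix: List[List[float]], i: int) -> int:
    """Index of the observation closest to observation i (excluding i itself)."""
    others = [j for j in range(len(matrix)) if j != i]
    return min(others, key=lambda j: matrix[i][j])


points = [(0.0, 0.0), (3.0, 3.0), (0.0, 5.0)]
for name, metric in [("Euclidean", euclid), ("city block", cityblock)]:
    m = distance_matrix(points, metric)
    print(name, "nearest neighbor of point 0:", nearest_neighbor(m, 0))
```

Under Euclidean distance point 1 is closer to point 0 (about 4.24 versus 5), while under city block distance point 2 is closer (5 versus 6); a clustering method fed these matrices could therefore group the points differently.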

Section 1.4 Classification Performance

Objectives
• Use classification matrices to determine the quality of a proposed cluster solution.
• Use the chi-square statistic to test whether an association exists, and Cramer’s V to assess the relative strength of that association.

Quality of the Cluster Solution
[Figure: three classification matrices labeled No Solution, Typical Solution, and Perfect Solution]

Probability of Cluster Assignment
[Figure: classification matrix showing frequencies and the corresponding probabilities]
• The probability that a cluster number represents a given class is the cluster’s proportion of the row total.
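A classification matrix cross-tabulates known classes (rows) against assigned cluster numbers (columns); dividing each cell by its row total gives the probability described above. A minimal sketch with hypothetical class labels and cluster assignments:

```python
from collections import Counter, defaultdict
from typing import Dict, Hashable, Sequence


def classification_matrix(classes: Sequence[Hashable],
                          clusters: Sequence[Hashable]) -> Dict[Hashable, Counter]:
    """Rows are known classes, columns are assigned clusters, cells are frequencies."""
    counts: Dict[Hashable, Counter] = defaultdict(Counter)
    for c, k in zip(classes, clusters):
        counts[c][k] += 1
    return counts


def row_probabilities(counts: Dict[Hashable, Counter]) -> Dict[Hashable, Dict[Hashable, float]]:
    """Each cluster's proportion of the row (class) total."""
    probs = {}
    for c, row in counts.items():
        total = sum(row.values())
        probs[c] = {k: n / total for k, n in row.items()}
    return probs


classes = ["A", "A", "A", "B", "B"]  # hypothetical known classes
clusters = [1, 1, 2, 2, 2]           # hypothetical cluster assignments
print(row_probabilities(classification_matrix(classes, clusters)))
```

Here cluster 1 represents class A with probability 2/3, and cluster 2 represents class B with probability 1.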

The Chi-Square Statistic
• The chi-square statistic (and associated probability):
  ◦ determine whether an association exists
  ◦ depend on sample size
  ◦ do not measure the strength of the association.
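The dependence on sample size is easy to see from the Pearson chi-square formula, the sum over cells of (observed - expected)² / expected, where expected = row total × column total / N. The sketch below (hypothetical counts) computes only the statistic, not its p-value.

```python
from typing import List


def chi_square(table: List[List[float]]) -> float:
    """Pearson chi-square statistic for a two-way contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    return stat


small = [[10, 20], [20, 10]]
doubled = [[20, 40], [40, 20]]  # same proportions, twice the sample size
print(chi_square(small), chi_square(doubled))
```

Doubling every count leaves the pattern of association unchanged but doubles the statistic (from 20/3 to 40/3), which is why chi-square alone does not measure strength.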

Measuring Strength of an Association
[Figure: Cramer’s V statistic on a scale from 0 (weak) to 1 (strong)]
• Cramer’s V ranges from 0 to 1 for tables larger than 2×2; for 2×2 tables it ranges from -1 to 1.
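Cramer’s V rescales chi-square by the sample size and table dimensions: V = √(χ² / (N · (min(r, c) - 1))). The sketch below computes this unsigned form, which lies between 0 and 1; it does not reproduce the signed value that SAS reports for 2×2 tables. The function names are illustrative.

```python
import math
from typing import List


def chi_square(table: List[List[float]]) -> float:
    """Pearson chi-square statistic for a two-way contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    return sum(
        (obs - row_totals[i] * col_totals[j] / n) ** 2 / (row_totals[i] * col_totals[j] / n)
        for i, row in enumerate(table)
        for j, obs in enumerate(row)
    )


def cramers_v(table: List[List[float]]) -> float:
    """Unsigned Cramer's V: 0 means no association, 1 means perfect association."""
    n = sum(sum(row) for row in table)
    k = min(len(table), len(table[0]))
    return math.sqrt(chi_square(table) / (n * (k - 1)))


print(cramers_v([[10, 20], [20, 10]]))  # same V as the doubled table below
print(cramers_v([[20, 40], [40, 20]]))
print(cramers_v([[10, 0], [0, 10]]))    # perfect association
```

Unlike the chi-square statistic, V is unchanged when every count is doubled, so it can be compared across cluster solutions built from samples of different sizes.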