Connectionism & Consciousness





Presentation Transcript

Connectionism & Consciousness • This week’s question: How have connectionism, AI and dynamical systems influenced cognitive information processing accounts and what issues do they raise with regard to conscious and unconscious processing?

Connectionism & Consciousness • Serial vs. parallel processing • Computer metaphor • Information processing approach

Serial to parallel processing models (example: memory). How is memory organised? If memory used a library addressing system, memory errors would be unpredictable. BUT memory errors tend to be near misses that are related in meaning. If asked a question that demands information you have not encoded directly, your memory system often retrieves related information that allows you to infer the answer. So the question is: how does the memory system 'know' the right information to produce?

The hierarchical theory (Collins & Quillian, 1972) • Memory consists of nodes and links. • Nodes become activated when the concepts they represent are present in the environment. • Links represent the relationships between concepts and, in a hierarchical structure, can provide property descriptions of concepts (e.g. animal – bird – canary or chicken). • Sentence verification (true or false?): 'A canary is a canary' 1,000 msec; 'A canary is a bird' 1,160 msec; 'A canary is an animal' 1,240 msec. • BUT this did not always hold: people are sometimes faster to verify 'a chicken is an animal' than 'a chicken is a bird'. • The hierarchical theory's claim of cognitive economy [a cognitive system that conserves resources] therefore did not appear to be supported.
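The hierarchical model's prediction can be sketched by counting IS-A links: the more links that must be traversed, the slower verification should be. The hierarchy and property sets below are a hypothetical fragment, and the sketch predicts only the ordering of times, not the millisecond values.

```python
# Hypothetical hierarchy fragment: each concept has one IS-A parent
PARENT = {"canary": "bird", "chicken": "bird", "bird": "animal", "animal": None}
# Cognitive economy: each property is stored once, at the highest level it applies to
PROPERTIES = {
    "canary": {"is yellow", "can sing"},
    "bird": {"has wings", "has feathers"},
    "animal": {"has skin", "breathes"},
}

def links_to_category(concept, category):
    """Number of IS-A links climbed from concept to category (None if not an ancestor)."""
    links, node = 0, concept
    while node is not None:
        if node == category:
            return links
        node, links = PARENT[node], links + 1
    return None

def links_to_property(concept, prop):
    """Number of links climbed before the property is found at some ancestor."""
    links, node = 0, concept
    while node is not None:
        if prop in PROPERTIES.get(node, set()):
            return links
        node, links = PARENT[node], links + 1
    return None

for category in ("canary", "bird", "animal"):
    print(f"A canary is a(n) {category}: {links_to_category('canary', category)} link(s)")

# The model predicts 'a chicken is a bird' (1 link) is verified faster than
# 'a chicken is an animal' (2 links), the ordering that the data did not always show.
print(links_to_category("chicken", "bird"), links_to_category("chicken", "animal"))
```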

Spreading activation theories (Collins & Loftus, 1975) • Links represent associations between semantically related concepts. • Stimulation from the environment activates nodes, which send some activation to linked nodes; these can in turn become active. • Rumelhart, Hinton & McClelland (1986) proposed six properties of a semantic network: • A set of units (each represents a concept) • Each unit has its own state of activation • Output function: units pass activation to one another • Pattern of connectivity: links of different strengths (weights) • Activation rule that determines how the input activations to a node should be combined • Learning rules to change weights
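A minimal sketch mapping the six properties onto code. `SemanticNetwork`, its decay, threshold and learning-rate values, and the lion/tiger/stripes links are all hypothetical. The demo shows activation reaching 'stripes' only on the second step, via 'tiger', which is the mediated-priming pattern (lion primes stripes).

```python
from collections import defaultdict

class SemanticNetwork:
    """Toy spreading-activation network; parameter values are illustrative assumptions."""

    def __init__(self, decay=0.5, threshold=0.1, learning_rate=0.1):
        self.activation = defaultdict(float)   # (1) units and (2) their states of activation
        self.weights = defaultdict(dict)       # (4) pattern of connectivity: weights[a][b]
        self.decay = decay
        self.threshold = threshold
        self.learning_rate = learning_rate

    def link(self, a, b, weight):
        """Add a symmetric association of the given strength."""
        self.weights[a][b] = weight
        self.weights[b][a] = weight

    def output(self, unit):
        """(3) Output function: a unit passes on activation only above threshold."""
        a = self.activation[unit]
        return a if a > self.threshold else 0.0

    def step(self):
        """(5) Activation rule: decayed old activation plus weighted input sum, capped at 1."""
        units = set(self.weights) | set(self.activation)
        new = {}
        for u in units:
            net = sum(w * self.output(v) for v, w in self.weights[u].items())
            new[u] = min(1.0, self.decay * self.activation[u] + net)
        self.activation.update(new)            # all units update synchronously

    def hebbian_update(self):
        """(6) Learning rule: strengthen links between co-active units."""
        for a, neighbours in self.weights.items():
            for b in neighbours:
                neighbours[b] += self.learning_rate * self.activation[a] * self.activation[b]

net = SemanticNetwork()
net.link("lion", "tiger", 0.8)
net.link("tiger", "stripes", 0.8)
net.link("lion", "mane", 0.8)
net.activation["lion"] = 1.0                  # 'lion' is presented
net.step()
print(round(net.activation["stripes"], 2))    # 0.0: not reached yet
net.step()
print(round(net.activation["stripes"], 2))    # 0.64: reached via 'tiger'
```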

Evidence for spreading activation theories • Concepts in memory become active, and when activity surpasses a threshold they enter awareness. • Repetition priming • Semantic priming. Criticisms • Vagueness about how to determine the location and strength of links • If one item primes another, we assume they are linked (the argument becomes circular) • How far does activation spread? Mediated priming: lion primes stripes (presumably through tiger) • Ratcliff & McKoon (1994): if each word spreads activation to 20 words, then within 3 steps 8,000 concepts are reached; spreading activation becomes pointless if most concepts are active most of the time in ordinary conversation (does this point to the importance of context?)
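The arithmetic behind the Ratcliff & McKoon (1994) point, under the simplifying assumption stated on the slide that each concept passes activation to 20 others and that no concept is reached twice by different paths:

```python
def concepts_reached(fan_out, steps):
    """Concepts exactly `steps` links away if each concept links to `fan_out` new ones."""
    return fan_out ** steps

for k in range(1, 4):
    print(k, concepts_reached(20, k))
# 1 20
# 2 400
# 3 8000
```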

Parallel Distributed Processing: distributed representation • A concept is not represented locally (by a single node) but is distributed over a number of nodes simultaneously. • If part of the system is damaged it does not shut down, but performance gets gradually worse (degradation). • Degradation also seems to be a feature of human memory: minimal damage causes minimal memory loss (unlike computer memory, where minimal damage can be catastrophic). • Learning ability: the network automatically finds prototypes and exceptions to the prototype. • Generalisation: the network responds to a new stimulus the way it responded to similar old stimuli, a key property of human memory.
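Graceful degradation can be sketched with a simple Hebbian linear associator. Every stored association is spread across all the weights, so zeroing a random fraction of weights degrades recall gradually rather than abruptly. The pattern size, the Walsh-style bipolar patterns (chosen because they are exactly orthogonal, so undamaged recall is perfect) and `recall_accuracy` are illustrative assumptions.

```python
import random

N = 64  # units per pattern (illustrative size)

def walsh(mask):
    """Bipolar (+1/-1) pattern; patterns built from different masks are orthogonal."""
    return [1 if bin(i & mask).count("1") % 2 == 0 else -1 for i in range(N)]

# Hypothetical stored associations: input pattern -> output pattern
PAIRS = [(walsh(1), walsh(6)), (walsh(2), walsh(9)), (walsh(4), walsh(17))]

# Hebbian storage: each weight holds a share of every association (distributed)
W = [[sum(y[i] * x[j] for x, y in PAIRS) for j in range(N)] for i in range(N)]

def recall_accuracy(damage, seed=0):
    """Fraction of output units recalled correctly after zeroing a random `damage` fraction of weights."""
    rng = random.Random(seed)
    lesioned = [[0 if rng.random() < damage else w for w in row] for row in W]
    correct = 0
    for x, y in PAIRS:
        for i in range(N):
            net = sum(w * xj for w, xj in zip(lesioned[i], x))
            out = 1 if net > 0 else (-1 if net < 0 else 0)  # 0 = no response
            correct += out == y[i]
    return correct / (N * len(PAIRS))

for damage in (0.0, 0.3, 0.6, 0.9):
    print(f"{damage:.0%} of weights removed -> {recall_accuracy(damage):.2f} correct")
```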

Criticisms of Parallel Distributed Processing • Catastrophic interference: if the network learns one set of associations and then another, learning the second set can severely disrupt the first; this is not a feature of human memory. • Pinker & Prince (1988): aspects of children's learning are not accounted for by the model and require the implementation of rules, which is difficult for PDP models.
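A deliberately tiny illustration of interference, not the original back-propagation demonstrations: a single linear unit trained with the delta rule learns association A, is then trained only on association B (which shares an input unit), and largely loses A. The patterns, targets and learning rate are hypothetical.

```python
def train(weights, pairs, lr=0.1, epochs=200):
    """Delta (Widrow-Hoff) rule for one linear output unit; updates `weights` in place."""
    for _ in range(epochs):
        for x, target in pairs:
            out = sum(w * xi for w, xi in zip(weights, x))
            err = target - out
            for i, xi in enumerate(x):
                weights[i] += lr * err * xi

def max_error(weights, pairs):
    """Largest absolute error over a set of input-target pairs."""
    return max(abs(t - sum(w * xi for w, xi in zip(weights, x))) for x, t in pairs)

SET_A = [([1, 1, 0], 1.0)]    # hypothetical association learnt first
SET_B = [([1, 0, 1], -1.0)]   # hypothetical second association, sharing input unit 0

w = [0.0, 0.0, 0.0]
train(w, SET_A)
error_a_before = max_error(w, SET_A)   # close to 0: A has been learnt
train(w, SET_B)                        # A is no longer rehearsed
error_a_after = max_error(w, SET_A)    # about 0.75: learning B changed the shared weight
print(round(error_a_before, 3), round(error_a_after, 3))
```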

Computer metaphor • Mind as information processor • Brain processes symbols and stores them in LTM • Cognitive processes take time – use of experiments to measure reaction time and infer processes • Mind has a limited processing capacity • Symbol system has a neurological basis (brain)

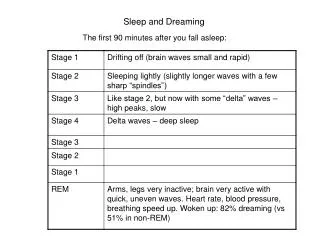

Early serial theories • Emphasis on a sequence of stages • Example: Atkinson & Shiffrin's (1968) serial theory of memory rehearsal: Sensory store → Short-term store → Long-term store (information that is not rehearsed is displaced from the short-term store)
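The serial flow through the stores can be sketched as below. `ModalMemory` is hypothetical, and the short-term capacity of 7 and the all-or-none transfer on rehearsal are simplifying assumptions, not parameters taken from Atkinson & Shiffrin (1968).

```python
from collections import deque

class ModalMemory:
    """Toy serial flow: sensory store -> short-term store (STS) -> long-term store (LTS)."""

    def __init__(self, sts_capacity=7):
        self.sts = deque(maxlen=sts_capacity)  # limited-capacity short-term store
        self.lts = set()                        # long-term store
        self.displaced = []                     # items pushed out of the STS

    def perceive(self, item, attended=True):
        """An item in the sensory store enters the STS only if attended."""
        if not attended:
            return                              # unattended items are lost from the sensory store
        if len(self.sts) == self.sts.maxlen:
            self.displaced.append(self.sts[0])  # record the oldest item before it is displaced
        self.sts.append(item)                   # a full deque drops its oldest item itself

    def rehearse(self):
        """Rehearsal transfers whatever is currently in the STS to the LTS."""
        self.lts.update(self.sts)

memory = ModalMemory()
for digit in range(1, 10):    # nine digits, two more than the assumed capacity
    memory.perceive(digit)
memory.rehearse()
print(memory.displaced)        # [1, 2]
print(sorted(memory.lts))      # [3, 4, 5, 6, 7, 8, 9]
```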

Serial theory of speech production: Thought (meaning) → Grammatical structure → Selection of words → Order of words → Selection of phonological code

Serial theory of speech production • Can be demonstrated experimentally. Supply the appropriate word for each of the following: • A tyrant, absolute ruler • Large black beetle used in Egyptian hieroglyphs • Large hairy elephant that lived in the Pleistocene • Having leaves that fall in autumn • Answers: despot, scarab, mammoth, deciduous

Serial theory of speech production • Tip-of-the-tongue phenomenon • Results suggest that word meaning is accessed first (feeling of knowing), and then the sound (phonological) form of the word is accessed (but this may fail!) • Results argue for a 2-stage process of lexical retrieval.
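The two-stage account can be sketched as two separate lookups: a tip-of-the-tongue state is success at stage 1 (meaning to word) followed by failure at stage 2 (word to sound form). The mini-lexicon, the `retrieve` function and the rough sound forms are hypothetical.

```python
# Hypothetical mini-lexicon
LEMMAS = {  # stage 1: meaning -> word
    "a tyrant, absolute ruler": "despot",
    "large black beetle used in Egyptian hieroglyphs": "scarab",
}
PHONOLOGY = {"despot": "DES-pot"}  # stage 2: word -> sound form ('scarab' deliberately missing)

def retrieve(meaning):
    """Serial two-stage lexical retrieval."""
    word = LEMMAS.get(meaning)          # stage 1: access the word from its meaning
    if word is None:
        return "don't know"             # no feeling of knowing
    form = PHONOLOGY.get(word)          # stage 2: access the phonological form
    if form is None:
        return "tip of the tongue"      # meaning found, sound form not retrieved
    return form

print(retrieve("a tyrant, absolute ruler"))                          # DES-pot
print(retrieve("large black beetle used in Egyptian hieroglyphs"))   # tip of the tongue
```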

Alternative: parallel processing • Rather than speech production involving a sequence of stages, • perhaps activation spreads to many stages of processing simultaneously: Thought (meaning), Order of words, Grammatical structure, Selection of words, Selection of phonological code

Alternative parallel processing: Connectionism (Rumelhart & McClelland, 1986) • Brain metaphor • Millions of interconnected neurons • Activity flows along connections rather than being stored in one distinct location • Information is stored in simple nodes and connections as a pattern of activity

Alternative parallel processing: Connectionism (Rumelhart & McClelland, 1986) [Figure: pictures of neurons alongside a connectionist network in which 'dog' and 'cat' are each represented as a pattern of activation]

Holistic / Distributed Debate; Associative / Semantic Memory Debate • Associative priming: nodes and connection strength • More frequent activation → stronger connection strength • Nodes as entities • Associative strength databases

Associative strength databases • Ask 100 people: what is the first word that comes to mind when I say 'salt'? Most say 'pepper', but associative strength is not equal in both directions: if presented with the word 'pepper', most say 'corn'. • Note that these are associative-semantic pairs because, as well as having strong associative strength, all are types of food and therefore also have a semantic relationship. • Associative-only pairs, e.g. traffic – jam: there is no semantic connection between the words apart from the association; together they stand for a third, completely separate concept. • High associative strength: > .1 (tomato – sauce) • Low associative strength: 0, i.e. absent from the tables! (tiger – neuron)
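Associative strength as described above is a proportion of first responses, computed separately for each direction, so it need not be symmetric. The response counts below are invented for illustration (not real norms); they simply reproduce the asymmetry in the salt/pepper example.

```python
from collections import Counter

def associative_strength(responses, target):
    """Forward associative strength: proportion of participants whose first response was `target`."""
    return Counter(responses)[target] / len(responses)

# Hypothetical first responses from 100 participants
to_salt = ["pepper"] * 60 + ["sea"] * 25 + ["water"] * 15
to_pepper = ["corn"] * 55 + ["salt"] * 30 + ["spicy"] * 15

print(associative_strength(to_salt, "pepper"))   # 0.6  (salt -> pepper)
print(associative_strength(to_pepper, "salt"))   # 0.3  (pepper -> salt)
print(associative_strength(to_salt, "neuron"))   # 0.0  (absent from the table)
```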

Alternative parallel processing: Connectionism • Semantic priming: nodes and connection strength • More frequent activation → stronger connection strength • Nodes as properties, NOT entities • A pattern of activation within the network results in the concept being activated, e.g. red, round, can be eaten, grows on trees, juicy = ? • Semantic relatedness databases: > .3 = high relatedness score (bag – box); < .1 = low relatedness score (chatter – box)
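A sketch of 'nodes as properties': each concept is a hypothetical set of property units, and an incoming pattern activates whichever concept it overlaps best. Jaccard overlap is used here as a simple stand-in; it is not the measure behind the relatedness databases mentioned above, and the property sets are invented for illustration.

```python
# Hypothetical property units; each concept is a pattern over the same units
CONCEPTS = {
    "apple":  {"red", "round", "can be eaten", "grows on trees", "juicy"},
    "cherry": {"red", "round", "can be eaten", "grows on trees", "has a stone"},
    "ball":   {"red", "round", "bounces"},
    "box":    {"has corners", "holds things"},
    "bag":    {"holds things", "is flexible"},
}

def overlap(a, b):
    """Jaccard overlap of two property sets (0 = nothing shared, 1 = identical)."""
    return len(a & b) / len(a | b)

def best_match(active_properties):
    """Concept whose property pattern best overlaps the currently active property units."""
    return max(CONCEPTS, key=lambda c: overlap(CONCEPTS[c], active_properties))

print(best_match({"red", "round", "can be eaten", "grows on trees", "juicy"}))  # apple
print(round(overlap(CONCEPTS["bag"], CONCEPTS["box"]), 2))                      # 0.33
```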

Top-down and bottom-up processing • Higher-level processes (top-down) affect more basic-level processing (bottom-up) • Semantic priming • Read this:

The procedure is quite simple. First you arrange things into two different groups. Of course one pile may be sufficient depending on how much there is to do. If you have to go somewhere else due to lack of facilities, that is the next step; otherwise you are pretty well set. It is important not to overdo things. That is, it is better to do fewer things at once rather than too many. In the short run this might not seem important, but complications can easily arise. A mistake can be expensive as well. At first the whole procedure will seem complicated. Soon, however, it will become just another facet of life. After the procedure is completed, one arranges the material into different groups again. Then they can be put into their appropriate places. Eventually they will be used once more and the whole procedure will be repeated. However, that is part of life.

Top-down and bottom-up processing • Higher-level processes (top-down) affect more basic-level processing (bottom-up) • Semantic priming • The lack of a title inhibits reading and comprehension (the passage describes washing clothes)

Automatic & Controlled processing. Unconscious: • Automatic processing requires little processing capacity • Automatic processing occurs without deliberate thought (outside of awareness) • Is automatic processing effortless and spontaneous? e.g. reading simple, common words (drink). Conscious: • Controlled processing requires a lot of processing capacity • Controlled processing requires awareness • Controlled processing is effortful and takes time, e.g. reading complex, uncommon words (hemidecortication)

Key issues for critique of the experimental approach • External validity: can we generalise to a wider population, beyond the context of testing, and over time? • Speed and accuracy measurements are only indirect evidence about internal processes • If the ultimate aim is to understand brain processes, then ERP and fMRI methods will need to be involved in experimental research (this is beginning!) • Computer simulations can only be created if theories are specified with precision and detail, so they make theories less vague • Experimental methods do not generally take heed of individual differences. • Research tends to generate theories with a narrow focus that do not explain the 'cognitive system' as a whole.

CONNECTIONISM approach • A class of models that all have in common the principle that processing occurs through the action of many simple interconnected units • Concept 1: many simple processing units connected together • Concept 2: activation spreads around the network in a way that is determined by the strength of the connections between the units.

CONNECTIONISM approach • Distinction between connectionist models that do and do not learn. • The Interactive Activation & Competition model (IAC) is an example of a connectionist model that does not learn (McClelland & Rumelhart, 1981) • Back-propagation models learn through back-propagation of error during training

CONNECTIONISM approach • Distinction between: • the architecture of a network (describes the layout: number of units and how they are connected) • the algorithm, which determines how activation spreads around the network • the learning rule, which specifies how the network learns (e.g. Hebbian learning)
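The three-way distinction can be made concrete in a few lines. In this hypothetical sketch the architecture is a weight matrix (3 input units fully connected to 2 output units), the algorithm is a weighted sum, and the learning rule is simple Hebbian learning with the output units clamped to the desired pattern during training; the sizes and learning rate are assumptions.

```python
# Architecture: 3 input units fully connected to 2 output units
# (weights[j][i] is the connection from input unit i to output unit j)
N_IN, N_OUT = 3, 2
weights = [[0.0] * N_IN for _ in range(N_OUT)]

def propagate(weights, inputs):
    """Algorithm: each output unit's activation is the weighted sum of its inputs."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]

def hebbian_update(weights, inputs, outputs, lr=0.5):
    """Learning rule (Hebbian): change w_ji in proportion to input_i * output_j."""
    for j, y in enumerate(outputs):
        for i, x in enumerate(inputs):
            weights[j][i] += lr * x * y

# Training: pair input pattern [1, 0, 1] with output pattern [1, 0]
hebbian_update(weights, [1, 0, 1], [1, 0])
print(propagate(weights, [1, 0, 1]))   # [1.0, 0.0]
```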

IAC model • Many simple processing units arranged in 3 levels: input level: visual feature units; middle level: units correspond to individual letters; output level: each unit corresponds to a word. • Each unit is connected to units in the levels immediately below and above it. Each of these connections is either facilitatory (excitatory, positive) or inhibitory (negative). Facilitatory connections make the receiving units more active; inhibitory connections make them less active. • When a unit becomes activated, activation is sent simultaneously along its connections to all connected units (positive or negative activation).

IAC model • Fragment of an IAC model of word recognition: draw the inhibitory (o) and facilitatory connections! Word units: able, trip, time, trap, take, cart. Letter units: A, N, G, S, T.

IAC model • The letter 'T' would excite the word units 'TAKE' and 'TASK' in the level above, but would inhibit 'CAKE' and 'CASK'. • An element of competition is introduced. • 'T' means that 'TIME', 'TAKE' and 'TASK' will be activated, while at the same time words that do not begin with 'T' will be inhibited ('CAKE', 'COKE' and 'CASK'). • Activation from the word level back to the letter level means that the letters of all words beginning with 'T' will be slightly activated and 'easier to see'. • Because letters in the context of a word receive activation from the word units, the model explains the word superiority effect: 'T' in a word is easier to see than in isolation (where there is no top-down activation). • If the next letter is 'A', it activates 'TAKE' and 'TASK' and inhibits 'TIME', which will also be inhibited within the word level by 'TAKE' and 'TASK'. 'A' will also activate 'CASK' and 'CAKE' (some way behind the words beginning with 'T'), but if the next letter is 'K' the clear leader will be 'TAKE'.
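A stripped-down sketch of the word-level competition described above, using the slide's words. Assumptions: letters are clamped as input by position, word-to-letter (top-down) feedback is omitted, and the parameter values are illustrative rather than those of McClelland & Rumelhart (1981). The update follows the general IAC form: positive net input drives a unit towards its maximum, negative net input towards its minimum, and activation decays towards rest.

```python
WORDS = ["TAKE", "TASK", "TIME", "CAKE", "CASK", "COKE"]

# Illustrative parameter values (assumptions)
EXCITE, INHIBIT, LATERAL, DECAY = 0.1, 0.05, 0.2, 0.1
A_MAX, A_MIN, REST = 1.0, -0.2, 0.0

def settle(visible, steps=100):
    """Run word-level IAC dynamics for {position: letter} input; return final activations."""
    act = {w: REST for w in WORDS}
    for _ in range(steps):
        new = {}
        for w in WORDS:
            # Bottom-up: letters consistent with the word excite it, inconsistent ones inhibit it
            net = sum(EXCITE if w[pos] == ch else -INHIBIT for pos, ch in visible.items())
            # Competition: every other active word unit inhibits this one
            net -= LATERAL * sum(max(act[v], 0.0) for v in WORDS if v != w)
            a = act[w]
            if net > 0:
                delta = net * (A_MAX - a) - DECAY * (a - REST)
            else:
                delta = net * (a - A_MIN) - DECAY * (a - REST)
            new[w] = min(A_MAX, max(A_MIN, a + delta))
        act = new  # synchronous update
    return act

for seen in ["T", "TA", "TAK", "TAKE"]:
    act = settle(dict(enumerate(seen)))
    print(seen, {w: round(a, 2) for w, a in act.items()})
```

With 'T' alone, the three T-words tie above zero and the C-words are pushed below rest; once 'K' arrives, 'TAKE' is the clear leader.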

IAC model • Over time the pattern of activation settles into a stable configuration in which only 'TAKE' remains active, and the word is 'seen' (recognised). • Because this model of letter and word recognition is highly interactive, evidence that restricts the role of context is problematic for the IAC model. • In more recent models, connection strengths are learnt, back-propagation being the most widely used connectionist learning rule.

Back-propagation • Enables networks to learn to associate input patterns with output patterns • Error-reduction learning: an algorithm that enables networks to be trained to reduce the error between what the network actually outputs, given a particular input, and what it should output. • The simplest network architecture that can be trained by back-propagation has 3 layers (levels): input, hidden and output.

Back-propagation • All connections start with random weights. • In the case of 'DOG', the pattern of activation is distributed over the input units, with no single unit corresponding to a single letter; for example, units 1 and 3 might be on and unit 2 off. • Activation is then passed on to the hidden units according to the values (weights) of the connections between the input and hidden units. Each hidden unit sums its incoming activation. • The output of a unit is a non-linear function of its input (the logistic function).

Back-propagation • Each unit has an individual threshold (bias) that can be learnt like any other weight; activation is passed on to the output units so that they eventually have an activation value. • These output activation values are unlikely to be the correct ones, as the weights were initially random. • The learning rule then modifies the connections in the network so that the output will be a bit more like it should be. • The difference between the actual and the target outputs is computed, and the values of all the weights from the hidden to the output units are adjusted to make the difference smaller. The error is then back-propagated to change the weights between the input and hidden units.
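A minimal sketch of the training procedure described above, assuming logistic units, biases learnt as ordinary weights, and online updates one input-output pair at a time. A hypothetical XOR training set stands in for the 'DOG' example because XOR cannot be learnt without a hidden layer; `train_backprop`, the layer size, learning rate, epoch count and seed are illustrative assumptions.

```python
import math
import random

def sigmoid(x):
    """Logistic activation function."""
    return 1.0 / (1.0 + math.exp(-x))

def train_backprop(patterns, n_hidden=3, lr=0.5, epochs=2000, seed=1):
    """Train an input-hidden-output network; return (error before, error after, forward fn)."""
    rng = random.Random(seed)
    n_in, n_out = len(patterns[0][0]), len(patterns[0][1])
    # Random starting weights; the last weight in each row is the unit's bias,
    # learnt like any other weight via a constant extra input of 1.
    w_ih = [[rng.uniform(-1, 1) for _ in range(n_in + 1)] for _ in range(n_hidden)]
    w_ho = [[rng.uniform(-1, 1) for _ in range(n_hidden + 1)] for _ in range(n_out)]

    def forward(x):
        xb = list(x) + [1.0]
        hidden = [sigmoid(sum(w * v for w, v in zip(row, xb))) for row in w_ih]
        hb = hidden + [1.0]
        out = [sigmoid(sum(w * v for w, v in zip(row, hb))) for row in w_ho]
        return xb, hidden, hb, out

    def total_error():
        return sum(0.5 * (t - y) ** 2
                   for x, target in patterns
                   for t, y in zip(target, forward(x)[3]))

    start = total_error()
    for _ in range(epochs):
        for x, target in patterns:               # one input-output pair at a time
            xb, hidden, hb, out = forward(x)
            # Output error signal: (target - actual) times the logistic derivative
            d_out = [(t - y) * y * (1 - y) for t, y in zip(target, out)]
            # Back-propagate the error signal through the hidden->output weights
            d_hid = [hidden[j] * (1 - hidden[j]) *
                     sum(d_out[k] * w_ho[k][j] for k in range(n_out))
                     for j in range(n_hidden)]
            for k in range(n_out):               # adjust hidden->output weights
                for j in range(n_hidden + 1):
                    w_ho[k][j] += lr * d_out[k] * hb[j]
            for j in range(n_hidden):            # then input->hidden weights
                for i in range(n_in + 1):
                    w_ih[j][i] += lr * d_hid[j] * xb[i]
    return start, total_error(), lambda x: forward(x)[3]

XOR = [([0, 0], [0]), ([0, 1], [1]), ([1, 0], [1]), ([1, 1], [0])]
before, after, net = train_backprop(XOR)
print(f"error before {before:.3f}, after {after:.3f}")
print([round(net(x)[0], 2) for x, _ in XOR])
```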

Back-propagation • The whole process can then be repeated for a different input-output pair. • Eventually the weights of the network converge on values that give the best output averaged across all input-output pairs. • A common modification is to introduce recurrent connections between the hidden layer and a context layer that stores the past state of the hidden layer. The network can then learn to encode sequential information. • Most interest is in the behaviour of the trained network rather than in the training.
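The recurrent modification can be sketched as the forward pass of an Elman-style simple recurrent network with random, untrained weights (training would again use back-propagation). `make_srn`, `run_srn`, the one-hot inputs and the layer sizes are hypothetical. The point shown is that, because the context layer holds a copy of the previous hidden state, the same input can produce a different hidden state depending on what came before.

```python
import math
import random

def make_srn(n_in, n_hidden, seed=0):
    """Random, untrained weights for a simple recurrent network."""
    rng = random.Random(seed)
    def matrix(rows, cols):
        return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]
    return {"input": matrix(n_hidden, n_in),        # input -> hidden
            "context": matrix(n_hidden, n_hidden)}  # context -> hidden

def run_srn(net, sequence):
    """Return the hidden state after each input in the sequence."""
    n_hidden = len(net["input"])
    context = [0.0] * n_hidden                 # empty context before the first input
    states = []
    for x in sequence:
        hidden = []
        for j in range(n_hidden):
            total = sum(w * v for w, v in zip(net["input"][j], x))
            total += sum(w * c for w, c in zip(net["context"][j], context))
            hidden.append(1.0 / (1.0 + math.exp(-total)))   # logistic activation
        states.append(hidden)
        context = list(hidden)                 # copy the hidden layer into the context layer
    return states

A, B, C = [1, 0, 0], [0, 1, 0], [0, 0, 1]      # hypothetical one-hot inputs
srn = make_srn(n_in=3, n_hidden=4)
after_ab = run_srn(srn, [A, B])[-1]
after_cb = run_srn(srn, [C, B])[-1]
print([round(h, 3) for h in after_ab])
print([round(h, 3) for h in after_cb])
```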

Connectionism, AI & Dynamical Systems • Dramatic new theoretical framework (Kuhn: paradigm shift) • Recasts old problems in new terms • May discover new solutions obscured by prior ways of thinking • Redefines what the problems in cognition are

1970s: Metaphor of the brain as a digital computer. • Rationalised cognition: it became possible to study cognition in an explicit, formal manner. • When cognition was first thought of in computational terms, the digital computer was used as a framework for understanding it: processing was carried out by discrete processor operations that could be described as rules and were executed in serial order. The memory component was distinct from the processor.

1980s: difference between the brain & a digital computer may be important. • Connectionist approaches came into mainstream cognitive psychology

Connectionism • The response function is non-linear, which has important consequences for processing: nonlinearity allows crisp, categorical behaviour in some circumstances and graded, continuous responses in others. • What the system knows is captured by the pattern of connections and the weights associated with them. • Connectionist systems represent information as patterns of activation across different units rather than using symbolic systems.
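To illustrate the point about nonlinearity, a sketch assuming the logistic response function used in back-propagation networks: the same function gives graded responses when net inputs are small and near all-or-none responses when they are scaled up. The `gain` parameter is a hypothetical stand-in for larger connection weights.

```python
import math

def logistic(net, gain=1.0):
    """Logistic response function; `gain` scales the net input (e.g. larger weights)."""
    return 1.0 / (1.0 + math.exp(-gain * net))

inputs = [-1.0, -0.5, -0.1, 0.1, 0.5, 1.0]
graded = [round(logistic(x, gain=1.0), 2) for x in inputs]   # responses vary smoothly
crisp = [round(logistic(x, gain=50.0), 2) for x in inputs]   # responses snap towards 0 or 1
print(graded)
print(crisp)
```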

Connectionism • Key question: who determines the weights? • Key development: learning algorithms that allow the network to learn the values of the weights. • The style of learning was inductive: the network was exposed to samples of the target behaviour and adjusted its weights in small incremental steps, so that over time response accuracy improved. • Ideally, the network would also be able to generalise its performance to novel stimuli, demonstrating that it had learnt the underlying function that relates output to input rather than just memorising the trained examples.

Connectionism: Issues & Controversies • Computer metaphor: the use of rules for analysis replaced the pattern recognition (template matching) of early models • Information processing was bottom-up: perceptual features were processed first, yielding a representation that was passed on to successively higher levels of processing • Bottom-up processing was challenged by the influence of context. A letter flashed on a screen was identified better if it appeared in a real word rather than in isolation or embedded in a non-word. This seems to be an example of top-down processing: higher processes influencing supposedly lower ones.

Connectionism: Issues & Controversies • Growing evidence that the cognitive system was able to process at multiple levels in parallel rather than being restricted to executing a single instruction at a time. McClelland & Rumelhart's (1981) interactive activation (IAC) model of word perception was the first to depart from the digital framework.

Connectionism: Issues & Controversies • How to account for the regularity of human behaviour is a key question for cognitive science. • There would be nothing to explain if behaviour were entirely random or limited to a fixed repertoire that could be memorised. • But behaviour is both patterned and often productive, as when these patterns are generalised to novel circumstances. • Do we use rules or associations to account for this regularity?

Connectionism: Issues & Controversies • Assumption: underlying behaviour is a set of rules, which explains the patterned nature of human cognition. • Many children initially know only a small number of verbs and produce correct regular and irregular past-tense forms. Later they make mistakes, giving irregular verbs regular endings ('goed' instead of 'went'). Children then learn which verbs follow the rule (regular) and which have to be memorised (irregular). Development is therefore 'U-shaped' (good, then worse, then good again). • A rule-based account of this phenomenon is: initial memorising, discovery of the past-tense regularity rule, use and overgeneralisation of the rule, and finally learning which verbs are regular and which are irregular.
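The rule-based account of the U-shaped curve can be sketched as a lookup of memorised forms with an '-ed' rule as fallback. The three stages and their contents are hypothetical; in particular, the middle stage simply assumes that memorised irregular forms are not yet reliably retrieved once the rule is discovered, which is one way (not the only one) to produce overgeneralisation.

```python
def past_tense(verb, memorised, knows_rule):
    """Rule-based sketch: use a memorised form if retrieved, else apply the '-ed' rule if known."""
    if verb in memorised:
        return memorised[verb]
    if knows_rule:
        return verb + "ed"
    return None  # no past-tense form available yet

# Hypothetical developmental stages: (memorised forms retrieved, rule discovered?)
STAGES = {
    "early (rote memory)": ({"go": "went", "walk": "walked"}, False),
    "middle (rule overgeneralised)": ({}, True),
    "late (rule + exceptions)": ({"go": "went"}, True),
}

for stage, (memory, rule) in STAGES.items():
    print(stage, [past_tense(v, memory, rule) for v in ("go", "walk", "jump")])
```

Tracking 'go' across the stages gives 'went', 'goed', 'went': the good, worse, good-again pattern.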

Connectionism: Issues & Controversies • The rule-based explanation runs into difficulty because some irregular verbs are unique (is – was, go – went), while others group in terms of phonological similarity (sing, ring – sang, rang; catch, teach – caught, taught), and this grouping is generalised to novel words (pling – plang, by analogy to ring – rang). • Rumelhart & McClelland (1986) produced a connectionist learning simulation that showed the same U-shaped performance over time as children, so this performance need not arise from explicit rules. • Pinker & Prince (1988) disagree, arguing that the qualitative difference between irregular and regular verbs means that the latter are still rule-based.
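By contrast, generalisation by similarity can be sketched without a rule for the irregular family: a novel verb borrows the change of a stored verb it rhymes with, and falls back to '-ed' otherwise. The letter-based `rhyme` function is a crude, hypothetical stand-in for phonological similarity, and this is not the Rumelhart & McClelland (1986) network itself.

```python
IRREGULARS = {"sing": "sang", "ring": "rang"}  # irregular family from the slide

def rhyme(word, n=3):
    """Crude stand-in for phonological similarity: the word's last n letters."""
    return word[-n:]

def past_by_analogy(verb, n=3):
    """If the verb rhymes with a stored irregular, copy that verb's change; otherwise add -ed."""
    for present, past in IRREGULARS.items():
        if rhyme(present, n) == rhyme(verb, n):
            return verb[:-n] + rhyme(past, n)   # e.g. 'pl' + 'ang'
    return verb + "ed"

print(past_by_analogy("pling"))   # plang
print(past_by_analogy("blick"))   # blicked
```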