A Hybrid Optimization Approach for Parameter Estimation Using SPSA and NKIP


This paper presents a hybrid optimization approach that couples the Simultaneous Perturbation Stochastic Approximation (SPSA) method with a globalized Newton-Krylov Interior Point (NKIP) algorithm for parameter estimation. SPSA performs a global search of the parameter space and supplies the samples used to build a surrogate model; NKIP then performs the local search on that surrogate. Preliminary numerical results on a simple test case are presented, and further research and experimentation are needed to demonstrate the method's effectiveness on large-scale estimation problems.


Presentation Transcript

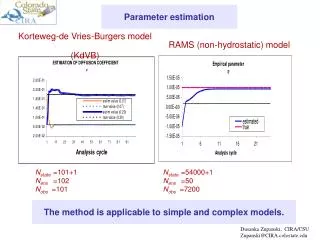

A Hybrid Optimization Approach for Automated Parameter Estimation Problems

Carlos A. Quintero¹, Miguel Argáez¹, Hector Klie², Leticia Velázquez¹ and Mary Wheeler²
¹ Department of Mathematical Sciences, The University of Texas at El Paso
² ICES-The Center for Subsurface Modeling, The University of Texas at Austin

Abstract
• We present a hybrid optimization approach for solving automated parameter estimation problems that is based on the coupling of the Simultaneous Perturbation Stochastic Approximation (SPSA) [Ref. 1] and a globalized Newton-Krylov Interior Point algorithm (NKIP) presented by Argáez et al. [Refs. 2, 3]. The procedure generates a surrogate model that allows first-order information to be used efficiently, and applies the NKIP algorithm to find an optimal solution. We implement the hybrid optimization algorithm on a simple test case and present some preliminary numerical results.

[Flowchart labels: Multi-Start; Global Search via SPSA; Filtering Data; Surrogate Model; Local Search via NKIP; Optimal solution found for original model? (Yes: Stop)]

Local Search: NKIP
• We consider the optimization problem in the form: [equation not preserved in this transcript], where a and b are determined by the sampled points given by SPSA.
• NKIP is a globalized, derivative-dependent optimization method. It computes the search directions using the conjugate gradient algorithm, and a line search is implemented to guarantee a sufficient decrease of the objective function, as described in Argáez and Tapia [Ref. 3]. The algorithm has been developed for obtaining an optimal solution for large-scale and degenerate problems.

Test Case
• We test the algorithm on Rastrigin's problem [Ref. 6]: [equation not preserved in this transcript]
• The NKIP algorithm applied to the surrogate model finds an optimal solution at x* = (1.94, 0.01), with fs(x*) = 6.26.
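To make the test problem concrete, here is a minimal Python sketch of the Rastrigin function in its commonly used form, f(x) = 10n + Σ (x_i² − 10 cos(2π x_i)). The poster's own formula did not survive in this transcript, so this form is an assumption; it is consistent with the global solution x* = (0, 0), f(x*) = 0 stated on the poster. The function names are illustrative.

```python
import math


def rastrigin(x):
    """Rastrigin function in its commonly used form (assumed, not taken from the poster).

    f(x) = 10*n + sum(x_i**2 - 10*cos(2*pi*x_i)), with global minimum f(0, ..., 0) = 0.
    """
    n = len(x)
    return 10.0 * n + sum(xi * xi - 10.0 * math.cos(2.0 * math.pi * xi) for xi in x)


def rastrigin_grad(x):
    """Analytic gradient: df/dx_i = 2*x_i + 20*pi*sin(2*pi*x_i)."""
    return [2.0 * xi + 20.0 * math.pi * math.sin(2.0 * math.pi * xi) for xi in x]


if __name__ == "__main__":
    # Evaluate at the global solution and at a point close to one of the local minima.
    for point in ([0.0, 0.0], [2.0, 0.0]):
        print(point, rastrigin(point), rastrigin_grad(point))
```

The function has local minima near the integer lattice points, which is why a purely local, derivative-based method can stall far from the global solution and a global sampling stage is useful.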
Problem Formulation
• We consider the global optimization problem in the form: [equation (1) not preserved in this transcript], where the global solution x* is such that [condition not preserved in this transcript].
• We are interested in problems of the form (1) that have many local minima.

Hybrid Optimization Scheme
• The scheme is to use SPSA as the sampling device to perform a global search of the parameter space, and then switch to NKIP to perform the local search via a surrogate model.

[Diagram labels: Explore parameter space; Sampling; f(x); Interpolate; Response surface; fs(x); Sensitivity analysis; Improved solution; No]

Global Search: SPSA
• SPSA is a global, derivative-free optimization method that uses only objective function measurements. This contrasts with most algorithms, which require the gradient of the objective function, often difficult or impossible to obtain. Further, SPSA is especially efficient in high-dimensional problems in terms of providing a good approximate solution for a relatively small number of measurements of the objective function [Ref. 5].
• The parameter estimation is first carried out by means of the SPSA algorithm. This process may be performed by starting with different initial guesses (multistart). This increases the chances of finding a global solution and yields a broad sampling of the parameter space.

Surrogate Model
• We find the surrogate model fs(x) using an interpolation method with the data provided by SPSA.
• This can be performed in different ways, e.g., radial basis functions, kriging, regression analysis, or artificial neural networks.
• In our test case, we optimize the surrogate function [equation not preserved in this transcript], where the multiquadric basis functions are given by [equation not preserved in this transcript].
• The interpolation algorithm [Ref. 4] characterizes the uncertainty parameters.
• We plot the original model function f(x) and the surrogate function fs(x):

[Figures: 3-D view (n = 2) and front view of f(x) and fs(x), showing the sampling data points from SPSA and the global solution x* = (0, 0), f(x*) = 0]

Future Work
Further research and numerical experimentation are needed to demonstrate the effectiveness of the proposed hybrid optimization scheme, especially for solving large application problems of interest to the Department of Defense.
• Add a multi-start on SPSA.
• Filter data from SPSA.
• If x* is such that f(x*) does not satisfy an upper bound given by [expression not preserved in this transcript], then we use x* as an initial point for SPSA.

References
• [1] J. C. Spall. Introduction to Stochastic Search and Optimization: Estimation, Simulation and Control. John Wiley & Sons, Inc., New Jersey, 2003.
• [2] M. Argáez, R. Sáenz, and L. Velázquez. A trust-region interior-point algorithm for nonnegative constrained minimization. Technical report, Department of Mathematics, The University of Texas at El Paso, 2004.
• [3] M. Argáez and R. A. Tapia. On the global convergence of a modified augmented Lagrangian linesearch interior-point Newton method for nonlinear programming. J. Optim. Theory Appl., 114:1-25, 2002.
• [4] M. J. L. Orr. Matlab Functions for Radial Basis Function Networks, 1999.
• [5] H. Klie, W. Bangerth, M. F. Wheeler, M. Parashar, and V. Matossian. Parallel well location optimization using stochastic algorithms on the grid computational framework. 9th European Conf. on the Mathematics of Oil Recovery (ECMOR), August 2004.
• [6] A. J. Keane and P. B. Nair. Computational Approaches for Aerospace Design: The Pursuit of Excellence. Wiley, England, 2005.

Acknowledgments
The authors were partially supported by DOD PET project EQM KY06-03. The authors thank IMA for the travel support to attend the Blackwell-Tapia Conference, November 3-4, 2006.

Contact Information
Carlos A. Quintero, Graduate Student
The University of Texas at El Paso
Department of Mathematical Sciences
500 W. University Avenue
El Paso, Texas 79968-0514
Email: caquintero@utep.edu
Phone: (915) 747-6858
Fax: (915) 747-6502
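The global search stage (SPSA with multistart, used as a sampling device) can be sketched in Python as follows. This is a minimal illustration rather than the authors' implementation: the gain sequences follow the standard form a_k = a/(k+1+A)^α and c_k = c/(k+1)^γ described by Spall [Ref. 1], while the numeric values of a, c, A, the iteration count, the starting points, and all function names are assumptions chosen for the example. Every objective evaluation is recorded so that the samples could later feed a surrogate model.

```python
import math
import random


def rastrigin(x):
    """Commonly used Rastrigin form (assumed; the poster's formula was not preserved)."""
    return 10.0 * len(x) + sum(xi * xi - 10.0 * math.cos(2.0 * math.pi * xi) for xi in x)


def spsa(f, x0, n_iter=200, a=0.05, c=0.1, A=20.0, alpha=0.602, gamma=0.101, rng=None):
    """Run SPSA from x0 and return (final iterate, list of (point, value) samples).

    Only two objective evaluations are needed per iteration, whatever the dimension.
    """
    rng = rng or random.Random(0)
    x = list(x0)
    samples = []
    for k in range(n_iter):
        a_k = a / (k + 1 + A) ** alpha  # step-size gain
        c_k = c / (k + 1) ** gamma      # perturbation gain
        # Simultaneous perturbation: each component is +1 or -1 with equal probability.
        delta = [rng.choice((-1.0, 1.0)) for _ in x]
        x_plus = [xi + c_k * di for xi, di in zip(x, delta)]
        x_minus = [xi - c_k * di for xi, di in zip(x, delta)]
        f_plus, f_minus = f(x_plus), f(x_minus)
        samples.append((x_plus, f_plus))
        samples.append((x_minus, f_minus))
        # Gradient estimate: component i is (f_plus - f_minus) / (2 * c_k * delta_i).
        diff = (f_plus - f_minus) / (2.0 * c_k)
        x = [xi - a_k * diff / di for xi, di in zip(x, delta)]
    return x, samples


def multistart_spsa(f, starts, **kwargs):
    """Run SPSA from several initial guesses; pool all samples and keep the best iterate."""
    all_samples = []
    best_x, best_f = None, float("inf")
    for i, x0 in enumerate(starts):
        x, samples = spsa(f, x0, rng=random.Random(i), **kwargs)
        all_samples.extend(samples)
        fx = f(x)
        if fx < best_f:
            best_x, best_f = x, fx
    return best_x, best_f, all_samples


if __name__ == "__main__":
    starts = [[4.0, -3.0], [-2.5, 2.5], [1.0, 4.5]]  # illustrative initial guesses
    best_x, best_f, data = multistart_spsa(rastrigin, starts, n_iter=200)
    print("best iterate:", best_x, "value:", best_f, "samples:", len(data))
```

In the hybrid scheme, the pooled samples would next be filtered and interpolated (for example with multiquadric radial basis functions) to build fs(x), and the best iterate would serve as a starting point for the local search.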