Edge Detection & Image Segmentation



Edge Detection & Image Segmentation
Dr. Md. Altab Hossain, Associate Professor, Dept. of Computer Science & Engineering, RU

Elements of Image Analysis
• Preprocessing: image acquisition, restoration, and enhancement
• Intermediate processing: image segmentation and feature extraction
• High-level processing: image interpretation and recognition

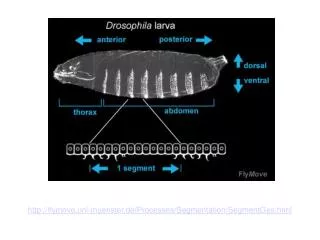

Image Attributes for Image Segmentation
1. Similarity: properties of pixels inside the object are used to group pixels into the same set.
2. Discontinuity: the change in pixel properties at the boundary between object and background is used to distinguish pixels belonging to the object from those of the background.
• Discontinuity: intensity change at a boundary (point, line, edge)
• Similarity: internal pixels share the same intensity

Point Detection
• We can use Laplacian masks for point detection:

$$
\begin{bmatrix} 0 & -1 & 0 \\ -1 & 4 & -1 \\ 0 & -1 & 0 \end{bmatrix}
\qquad
\begin{bmatrix} -1 & -1 & -1 \\ -1 & 8 & -1 \\ -1 & -1 & -1 \end{bmatrix}
$$

• Laplacian masks have the largest coefficient at the center of the mask, while the neighboring pixels have the opposite sign.
• Such a mask gives a high response to objects whose shape is similar to the mask, such as isolated points.
• Notice that the sum of all coefficients of the mask is equal to zero. This is required so that the response of the filter is zero inside a constant-intensity area.

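Point Detection
• Point detection can be done by applying a thresholding function to the mask response $R(x, y)$, with a nonnegative threshold $T$:

$$
g(x, y) = \begin{cases} 1 & \text{if } |R(x, y)| \ge T \\ 0 & \text{otherwise} \end{cases}
$$

[Figure panels: X-ray image of a turbine blade with porosity (location of porosity marked); Laplacian image; result after thresholding.]
(Images from Rafael C. Gonzalez and Richard E. Woods, Digital Image Processing, 2nd Edition.)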
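The point-detection procedure can be sketched in a few lines of plain Python: apply the 8-neighbor Laplacian mask, then keep the pixels whose absolute response reaches a threshold. The helper names, the 5 x 5 test image, and the threshold value 200 are illustrative assumptions, not taken from the slides.

```python
# 8-neighbour Laplacian mask; its coefficients sum to zero.
LAPLACIAN_8 = [[-1, -1, -1],
               [-1,  8, -1],
               [-1, -1, -1]]


def correlate3x3(image, mask):
    """Apply a 3x3 mask to the interior pixels of a 2D list; border pixels stay 0."""
    rows, cols = len(image), len(image[0])
    out = [[0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            out[r][c] = sum(mask[i][j] * image[r + i - 1][c + j - 1]
                            for i in range(3) for j in range(3))
    return out


def detect_points(image, threshold):
    """Return the (row, col) positions whose absolute mask response is >= threshold."""
    response = correlate3x3(image, LAPLACIAN_8)
    return [(r, c)
            for r, row in enumerate(response)
            for c, value in enumerate(row)
            if abs(value) >= threshold]


# Hypothetical test image: constant background of 10 with one bright pixel.
image = [[10] * 5 for _ in range(5)]
image[2][2] = 50

points = detect_points(image, threshold=200)  # threshold chosen for this toy image
print(points)
```

On a constant-intensity image the response is zero everywhere, which is the practical consequence of the zero-sum mask.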

Line Detection
• Similar to point detection, line detection can be performed using a mask whose shape resembles part of a line.
• A line in a digital image can run in several directions.
• For simple line detection, the 4 most commonly used directions are horizontal, +45 degrees, vertical, and -45 degrees.
[Figure: line detection masks.]
(Images from Rafael C. Gonzalez and Richard E. Woods, Digital Image Processing, 2nd Edition.)
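As a sketch of how the four directional masks are used, the snippet below applies each mask to a 3 x 3 patch and reports the direction with the largest absolute response. The mask coefficients are the commonly published 3 x 3 line masks; the assignment of the +45 and -45 labels follows the usual textbook layout and should be treated as an assumption, as should the helper names and test patches.

```python
# Common 3x3 line-detection masks; each sums to zero.
LINE_MASKS = {
    "horizontal": [[-1, -1, -1], [2, 2, 2], [-1, -1, -1]],
    "+45":        [[-1, -1, 2], [-1, 2, -1], [2, -1, -1]],
    "vertical":   [[-1, 2, -1], [-1, 2, -1], [-1, 2, -1]],
    "-45":        [[2, -1, -1], [-1, 2, -1], [-1, -1, 2]],
}


def mask_response(patch, mask):
    """Sum of element-wise products of a 3x3 patch and a 3x3 mask."""
    return sum(mask[i][j] * patch[i][j] for i in range(3) for j in range(3))


def strongest_direction(patch):
    """Name of the mask with the largest absolute response on this patch."""
    responses = {name: abs(mask_response(patch, mask))
                 for name, mask in LINE_MASKS.items()}
    return max(responses, key=responses.get)


# Hypothetical one-pixel-wide lines on a zero background.
vertical_line = [[0, 1, 0], [0, 1, 0], [0, 1, 0]]
diagonal_line = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]

print(strongest_direction(vertical_line))
print(strongest_direction(diagonal_line))
```

In practice the chosen mask is run over the whole image and the absolute response is thresholded, as in point detection.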

Line Detection Example
[Figure panels: binary wire bond mask image; absolute value of the result after processing with the -45 degree line detector; result after thresholding.]
• Notice that the detector responds most strongly to the -45 degree lines.
(Images from Rafael C. Gonzalez and Richard E. Woods, Digital Image Processing, 2nd Edition.)

Definition of Edges
• Edges are significant local changes of intensity in an image.
• Edges typically occur on the boundary between two different regions in an image.

What Causes Intensity Changes?
• Geometric events
  • surface orientation (boundary) discontinuities
  • depth discontinuities
  • color and texture discontinuities
• Non-geometric events
  • illumination changes
  • specularities
  • shadows
  • inter-reflections
[Figure labels: surface normal discontinuity; depth discontinuity; color discontinuity; illumination discontinuity.]

Why is Edge Detection Useful?
• Important features can be extracted from the edges of an image (e.g., corners, lines, curves).
• These features are used by higher-level computer vision algorithms (e.g., recognition).

Edge Descriptors
• Edge normal: unit vector in the direction of maximum intensity change.
• Edge direction: unit vector perpendicular to the edge normal.
• Edge position or center: the image position at which the edge is located.
• Edge strength: related to the local image contrast along the normal.

Modeling Intensity Changes
• Step edge: the image intensity abruptly changes from one value on one side of the discontinuity to a different value on the opposite side.
• Ramp edge: a step edge where the intensity change is not instantaneous but occurs over a finite distance.

Modeling Intensity Changes (cont'd)
• Ridge edge: the image intensity abruptly changes value but then returns to the starting value within some short distance (usually generated by lines).
• Roof edge: a ridge edge where the intensity change is not instantaneous but occurs over a finite distance (usually generated by the intersection of two surfaces).

Main Steps in Edge Detection
(1) Smoothing: suppress as much noise as possible without destroying true edges.
(2) Enhancement: apply differentiation to enhance the quality of edges (i.e., sharpening).
(3) Thresholding: determine which edge pixels should be discarded as noise and which should be retained (i.e., threshold the edge magnitude).
(4) Localization: determine the exact edge location. Sub-pixel resolution might be required for some applications, to estimate the location of an edge to better than the spacing between pixels.
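The four steps can be illustrated on a 1D intensity profile. This is a minimal sketch under stated assumptions, not the method prescribed by the slides: smoothing is a 3-tap moving average, enhancement is the absolute central difference, the threshold `t=3.0` is picked by hand for this profile, and sub-pixel localization fits a parabola through the strongest response and its two neighbors. All function names are hypothetical.

```python
def smooth(signal):
    """(1) Smoothing: 3-tap moving average; the two endpoints are copied unchanged."""
    out = [float(v) for v in signal]
    for i in range(1, len(signal) - 1):
        out[i] = (signal[i - 1] + signal[i] + signal[i + 1]) / 3.0
    return out


def enhance(signal):
    """(2) Enhancement: absolute central difference; zero at the endpoints."""
    strength = [0.0] * len(signal)
    for i in range(1, len(signal) - 1):
        strength[i] = abs(signal[i + 1] - signal[i - 1]) / 2.0
    return strength


def threshold(strength, t):
    """(3) Thresholding: indices whose edge strength is at least t."""
    return [i for i, s in enumerate(strength) if s >= t]


def localize(candidates, strength):
    """(4) Localization: strongest candidate, refined by a parabola fit (sub-pixel)."""
    i = max(candidates, key=lambda k: strength[k])
    if i == 0 or i == len(strength) - 1:
        return float(i)
    left, mid, right = strength[i - 1], strength[i], strength[i + 1]
    denom = left - 2.0 * mid + right
    if denom == 0:
        return float(i)
    return i + 0.5 * (left - right) / denom


# Hypothetical step edge located halfway between pixels 3 and 4.
profile = [0, 0, 0, 0, 10, 10, 10, 10]
strength = enhance(smooth(profile))
candidates = threshold(strength, t=3.0)
edge_position = localize(candidates, strength)
print(candidates, edge_position)
```

For this profile two neighboring pixels pass the threshold, and the parabola fit places the edge between them, which is the kind of estimate the localization step refers to.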

Edge Detection Using Derivatives
• Often, points that lie on an edge are detected by:
(1) Detecting the local maxima or minima of the first derivative.
(2) Detecting the zero-crossings of the second derivative.
[Figure labels: 1st derivative; 2nd derivative.]
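Both detection rules can be tried on a small 1D profile. The sketch below assumes unit pixel spacing and simple finite differences; the profile values and function names are made up for illustration.

```python
def first_difference(f):
    """d1[k] = f[k+1] - f[k], the slope between pixels k and k+1."""
    return [f[k + 1] - f[k] for k in range(len(f) - 1)]


def second_difference(f):
    """d2[k] = f[k+2] - 2*f[k+1] + f[k], the second difference at pixel k+1."""
    return [f[k + 2] - 2 * f[k + 1] + f[k] for k in range(len(f) - 2)]


def zero_crossings(f):
    """Pairs of adjacent pixels between which the second difference changes sign."""
    d2 = second_difference(f)  # d2[k] belongs to pixel k + 1
    return [(k + 1, k + 2) for k in range(len(d2) - 1) if d2[k] * d2[k + 1] < 0]


# Hypothetical smoothed (upward) step edge.
profile = [0, 0, 1, 3, 7, 9, 10, 10]

d1 = first_difference(profile)
steepest = d1.index(max(d1))          # rule (1): maximum of the first derivative
crossings = zero_crossings(profile)   # rule (2): zero-crossing of the second derivative
print(d1, steepest, crossings)
```

Here the first difference peaks between pixels 3 and 4, and the second difference changes sign between the same two pixels without ever being exactly zero, so both rules point to the same location.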

Smoothed Step Edge and Its Derivatives
[Figure: three plots for a smoothed step with two edges. Gray-level profile (intensity); the 1st derivative, with a minimum point and a maximum point marked; the 2nd derivative, with positive (+) and negative (-) lobes and the zero crossings marked at the edges.]

Image Derivatives
• How can we differentiate a digital image?
• Option 1: reconstruct a continuous image, f(x,y), then compute the derivative.
• Option 2: take the discrete derivative (i.e., finite differences). Consider this case first!

Edge Detection Using First Derivative
• 1D functions: the derivative is approximated by a finite difference, either not centered at x or centered at x.
[Figure: response to an (upward) step edge.]
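As a sketch, assuming unit pixel spacing and the standard finite-difference forms (the 1/2 scale factor on the centered form is an assumption and is often dropped in mask notation), the two approximations can be written as:

```latex
\begin{align*}
f'(x) &\approx f(x+1) - f(x)
  && \text{(not centered at } x\text{; mask } [-1 \;\; 1]) \\
f'(x) &\approx \frac{f(x+1) - f(x-1)}{2}
  && \text{(centered at } x\text{; mask } \tfrac{1}{2}\,[-1 \;\; 0 \;\; 1])
\end{align*}
```

For an upward step edge such as 0, 0, 1, 1, the first form gives a single response of 1 at the step, while the centered form gives two responses of 1/2 on either side of it.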

Edge Detection Using Second Derivative
• Approximate finding the maxima/minima of the gradient magnitude by finding places where the second derivative is zero.
• We can't always find discrete pixels where the second derivative is exactly zero; look for zero-crossings instead.

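Edge Detection Using Second Derivative (cont'd)
• 1D functions: differencing the first-derivative approximation gives a second-derivative approximation that is centered at x+1.
• Replacing x+1 with x gives the approximation centered at x.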
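A short derivation of this step, assuming unit pixel spacing and the forward difference $f'(x) \approx f(x+1) - f(x)$ as the first-derivative approximation:

```latex
\begin{align*}
f''(x) &\approx f'(x+1) - f'(x) \\
       &= \bigl[f(x+2) - f(x+1)\bigr] - \bigl[f(x+1) - f(x)\bigr] \\
       &= f(x+2) - 2f(x+1) + f(x)
       && \text{(centered at } x+1\text{)} \\[4pt]
f''(x) &\approx f(x+1) - 2f(x) + f(x-1)
       && \text{(replace } x+1 \text{ with } x\text{; mask } [1 \;\; {-2} \;\; 1])
\end{align*}
```

The mask coefficients sum to zero, so the response vanishes in regions of constant intensity and also on a linear ramp.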

Noise Effect on Image Edges
• First column: images and gray-level profiles of a ramp edge corrupted by random Gaussian noise of mean 0 and σ = 0.0, 0.1, 1.0 and 10.0, respectively.
• Second column: first-derivative images and gray-level profiles.
• Third column: second-derivative images and gray-level profiles.
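The sensitivity of derivatives to noise can be checked numerically. The sketch below builds a hypothetical noisy ramp edge and measures the spread of the signal, its first difference, and its second difference over the flat part of the profile. For independent noise of standard deviation σ, the first difference has standard deviation √2·σ and the second difference √6·σ, so each differentiation amplifies the noise. The profile lengths, the seed, and the function names are assumptions made for this example.

```python
import random
import statistics


def first_difference(f):
    return [f[i + 1] - f[i] for i in range(len(f) - 1)]


def second_difference(f):
    return [f[i + 1] - 2 * f[i] + f[i - 1] for i in range(1, len(f) - 1)]


def noisy_ramp_edge(sigma, n_flat=2000, n_ramp=20, seed=0):
    """Flat region, linear ramp from 0 to 1, flat region, plus zero-mean Gaussian noise."""
    rng = random.Random(seed)
    clean = [0.0] * n_flat + [k / n_ramp for k in range(n_ramp)] + [1.0] * n_flat
    return [v + rng.gauss(0.0, sigma) for v in clean]


def flat_region_spread(sigma, n_flat=2000):
    """Std. dev. of the signal, 1st difference and 2nd difference over the flat region."""
    flat = noisy_ramp_edge(sigma, n_flat=n_flat)[:n_flat]
    return (statistics.pstdev(flat),
            statistics.pstdev(first_difference(flat)),
            statistics.pstdev(second_difference(flat)))


noise_std, d1_std, d2_std = flat_region_spread(sigma=0.1)
print(noise_std, d1_std, d2_std)
```

This is why smoothing is normally applied before differentiation: without it, the second derivative in particular is dominated by noise even at modest σ.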

Edge Detection Using First Derivative (Gradient)
• 2D functions: the first derivative of an image can be computed using the gradient, $\nabla f = \left[ \dfrac{\partial f}{\partial x}, \dfrac{\partial f}{\partial y} \right]^{T}$.

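Gradient Representation
• The gradient is a vector which has magnitude and direction.
• Magnitude: indicates edge strength. It is $\sqrt{\left(\frac{\partial f}{\partial x}\right)^{2} + \left(\frac{\partial f}{\partial y}\right)^{2}}$ or, as an approximation, $\left|\frac{\partial f}{\partial x}\right| + \left|\frac{\partial f}{\partial y}\right|$.
• Direction: $\theta = \tan^{-1}\!\left(\frac{\partial f / \partial y}{\partial f / \partial x}\right)$. The gradient points along the edge normal, i.e., perpendicular to the edge direction.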
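A minimal sketch of the magnitude and direction computations for a single pixel, given its two partial derivatives. The names `gx` and `gy` stand for the x and y partial derivatives and are assumptions for this example; `atan2` is used instead of a plain arctangent so that a zero x-derivative does not cause a division by zero.

```python
import math


def gradient_magnitude(gx, gy):
    """Exact magnitude: sqrt(gx^2 + gy^2)."""
    return math.hypot(gx, gy)


def gradient_magnitude_approx(gx, gy):
    """Cheaper approximation: |gx| + |gy|."""
    return abs(gx) + abs(gy)


def gradient_direction(gx, gy):
    """Angle of the gradient (edge normal) in radians, measured from the x axis."""
    return math.atan2(gy, gx)


gx, gy = 3.0, 4.0  # hypothetical partial derivatives at one pixel
print(gradient_magnitude(gx, gy))
print(gradient_magnitude_approx(gx, gy))
print(math.degrees(gradient_direction(gx, gy)))
```

The approximation avoids the square root and never underestimates the exact magnitude, which is usually acceptable when the result is only compared against a threshold.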

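Approximate Gradient
• Approximate the gradient using finite differences: $\dfrac{\partial f}{\partial x} \approx f(x+1, y) - f(x, y)$ and $\dfrac{\partial f}{\partial y} \approx f(x, y+1) - f(x, y)$.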

Approximate Gradient (cont'd)
• Cartesian vs. pixel coordinates: j corresponds to the x direction, and i corresponds to the -y direction.

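Approximating Gradient (cont'd)
• We can implement $\partial f / \partial x$ and $\partial f / \partial y$ using two-pixel difference masks.
• The first mask gives a good approximation of $\partial f / \partial x$ at (x+1/2, y); the second gives a good approximation of $\partial f / \partial y$ at (x, y+1/2).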

Approximating Gradient (cont'd)
• A different approximation of the gradient uses a 3 x 3 neighborhood.

Approximating Gradient (cont'd)
• In that case, $\partial f / \partial x$ and $\partial f / \partial y$ can each be implemented using a 3 x 3 mask.

Another Approximation
• Consider the arrangement of pixels in the 3 x 3 neighborhood about the pixel (i, j).
• The partial derivatives can be computed as weighted differences between opposite sides of this neighborhood.
• The constant c sets the emphasis given to pixels closer to the center of the mask.

Prewitt Operator
• Setting c = 1, we get the Prewitt operator.

Sobel Operator
• Setting c = 2, we get the Sobel operator.
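The role of the constant c can be made concrete with a small sketch that builds the pair of 3 x 3 masks from c, so that c = 1 yields the Prewitt masks and c = 2 yields the Sobel masks. The sign convention (right minus left for x, bottom minus top for y) is an assumption; published versions differ in orientation. The function names and the test patch are hypothetical.

```python
def gradient_masks(c):
    """Return (mask_x, mask_y); c weights the pixels nearest the center."""
    mask_x = [[-1, 0, 1],
              [-c, 0, c],
              [-1, 0, 1]]
    mask_y = [[-1, -c, -1],
              [0, 0, 0],
              [1, c, 1]]
    return mask_x, mask_y


def apply_mask(patch, mask):
    """Sum of element-wise products of a 3x3 patch and a 3x3 mask."""
    return sum(mask[i][j] * patch[i][j] for i in range(3) for j in range(3))


prewitt_x, prewitt_y = gradient_masks(1)
sobel_x, sobel_y = gradient_masks(2)

# Hypothetical patch containing a vertical step edge (dark left, bright right).
patch = [[0, 0, 10] for _ in range(3)]

print(apply_mask(patch, prewitt_x), apply_mask(patch, prewitt_y))
print(apply_mask(patch, sobel_x), apply_mask(patch, sobel_y))
```

Both operators respond only in the x direction for this patch; the Sobel response is larger because the center row carries twice the weight.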

The Canny Edge Detector • Canny – smoothing and derivatives:

The Canny Edge Detector
• Canny: gradient magnitude.
[Figure panels: image; gradient magnitude.]

Practical Issues
• Choice of threshold.
[Figure panels: gradient magnitude; low threshold; high threshold.]

Practical Issues (cont’d) • Edge thinning and linking.

Criteria for Optimal Edge Detection
• (1) Good detection
  • Minimize the probability of false positives (i.e., spurious edges).
  • Minimize the probability of false negatives (i.e., missing real edges).
• (2) Good localization
  • Detected edges must be as close as possible to the true edges.
• (3) Single response
  • Minimize the number of local maxima around the true edge.

Edge Contour Extraction
• Edge detectors typically produce short, disjoint edge segments.
• These segments are generally of little use until they are aggregated into extended edges.
• We assume that edge thinning has already been done (e.g., non-maxima suppression).
• Two main categories of methods:
  • Local methods: extend edges by seeking the most "compatible" candidate edge in a neighborhood.
  • Global methods: more computationally expensive; domain knowledge can be incorporated in their cost function.

Local Processing Methods
• Edge linking using neighboring pixels

Local Processing Methods
• Contour extraction using heuristic search
• A more comprehensive approach to contour extraction is based on graph searching.
• Graph representation of edge points:
  • (1) Edge points at positions p_i correspond to graph nodes.
  • (2) Nodes are connected to each other if local edge linking rules are satisfied.

Local Processing Methods
• Contour extraction using heuristic search (cont'd)
• The generation of a contour (if any) from pixel pA to pixel pB corresponds to the generation of a minimum-cost path in the directed graph.
• A cost function is defined for a path connecting nodes p1 = pA to pN = pB.
• Finding a minimum-cost path is not trivial in terms of computation.
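One way to sketch the minimum-cost path search is Dijkstra's algorithm, which is the special case of heuristic (A*-style) search with a zero heuristic. It is used here as a stand-in, not as the specific search procedure from the slides. The graph, the node names, and the step costs are hypothetical; in practice a step cost might be low where edge strength is high and the local linking rules are well satisfied.

```python
import heapq


def min_cost_path(graph, start, goal):
    """Dijkstra search. graph maps node -> list of (neighbor, step_cost).

    Returns (total_cost, path) or None if the goal cannot be reached.
    """
    queue = [(0, start, [start])]
    settled = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in settled:
            continue
        settled.add(node)
        for neighbor, step_cost in graph.get(node, []):
            if neighbor not in settled:
                heapq.heappush(queue, (cost + step_cost, neighbor, path + [neighbor]))
    return None


# Hypothetical directed graph of edge points with made-up step costs.
graph = {
    "pA": [("p1", 1), ("p2", 4)],
    "p1": [("p2", 1), ("pB", 5)],
    "p2": [("pB", 1)],
    "pB": [],
}

result = min_cost_path(graph, "pA", "pB")
print(result)
```

With non-negative step costs the first time the goal is popped from the queue its cost is minimal; a heuristic estimate of the remaining cost can be added to the queue priority to reduce the number of nodes expanded, which is the motivation for heuristic search on large edge graphs.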