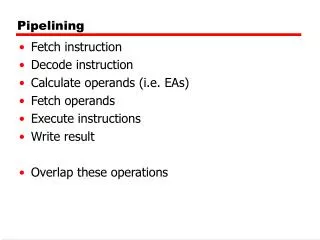

Pipelining

Presentation Transcript

Processor Data Path • A single-cycle processor makes poor use of its functional units:

Processor Data Path • ADD r1, r2, r3 running

Assembly Lines • Single cycle laundry:

Assembly Lines • Assembly line laundry:

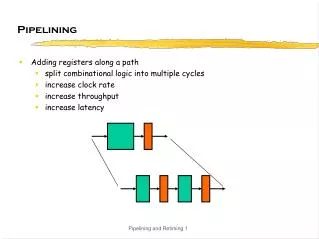

Segmented Data Path • Registers to hold values between stages

Pipelined • Each stage can work on a different instruction:

Pipeline vs Not • Pipeline: 4 instructions / 8 cycles • No pipeline: 2 instructions / 10 cycles

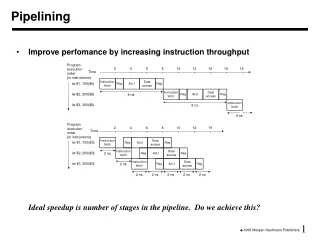

Throughput • n-stage pipeline: • n - 1 cycles to "prime" it • Then one instruction completes per cycle

Throughput • n-stage pipeline: • Time for i instructions in an n-stage pipeline: (n - 1) + i cycles • Time for i instructions without pipelining: n·i cycles • Max speedup as i → ∞: n·i / (n - 1 + i) → n
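
The throughput slides can be checked numerically. The following is a minimal sketch, assuming an ideal pipeline with no stalls, one cycle per stage, and at least one instruction; the function names are illustrative and not from the presentation.

```python
def pipelined_cycles(i, n):
    """Cycles to finish i >= 1 instructions on an ideal n-stage pipeline:
    n - 1 cycles to prime it, then one instruction completes per cycle."""
    return (n - 1) + i


def unpipelined_cycles(i, n):
    """Cycles when each instruction occupies all n stages before the next starts."""
    return n * i


def speedup(i, n):
    """Ratio of unpipelined to pipelined cycle counts."""
    return unpipelined_cycles(i, n) / pipelined_cycles(i, n)


# The speedup climbs toward n (here 5) as the instruction count grows.
for count in (1, 4, 100, 1_000_000):
    print(count, pipelined_cycles(count, 5), unpipelined_cycles(count, 5),
          round(speedup(count, 5), 3))
```

With n = 5 this reproduces the earlier comparison: 4 instructions take 8 pipelined cycles, while 2 instructions take 10 cycles without pipelining.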

Pipelining Limits • In theory: n times speedup for an n-stage pipeline • But: • Only if all stages are balanced • Only if the pipeline can be kept full

Weak Link & Latency • Total data path = 800ps

Weak Link & Latency • Pipelined: can't run faster than the slowest step

Weak Link & Latency • Pipelined: can't run faster than the slowest step • 5 x 200ps = 1000ps • Plus the delay of the memory between stages

Pipeline vs Not • Clock time: 800ps with no pipeline, 200ps with pipeline

Weak Link & Latency • First instruction • No pipeline: 800ps / 1 instruction • Pipeline: 1000ps / 1 instruction • "Speedup" on first instruction: 800ps / 1000ps = 0.8x (25% slower) • Increased latency

Weak Link & Latency • Full pipeline • No pipeline: 800ps / 1 instruction • Pipeline: 1000ps / 5 instructions = 200ps / instruction • Speedup with full pipeline = 800ps / 200ps = 4x • Increased throughput
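
The latency and throughput figures can be reproduced with a short calculation. This is a rough sketch that uses only the numbers on the slides (800ps total data path, 200ps slowest stage, 5 stages). It ignores the extra delay of the registers between stages, and the constant and function names are made up for illustration.

```python
TOTAL_PATH_PS = 800     # single-cycle data path delay
SLOWEST_STAGE_PS = 200  # pipelined clock period is set by the slowest stage
STAGES = 5


def unpipelined_time_ps(instructions):
    """Total time when each instruction takes one long 800ps cycle."""
    return instructions * TOTAL_PATH_PS


def pipelined_time_ps(instructions):
    """Total time for instructions >= 1: fill the pipeline, then one result per 200ps cycle."""
    return (STAGES - 1 + instructions) * SLOWEST_STAGE_PS


def speedup(instructions):
    return unpipelined_time_ps(instructions) / pipelined_time_ps(instructions)


print(pipelined_time_ps(1))   # latency of the first instruction in ps
print(speedup(1))             # below 1: the first instruction is slower
print(speedup(1_000_000))     # approaches 800 / 200 for long runs
```

The speedup tops out at 4x rather than 5x because the stages are not balanced: five 200ps cycles add up to more than the 800ps the work needs.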

Designed for Pipelining • Consistent instruction length • Simple decode logic • No feeding data from memory to ALU

Hazards • Hazard: situation preventing the next instruction from continuing in the pipeline • Structural: resource (shared hardware) conflict • Data: needed data not ready • Control: correct action depends on an earlier instruction

Structural Hazards • What if there is only one memory? • IF and MEM access the same unit

Structural Hazards • Conflict between MEM and IF

Dealing with Conflict • Bubble: unused pipeline stage • Pipeline contents shown: MOV, Bubble, LDR, SUB, ADD

Dealing with Conflict • Bubbles to handle shared memory
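
A small scheduling sketch shows where those bubbles come from. It assumes a 5-stage pipeline (IF, ID, EX, MEM, WB), a single memory port shared by IF and MEM, and that a fetch simply waits whenever an earlier instruction is using memory in its MEM stage. The function name and the sample program are hypothetical.

```python
def fetch_cycles(uses_mem):
    """Return the cycle in which each instruction is fetched.

    uses_mem[k] is True when instruction k accesses data memory in its
    MEM stage (for example LDR or STR).
    """
    busy = set()   # cycles in which the single memory serves a MEM stage
    cycles = []
    cycle = 1
    for mem in uses_mem:
        while cycle in busy:
            cycle += 1            # bubble: the fetch waits one cycle
        cycles.append(cycle)
        if mem:
            busy.add(cycle + 3)   # IF = c, ID = c + 1, EX = c + 2, MEM = c + 3
        cycle += 1
    return cycles


# Hypothetical program: LDR followed by four register-only instructions.
print(fetch_cycles([True, False, False, False, False]))  # [1, 2, 3, 5, 6]
```

The fourth instruction would normally be fetched in cycle 4, but the LDR is using memory then, so its fetch slips to cycle 5 and one bubble enters the pipeline.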

Avoiding Structural Hazards • Separate Inst/Data cache • Can’t send memory data to ALU

Data Hazards • Sequence of instructions to be executed:

Data Hazards • RAW: Read After Write • A later instruction depends on the result of an earlier one • ADD writes r1 at time 5 • SUB wants r1 at time 3

Dealing with Data Hazards • Option 1: NOP = no-op = bubble • Assuming the new value of r1 can be read in the same cycle it is written: 2 cycles of bubble (otherwise 3)
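
The bubble count follows from the timing on the previous slide. Below is a minimal sketch, assuming a 5-stage pipeline without forwarding in which the producer is fetched in cycle 1, writes its register in cycle 5 (WB), and the consumer reads registers in its second stage (ID). The function and parameter names are illustrative.

```python
def raw_bubbles(distance, same_cycle_read=True):
    """Bubbles needed before a consumer issued `distance` instructions
    after the producer (distance = 1 means immediately after)."""
    write_cycle = 5                       # producer's WB stage
    natural_read = (1 + distance) + 1     # consumer's ID stage with no stall
    earliest_read = write_cycle if same_cycle_read else write_cycle + 1
    return max(0, earliest_read - natural_read)


print(raw_bubbles(1))                         # 2
print(raw_bubbles(1, same_cycle_read=False))  # 3
print(raw_bubbles(3))                         # 0
```

For SUB directly after ADD the consumer would read r1 at time 3, so it must wait 2 cycles, or 3 if a register cannot be read in the cycle it is written.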

Dealing with Data Hazards • Option 2: Clever compiler/programmer reorders instructions: • One bubble eliminated by moving LDR before SUB

Reorder = New Problems • While reordering, need to maintain critical ordering: • RAW (Read after Write): ADD r1, r3, r4 then ADD r2, r1, r0 • WAR (Write after Read): ADD r2, r1, r0 then ADD r1, r3, r4 • WAW (Write after Write): ADD r1, r4, r0 then ADD r1, r3, r4
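
A reordering tool has to detect these three orderings before swapping two instructions. The sketch below classifies the dependencies between an earlier and a later instruction, assuming each instruction has one destination register and a tuple of source registers. The `Instr` type and `dependencies` function are hypothetical, not part of any real compiler.

```python
from typing import NamedTuple, Tuple


class Instr(NamedTuple):
    dest: str               # register written
    srcs: Tuple[str, ...]   # registers read


def dependencies(first, second):
    """Orderings that must be preserved between `first` and a later `second`."""
    found = set()
    if first.dest in second.srcs:
        found.add("RAW")    # second reads what first writes
    if second.dest in first.srcs:
        found.add("WAR")    # second overwrites what first still has to read
    if first.dest == second.dest:
        found.add("WAW")    # both write the same register
    return found


# The three instruction pairs from the slide.
print(dependencies(Instr("r1", ("r3", "r4")), Instr("r2", ("r1", "r0"))))  # {'RAW'}
print(dependencies(Instr("r2", ("r1", "r0")), Instr("r1", ("r3", "r4"))))  # {'WAR'}
print(dependencies(Instr("r1", ("r4", "r0")), Instr("r1", ("r3", "r4"))))  # {'WAW'}
```

Two instructions are safe to swap only when this set is empty.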

Dealing with Data Hazards • Option 3 : Forwarding • Shortcut to send results back to earlier stages

Dealing with Data Hazards • r1’s value forwarded to ALU

Dealing with Data Hazards • Forwarding may not eliminate all bubbles

Dealing with Data Hazards • Forwarding requires complex hardware • Potentially slows down the pipeline
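
The case where forwarding still leaves a bubble can be sketched with the same cycle counting. This assumes a classic 5-stage pipeline in which an ALU result exists at the end of EX, a loaded value exists only at the end of MEM, and forwarded values go to the consumer's EX stage. These timing assumptions and the function name are not taken from the slides.

```python
def bubbles_with_forwarding(producer_is_load, distance=1):
    """Bubbles still needed when results are forwarded to the ALU input.

    The producer is fetched in cycle 1; the consumer is issued `distance`
    instructions later.
    """
    produced_in = 4 if producer_is_load else 3   # MEM for loads, EX for ALU ops
    natural_ex = (1 + distance) + 2              # consumer's EX stage with no stall
    return max(0, produced_in + 1 - natural_ex)


print(bubbles_with_forwarding(producer_is_load=False))             # 0
print(bubbles_with_forwarding(producer_is_load=True))              # 1
print(bubbles_with_forwarding(producer_is_load=True, distance=2))  # 0
```

Under these assumptions an ALU result reaches the next instruction with no stall, while an instruction that uses a value loaded by the instruction just before it still waits one cycle.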

Pipeline History • Pipelines: