Data Mining

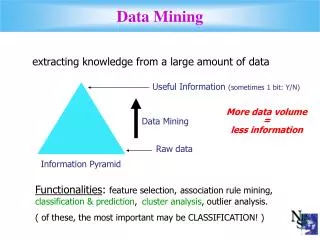

This document delves into the application of data mining in optimizing cow breeding decisions based on multiple factors such as weight, age, health, behavior, milk production, and nutritional habits. With a focus on extracting valuable information from large datasets—potentially containing 100,000 cows—it explores both classification rules and association rules in data mining. The constant increase in data volume presents challenges in understanding and making informed decisions, necessitating advanced machine learning techniques to identify structural patterns that lead to effective breeding strategies.

Data Mining

E N D

Presentation Transcript

Jim Jim’s cows Which cows should I breed??

Which cows should I breed?? • Suppose I know the weight, age and health of each cow? • And suppose I know their behavior, preferred mating months, milk production, nutritional habits, immune system data…? • Suppose I have 50 cows. • Now suppose I have 100,000 cows…

“understanding” data • Trying to find patterns in data is not new: hunters seek patterns in animal migration, politicians in voting habits, people in their partner’s behavior, etc. • However, the amount of available data is increasing very fast (exponentially?). • This gives greater opportunities to extract valuable information from the data. • But it also makes the task of “understanding” the data with conventional tools very difficult.

Data Mining • Data Mining: The process of discovering patterns in data, usually stored in a Database. The patterns lead to advantages (economic or other). • Two extremes for the expression of the patterns: • “Black Box”:“Breed cow Zehava, Petra and Paulina” • “Transparent Box” (Structural Patterns):“Breed cows with age<4 and weight >300 or cows with calm behavior and >90 liters of milk production per month” • Data Mining is about techniques for finding and describing Structural Patterns in data. • The techniques (algorithms) are usually from the field of Machine Learning.

The weather example cont. • A set of rules learned from this data could be presented in a Decision List: • If outlook=sunny and humidity=high then play=no • ElseIf outlook=rainy and windy=true then play=no • ElseIf outlook=overcast then play=yes • ElseIf humidity=normal then play=yes • Else play=yes • This is an example of Classification Rules • We could also look for Association Rules: • If temperature=cool then humidity=normal • If windy=false and play=no then outlook=sunny and humidity=high

Example Cont. • The previous example is very simplified. Real Databases will probably: • Contain Numerical values as well. • Contain “Noise” and errors. • Be a lot larger. • And the analysis we are asked to perform might not be of Association Rules, but rather Decision Trees, Neural Networks, etc.

Another Example • A classic example is a Database which holds data concerning all purchases in a supermarket. • Each Shopping Basket is a list of items that were bought in a single purchase by some customer. • Such huge DB’s which are saved for long periods of time are called Data Warehouses. • It is extremely valuable for the manager of the store to extract Association Rules from the huge Data Warehouse. • It is even more valuable if this information can be associated with the person buying, hence the Club Memberships…

Supermarket Example • For example, if Beer and Diapers are found to be bought together often, this might encourage the manager to give a discount for purchasing Beer, Diapers and a new product together. • Another example: if older people are found to be more “loyal” to a certain brand than young people, a manager might not promote a new brand of shampoo, intended for older people.

The Purchases Relation Itemset: A set of items Support of an itemset: the fraction of transactions that contain all items in the itemset. • What is the support of: • {pen}? • {pen, ink}? • {pen, juice}?

Frequent Itemsets • We would like to find items that are purchased together in high frequency- Frequent Itemsets. • We look for itemsets which have a support > minSupport. • If minSupport is set to 0.7, then the frequent itemsets in our example would be: {pen}, {ink}, {milk}, {pen, ink}, {pen, milk} • The A-Priori property of frequent itemsets: Every subset of a frequent itemset is also a frequent itemset.

Algorithm for finding Frequent itemsets • The idea (based on the A-prori property): first identify frequent itemsets of size 1, then try to expand them. • By considering only itemsets obtained by enlarging frequent itemsets, we greatly reduce the number of candidate frequent itemsets. • A single scan of the table is enough to determine which candidate itemsets which were generated, are frequent. • The algorithm terminates when no new frequent itemsets are found in an iteration.

Algorithm for finding Frequent itemsets foreach item, check if it is a frequent itemset; (appears in >minSupport of the transactions) k=1; repeat foreach new frequent itemset Ik with k items: Generate all itemsets Ik+1 with k+1 items, such that Ik is contained inIk+1. scan all transactions once and add itemsets that have support > minSupport. k++ until no new frequent itemsets are found

Finding Frequent itemsets, on table “Purchases”, with minSupport=0.7 • In the first run, the following single itemsets are found to be frequent: {pen}, {ink}, {milk}. • Now we generate the candidates for k=2: {pen, ink}, {pen, milk}, {pen, juice}, {ink, milk}, {ink, juice} and {milk, juice}. • By scanning the relation, we determine that the following are frequent: {pen, ink}, {pen, milk}. • Now we generate the candidates for k=3: {pen, ink, milk}, {pen, milk, juice}, {pen, ink, juice}. • By scanning the relation, we determine that none of these are frequent, and the algorithm ends with: { {pen}, {ink}, {milk}, {pen, ink}, {pen, milk} }

Algorithm refinement: • More complex algorithms use the same tools: iterative generation and testing of candidate itemsets. • One important refinement: after the candidate-generation phase, and before the scan of the relation (A-priori), eliminate candidate itemsets in which there is a subset which is not frequent. This is due to the A-Priori property. • In the second iteration, this means we would eliminate {pen, juice}, {ink, juice} and {milk, juice} as candidates since {juice} is not frequent. So we only check {pen, ink}, {pen, milk} and {ink, milk}. • So only {pen, ink,milk} is generated as a candidate, but it is eliminated before the scan because {ink, milk} is not frequent. • So we don’t perform the 3rd iteration of the relation. Not frequent

Association Rules • Up until now we discussed identification of frequent item sets. We now wish to go one step further. • An association rule is of the structure {pen}=> {ink} • It should be read as: “if a pen is purchased in a transaction, it is likely that ink will also be purchased in that transaction”. • It describes the data in the DB (past). Extrapolation to future transactions should be done with caution. • More formally, an Association Rule is LHS=>RHS, where both LHS and RHS are sets of items, and implies that if every item in LHS was purchased in a transaction, it is likely that the items in RHS are purchased as well.

Measures for Association Rules • Support of “LHS=>RHS”is the support of the itemset (LHS U RHS). In other words: the fraction of transactions that contain all items in (LHS U RHS) . • Confidence of“LHS=>RHS”: Consider all transactions which contain all items in LHS. The fraction of these transactions that also contain all items in RHS, is the confidence of RHS. • The confidence of a rule is an indication of the strength of the rule. • What is the support of {pen}=>{ink}? And the confidence? • What is the support of {ink}=>{pen}? And the confidence?

Finding Association rules • A user can ask for rules with minimum support minSup and minimum confidence minConf. • Firstly, all frequent itemsets with support>minSup are computed with the previous Algorithm. • Secondly, rules are generated using the frequent itemsets, and checked for minConf.

Finding Association rules • Find all frequent itemsets using the previous alg. • For each frequent itemset X with support S(X): For each division of X into 2 itemsets: Devide X into 2 itemsets LHS and RHS. The Confidence of LHS=>RHS is S(X)/S(LHS). • We computed S(LHS) in the previous algorithm (because LHS is frequent since X is frequent).

Generalized association rules We would like to know if the rule {pen}=>{juice} is different on the first day of the month compared to other days. How? What are its support and confidence generally? And on the first days of the month?

Generalized association rules • By specifying different attributes to group by (date in the last example), we can come up with interesting rules which we would otherwise miss. • Another example would be to group by location and check if the same rules apply for customers from Jerusalem compared to Tel Aviv. • By comparing the support and confidence of the rules we can observe differences in the data on different conditions.

Caution in prediction • When we find a pattern in the data, we wish to use it for prediction (that is in many case the point). • However, we have to be cautious about this. • For example: suppose {pen}=>{ink} has a high support and confidence. We might give a discount on pens in order to increase sales of pens and therefore also in sales of ink. • However, this assumes a causal link between {pen} and {ink}.

Caution in prediction • Suppose pens and pencils are always sold together (for example because customers tend to buy writing instruments together). • We would then also get the rule {pencil}=>{ink} with the same support and confidence as {pen}=>{ink} • However, it is clear there is no causal link between buying pencils and buying ink. • If we promoted pencils it would not cause an increase in sales of ink, despite high support and confidence. • The chance to infer “wrong” rules (rules which are not causal links) decreases as the DB size increases, but we should keep in mind that such rules do come up. • Therefore, the generated rules are a only good starting point for identifying causal links.

Classification and Regression rules • Consider the following relation: InsuranceInfo(age integer, carType string, highRisk bool) • The relation holds information about current customers. • The company wants to use the data in order to predict if a new customer, whose age and carType are known, is at high risk (and therefore charge higher insurance fee of course). • Such a rule for example could be “if age is between 18 and 23, and carType is either ‘sports’ or ‘truck’, the risk is high”.

Classification and Regression rules • Such rules, where we are only interested in predicting one attribute are special. • The attribute which we predict is called the Dependent attribute. • The other attributes are called the Predictor attributes. • If the dependant attribute is categorical, we call such rules classification rules. • If the dependent attribute is numerical, we call such rules regression rules.

Regression in a nutshell Jim’s cows (training set) new cow (test set)

Regression in a nutshell • Assume that the Rate is a linear combination of the other attributes: Rate= w0 + w1*BP + w2*MA + w3*AGE + w4*NOC • Our goal is thus to find w0, w1, w2, w3, w4 (which actually means how strongly each attribute affects the Rate) • We thus want to minimize: Σ(Rate(i)-[w0+w1*BP(i)+w2*MA(i)+w3*AGE(i)+w4*NOC(i) ]) i=Cow number i Prediction of Rate using w0-w4 Real Rate

Regression in a nutshell • This minimization is pretty straightforward (though outside the scope of this course). • It will give better coefficients the larger the “training set” is. • The assumption that the sum is linear is wrong in many cases. Hence the use of SVM. • Notice this only deals with the case of all attributes being numerical. • All this and more in Intro. to Machine Learning course