Data Mining

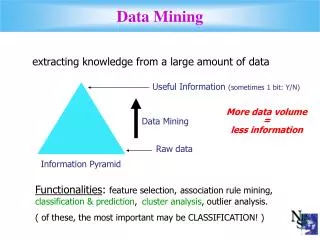



Presentation Transcript

Data Mining

Data mining is extracting knowledge from a large amount of data.

Information Pyramid: raw data forms the base, and useful information (sometimes just 1 bit: Y/N) sits at the top. Data mining is the step that gets from one to the other. More data volume = less information.

Functionalities: feature selection, association rule mining, classification and prediction, cluster analysis, and outlier analysis. Of these, the most important may be classification.

Classification

Classification means predicting the class of an unclassified data sample based on some history (the training data).

Training data:

| Feature1 | Feature2 | Feature3 | Class |
|---|---|---|---|
| a1 | b1 | c1 | A |
| a2 | b2 | c2 | A |
| a3 | b3 | c3 | B |

An unclassified sample (a, b, c) is fed to the classifier, which outputs the predicted class of that sample.

Eager classifier: builds a classifier model in advance, e.g. a decision tree or a trained neural network.

Lazy classifier: uses the training data each time, e.g. k-nearest neighbor.

k-Nearest Neighbor (kNN) Classification and Closed-k-Nearest Neighbor (CkNN) Classification

1) Select a suitable value for k.
2) Determine a suitable distance or similarity notion.
3) Find the k nearest neighbor set [closed] of the unclassified sample.
4) Find the plurality class in the nearest neighbor set.
5) Assign the plurality class as the predicted class of the sample.

Example: T is the unclassified sample, the distance is Euclidean, and k = 3, so we need the 3 closest neighbors. Move out from T until there are ≥ 3 neighbors. The first boundary reached holds 1 neighbor. The next brings the count to 2. The next brings it to more than 3, because several points lie on that same boundary line.

kNN arbitrarily selects one point from that boundary line as the 3rd nearest neighbor, whereas CkNN includes all points on that boundary line.

CkNN yields higher classification accuracy than traditional kNN. At what additional cost? Actually, at negative cost: it is faster and more accurate.
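The five steps and the boundary-tie example can be sketched in a few lines of Python. This is only an illustrative, horizontal (row-by-row) sketch, not the P-tree implementation measured later in the slides. The names `neighbor_set` and `classify` and the sample points are hypothetical, chosen so that three training points tie at the 3rd-nearest distance.

```python
import math
from collections import Counter

def euclidean(p, q):
    """Euclidean distance between two equal-length points."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def neighbor_set(train, sample, k, closed=False, dist=euclidean):
    """Step 3: return the k nearest (features, label) rows.

    With closed=True, every row tied with the k-th nearest row is kept too,
    so the set may hold more than k rows.
    """
    ranked = sorted(train, key=lambda row: dist(row[0], sample))
    if not closed or len(ranked) <= k:
        # Plain kNN: ties at the boundary are cut off arbitrarily
        # (here: by input order, because Python's sort is stable).
        return ranked[:k]
    kth = dist(ranked[k - 1][0], sample)
    return [row for row in ranked if dist(row[0], sample) <= kth]

def classify(train, sample, k, closed=False, dist=euclidean):
    """Steps 4 and 5: the plurality class of the neighbor set is the prediction."""
    votes = Counter(label for _, label in neighbor_set(train, sample, k, closed, dist))
    return votes.most_common(1)[0][0]

# Hypothetical data: one point at distance 1, one at sqrt(2),
# and three points tied on the boundary at distance 2.
T = (0, 0)
train = [
    ((1, 0), "A"),
    ((1, 1), "B"),
    ((2, 0), "A"),
    ((0, 2), "B"),
    ((-2, 0), "B"),
]

print(classify(train, T, k=3))               # plain kNN
print(classify(train, T, k=3, closed=True))  # closed kNN
```

With this data, plain kNN keeps only one of the three tied points and votes A (2 to 1), while the closed neighbor set holds all five points and votes B (3 to 2). The tie-break decides the prediction, which is the effect the slide is pointing at.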

Performance

Experiments were run on two sets of aerial remotely sensed images of the Best Management Plot (BMP) of the Oakes Irrigation Test Area (OITA), ND. The data contains 6 bands: Red, Green, and Blue reflectance values, Soil Moisture, Nitrate, and Yield (the class label). Band values range from 0 to 255 (8 bits). Yield is considered at 8 classes, or levels.

Performance – Accuracy, 1997 Dataset

The 3 horizontal methods fall in the middle; the 3 vertical methods are the 2 most accurate and the least accurate.

[Figure: accuracy (%) versus training set size (no. of pixels, 256 to 262144) for kNN-Manhattan, kNN-Euclidean, kNN-Max, kNN using HOBbit distance, P-tree Closed-kNN-max, and Closed-kNN using HOBbit distance.]

Performance – Accuracy, 1998 Dataset

The 3 horizontal methods fall in the middle; the 3 vertical methods are the 2 most accurate and the least accurate.

[Figure: accuracy (%) versus training set size (no. of pixels, 256 to 262144) for kNN-Manhattan, kNN-Euclidean, kNN-Max, kNN using HOBbit distance, P-tree Closed-kNN-max, and Closed-kNN using HOBbit distance.]
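The accuracy comparison varies the distance notion used by kNN. As a rough sketch, the Manhattan, Euclidean, and Max distances can be written as below. The slides do not define the HOBbit distance, so `hobbit_1d` and `hobbit` rest on an assumption: they follow the High Order Bit definition as I understand it from the P-tree literature (the number of low-order bits that must be dropped before two values agree, maximized over the bands). Treat that part as a guess, not as the authors' exact formulation. All function names are hypothetical.

```python
import math

def manhattan(p, q):
    """Sum of absolute band differences."""
    return sum(abs(a - b) for a, b in zip(p, q))

def euclidean(p, q):
    """Straight-line distance."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def max_distance(p, q):
    """Largest absolute band difference."""
    return max(abs(a - b) for a, b in zip(p, q))

def hobbit_1d(a, b):
    """ASSUMED HOBbit distance for two non-negative integers:
    how many right shifts are needed before a and b become equal."""
    shifts = 0
    while a != b:
        a >>= 1
        b >>= 1
        shifts += 1
    return shifts

def hobbit(p, q):
    """ASSUMED multi-band HOBbit distance: the maximum over the bands."""
    return max(hobbit_1d(a, b) for a, b in zip(p, q))

# Two hypothetical pixels with 8-bit band values.
p = (200, 17, 64)
q = (203, 16, 64)
print(manhattan(p, q), euclidean(p, q), max_distance(p, q), hobbit(p, q))
```

Under this assumed definition, the HOBbit distance depends on bit patterns and not on numeric differences: 127 and 128 differ by 1 but share no high-order bits in 8-bit form, so `hobbit_1d(127, 128)` is 8.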

Performance – Speed, 1997 Dataset

The 3 horizontal methods fall in the middle; the 3 vertical methods are the 2 fastest (the same 2 that are most accurate) and the slowest.

Hint: NEVER use a log scale to show a WIN!

[Figure: per-sample classification time (sec, 0.0001 to 1) versus training set size (no. of pixels, 256 to 262144), both axes on a logarithmic scale, for kNN-Manhattan, kNN-Euclidean, kNN-Max, kNN using HOBbit distance, P-tree Closed-kNN-max, and Closed-kNN using HOBbit distance.]

Performance – Speed, 1998 Dataset

The 3 horizontal methods fall in the middle; the 3 vertical methods are the 2 fastest (the same 2 that are most accurate) and the slowest.

Win-win situation (almost never happens): P-tree CkNN and CkNN-H are more accurate and much faster. kNN-H is not recommended because it is slower and less accurate: it doesn't use closed neighbor sets, and it requires another step to get rid of ties (why do it?). Horizontal kNNs are not recommended because they are less accurate and slower.

[Figure: per-sample classification time (sec, 0.0001 to 1) versus training set size (no. of pixels, 256 to 262144), both axes on a logarithmic scale, for kNN-Manhattan, kNN-Euclidean, kNN-Max, kNN using HOBbit distance, P-tree Closed-kNN-max, and Closed-kNN using HOBbit distance.]