search engine

Importance of search engines and how they play an important role in developing search criteria


Presentation Transcript

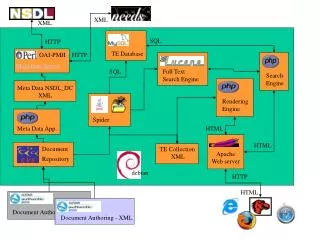

A web search engine is a software system designed to search for information on the World Wide Web. The search results are generally presented as a list of results, often referred to as search engine results pages (SERPs).

Working of a search engine? A search engine works in the following steps:
• Crawling
• Caching
• Indexing

Crawling is the process of visiting websites to get information about them. This is done by web crawlers, also called spiders or robots. After a snapshot of a page is taken, it is saved in the database. Caching is fetching data related to the website and storing it in the database. Indexing is the process of listing the fetched web pages. Webmasters control website-related activities.

WORLD WIDE WEB: The World Wide Web (abbreviated WWW or the Web) is an information space where documents and other web resources are identified by Uniform Resource Locators (URLs), interlinked by hypertext links, and accessible via the Internet.
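
As a small illustration of how a URL identifies a resource, the sketch below splits a URL into its parts and resolves a relative hypertext link against it, using Python's standard `urllib.parse` module. The address `example.com` and the page names are made up for the example.

```python
from urllib.parse import urlparse, urljoin

# Hypothetical URL, used only for illustration.
url = "https://example.com/docs/search.html?q=crawler#intro"

# Split the URL into its named components.
parts = urlparse(url)
print(parts.scheme)    # protocol used to reach the resource
print(parts.netloc)    # host that serves the resource
print(parts.path)      # location of the document on that host
print(parts.query)     # parameters passed to the resource
print(parts.fragment)  # position inside the document

# A hypertext link on the page may be relative; resolve it against the page URL.
resolved = urljoin(url, "../about.html")
print(resolved)
```

Resolving relative links like this is what lets a program follow links from one document to the next.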

Web crawler? A web crawler, sometimes called a spider, is an Internet bot that systematically browses the World Wide Web, typically for the purpose of web indexing.
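
The idea of systematic browsing can be sketched as a breadth-first traversal of links. The example below is a minimal sketch, not how any real search engine is implemented: it assumes a tiny in-memory "web" (the `FAKE_WEB` dictionary, with invented URLs) instead of making network requests, and the function name `crawl` and the `max_pages` limit are illustrative choices.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the links found on that page.
FAKE_WEB = {
    "http://a.example/": ["http://a.example/about", "http://b.example/"],
    "http://a.example/about": ["http://a.example/"],
    "http://b.example/": ["http://c.example/"],
    "http://c.example/": [],
}

def crawl(seed, web, max_pages=100):
    """Visit pages breadth-first from the seed and return URLs in visit order."""
    visited = []          # pages actually visited, in order
    seen = {seed}         # URLs already queued, so no page is queued twice
    queue = deque([seed])
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        if url not in web:
            continue      # unknown page: nothing to read, skip it
        visited.append(url)
        for link in web[url]:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited

order = crawl("http://a.example/", FAKE_WEB)
print(order)
```

The `seen` set is what keeps the crawler from looping forever when pages link back to each other, as the two `a.example` pages do here.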

What is indexing? Search engine indexing collects, parses, and stores data to facilitate fast and accurate information retrieval.
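
One common structure for this is an inverted index, which maps each word to the pages that contain it. The sketch below is a simplified illustration under stated assumptions: the page names and texts in `PAGES` are invented, and the helper names `tokenize`, `build_index`, and `search` are hypothetical, not part of any particular search engine.

```python
import re
from collections import defaultdict

def tokenize(text):
    """Parse text into lowercase word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(pages):
    """Collect and store, for every word, the set of pages containing it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index

def search(index, query):
    """Return the pages that contain every word of the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results

# Hypothetical pages, used only for illustration.
PAGES = {
    "page1": "Web crawlers browse the World Wide Web",
    "page2": "A search engine builds an index of web pages",
    "page3": "Indexing stores data for fast retrieval",
}

index = build_index(PAGES)
hits = search(index, "web index")
print(hits)
```

Because the index is built once in advance, answering a query only requires looking up a few words instead of rereading every page.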

What is fetching? Fetching is gathering the correct, relevant data from the collection of data available within the World Wide Web (WWW) registered library.