Enhancing Reinforcement Learning through Autonomous Recombinant Process Models

This thesis explores Recombinant Reinforcement Learning (RRL), focusing on improving task learning efficiency for RL agents, particularly robots. By leveraging a repository of diverse process models, RRL enables agents to combine information from prior tasks, facilitating quicker adaptation to new challenges. The research addresses representation choices using Dynamic Bayesian Networks and Decision Trees. Key benefits of RRL include modularity in task performance, efficient transfer of prior knowledge, and optimization under partial observability—paving the way for advancements in vision-based robotics and event understanding.


Presentation Transcript

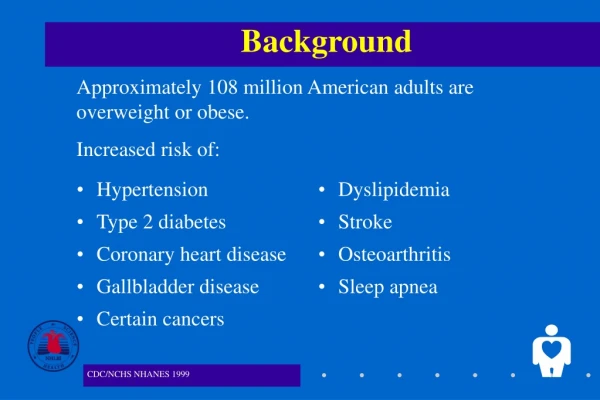

Introducing Recombinant Reinforcement Learning

Funlade T. Sunmola (PhD Candidate)
Thesis Committee: Dr. Ela Claridge, Prof. Marta Kwiatkowska and Dr. Jeremy Wyatt (supervisor)
School of Computer Science, The University of Birmingham, Edgbaston, Birmingham B15 2TT, UK.

Background

Reinforcement Learning (RL) agents learn to do tasks by iteratively performing actions in the world and using the resulting experiences to decide future actions. The experiences encapsulate, amongst other things, a reward or reinforcement for the actions taken. An agent learns to perform intelligently either by building a process model of its environment and planning with that model, or simply by learning the value of each action in every state and performing the action with the highest value. Model-based reinforcement learners are much more data-efficient and tend to be the better choice, especially where real-world actions are expensive and computation time is relatively cheap.

We express recombinant RL as the autonomous recombination of processes, especially process models, in learning and performing new tasks based on reinforcement.

MOTIVATION: We seek to endow agents, particularly robots, with the ability to combine information from process models for separate and possibly diverse tasks, so that they learn more quickly on a new task that shares some features with each of the models for the previous tasks.

Premise

• The environment has structure that can be exploited.
• Planning and learning are primarily model-based.
• A repository of process models exists for related tasks.
• The process is open to learning opportunities (exploration).

Illustrating Recombinant RL

BENEFITS:

• SCALE UP – exploit modularity in task performance.
• TRANSFER – share and reuse components of process models.
• OVERCOME PARTIAL OBSERVABILITY
• SELECTIVE OPTIMISATION & COMPENSATION

Current Issues

1. CHOICE OF REPRESENTATION: How do we represent process models in Recombinant RL?
OPTION – Probabilistic graphical models. We are using two-time-slice Dynamic Bayesian Networks (DBNs) and decision trees / Algebraic Decision Diagrams (ADDs).

Probabilistic graphical models are graphical representations of probabilistic structure, along with functions that can be used to derive joint distributions. Examples include Markov random fields and Bayesian networks. They combine the representational and algorithmic power of graph theory with probability theory.

Benefits include:

• Clear semantics – easy to understand and interpret.
• Provides an attractive basis for inference algorithms.
• Facilitates processing of partial observations – hidden variables and missing data.
• Dependencies can be handled efficiently – partitioned and exploited.
• Components of the network can be targeted for reuse.

2. Transfer of prior information between models. OPTION – Using methods of imaginary data?

3. Exploration control of learning with the recombined model. OPTION – Approximation techniques: optimistic model selection? MCMC?

4. Real-world applications – vision-based robotics … event understanding.

Framework

We are developing a system that will enable intelligent agents to learn compact models of new tasks by identifying similarities and differences across a set of related process models.

INPUT:

• A description of the new task in the form of a ‘task graph’ and a ‘pro-forma prior model’ of the task environment.
• A set of related ‘prospective prior models’ retrieved from a repository of donor process models.

KEY OPERATIONS:

[Diagram labels: basis for probabilistic inference; optimal policy]

OUTPUT:

• Learned compact process model of the new task.
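
The representation and transfer options raised under issues 1 and 2 can be illustrated with a minimal sketch. It is not the system described in the poster: it assumes that one factor of a two-time-slice DBN is stored as a table of counts, and that "imaginary data" means adding a donor model's counts, scaled by a weight, to the new model as pseudo-counts. The class `FactoredCPT`, the method `seed_from_donor`, the weight of 0.5, and the variable and action names (`door_open`, `push`) are all hypothetical names chosen for illustration.

```python
from collections import defaultdict


class FactoredCPT:
    """One factor of a two-time-slice DBN: P(child at t+1 | parents at t, action).

    Probabilities are estimated from counts with a symmetric pseudo-count
    prior. This is an illustrative assumption, not the poster's method.
    """

    def __init__(self, parents, values, prior_count=1.0):
        self.parents = tuple(parents)    # parent variable names in slice t
        self.values = tuple(values)      # values the child can take in slice t+1
        self.prior_count = prior_count   # pseudo-count added to every value
        self.counts = defaultdict(lambda: defaultdict(float))

    def _key(self, state, action):
        # Only the parent variables matter; the rest of the state is ignored.
        return (action,) + tuple(state[p] for p in self.parents)

    def seed_from_donor(self, donor, weight):
        """Add the donor's counts, scaled by `weight`, as imaginary data."""
        if donor.parents != self.parents or donor.values != self.values:
            raise ValueError("donor factor must share parents and values")
        for key, row in donor.counts.items():
            for value, n in row.items():
                self.counts[key][value] += weight * n

    def update(self, state, action, next_value):
        """Record one real transition observed in the new task."""
        self.counts[self._key(state, action)][next_value] += 1.0

    def prob(self, state, action, next_value):
        row = self.counts.get(self._key(state, action), {})
        total = sum(row.values()) + self.prior_count * len(self.values)
        return (row.get(next_value, 0.0) + self.prior_count) / total


# Donor model from a previous task: pushing an open door succeeded 8 times in 10.
donor = FactoredCPT(parents=["door_open"], values=[True, False])
for _ in range(8):
    donor.update({"door_open": True}, "push", True)
for _ in range(2):
    donor.update({"door_open": True}, "push", False)

# New task: reuse the donor factor at half weight, then learn from real data.
target = FactoredCPT(parents=["door_open"], values=[True, False])
target.seed_from_donor(donor, weight=0.5)

state = {"door_open": True}
p_before = target.prob(state, "push", True)   # (4 + 1) / (5 + 2) = 5/7
target.update(state, "push", False)           # one real failure in the new task
p_after = target.prob(state, "push", True)    # (4 + 1) / (6 + 2) = 5/8
print(round(p_before, 3), round(p_after, 3))
```

In this sketch the weight controls how strongly the donor model is trusted: a small weight lets a few real observations override the transferred prior, while a large weight makes the new model slow to depart from the donor. Because each factor is stored separately, an individual component of the network can be seeded from a different donor, which is one way to read "components of the network can be targeted for reuse".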