Analyzing & Interpreting Data



Analyzing & Interpreting Data Assessment Institute Summer 2005

Categorical vs. Continuous Variables • Categorical Variables • Examples Student’s major, enrollment status, gender, ethnicity; also whether or not the student passed the cutoff on a test • Continuous Variables • Examples GPA, test scores, number of credit hours. • Why make this distinction? • Whether a variable is categorical or continuous affects whether a particular statistic can be used • Doesn’t make sense to calculate the average ethnicity of students!

Averages • Typical value of a variable • In assessment we commonly compare averages of: • Different groups • Each group consists of different people • Avg. score on a test for students in different classes • Different occasions • Same people tested on each occasion • Avg. score on a test for students who took the test as freshmen and then again when they were seniors

Before calculating an average… • Check to make sure that the variable: • Is continuous • Has values in your data set that are within the possible limits • Check minimum and maximum values • Does not have a distribution that is overly skewed • If so, consider using median • Does not have any values that would be considered outliers
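The checks above can be sketched in a few lines of Python. This is a minimal illustration, not part of the original slides: the function name, the made-up scores, and the use of Tukey's 1.5 × IQR rule to flag outliers are all assumptions.

```python
from statistics import mean, median, quantiles

def check_before_averaging(values, lowest_possible, highest_possible):
    """Screen a continuous variable before reporting its average."""
    # Values outside the possible limits usually signal data-entry errors.
    out_of_range = [v for v in values
                    if not lowest_possible <= v <= highest_possible]
    # Assumed convention (Tukey's rule): flag values more than
    # 1.5 interquartile ranges beyond the quartiles as possible outliers.
    q1, _, q3 = quantiles(values, n=4)
    fence = 1.5 * (q3 - q1)
    outliers = [v for v in values if v < q1 - fence or v > q3 + fence]
    return {
        "min": min(values),
        "max": max(values),
        "mean": mean(values),
        "median": median(values),
        "out_of_range": out_of_range,
        "possible_outliers": outliers,
    }

# Hypothetical scores on a 10-item test; 70 is a deliberate typing error.
scores = [6, 7, 7, 8, 5, 6, 7, 9, 6, 70]
report = check_before_averaging(scores, lowest_possible=0, highest_possible=10)
print(report)
```

In this made-up data set the single impossible value pulls the mean to 13.1 while the median stays at 7, which is why the minimum and maximum are worth checking first.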

Correlations (r) • Captures linear relationship between two continuous variables (X and Y) • Ranges from -1 to 1 with values closer to |1| indicating a stronger relationship than values closer to 0 (no relationship) • Positive values: • High X associated with high Y; low X associated with low Y • Negative values: • High X associated with low Y; low X associated with high Y
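As a rough illustration of what r captures, the Pearson correlation can be computed directly from its definition. The function name and the tiny data sets are hypothetical; in practice SPSS or another statistics package would do this.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    # Sum of cross-products and sums of squares of deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

# Hypothetical data: high X with high Y gives a positive r,
# high X with low Y gives a negative r.
r_positive = pearson_r([1, 2, 3], [2, 4, 6])
r_negative = pearson_r([1, 2, 3], [6, 4, 2])
print(r_positive, r_negative)
```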

In this example, dropping cases that appeared to be outliers did not change the relationship between the two administrations (r = .30), nor their averages. Scatterplot: Does relationship appear linear? Is there a problem with restriction of range? Do there appear to be outliers?

Standards • May want to use standard setting procedures to establish “cut-offs” for proficiency on the test • Could be that students are gaining knowledge/skills over time, but are they gaining enough? • Another common statistic calculated in assessment is the % of students meeting or exceeding a standard

A. Are the 29 senior music majors in Spring 2005 scoring higher on the Vocal Techniques 10-item test than last year’s 20 senior music majors? • Compare averages of different groups Yes, this year’s seniors scored higher (M = 6.72) than last year’s (M = 6.65).

B. Are senior kinesiology majors in different concentrations (Sports Management vs. Therapeutic Recreation) scoring differently on a test used to assess their “core” kinesiology knowledge? • Compare averages of different groups

C. On the Information Seeking Skills Test (ISST), what percent of incoming freshmen in Fall 2004 met or exceeded the score necessary to be considered as having “proficient” information literacy skills? • Percent of students meeting or exceeding a standard • Of the 2862 students attempting the ISST, 2751 (96%) met or exceeded the “proficient” standard.
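The percentage on this slide is simple arithmetic on the two counts reported. The sketch below reproduces it and shows the same calculation starting from raw scores; the function name, the raw scores, and the cutoff of 18 are hypothetical.

```python
def percent_meeting_standard(scores, cutoff):
    """Percent of scores at or above the cutoff."""
    met = sum(1 for s in scores if s >= cutoff)
    return 100 * met / len(scores)

# Counts reported on the slide: 2751 of 2862 students met the standard.
pct_isst = 100 * 2751 / 2862
print(f"{pct_isst:.0f}%")

# Hypothetical raw scores and cutoff, for illustration only.
hypothetical_scores = [12, 18, 25, 30, 9]
pct = percent_meeting_standard(hypothetical_scores, cutoff=18)
print(pct)
```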

D. Are the well-being levels (as measured using six subscales - e.g., self-acceptance, autonomy, etc.) of incoming JMU freshmen different than the well-being levels of adults? • Compare averages of different groups (JMU students vs. adults) • More than one variable (six different subscales)

While the practical significance of the differences for Self-Acceptance and Purpose in Life is considered small (d = .14 and d = .25), the differences for Autonomy (d = .50) and Environmental Mastery (d = .35) are considered medium and small to medium, respectively. • Similarities: JMU incoming freshmen seem to be similar to the adult sample (N = 1100) in Positive Relations with Others and Personal Growth. • Differences: JMU incoming freshmen have significantly lower Autonomy and Environmental Mastery well-being compared to the adult sample and significantly higher Self-Acceptance and Purpose in Life.

E. Are students scoring higher on the Health and Wellness Questionnaire as sophomores compared to when they were freshmen? Does the difference depend on whether or not they have completed their wellness course requirement? • Comparing Means Across Different Occasions for Different Groups

“Non-Completers” N = 21 “Completers” N = 283

F. Are the writing portfolios collected in the fall semester yielding higher ratings than writing portfolios collected in the spring semester? Are the differences between the semesters the same across three academic years? • Compare averages of different groups • Six different groups (fall and spring for each academic year)

In the 2001-2002 and 2002-2003 academic years, fall portfolios were rated slightly higher than spring portfolios. In the most current academic year, the fall and spring portfolio averages were about the same. There doesn’t seem to be overwhelming evidence that the difference between fall and spring portfolios is of importance.

G. Are students who obtained transfer or AP credit for their general education sociocultural domain course scoring differently on the 27-item Sociocultural Domain Assessment (SDA) than students who completed their courses at JMU? • Compare averages of different groups • JMU students: N = 369, M = 18.63, SD = 3.83 • AP/transfer students: N = 29, M = 18.55, SD = 3.68 • Difference was neither statistically significant, t(335) = .11, p = .92, nor practically significant (d = .02).
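The t and d values on this slide can be approximated from the summary statistics alone. The sketch below assumes a pooled-variance independent-samples t-test and Cohen's d based on the pooled standard deviation; the function name is hypothetical. Because it uses the Ns printed on the slide, its degrees of freedom (396) differ from the reported t(335).

```python
from math import sqrt

def independent_t_and_d(n1, m1, sd1, n2, m2, sd2):
    """Pooled-variance t statistic and Cohen's d from summary statistics."""
    df = n1 + n2 - 2
    # Pooled standard deviation weights each group's variance by its df.
    pooled_sd = sqrt(((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / df)
    d = (m1 - m2) / pooled_sd
    t = (m1 - m2) / (pooled_sd * sqrt(1 / n1 + 1 / n2))
    return t, d, df

# Summary statistics from the slide (JMU students vs. AP/transfer students).
t, d, df = independent_t_and_d(369, 18.63, 3.83, 29, 18.55, 3.68)
print(f"t({df}) = {t:.2f}, d = {d:.2f}")
```

Both values round to the figures on the slide (t = .11, d = .02). The p-value would come from the t distribution, which a statistics package supplies.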

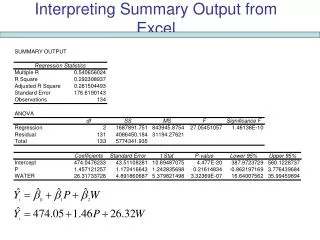

G. What is the relationship between a student’s general education sociocultural domain course grade and their score on the 27-item Sociocultural Domain Assessment (SDA)? • Relationship between two variables, finally! • Scatterplot annotations: r = .31, r = .23

Inferential Statistics • “How likely is it to have found results such as mine in a population where the null hypothesis is true?” • Comparing Averages of Different Groups • Independent Samples T-test • Null: Groups do not differ in population means • Comparing Averages Across Different Occasions • Paired Samples T-test • Null: Occasions do not differ in population means • Correlation • Null: No relationship between variables in the population • Typically, want to reject the null: p-value < .05
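For the paired-samples case, the test statistic is built from each person's difference score. The sketch below is a minimal illustration with made-up scores for five students; the function name and data are hypothetical, and the p-value (which requires the t distribution) is left to a statistics package such as SPSS.

```python
from math import sqrt
from statistics import mean, stdev

def paired_t(first_occasion, second_occasion):
    """Paired-samples t statistic and degrees of freedom."""
    # One difference score per person: second occasion minus first.
    diffs = [b - a for a, b in zip(first_occasion, second_occasion)]
    n = len(diffs)
    standard_error = stdev(diffs) / sqrt(n)
    return mean(diffs) / standard_error, n - 1

# Hypothetical scores for the same five students on two occasions.
as_freshmen = [10, 12, 9, 14, 11]
as_sophomores = [12, 13, 11, 15, 12]
t_paired, df_paired = paired_t(as_freshmen, as_sophomores)
print(f"t({df_paired}) = {t_paired:.2f}")
```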

Effect Sizes and Confidence Intervals • Statistical significance is a function of both the magnitude of the effect (e.g., difference between means) and sample size • Supplement with confidence intervals and effect sizes • SPSS provides you with confidence intervals • Can use Wilson’s Effect Size Calculator to obtain effect sizes
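To show what a confidence interval adds, the sketch below builds an approximate 95% interval for a difference between two group means. It uses the summary statistics from the AP/transfer vs. JMU comparison earlier in the presentation. It assumes a large-sample normal approximation with unpooled standard errors; SPSS uses the t distribution, so its interval would be somewhat wider. The function name is hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def mean_difference_ci(n1, m1, sd1, n2, m2, sd2, level=0.95):
    """Approximate confidence interval for m1 - m2 (normal approximation)."""
    standard_error = sqrt(sd1**2 / n1 + sd2**2 / n2)
    # Critical value, about 1.96 for a 95% interval.
    z = NormalDist().inv_cdf(0.5 + level / 2)
    diff = m1 - m2
    return diff - z * standard_error, diff + z * standard_error

# JMU students vs. AP/transfer students on the SDA.
low, high = mean_difference_ci(369, 18.63, 3.83, 29, 18.55, 3.68)
print(f"95% CI for the difference: ({low:.2f}, {high:.2f})")
```

The interval spans zero, which agrees with the non-significant result reported for that comparison.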

Wellness Domain Example: Goals & Objectives • Students take one of two courses to fulfill this requirement, either GHTH 100 or GKIN 100.

Knowledge of Health and Wellness (KWH) Test Specification Table

Data Management Plan • Wellness_Data.sav (N = 105) • Missing data indicated for all variables by "."

Item Analysis Item 1

Item Difficulty • The proportion of people who answered the item correctly (p) Used with dichotomously scored items • Correct Answer - score=1 • Incorrect Answer - score=0 • Item difficulty a.k.a. p-value • Dichotomous items • Mean=p • Variance=pq, where q = 1-p
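The identities Mean = p and Variance = pq can be checked on any set of 0/1 item scores. The sketch below uses ten hypothetical responses; the function name is illustrative.

```python
def item_difficulty(item_scores):
    """Return (p, pq) for a dichotomously scored item (1 = correct, 0 = incorrect)."""
    p = sum(item_scores) / len(item_scores)  # proportion correct = item mean
    q = 1 - p
    return p, p * q                          # variance of a 0/1 variable

# Hypothetical responses from ten students to one item.
responses = [1, 1, 0, 1, 0, 1, 1, 0, 1, 1]
p, variance = item_difficulty(responses)
print(f"p = {p:.2f}, variance = {variance:.2f}")
```

Here pq is the population variance (dividing by N); a sample variance that divides by N - 1 comes out slightly larger.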

SPSS output for 1st 6 items of 35 item GKIN100 Test3 Spring 2005 • Mean is item difficulty (p) • Std Dev is a measure of the variability in the item scores • Cases is the sample size on which the analysis is based

             Mean     Std Dev   Cases
1. ITEM1     .5609    .4972     271.0
2. ITEM2     .9520    .2141     271.0
3. ITEM3     .8598    .3479     271.0
4. ITEM4     .7454    .4364     271.0
5. ITEM5     .6089    .4889     271.0
6. ITEM6     .5793    .4946     271.0

58% of the sample obtained the correct response to Item 6. The difficulty or p-value of Item 6 is .58.

Easiest & Hardest Items • EASIEST (p = .99): 25. Causes of mortality today are: A. the same as in the early 20th century. B. mostly related to lifestyle factors. C. mostly due to fewer vaccinations. D. a result of contaminated water. • HARDEST (p = .14): 34. Which of the following is a healthy lifestyle that influences wellness? A. brushing your teeth B. physical fitness C. access to health care D. obesogenic environment

Item Difficulty Guidelines • High p-values, item is easy; low p-values, item is hard • If p-value=1.0 (or 0), everyone answering question correctly (or incorrectly) and there will be no variability in item scores • If p-value too low, item is too difficult, need revision or perhaps test is too long • Good to have a mixture of difficulty in items on test • Once know difficulty of items, usually sort them from easiest to hardest on test

Item Discrimination • Correlation between item score and total score on test • Since dealing with dichotomous items, this correlation is usually either a biserial or point-biserial correlation • Can range in value from -1 to 1 • Positive values closer to 1 are desirable

Item Discrimination Guidelines • Item discrimination: can the item separate the men from the boys (women from the girls) • Can the item differentiate between low or high scorers? • Want high item discrimination! • Consider dropping or revising items with discriminations lower than .30 • Can be negative, if so – check scoring key and if the key is correct, may want to drop or revise item • a.k.a. rpbis or Corrected Item-Total Correlation

Scatterplot of relationship between item 2 score (0 or 1) and total score: rpbis = .52. If I know your item score, I have a pretty good idea as to what your ability level or total score is. Scatterplot of relationship between item 17 score (0 or 1) and total score: rpbis = .18. If I know your item score, I DO NOT have a pretty good idea as to what your ability level or total score is.

SPSS output for 1st 6 items of 35 item GKIN100 Test3 Spring 2005 • Corrected Item-Total Correlation is Item Discrimination (rpbis) • Why is it called corrected item-total correlation? The "corrected" implies that the total is NOT the sum of all item scores, but the sum of item scores WITHOUT including the item in question.
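The effect of the correction can be seen by computing the item-total correlation both ways. The sketch below uses a tiny hypothetical response matrix (five students, three items); the function names are illustrative, and with 0/1 items the Pearson formula used here yields the point-biserial correlation.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def item_total_correlation(responses, item, corrected=True):
    """Correlate one item with the total score, optionally excluding that item."""
    item_scores = [row[item] for row in responses]
    totals = [sum(row) for row in responses]
    if corrected:
        # Remove the item's own contribution from each student's total.
        totals = [t - s for t, s in zip(totals, item_scores)]
    return pearson_r(item_scores, totals)

# Hypothetical 0/1 responses: rows are students, columns are items.
responses = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
    [0, 1, 1],
]
uncorrected = item_total_correlation(responses, item=0, corrected=False)
corrected = item_total_correlation(responses, item=0, corrected=True)
print(f"uncorrected = {uncorrected:.2f}, corrected = {corrected:.2f}")
```

In this made-up example the uncorrected value is about .48 while the corrected value is 0, because the item was partly correlating with itself.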

Percentage of sample choosing each alternative. Average total test score for students who chose each alternative. A = 1 B = 2 C = 3 D = 4 9 = Missing Notice how the highest average total test score (M = 27.65) is associated with the correct alternative (B). All other means are quite a bit lower. This indicates that the item is functioning well and will discriminate. This information is for item 2, where the item difficulty and discrimination were: p = .95, rpbis = .52

Percentage of sample choosing each alternative. Average total test score for students who chose each alternative. A = 1 B = 2 C = 3 D = 4 9 = Missing Notice how the highest average total test score (M = 27.91) is associated with the correct alternative (C). Unlike item 2, with this item all other means are fairly close to 27.91. This indicates that the item does not discriminate as well as item 2. This information is for item 17, where the item difficulty and discrimination were: p = .697, rpbis = .18
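The two quantities in this kind of distractor analysis (percentage choosing each alternative, and average total score of those who chose it) can be tabulated as follows. This is a sketch with hypothetical data and function names, not the SPSS procedure itself.

```python
from collections import defaultdict

def distractor_analysis(choices, total_scores):
    """Percent choosing each alternative and their mean total test score."""
    grouped = defaultdict(list)
    for choice, total in zip(choices, total_scores):
        grouped[choice].append(total)
    n = len(choices)
    return {
        alt: {
            "percent": 100 * len(totals) / n,
            "mean_total": sum(totals) / len(totals),
        }
        for alt, totals in sorted(grouped.items())
    }

# Hypothetical data: alternative chosen on one item and total test score.
choices = ["B", "B", "A", "B", "C", "B"]
total_scores = [30, 28, 20, 27, 22, 29]
table = distractor_analysis(choices, total_scores)
# The alternative whose choosers have the highest mean total score.
best = max(table, key=lambda alt: table[alt]["mean_total"])
for alt, stats in table.items():
    print(alt, f'{stats["percent"]:.1f}%', stats["mean_total"])
```

If the item is functioning well, `best` should be the keyed (correct) alternative, and the other means should be clearly lower.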

Took information from SPSS distractor analysis output and put it in the following graph. • Did this mainly for those items that were difficult (p < .50) or had low discrimination (rpbis < .20) 4. The DSHEA of 1994 has: A. labeled certain drugs illegal based on their active ingredient. B. caused health food companies to lose significant business. C. made it easier for fraudulent products to stay on the market. D. caused an increase in the cost of many dietary supplements.

Hard item - but pattern of means indicates it is not problematic. 31. _____ aging relates to lifestyle. A. Time-dependent B. Acquired C. Physical D. Mental

10. Chris MUST get a beer during the commercials each time he watches the NFL. Which stage of addiction does this demonstrate? A. Exposure B. Compulsion C. Loss of control D. This is not an example of addiction. This item may be problematic - students choosing "C" scoring almost as high on the test overall as those choosing "B".

Other Information from SPSS • Descriptive statistics for total score (Statistics for SCALE): Mean = 27.1033 (average total score), Variance = 19.1152, Std Dev = 4.3721 (average # of points by which total scores are varying from the mean), N of Variables = 35 (# items on the test) • A measure of the internal consistency reliability for your test called coefficient alpha: Alpha = .7779. Ranges from 0 to 1, with higher values indicating higher reliability. Want it to be > .60

Test Score Reliability • Reliability defined: extent or degree to which a scale/test consistently measures a person • Need a test/scale to be reliable in order to trust the test scores! If I administered a test to you today, wiped out your memory, administered it again to you tomorrow – you should receive the same score on both administrations! • How much would you trust a bathroom scale if you consecutively weighed yourself 4 times and obtained weights of 145, 149, 142, 150?

Internal Consistency Reliability • Internal consistency reliability: extent to which items on a test are highly intercorrelated • SPSS reports Cronbach’s coefficient alpha • Alpha may be low if: • Test is short • Items are measuring very different things (several different content areas or dimensions) • Low variability in your total scores or small range of ability in the sample you are testing • Test only contains either very easy items or very hard items
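Coefficient alpha compares the sum of the item variances with the variance of the total scores. The sketch below assumes the standard formula, alpha = k/(k-1) × (1 - sum of item variances / total-score variance), and uses a tiny hypothetical response matrix; the function name is illustrative.

```python
from statistics import variance

def cronbach_alpha(responses):
    """Coefficient alpha for a matrix of item scores (rows = people)."""
    k = len(responses[0])                      # number of items
    items = list(zip(*responses))              # one tuple of scores per item
    totals = [sum(row) for row in responses]   # each person's total score
    sum_item_variances = sum(variance(item) for item in items)
    return (k / (k - 1)) * (1 - sum_item_variances / variance(totals))

# Hypothetical 0/1 responses: five students, three items.
responses = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
    [0, 1, 1],
]
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.2f}")
```

With only three items and five students, alpha comes out at about .46. A short test with little spread in total scores yields a low alpha, consistent with the first and third causes listed above.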