Background

Budgeted Machine Learning of Bayesian Networks. Michael R. Gubbels , Mentor: Dr. Stephen D. Scott. Department of Computer Science and Engineering University of Nebraska—Lincoln. Background. Materials and Methods.

Background

E N D

Presentation Transcript

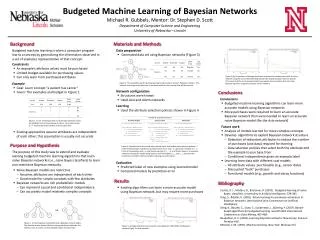

Budgeted Machine Learning of Bayesian Networks Michael R. Gubbels, Mentor: Dr. Stephen D. Scott Department of Computer Science and Engineering University of Nebraska—Lincoln Background Materials and Methods Budgeted machine learning is when a computer program learns a concept by generalizing the information observed in a set of examples representative of that concept. • Data preparation • Generated data set using Bayesian networks (Figure 3) • Constraints • An example’s attribute values must be purchased • Limited budget available for purchasing values • Can only learn from purchased attributes Figure 5 (b). Comparison of average prediction accuracies for models learned for the Asia network using the round robin (left) and biased robin (right) attribute selection policies. This shows that the more complex model may require more purchases than the naïve Bayesian classifier using these policies for this network. • Example • Goal: Learn concept “a patient has cancer” • Given: The examples and budget in Figure 1 Figure 3. The examples used for learning were generated using a “correct” Bayesian network. The network used to generate data was an accurate model for the concept that will be learned. • Network configuration • Structures were known • Used Asia and Alarm networks Conclusions • Conclusions • Budgeted machine learning algorithms can learn more accurate models using Bayesian networks • More purchases were required to learn an accurate Bayesian network than were needed to learn an accurate naïve Bayesian model (for the Asia network) • Learning • Used the attribute selection policies shown in Figure 4 Figure 1. An set of examples with no observable attribute values. An attribute’s cost is shown below its name. Each value shown as “?” must be purchased before it can be observed. • Future work • Analysis of models learned for more complex concepts • Develop algorithms to exploit Bayesian network structure • Detection of redundant attributes to reduce the number of purchases (and data) required for learning • Data selection policies that select both the attribute and the example to purchase from • Conditional independence given an example label • Learning from data with different cost models • All attribute values purchasable (e.g., sensor data) • Discounted “bulk” purchases • Functional models (e.g., growth and decay functions) • Existing approaches assume attributes are independent of each other; this assumption is usually not accurate Figure 4. Pseudocode for the round robin, biased robin, and random data selection policies. A is the set of attributes available to purchase values from, and a is a particular attribute in A. E is the set of examples, and e is a particular example in E. v is an attribute value in an example. M denotes the specific model being learned, and the variables mold and mnew represent the correctness of model M before and after learning new information. Purpose and Hypothesis The purpose of this study was to extend and evaluate existing budgeted machine learning algorithms that learn naïve Bayesian networks (i.e., naïve Bayes classifiers) to learn non-restrictive Bayesian networks. • Evaluation • Predicted label of new examples using learned model • Compared models by prediction error Bibliography • Naïve Bayesian models are restrictive • Assumes attributes are independent of each other • Good model for simple concepts with few attributes • Bayesian networks are rich probabilistic models • Can represent causal and conditional independence • Can accurately model relatively complex concepts Lizotte, D. J., Madani, O., & Greiner, R. (2003). Budgeted learning of naïve-Bayes classifiers. Uncertainty in Artificial Intelligence, 378-385. Tong, S., &Koller, D. (2001). Active learning for parameter estimation in Bayesian networks. International Joint Converences on Artificial Intelligence. Deng, K., Bourke, C., Scott, S., Sunderman, J., &Zheng, Y. (2007). Bandit-based algorithms for budgeted learning. Seventh IEEE International Conference on Data Mining, 463-468. Neapolitan, R. E. (2004). Learning bayesian networks. New Jersey: Pearson Prentice Hall. Mitchell, T. M. (1997). Machine learning. New York: McGraw-Hill. Results • Existing algorithms can learn a more accurate model using Bayesian network, but may require more purchases vs. Figure 2. A naïve Bayesian model (left) and a Bayesian network (right). The directed arrows denote influence among attributes (although this influence can vary when certain attribute values are observed). Figure 5. Average prediction accuracies for naïve Bayesian model (left) and Bayesian network (right) for Asia networks.