Single Image Super Resolution

Single Image Super Resolution. Seminar presented by: Tomer Faktor Advanced Topics in Computer Vision (048921) 12/01/2012. Outline. What is Image Super Resolution (SR)? Prior Art Today – Single Image SR: Using patch recurrence [Glasner et al., ICCV09]

Single Image Super Resolution. Seminar presented by: Tomer Faktor. Advanced Topics in Computer Vision (048921), 12/01/2012

Outline • What is Image Super Resolution (SR)? • Prior Art • Today – Single Image SR: • Using patch recurrence [Glasner et al., ICCV09] • Using sparse representations [Yang et al., CVPR08], [Zeyde et al., LNCS10]

From High to Low Res. and Back • High to low res.: blur (LPF) + down-sampling • Low to high res.: inverse problem
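The forward (high to low res.) direction can be sketched in a few lines. This is a minimal illustration, assuming a separable, odd-length low-pass kernel and an integer scale factor; the function name `degrade` and the edge-replication padding are our choices, not part of the talk.

```python
import numpy as np

def degrade(high_res, kernel_1d, scale):
    """Blur with a separable low-pass kernel, then keep every `scale`-th pixel.

    Assumes `kernel_1d` has odd length so the blurred image keeps its size.
    """
    k = np.asarray(kernel_1d, dtype=float)
    k = k / k.sum()                          # normalize so flat regions stay flat
    pad = len(k) // 2
    padded = np.pad(high_res.astype(float), pad, mode="edge")
    # Filter along rows, then along columns; 'valid' removes the padding again.
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="valid"), 1, padded)
    blurred = np.apply_along_axis(lambda c: np.convolve(c, k, mode="valid"), 0, rows)
    return blurred[::scale, ::scale]         # down-sampling (decimation)
```

SR tries to invert this mapping. Many different high res. images produce the same output, which is why the inverse problem is underdetermined.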

What is Image SR? • Inverse problem – underdetermined • Image SR - how to make it determined:

Goals of Image SR • Always: • More pixels - according to the scale factor • Low res. edges and details - maintained • Bonus: • Blurred edges - sharper • New high res. details missing in the low res.

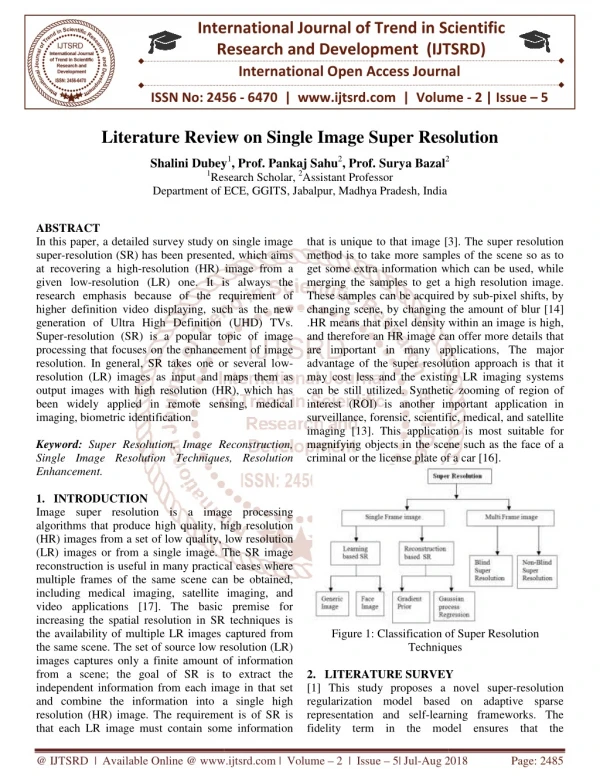

Multi-Image SR “Classical” [Irani91, Capel04, Farsiu04] Fusion

Example-based SR [Freeman01, Kim08] Example-based SR (Image Hallucination) Algorithm Blur kernel & scale factor External database of low and high res. image patches

Single Image SR Single-Image SR (Scale-Up) Algorithm Blur kernel & scale factor Image model/prior

Prior art • No blur, only down-sampling → interpolation: • LSI schemes – NN, bilinear, bicubic, etc. • Spatially adaptive and non-linear filters [Zhang08, Mallat10] • Blur + down-sampling → interpolation followed by deblurring
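Two of the LSI schemes listed above, nearest-neighbor and bilinear, are short enough to sketch. The function names and the sampling-grid convention (corners of the low res. grid map to corners of the high res. grid) are assumptions made for this illustration.

```python
import numpy as np

def upscale_nn(img, s):
    """Nearest-neighbor scale-up by integer factor s (pixel replication)."""
    return np.repeat(np.repeat(img, s, axis=0), s, axis=1)

def upscale_bilinear(img, s):
    """Bilinear scale-up by factor s, done as two 1-D linear interpolations."""
    h, w = img.shape
    ys = np.linspace(0, h - 1, h * s)        # high-res sample positions, rows
    xs = np.linspace(0, w - 1, w * s)        # high-res sample positions, columns
    rows = np.array([np.interp(xs, np.arange(w), r) for r in img])        # (h, w*s)
    return np.array([np.interp(ys, np.arange(h), c) for c in rows.T]).T   # (h*s, w*s)
```

Both add pixels but no new high res. detail, which is the baseline the later methods are compared against.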

Prior art • LS problem: • Add a regularization: • Tikhonov regularization, robust statistics, TV, etc. • Sparsity of global transform coefficients • Parametric learned edge model [Fattal07, Sun08]
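The formulas on this slide did not survive extraction. In notation that is our assumption (x: unknown high res. image, y: low res. input, H: blur, S: down-sampling, ρ: regularizer, λ: its weight), the LS problem and its regularized version are commonly written as:

```latex
\hat{x}_{\mathrm{LS}} = \arg\min_{x} \; \| S H x - y \|_2^2
\qquad\Longrightarrow\qquad
\hat{x} = \arg\min_{x} \; \| S H x - y \|_2^2 + \lambda \, \rho(x)
```

For example, ρ(x) = ‖Γx‖₂² gives Tikhonov regularization and ρ(x) = ‖∇x‖₁ gives TV.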

Super Resolution from a Single Image D. Glasner, S. Bagon and M. Irani ICCV 2009

BASIC Assumption Patches in a single image tend to redundantly recur many times within and across scales

Statistics of patch recurrence The impact of SR is mostly expressed here

Suggested Approach • Patch recurrence → a single image is enough for SR • Adapt ideas from classical and example-based SR: • SR linear constraints – in-scale patch redundancy instead of multi-image • Correspondence between low-high res. patches – cross-scale redundancy instead of external database • Result: a unified framework

Exploiting in-scale Patch redundancy • In the low res. – find K NN for each pixel • Compute their subpixel alignment • Different weight for each linear SR constraint (patch similarity)
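The first and third bullets can be sketched as a brute-force search. This is an illustration only: it works at integer pixel positions (the subpixel alignment step is omitted), and the Gaussian weight with parameter `sigma` is a hypothetical choice, since the slide does not give the weighting formula.

```python
import numpy as np

def extract_patches(img, p):
    """All overlapping p x p patches, flattened, in raster order."""
    h, w = img.shape
    return np.array([img[i:i + p, j:j + p].ravel()
                     for i in range(h - p + 1)
                     for j in range(w - p + 1)], dtype=float)

def knn_in_scale(img, p, query_index, k, sigma=1.0):
    """Indices, squared distances and weights of the k patches closest to the query."""
    patches = extract_patches(img, p)
    dist = np.sum((patches - patches[query_index]) ** 2, axis=1)
    dist[query_index] = np.inf               # do not match the patch to itself
    nn = np.argsort(dist)[:k]
    weights = np.exp(-dist[nn] / (2 * sigma ** 2))   # hypothetical similarity weight
    return nn, dist[nn], weights
```

Each returned neighbor would contribute one weighted linear constraint on the high res. image, playing the role of an extra low res. frame in classical SR.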

Exploiting Cross-scale redundancy [Figure: image pyramid. Known levels: decreasing res. by factor α. Unknown levels: increasing res. by factor α. Labels: NN, Parent, Copy.]
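A rough sketch of the NN → Parent → Copy idea: search for a low res. patch in a coarser version of the input, then take the region of the input that generated the match as a high res. candidate. Assumptions made here for brevity: an integer factor `s`, block averaging in place of the true blur kernel, and an exhaustive search; the function name is ours.

```python
import numpy as np

def cross_scale_parent(img, top_left, p, s):
    """Return a (p*s x p*s) high-res candidate for the p x p patch at `top_left`."""
    h, w = img.shape
    h2, w2 = h // s * s, w // s * s
    # Known coarser level: block-average the input by factor s.
    coarse = img[:h2, :w2].astype(float).reshape(h2 // s, s, w2 // s, s).mean(axis=(1, 3))
    i0, j0 = top_left
    query = img[i0:i0 + p, j0:j0 + p]
    best, best_d = None, np.inf
    for i in range(coarse.shape[0] - p + 1):
        for j in range(coarse.shape[1] - p + 1):
            d = np.sum((coarse[i:i + p, j:j + p] - query) ** 2)
            if d < best_d:                   # keep the first best match (the NN)
                best, best_d = (i, j), d
    i, j = best
    parent = img[i * s:(i + p) * s, j * s:(j + p) * s]   # parent of the NN
    return parent, best_d
```

The parent patch would then be copied to the location in the unknown finer level that corresponds to `top_left`.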

Important Implementation Details • Coarse-to-fine – gradual increase in resolution; adds numerical stability and improves results • Back-projection [Irani91] – to ensure consistency of the recovered high res. image with the low res. input • Color images – convert RGB to YIQ: • For Y – the suggested approach • For I, Q – bicubic interpolation
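Back-projection can be sketched as a simple residual-correction loop. To stay self-contained, this version assumes block averaging as the degradation and pixel replication as the back-projection kernel, with an integer factor `s`; the operators used in the paper are not specified on the slide.

```python
import numpy as np

def downscale(x, s):
    """Stand-in degradation: average over s x s blocks."""
    h, w = x.shape
    return x.reshape(h // s, s, w // s, s).mean(axis=(1, 3))

def upscale(y, s):
    """Stand-in back-projection kernel: pixel replication."""
    return np.repeat(np.repeat(y, s, axis=0), s, axis=1)

def back_project(x0, y, s, n_iter=10, step=1.0):
    """Push the estimate x0 towards consistency with the low res. image y."""
    x = x0.astype(float).copy()
    for _ in range(n_iter):
        residual = y - downscale(x, s)       # disagreement in the low res. domain
        x += step * upscale(residual, s)     # spread the error back to high res.
    return x
```

After the loop, degrading the result reproduces the low res. input, which is the consistency the bullet refers to.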

Results – Purely repetitive structure Input low res. image

Suggested Approach Bicubic Interpolation

Results – Purely repetitive structure Bicubic interp. Input low res. image In and cross-scale redundancies Only in-scale redundancy High res. detail

Results – TEXT IMAGE Suggested approach Ground Truth Bicubic Interp. Input low res. image Small digits recur only in-scale and cannot be recovered

Results – Natural IMAGE Edge model [Fattal07] Suggested Approach Example-based [Kim08] Bicubic Interp. Input low res. image

Paper evaluation • Reasonable assumption – validated empirically • Very novel – new SR framework, combining two widely used approaches in the SR field • Solution technically sound • Well written, very nice paper! • Many visual results (nice webpage)

Paper evaluation Not fully self-contained – almost no details on: • Subpixel alignment • Weighted classical SR constraints • Back-projection No numerical evaluation (PSNR/SSIM) of the results No code available No details on running time

On Single IMAGE scale-up using sparse-representations R. Zeyde, M. Elad and M. Protter Curves & Surfaces, 2010

Sparse-land Prior • Widely-used signal model with numerous applications • Efficient algorithms for pursuit and dictionary learning [Figure: signal = dictionary × sparse vector with few non-zeros; dimension labels m, n, d]
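As an example of a pursuit algorithm, here is a minimal orthogonal matching pursuit (OMP), the pursuit used later in the reconstruction phase. The function name and signature are ours; production code would normally rely on an optimized library routine.

```python
import numpy as np

def omp(D, y, n_nonzero):
    """Greedy sparse coding: approximate y with at most n_nonzero columns of D."""
    residual = np.asarray(y, dtype=float).copy()
    support = []
    coef = np.zeros(0)
    for _ in range(n_nonzero):
        k = int(np.argmax(np.abs(D.T @ residual)))   # atom most correlated with residual
        if k in support:                              # nothing new to add
            break
        support.append(k)
        # Re-fit all selected coefficients by least squares (the "orthogonal" step).
        coef, *_ = np.linalg.lstsq(D[:, support], y, rcond=None)
        residual = y - D[:, support] @ coef
    x = np.zeros(D.shape[1])
    x[support] = coef
    return x
```

With unit-norm atoms and a sufficiently sparse signal, the returned vector has the non-zeros of the sparse representation in the right places.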

BASIC Assumptions • Sparse-land prior for image patches • Patches in a high-low res. pair have the same sparse representation over a high-low res. dictionary pair [Yang08] & [Zeyde10] Same procedure for all patches Joint training!

Joint Representation - Justification • Each low res. patch is generated from the high res. using the same LSI operator (matrix): • A pair of high-low res. dictionaries with the correct correspondence leads to joint representation of the patches:
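The two formulas for this slide were lost in extraction. A reconstruction of the argument, in notation that is our assumption (p_h, p_l: high and low res. patches, L: the LSI operator as a matrix, A_h, A_l: the dictionary pair, q: the shared sparse vector):

```latex
p_l = L \, p_h ,
\qquad
p_h = A_h \, q \ \ (\|q\|_0 \ \text{small})
\;\;\Longrightarrow\;\;
p_l = L A_h \, q = A_l \, q
\quad \text{with} \quad A_l := L A_h .
```

So if the low res. dictionary is the degraded version of the high res. one, both patches share the same q.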

Research Questions • Pre-processing? • How to train the dictionary pair? • What is the training set? • How to utilize the dictionary pair for SR? • Post-processing? [Yang08] & [Zeyde10]

Pre-processing – Low res.: Low res. image → (1) bicubic interpolation → (2) feature extraction by HPFs
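Feature extraction by HPFs can be illustrated with first- and second-derivative filters in both directions. The specific filters ([1, -1] and [1, -2, 1]/2) and the edge-replication border handling are assumptions for this sketch; the slide does not list the filters.

```python
import numpy as np

def highpass_features(img):
    """Stack of four high-pass responses, each the same size as `img`."""
    x = np.pad(img.astype(float), 1, mode="edge")
    c = x[1:-1, 1:-1]                        # the image itself
    right, left = x[1:-1, 2:], x[1:-1, :-2]  # horizontal neighbors
    down, up = x[2:, 1:-1], x[:-2, 1:-1]     # vertical neighbors
    return np.stack([
        right - c,                           # horizontal first derivative
        down - c,                            # vertical first derivative
        (right - 2 * c + left) / 2,          # horizontal second derivative
        (down - 2 * c + up) / 2,             # vertical second derivative
    ])
```

Patches are then cut from all four response maps at the same location and concatenated into one feature vector per patch.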

Pre-processing – Low Res.: (3) dimensionality reduction via PCA – features for low res. patches are projected onto a reduced PCA basis
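The PCA step can be sketched with an SVD. The mean subtraction and the `energy` threshold used to pick the reduced dimension are assumptions of this illustration, and the function names are ours.

```python
import numpy as np

def pca_basis(features, energy=0.999):
    """Rows of `features` are patch feature vectors. Returns (mean, basis)."""
    mean = features.mean(axis=0)
    _, s, vt = np.linalg.svd(features - mean, full_matrices=False)
    var = s ** 2
    cum = np.cumsum(var) / var.sum()
    # Smallest number of components that keeps the requested share of energy.
    r = min(int(np.searchsorted(cum, energy)) + 1, len(s))
    return mean, vt[:r].T                    # basis has shape (dim, r)

def project(features, mean, basis):
    """Reduced-dimension features used for dictionary training and pursuit."""
    return (features - mean) @ basis
```

Working in the reduced space shortens the vectors handed to dictionary learning and to OMP, which lowers the cost of both.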

Pre-processing – High res.: high res. image − bicubic interpolation of the low res. image (patches are taken from the difference image)

Training Phase 1 2 Training the Dictionary Pair

Training the low res. dictionary 1 K-SVD Dictionary Learning
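K-SVD itself is not reproduced here. The sketch below covers only what we read as step 2 of the training phase: once K-SVD has produced the sparse codes Q of the low res. features, fit the high res. dictionary by least squares so that the same codes reproduce the high res. patches. The slide does not spell this step out, so treat it as an assumption; the names are ours.

```python
import numpy as np

def fit_high_res_dictionary(P_h, Q):
    """Solve min_A ||P_h - A Q||_F for A.

    P_h: (patch_dim, n_patches) high res. patches as columns.
    Q:   (n_atoms, n_patches) sparse codes from the low res. training step.
    """
    # A Q = P_h  is equivalent to  Q^T A^T = P_h^T, a standard least-squares system.
    A_t, *_ = np.linalg.lstsq(Q.T, P_h.T, rcond=None)
    return A_t.T
```

This ties the two dictionaries together through the shared codes, matching the "same sparse representation" assumption stated earlier.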

Which training SET? • External set of true high res. image (off-line training): • Generate low res. image • Collect • The image itself (bootstrapping): • Input low res. image = “high res.” • Proceed as before…

Reconstruction Phase: Low res. image → pre-processing → OMP → image reconstruction → SR image

Image reconstruction No need to perform back-projection!
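The formula for this slide is missing from the transcript. A common way to turn per-patch estimates into an image, and the one assumed in this sketch, is to place each recovered patch at its location and average wherever patches overlap. Function and parameter names are ours.

```python
import numpy as np

def assemble_from_patches(patches, positions, shape):
    """Average overlapping square patches into an image of the given shape.

    patches:   iterable of (p, p) arrays (e.g. recovered high res. patches).
    positions: iterable of (row, col) top-left corners, one per patch.
    """
    acc = np.zeros(shape, dtype=float)
    count = np.zeros(shape, dtype=float)
    for patch, (i, j) in zip(patches, positions):
        p = patch.shape[0]
        acc[i:i + p, j:j + p] += patch
        count[i:i + p, j:j + p] += 1
    return acc / np.maximum(count, 1)        # uncovered pixels stay at zero
```

In the scale-up setting the averaged result would be added to the bicubic-interpolated low res. image, if the patches were trained on the difference image.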

Results – Text Image Suggested Approach Bicubic Interp. Ground Truth Input low res. image Dictionary pair learned off-line from another text image! PSNR=14.68dB PSNR=16.95dB
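For reference, PSNR values like the ones quoted above are computed from the mean squared error against the ground truth. A minimal version, assuming 8-bit images (peak value 255):

```python
import numpy as np

def psnr(reference, estimate, peak=255.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    ref = np.asarray(reference, dtype=float)
    est = np.asarray(estimate, dtype=float)
    mse = np.mean((ref - est) ** 2)
    if mse == 0:
        return float("inf")                  # identical images
    return 10.0 * np.log10(peak ** 2 / mse)
```

Both images must have the same size, so the SR result is compared with the ground-truth high res. image, not with the low res. input.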

Numerical Results – Natural Images Dictionary pair learned off-line from a set of training images!

Visual Results – Natural Images Bicubic Interp. Yang et al. Ground Truth Suggested Approach Artifact

Visual Comparison Suggested On-line Approach Bicubic Interp. Suggested Off-line Approach Glasner et al. Input low res. image Appears in Zeyde et al.

Glasner et al. Doesn’t appear in Zeyde et al. Input low res. image Suggested On-line Approach Suggested Off-line Approach Bicubic Interp.

Paper evaluation • Reasonable assumptions – justified analytically • Novel – similar model as Yang et al., new algorithmic framework, improves runtime and image SR quality • Solution technically sound • Well written and self-contained • Code available • Performance evaluation – both visual and numerical

Paper evaluation Experimental validation – not full: • Comparison to other approaches – main focus on bicubic interp. and Yang et al. • No comparison between on-line and off-line learning of the dictionary pair on the same image • Only “good” results are shown; running the code reveals weaknesses relative to Glasner et al.

Future Directions • Extending the first approach to video: Space-time SR from a single video [Shahar et al., CVPR11] • Merging patch redundancy + sparse representations in the spirit of non-local K-SVD [Mairal et al., ICCV09] • Coarse-to-fine also for the second approach – to improve numerical stability and SR quality

Thank you for your attention!