Graphical Models and Belief Propagation in Learning Algorithms

Presentation Transcript

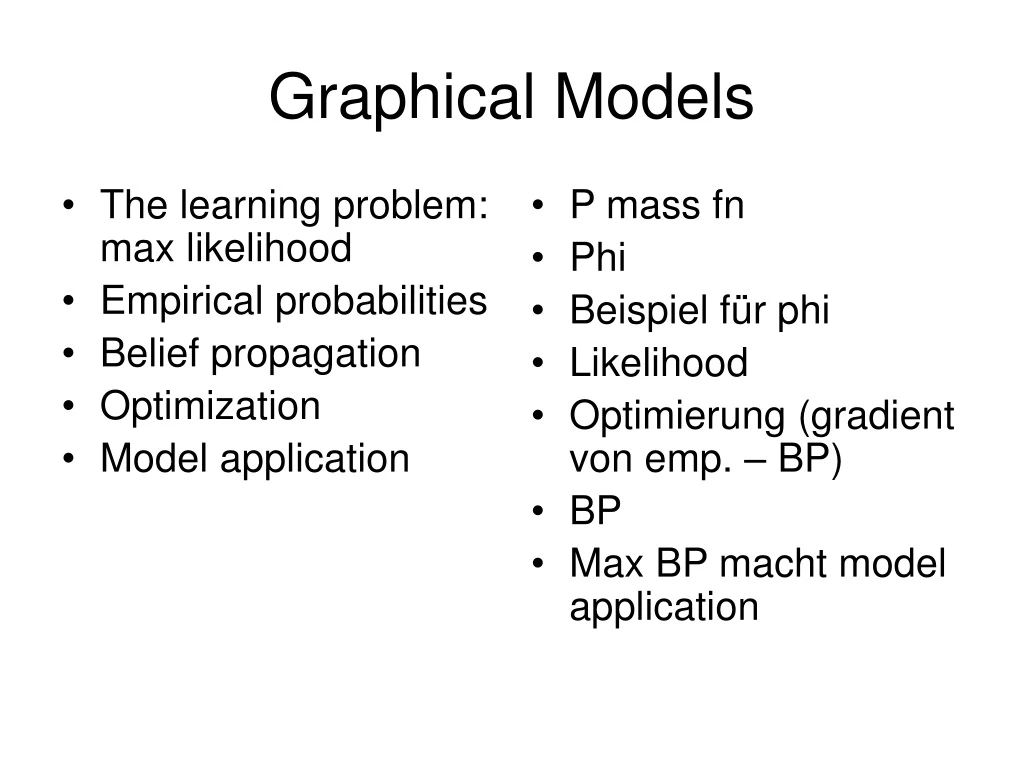

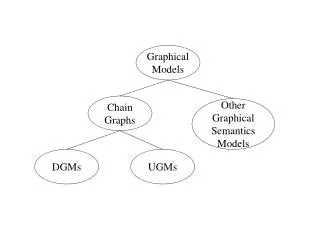

Graphical Models • The learning problem: maximum likelihood • Empirical probabilities • Belief propagation • Optimization • Model application • Probability mass function • Phi • Example for phi • Likelihood • Optimization (gradient: empirical minus BP) • BP • Max BP performs model application
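As a rough sketch of what the likelihood and optimization items in this outline usually refer to, assume an exponential-family model with feature map \phi and parameter vector \theta. This parameterization, and the symbols A, N and \hat{\mu}, are assumptions for illustration; the slide itself only names the ingredients.

$$
p_\theta(x) = \exp\big(\langle \theta, \phi(x) \rangle - A(\theta)\big), \qquad
A(\theta) = \log \sum_{x'} \exp \langle \theta, \phi(x') \rangle
$$

$$
\ell(\theta) = \frac{1}{N} \sum_{i=1}^{N} \langle \theta, \phi(x^{i}) \rangle - A(\theta), \qquad
\nabla \ell(\theta) = \hat{\mu} - \mathbb{E}_{\theta}[\phi(X)], \qquad
\hat{\mu} = \frac{1}{N} \sum_{i=1}^{N} \phi(x^{i})
$$

Under this assumption the gradient is the empirical feature average minus the model's expected features; belief propagation is one way to compute that expectation.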

Given data are subsequences of system calls:
1,open,1812,179,178,201,200,firefox,/etc/hosts,524288,438,7
2,read,1812,179,178,201,200,firefox,/etc/hosts,4096, 361
3,read,1812,179,178,201,200,firefox,/etc/hosts,4096, 0
4,close,1812,179,178,201,200,firefox,/etc/hosts
Fields: timestamp, syscall, thread-id, process-id, parent, user, group, exec, file, parameters (optional)
361 to 0: the file is read completely, up to the end of file
Figure labels: zero transition, zero transition, full transition, state
• firefox-bin/firefox-bin, cookies.sqlite-journal/default/hosts
• ?/firefox-bin, ?/?/cookies.sqlite-journal
• firefox-bin/firefox-bin, ?/cookies.sqlite-journal/default
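The record layout listed above could be parsed along the following lines. This is a minimal sketch: the names `Syscall` and `parse_syscall` are hypothetical, and it assumes that fields are comma-separated and that file paths contain no commas.

```python
from typing import List, NamedTuple


class Syscall(NamedTuple):
    """One system-call record, in the field order given on the slide."""
    timestamp: int
    syscall: str
    thread_id: int
    process_id: int
    parent: int
    user: int
    group: int
    exec_name: str
    file: str
    params: List[str]  # optional trailing parameters, kept as strings


def parse_syscall(line: str) -> Syscall:
    # Assumption: no field contains a comma, so a plain split is enough.
    parts = [p.strip() for p in line.split(",")]
    if len(parts) < 9:
        raise ValueError(f"expected at least 9 fields, got {len(parts)}")
    return Syscall(
        timestamp=int(parts[0]),
        syscall=parts[1],
        thread_id=int(parts[2]),
        process_id=int(parts[3]),
        parent=int(parts[4]),
        user=int(parts[5]),
        group=int(parts[6]),
        exec_name=parts[7],
        file=parts[8],
        params=parts[9:],
    )


record = parse_syscall("2,read,1812,179,178,201,200,firefox,/etc/hosts,4096, 361")
print(record.syscall, record.file, record.params)
```

Keeping the optional parameters as an untyped list avoids guessing their meaning, which differs per system call.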

Graphical Models: Conditional Random Fields
• Random variables X = {X_1, …, X_r}, Y = {Y_1, …, Y_l}, |Y| = l
• dom(X_i) = {x_1^i, …, x_k^i}, |X_i| = k_i
• Sequence length: T
• Parameters: node + transition + prior
• Storage need: d + full examples
• X = {exec, file}
• Y = {full, read, zero}
• |Y| = 3 = l
• dom(exec) = {?/firefox, firefox/firefox, …}, k_exec = 20
• dom(file) = {?/?/cookies, ?/cookies/default, cookies/default/host, …}, k_file = 25
• d = 20*3 + 25*3 + 20*9 + 25*9 + 3 = 60 + 75 + 180 + 225 + 3 = 543
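The parameter count can be checked with a few lines of code. This sketch assumes the reading suggested by the terms on the slide: one node weight per (feature value, label), one transition weight per (feature value, label, next label), and one prior weight per label. The function name `crf_param_count` is hypothetical.

```python
def crf_param_count(domain_sizes, num_labels):
    """Count node, transition and prior parameters under the assumed layout."""
    node = sum(k * num_labels for k in domain_sizes.values())
    transition = sum(k * num_labels ** 2 for k in domain_sizes.values())
    prior = num_labels
    return {
        "node": node,
        "transition": transition,
        "prior": prior,
        "total": node + transition + prior,
    }


counts = crf_param_count({"exec": 20, "file": 25}, num_labels=3)
print(counts)
```

Node and transition weights alone come to 135 + 405 = 540; adding the 3 prior weights gives 543.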

Learning on General-Purpose Graphics Processing Units: CRF
1. Read a batch of B examples, counting frequencies for every instance of the state/transition features f_k. Create a thread for each class and example: B·|Y| threads. Each thread calculates, for this fixed x and y, the messages of belief propagation. This yields:
• Z(x)
• p(y,y'|x)
• p(y|x)
2. Look up the frequencies f_k and use the calculations for fixed x from step 1 to update the model. K threads.
3. Optimization: l(θ) = p(y,x). B threads.
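For a single fixed input x, the belief-propagation messages and the three resulting quantities can be sketched as below. This is a sequential, CPU-only illustration for a linear chain, not the GPU kernel described on the slide. The function name `chain_bp` and the potential tables `node_pot` and `trans_pot` are assumptions. Messages are left unnormalized, so long sequences would need rescaling or log-space arithmetic.

```python
def chain_bp(node_pot, trans_pot):
    """Sum-product on a linear chain.

    node_pot[t][y]   : non-negative potential of label y at position t
                       (already conditioned on the fixed input x)
    trans_pot[y][y2] : non-negative potential of the transition y -> y2
    Returns Z(x), node marginals p(y_t | x), pairwise marginals
    p(y_t, y_{t+1} | x).
    """
    T, L = len(node_pot), len(node_pot[0])

    # Forward messages: alpha[t][y] sums over all label prefixes ending in y.
    alpha = [list(node_pot[0])] + [[0.0] * L for _ in range(T - 1)]
    for t in range(1, T):
        for y in range(L):
            incoming = sum(alpha[t - 1][yp] * trans_pot[yp][y] for yp in range(L))
            alpha[t][y] = node_pot[t][y] * incoming

    # Backward messages: beta[t][y] sums over all label suffixes after y.
    beta = [[1.0] * L for _ in range(T)]
    for t in range(T - 2, -1, -1):
        for y in range(L):
            beta[t][y] = sum(
                trans_pot[y][yn] * node_pot[t + 1][yn] * beta[t + 1][yn]
                for yn in range(L)
            )

    Z = sum(alpha[T - 1])
    node_marg = [[alpha[t][y] * beta[t][y] / Z for y in range(L)] for t in range(T)]
    pair_marg = [
        [
            [
                alpha[t][y] * trans_pot[y][yn] * node_pot[t + 1][yn] * beta[t + 1][yn] / Z
                for yn in range(L)
            ]
            for y in range(L)
        ]
        for t in range(T - 1)
    ]
    return Z, node_marg, pair_marg


# Toy potentials for |Y| = 3 labels and T = 3 positions (made-up numbers).
node_pot = [[1.0, 2.0, 0.5], [0.3, 1.0, 1.5], [2.0, 0.1, 1.0]]
trans_pot = [[1.0, 0.5, 2.0], [0.2, 1.0, 1.0], [1.5, 0.7, 0.3]]
Z, node_marg, pair_marg = chain_bp(node_pot, trans_pot)
print(Z, node_marg[0])
```

Because each label's message update at a position is independent of the others, the inner loop over y is the part that maps naturally onto one thread per class and example.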

Markov Random Fields
• Each node is one random variable: V = {lights, rain, jam}
• X = {red, yellow, green} x {dry, wet} x {jam, free}
• Examples: x1: (red, dry, free), x2: (yellow, dry, free), x3: (green, dry, free), x4: (yellow, dry, jam)
• d = 3 + 2 + 2 + 3*2 + 3*2 + 2*2 = 23 (node states + edge values)
• Random variables V = {X_1, …, X_n}, dom(X_i) = {x_1^i, …, x_k^i}, |X_i| = k_i, X = dom(X_1) x dom(X_2) x … x dom(X_n)
• Examples: x in X, f: X → {0,1}^d
• Parameters for pairwise MRF: nodes + edges
Graph nodes: Lights {red, yellow, green}, Rain {dry, wet}, Jam {jam, free}

f: Nodes, Edges
f(x): (
/* Nodes */
• red, yellow, green, /* dom(lights) */
• wet, dry, /* dom(rain) */
• jam, free, /* dom(jam) */
/* Edges */
• red, dry; yellow, dry; green, dry; red, wet; yellow, wet; green, wet; /* edge lights-rain */
• red, jam; yellow, jam; green, jam; red, free; yellow, free; green, free; /* edge lights-jam */
• dry, free; dry, jam; wet, free; wet, jam /* edge rain-jam */
)
• x1: (red, dry, free)
• f(x1) = (1,0,0,0,1,0,1,1,0,0,0,0,0,0,0,0,1,0,0,1,0,0,0)
• d = 23
Graph nodes: Lights {red, yellow, green}, Rain {dry, wet}, Jam {jam, free}
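The feature map above can be reproduced with a short sketch. The component order follows the slide exactly; the names `feature_names` and `feature_vector` are hypothetical helpers introduced for illustration.

```python
LIGHTS = ["red", "yellow", "green"]


def feature_names():
    """List the 23 indicator features in the order given on the slide."""
    # Node features: dom(lights), dom(rain), dom(jam).
    names = [(v,) for v in LIGHTS + ["wet", "dry"] + ["jam", "free"]]
    # Edge lights-rain: all lights with dry, then all lights with wet.
    names += [(l, r) for r in ["dry", "wet"] for l in LIGHTS]
    # Edge lights-jam: all lights with jam, then all lights with free.
    names += [(l, j) for j in ["jam", "free"] for l in LIGHTS]
    # Edge rain-jam: dry with free/jam, then wet with free/jam.
    names += [(r, j) for r in ["dry", "wet"] for j in ["free", "jam"]]
    return names


def feature_vector(x):
    """Map x = (light, rain, jam) to its binary vector in {0,1}^23."""
    light, rain, jam = x
    active = {(light,), (rain,), (jam,), (light, rain), (light, jam), (rain, jam)}
    return [1 if name in active else 0 for name in feature_names()]


x1 = ("red", "dry", "free")
print(len(feature_names()))
print(feature_vector(x1))
```

Every example activates exactly six components, one per node and one per edge, regardless of which states it takes.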