Classification

Classification and Its Application in Remote Sensing

Overview
• Introduction to the classification problem
• An application of classification in remote sensing: vegetation classification
• Band selection
• Multi-class classification

Introduction
• Goal: write a program that automatically recognizes handwritten numbers

Introduction: the classification problem
• From raw data to decisions
• Learn from examples and generalize
• Given: training examples (x, f(x)) for some unknown function f
• Find: a good approximation to f

Examples
• Handwriting recognition
  • x: data from pen motion
  • f(x): letter of the alphabet
• Disease diagnosis
  • x: properties of the patient (symptoms, lab tests)
  • f(x): disease (or, possibly, the recommended therapy)
• Face recognition
  • x: bitmap picture of the person’s face
  • f(x): name of the person
• Spam detection
  • x: email message
  • f(x): spam or not spam

Steps for building a classifier
• Data acquisition / labeling (ground truth)
• Preprocessing
• Feature selection / feature extraction
• Classification (learning/testing)
• Post-processing
• Decision

Data acquisition
• Acquire the data and label them
• The data are sampled independently at random from an unknown distribution P(x, y)

Pre-processing
• E.g. image processing:
  • histogram equalization
  • filtering
  • segmentation
• Data normalization
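Data normalization is often done per feature with a z-score. The following is a minimal sketch assuming features are stored as columns of a NumPy array; the helper names `fit_normalizer` and `normalize` are hypothetical. The statistics are computed on the training data only, so new data can be transformed in exactly the same way.

```python
import numpy as np

def fit_normalizer(X_train):
    """Return per-feature mean and standard deviation computed on training data only."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0)
    std[std == 0] = 1.0  # avoid division by zero for constant features
    return mean, std

def normalize(X, mean, std):
    """Z-score normalization: zero mean and unit variance per feature."""
    return (X - mean) / std

# Hypothetical training data: two features on very different scales
X_train = np.array([[1.0, 200.0], [2.0, 220.0], [3.0, 240.0]])
mean, std = fit_normalizer(X_train)
Z = normalize(X_train, mean, std)
```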

Feature selection/extraction
• This is generally the most important step
• It conveys the information in the data to the classifier
• The number of features:
  • should be high: more information is better
  • should be low: curse of dimensionality
• Will include prior knowledge of the problem
• In part manual, in part automatic

Feature selection/extraction
• User knowledge
• Automatic:
  • PCA: reduce the number of features by decorrelation
  • look for the features that give the best classification result
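PCA can be sketched in a few lines of NumPy: center the data, eigendecompose the covariance matrix, and project onto the eigenvectors with the largest eigenvalues. The function name `pca` and the synthetic data are illustrative assumptions; the projected components are uncorrelated by construction.

```python
import numpy as np

def pca(X, n_components):
    """Project X (n_samples x n_features) onto its top principal components."""
    Xc = X - X.mean(axis=0)                    # center each feature
    cov = np.cov(Xc, rowvar=False)             # feature covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)     # eigh: ascending eigenvalues of a symmetric matrix
    order = np.argsort(eigvals)[::-1]          # sort by decreasing variance
    W = eigvecs[:, order[:n_components]]       # keep the leading eigenvectors
    return Xc @ W, eigvals[order]

rng = np.random.default_rng(0)
t = rng.normal(size=200)
# Hypothetical data: two strongly correlated features plus one independent feature
X = np.column_stack([t, 2 * t + 0.01 * rng.normal(size=200), rng.normal(size=200)])
Y, variances = pca(X, n_components=2)
```

Here the first two raw features are nearly redundant, so most of their variance ends up in a single component.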

Figure: feature scatterplot, K = 3 (classes A, B, C); axes: value of feature 1 vs. value of feature 2.

Classification
• Learn from the features and generalize
• The learning algorithm analyzes the examples and produces a classifier f
• Given a new data point (x, y), the classifier is given x and predicts ŷ = f(x)
• The loss L(ŷ, y) is then measured
• Goal of the learning algorithm: find the f that minimizes the expected loss
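Written as a formula (a standard formulation, not taken from the slide), the goal is the minimizer of the expected loss under the unknown distribution P(x, y). Because P(x, y) is unknown, this is commonly approximated by the average loss over the n training examples; with the 0-1 loss, the expected loss is simply the probability of misclassification.

```latex
f^{*} = \arg\min_{f} \; \mathbb{E}_{(x,y) \sim P(x,y)} \big[ L(f(x), y) \big]
\qquad\text{approximated by}\qquad
\arg\min_{f} \; \frac{1}{n} \sum_{k=1}^{n} L\big(f(x_k), y_k\big)
```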

Classification: Bayesian decision theory
• Fundamental statistical approach to the problem of pattern classification
• Assumes that the decision problem is posed in probabilistic terms
• Classify using the posterior probability P(y|x): maximum a posteriori (MAP) classification
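The MAP rule follows from Bayes' rule. The evidence p(x) does not depend on y, so it can be dropped when comparing classes; what remains is the product of the class-conditional density p(x|y) and the prior p(y).

```latex
P(y \mid x) = \frac{p(x \mid y)\, p(y)}{p(x)}, \qquad p(x) = \sum_{y'} p(x \mid y')\, p(y')

\hat{y}_{\mathrm{MAP}} = \arg\max_{y} P(y \mid x) = \arg\max_{y} \; p(x \mid y)\, p(y)
```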

Classification
• Need to estimate p(y) and p(x|y), the prior and the class-conditional probability density, using only the data: density estimation
• Often not feasible: too little data in too high-dimensional a space. Alternatives:
  • assume a simple parametric probability model (normal)
  • use a non-parametric estimate
  • directly find a discriminant function
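The parametric option (normal class-conditional densities) can be sketched as follows. This is a minimal illustration, not the presentation's implementation: the class name `GaussianBayesClassifier`, the full-covariance estimate, and the small ridge added to each covariance are assumptions. It estimates p(y) from class frequencies and p(x|y) as a multivariate normal, then picks the class with the largest log p(x|y) + log p(y).

```python
import numpy as np

class GaussianBayesClassifier:
    """Hypothetical MAP classifier with a normal class-conditional density per class."""

    def fit(self, X, y):
        self.classes_ = np.unique(y)
        self.log_priors_, self.means_, self.covs_ = [], [], []
        for c in self.classes_:
            Xc = X[y == c]
            self.log_priors_.append(np.log(len(Xc) / len(X)))  # estimate of p(y)
            self.means_.append(Xc.mean(axis=0))                # mean of p(x|y)
            # small ridge keeps the covariance invertible with scarce data (assumption)
            self.covs_.append(np.cov(Xc, rowvar=False) + 1e-6 * np.eye(X.shape[1]))
        return self

    def _log_likelihood(self, X, mean, cov):
        """log N(x; mean, cov) for every row of X."""
        d = X - mean
        _, logdet = np.linalg.slogdet(cov)
        maha = np.einsum("ij,ij->i", d @ np.linalg.inv(cov), d)  # squared Mahalanobis distance
        return -0.5 * (maha + logdet + X.shape[1] * np.log(2 * np.pi))

    def predict(self, X):
        # log p(x|y) + log p(y) per class; the argmax is the MAP class
        scores = np.column_stack([
            self._log_likelihood(X, m, S) + lp
            for m, S, lp in zip(self.means_, self.covs_, self.log_priors_)
        ])
        return self.classes_[np.argmax(scores, axis=1)]

rng = np.random.default_rng(1)
# Hypothetical two-class data centred at (0, 0) and (5, 5)
X = np.vstack([rng.normal([0, 0], 1.0, size=(100, 2)), rng.normal([5, 5], 1.0, size=(100, 2))])
y = np.array([0] * 100 + [1] * 100)
clf = GaussianBayesClassifier().fit(X, y)
pred = clf.predict(np.array([[0.0, 0.0], [5.0, 5.0]]))
```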

Post-processing
• Include context, e.g. in images and signals
• Integrate multiple classifiers

Decision
• Minimize risk, taking the cost of misclassification into account: when unsure, select the class with the minimal expected cost of error
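The minimum-risk rule can be sketched as follows, assuming a cost matrix where `cost[i, j]` is the cost of deciding class i when the true class is j; the function name and the numbers are hypothetical. With 0-1 costs this reduces to the MAP rule.

```python
import numpy as np

def min_risk_decision(posteriors, cost):
    """Pick the decision with minimal conditional risk.

    posteriors: P(class j | x), shape (K,)
    cost[i, j]: cost of deciding class i when the true class is j
    """
    risk = cost @ posteriors  # R(i | x) = sum_j cost[i, j] * P(j | x)
    return int(np.argmin(risk)), risk

# Hypothetical two-class example: missing class 1 is 10x worse than a false alarm
posteriors = np.array([0.7, 0.3])
cost = np.array([[0.0, 10.0],
                 [1.0, 0.0]])
decision, risk = min_risk_decision(posteriors, cost)
```

Here class 0 is more probable (0.7), but its conditional risk is 3.0 against 0.7 for class 1, so the rule picks class 1.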

No free lunch theorem
• Averaged over all possible problems, no classifier outperforms any other, so don’t wait for a “generic” best classifier!

Remote Sensing: acquisition
• Images are acquired from the air or from space

Figure: hyperspectral images of Westhoek and Brugge. Hyperspectral sensor: AISA Eagle (July 2004), 400-900 nm at 1 m resolution.

Feature extraction
• Here: exploratory use, i.e. automatically look for relevant features
• Which spectral bands (wavelengths) should be measured, and at which spectral resolution (bandwidth), for a given application?
• The results can be used for classification, sensor design or interpretation

Feature extraction: Band Selection With spectral response function:
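The spectral response function itself is not reproduced here. As an assumption for illustration, the sketch below models each band as a Gaussian response with a center wavelength and a width (FWHM), and simulates the band value as the response-weighted average of a finely sampled spectrum. Band selection then amounts to choosing these centers and widths; `band_response` is a hypothetical name.

```python
import numpy as np

def band_response(wavelengths, spectrum, center, fwhm):
    """Simulate one broad band from a finely sampled spectrum.

    Assumes (hypothetically) a Gaussian spectral response function with the given
    center wavelength and full width at half maximum (fwhm), both in nm.
    """
    sigma = fwhm / (2.0 * np.sqrt(2.0 * np.log(2.0)))  # convert FWHM to standard deviation
    weights = np.exp(-0.5 * ((wavelengths - center) / sigma) ** 2)
    return np.sum(weights * spectrum) / np.sum(weights)  # response-weighted average

wl = np.arange(400.0, 901.0, 5.0)   # 400-900 nm grid, matching the sensor range
flat = np.full(wl.shape, 0.25)      # hypothetical flat reflectance spectrum
b = band_response(wl, flat, center=650.0, fwhm=40.0)
```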

Class separation criterion
• Two-class: Bhattacharyya bound
• Multi-class criterion
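For two normal classes the Bhattacharyya bound is P_err ≤ sqrt(P1 P2) exp(-B), with B the Bhattacharyya distance. The sketch below computes it with NumPy. Summing the pairwise bounds is used as the multi-class criterion only as an assumption, since the slide's exact criterion is not reproduced here; it is one common choice and is itself an upper bound on the multi-class Bayes error.

```python
import numpy as np
from itertools import combinations

def bhattacharyya_distance(m1, S1, m2, S2):
    """Bhattacharyya distance B between two multivariate normal densities."""
    S = 0.5 * (S1 + S2)
    d = m2 - m1
    term1 = 0.125 * d @ np.linalg.solve(S, d)  # mean-separation term
    _, logdet_S = np.linalg.slogdet(S)
    _, logdet_1 = np.linalg.slogdet(S1)
    _, logdet_2 = np.linalg.slogdet(S2)
    term2 = 0.5 * (logdet_S - 0.5 * (logdet_1 + logdet_2))  # covariance-difference term
    return term1 + term2

def bhattacharyya_bound(p1, m1, S1, p2, m2, S2):
    """Upper bound on the two-class Bayes error: sqrt(P1 P2) * exp(-B)."""
    return np.sqrt(p1 * p2) * np.exp(-bhattacharyya_distance(m1, S1, m2, S2))

def multiclass_criterion(priors, means, covs):
    """Hypothetical multi-class criterion: sum of pairwise Bhattacharyya bounds."""
    return sum(
        bhattacharyya_bound(priors[i], means[i], covs[i], priors[j], means[j], covs[j])
        for i, j in combinations(range(len(priors)), 2)
    )

I = np.eye(2)
m0, m1 = np.zeros(2), np.array([2.0, 0.0])
bound = bhattacharyya_bound(0.5, m0, I, 0.5, m1, I)
```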

Optimization
• Minimize the class separation criterion
• Gradient descent is possible, but it can get stuck in local minima and then fails to find good values
• Therefore, we use a global optimization method: simulated annealing
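A basic simulated annealing loop is sketched below. The perturbation scheme (one Gaussian step on one coordinate), the geometric cooling schedule and all parameter values are assumptions; in band selection, `x` would hold the band parameters and `objective` the class separation criterion. The function and objective names are hypothetical.

```python
import math
import random

def simulated_annealing(objective, x0, step, t0=1.0, cooling=0.995, n_iter=5000, seed=0):
    """Minimize objective(x) over a list of floats with basic simulated annealing."""
    rng = random.Random(seed)
    x, fx = list(x0), objective(x0)
    best_x, best_f = list(x), fx
    t = t0
    for _ in range(n_iter):
        # propose a random perturbation of one coordinate
        cand = list(x)
        k = rng.randrange(len(cand))
        cand[k] += rng.gauss(0.0, step)
        fc = objective(cand)
        # always accept improvements; accept worse moves with probability exp(-delta / t)
        if fc < fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = cand, fc
            if fx < best_f:
                best_x, best_f = list(x), fx
        t *= cooling  # geometric cooling schedule (assumption)
    return best_x, best_f

def rugged(x):
    # hypothetical 1-D objective with many local minima; global minimum at x = 0
    return x[0] ** 2 + 10.0 * (1.0 - math.cos(2.0 * math.pi * x[0]))

best_x, best_f = simulated_annealing(rugged, [3.0], step=0.5, t0=10.0)
```

Accepting some uphill moves while the temperature is high is what lets the search leave a local minimum, which plain gradient descent cannot do.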

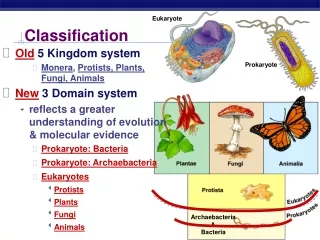

Multi-class Classification
• Linear multi-class classifier
• Combining binary classifiers
  • One against all: K classifiers (one per class)
  • One against one: K(K-1)/2 classifiers

Figure: combining linear multi-class classifiers, K = 3 (classes A, B, C) with pairwise boundaries AB, AC and BC.

Combining Binary Classifiers
• Maximum voting: 4-class example
  Class 1: 0 votes
  Class 2: 2 votes
  Class 3: 1 vote
  Class 4: 3 votes (winner)
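Maximum voting over one-against-one classifiers can be sketched as follows. The `outcomes` table is hypothetical, chosen so that the K(K-1)/2 = 6 pairwise decisions reproduce the vote counts of the 4-class example; ties are broken by class order, which is an assumption.

```python
from collections import Counter
from itertools import combinations

def max_voting(classes, pairwise_winner):
    """One-against-one combination: each binary classifier casts one vote."""
    votes = Counter({c: 0 for c in classes})
    for i, j in combinations(classes, 2):
        votes[pairwise_winner(i, j)] += 1
    winner = max(classes, key=lambda c: votes[c])  # first class wins a tie
    return winner, dict(votes)

# Hypothetical decisions of the 6 binary classifiers for one pixel
outcomes = {(1, 2): 2, (1, 3): 3, (1, 4): 4, (2, 3): 2, (2, 4): 4, (3, 4): 4}
winner, votes = max_voting([1, 2, 3, 4], lambda i, j: outcomes[(i, j)])
```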

Problem with max voting
• No probabilities, just class labels
• Hard classification
• Probabilities are useful for
  • spectral unmixing
  • post-processing

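Combining Binary Classifiers: Coupling Probabilities
• Look for class probabilities $p_i$ such that $r_{ij} \approx \mu_{ij} = p_i / (p_i + p_j)$, with $r_{ij}$ the probability of class $\omega_i$ given by the binary classifier i-j
• Only K-1 free parameters (the $p_i$ sum to 1) but K(K-1)/2 constraints, so in general no exact solution exists
• Hastie and Tibshirani: find approximations
  • by minimizing the Kullback-Leibler distance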
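The iterative scheme from Hastie and Tibshirani's pairwise coupling can be sketched as below, assuming equal pair weights (n_ij = 1) and cyclic updates p_i ← p_i Σ_j r_ij / Σ_j μ_ij, each followed by renormalization. `couple_pairwise` is a hypothetical name. When the r_ij are exactly consistent with some p, the iteration recovers it; otherwise it settles on the compromise that minimizes the Kullback-Leibler distance.

```python
import numpy as np

def couple_pairwise(R, n_iter=1000, tol=1e-10):
    """Pairwise coupling with equal weights n_ij = 1.

    R[i, j] = r_ij, probability of class i from the binary classifier i-j,
    with R[j, i] = 1 - R[i, j]; the diagonal is ignored.
    Returns class probabilities p (summing to 1).
    """
    K = R.shape[0]
    p = np.full(K, 1.0 / K)  # start from uniform probabilities
    for _ in range(n_iter):
        p_old = p.copy()
        for i in range(K):
            others = [j for j in range(K) if j != i]
            mu_i = p[i] / (p[i] + p[others])          # current model mu_ij = p_i / (p_i + p_j)
            p[i] *= R[i, others].sum() / mu_i.sum()   # multiplicative update for p_i
            p /= p.sum()                              # renormalize
        if np.max(np.abs(p - p_old)) < tol:
            break
    return p

# Hypothetical consistent pairwise probabilities built from known class probabilities
p_true = np.array([0.5, 0.3, 0.2])
R = p_true[:, None] / (p_true[:, None] + p_true[None, :])
p = couple_pairwise(R)
```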

Remote Sensing: post-processing
• Use contextual information to “adjust” the classification
• Look at the classes and probabilities of neighboring pixels and, if necessary, adjust the pixel’s class
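One simple way to use context, sketched here as an assumption rather than the presentation's method, is to average each pixel's class probabilities over a small window and relabel the pixel by the largest averaged probability. `smooth_class_probabilities` is a hypothetical name; image borders are handled by edge replication.

```python
import numpy as np

def smooth_class_probabilities(prob, size=3):
    """Average class probabilities over a size x size window, then relabel.

    prob: array of shape (rows, cols, K) with per-pixel class probabilities.
    Returns the adjusted label map of shape (rows, cols).
    """
    r = size // 2
    padded = np.pad(prob, ((r, r), (r, r), (0, 0)), mode="edge")  # replicate border pixels
    rows, cols, _ = prob.shape
    acc = np.zeros_like(prob, dtype=float)
    for dy in range(size):
        for dx in range(size):
            acc += padded[dy:dy + rows, dx:dx + cols, :]  # add each shifted neighbor
    return np.argmax(acc / (size * size), axis=2)

# Hypothetical 3x3 image: class 0 everywhere except one noisy center pixel
prob = np.tile(np.array([0.9, 0.1]), (3, 3, 1))
prob[1, 1] = [0.4, 0.6]
labels = smooth_class_probabilities(prob)
```

On its own the center pixel would be labeled class 1; after averaging with its eight class-0 neighbors it is relabeled class 0.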