Chapter 6

Chapter 6 Probability: Understanding Random Situations © Andrew F. Siegel, 2003

Introduction • The study of Uncertainty • Changes “I’m not sure …” to “I’m positive we’ll succeed … with probability 0.8” • Can’t predict “for sure” what will happen next • But can quantify the likelihood of what might happen • And can predict percentages well over the long run • e.g., a 60% chance of rain • e.g., success/failure of a new business venture • New terminology (words and concepts) • Keep as much as possible certain (not random) • Put the randomness in only at the last minute

Terminology • Random Experiment • A procedure that produces an outcome • Not perfectly predictable in advance • There are many random experiments (situations) • We will study them one at a time • Example: Record the income of a random family • Random telephone dialing in a target marketing area, repeat until success (income obtained), round to the nearest $1,000 • Sample Space • A list of all possible outcomes • Each random experiment has exactly one sample space (one list) • Example: {0, 1,000, 2,000, 3,000, 4,000, …}

Terminology (continued) • Event • Happens or not, each time random experiment is run • Formally: a collection of outcomes from the sample space • A “yes or no” situation: if the outcome is in the list, the event “happens” • Each random experiment has many different events of interest • Example: the event “Low Income” ($15,000 or less) • The list of outcomes is {0, 1,000, 2,000, …, 14,000, 15,000} • Example: the event “Six Figures” • The list of outcomes is {100,000, 101,000, 102,000, …, 999,000} • Example: the event “Ten to Forty Thousand” • The list of outcomes is {10,000, 11,000, …, 39,000, 40,000}
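The idea of an event as a list of outcomes can be sketched with Python sets. This is only an illustration: it assumes incomes are rounded to the nearest $1,000 as in the sample-space example, and the $1,000,000 cap and the helper name `happens` are assumptions made here to keep the example finite and readable.

```python
# Sample space: incomes rounded to the nearest $1,000.
# The $1,000,000 cap is an assumption, used only to keep the list finite.
sample_space = set(range(0, 1_000_001, 1_000))

# An event is a collection of outcomes taken from the sample space.
low_income = {x for x in sample_space if x <= 15_000}
six_figures = {x for x in sample_space if 100_000 <= x <= 999_999}
ten_to_forty = {x for x in sample_space if 10_000 <= x <= 40_000}

def happens(event, outcome):
    """The event 'happens' when the observed outcome is in its list."""
    return outcome in event

observed = 12_000  # one hypothetical run of the random experiment
print(happens(low_income, observed))    # True
print(happens(six_figures, observed))   # False
print(happens(ten_to_forty, observed))  # True
```

One outcome can make several events happen at once: here 12,000 belongs to both “Low Income” and “Ten to Forty Thousand”.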

Terminology (continued) • Probability of an Event • A number between 0 (never happens) and 1 (always happens) • The likelihood of occurrence of an event • Each random experiment has many probability numbers • One probability number for each event • Example: Probability of event “Low Income” is 0.17 • Occurs about 17% of the time over the long run, but unpredictable each time • Example: Probability of event “Six Figures” is 0.08 • Not very likely, but reasonably possible • Example: Probability of “10 to 40 thousand” is 0.55 • A little more likely to occur than not

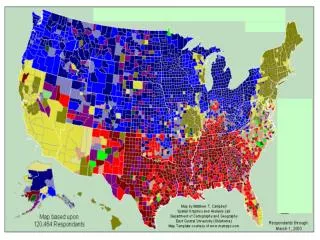

Sources of Probabilities • Relative Frequency • From data • What percent of the time the event happened in the past • Theoretical Probability • From mathematical theory • Make assumptions, draw conclusions • Subjective Probability • Anyone’s opinion, perhaps even without data or theory • Bayesian analysis uses subjective probability with data

Relative Frequency • From data. Run random experiment n times • See how often an event happened • (Relative Frequency of A) = (# of times A happened)/n • e.g., of 12 flights, 9 were on time • Relative frequency of the event “on time” is 9/12 = 0.75 • Law of Large Numbers • If n is large, then the relative frequency will be close to the probability of an event • Probability is FIXED. Relative frequency is RANDOM • e.g., toss coin 20 times. Probability of “heads” is 0.5 • Relative frequency might be 12/20 = 0.6, or 9/20 = 0.45, depending on how the tosses turn out

Relative Frequency (continued) • Suppose event has probability 0.25 • In n = 5 runs of random experiment • Event happens: no, yes, no, no, yes • Relative frequency is 2/5 = 0.4 • Graph of relative frequencies for n = 1 to 5 [Graph: relative frequency, from 0.0 to 0.5, plotted against the number n of times the random experiment was run, from 1 to 5]
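The points on that graph can be recomputed directly from the five recorded runs. The sketch below is illustrative only; the function name `running_relative_frequency` is an assumption, not something from the slides.

```python
def running_relative_frequency(happened):
    """Relative frequency of the event after each of the first n runs."""
    count = 0
    result = []
    for n, yes in enumerate(happened, start=1):
        count += yes              # True counts as 1, False as 0
        result.append(count / n)  # (# of times event happened) / n
    return result

runs = [False, True, False, False, True]  # no, yes, no, no, yes
freqs = running_relative_frequency(runs)
print([round(f, 2) for f in freqs])  # [0.0, 0.5, 0.33, 0.25, 0.4]
```

The last value, 2/5 = 0.4, is the relative frequency quoted on the slide; the earlier values are the rest of the plotted points.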

Relative Frequency (continued) • As n gets larger • Relative frequency gets closer to probability • Graph of relative frequencies for n = 1 to 200 • Relative frequency approaches the probability [Graph: relative frequency, from 0 to 0.5, plotted against the number n of times the random experiment was run, from 0 to 200, with a reference line at Probability = 0.25]
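A small simulation can illustrate the same behavior. This is a sketch under assumptions: the event is simulated with Python's pseudo-random generator, the seed is arbitrary, and the function name is hypothetical. The exact numbers printed depend on the seed, so none are quoted here.

```python
import random

def simulate_relative_frequency(p, n, seed=0):
    """Run a simulated random experiment n times; the event has probability p."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = sum(rng.random() < p for _ in range(n))
    return hits / n

# Probability is fixed at 0.25; the relative frequency is random
# and tends to settle near 0.25 as n grows.
for n in (5, 200, 100_000):
    print(n, simulate_relative_frequency(0.25, n))
```

Changing the seed changes every relative frequency, but not the probability, which is the distinction between FIXED and RANDOM made earlier.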

Relative Frequency (continued) • About how far from the probability will the relative frequency be? • The random relative frequency will be about one of its standard deviations away from the (fixed) probability • Depends upon the probability and n • Farther apart when more uncertainty (probability near 0.5) • e.g., relative frequency will be within about 0.09 of the probability, if n = 25 and probability is 0.75

Table 6.3.1: About how far the relative frequency will be from the probability

| n | Probability 0.50 | Probability 0.25 or 0.75 | Probability 0.10 or 0.90 |
| --- | --- | --- | --- |
| 10 | 0.16 | 0.14 | 0.09 |
| 25 | 0.10 | 0.09 | 0.06 |
| 50 | 0.07 | 0.06 | 0.04 |
| 100 | 0.05 | 0.04 | 0.03 |
| 1,000 | 0.02 | 0.01 | 0.01 |
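The entries of Table 6.3.1 can be reproduced with the standard formula for the standard deviation of a relative frequency, sqrt(p(1 − p)/n). The slides do not state this formula; it is supplied here as the usual binomial result, and the function name is an assumption.

```python
import math

def relative_frequency_sd(p, n):
    """Standard deviation of the relative frequency for probability p and n runs."""
    return math.sqrt(p * (1 - p) / n)

# One row per n; columns are probability 0.50, 0.25 (or 0.75), 0.10 (or 0.90).
for n in (10, 25, 50, 100, 1000):
    row = [round(relative_frequency_sd(p, n), 2) for p in (0.50, 0.25, 0.10)]
    print(n, row)

# The example from the slide: n = 25 and probability 0.75
print(round(relative_frequency_sd(0.75, 25), 2))  # 0.09
```

Because p(1 − p) is the same for p and 1 − p, a probability of 0.25 and a probability of 0.75 share a column, and the product is largest at p = 0.5, which is why that column has the largest values.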

Theoretical Probability • From mathematical theory • One example: The Equally Likely Rule • If all N possible outcomes in the sample space are equally likely, then the probability of any event A is • Prob(A) = (# of outcomes in A) / N • Note: this probability is not a random number. The probability is based on the entire sample space • e.g., Suppose there are 35 defects in a production lot of 400. Choose item at random. Prob(defective) = 35/400 = 0.0875 • e.g., Toss coin. Prob(heads) = 1/2 • But: Tomorrow it may snow or not. Prob(snow) ≠ 1/2 • because “snow” and “not snow” are not equally likely
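The Equally Likely Rule is a single division, sketched below with exact fractions. The function name is an assumption, and the rule applies only when every outcome really is equally likely.

```python
from fractions import Fraction

def equally_likely_prob(outcomes_in_event, total_outcomes):
    """Prob(A) = (# of outcomes in A) / N, valid only for equally likely outcomes."""
    return Fraction(outcomes_in_event, total_outcomes)

p_defective = equally_likely_prob(35, 400)  # 35 defects in a lot of 400
print(p_defective, float(p_defective))      # 7/80 0.0875

p_heads = equally_likely_prob(1, 2)         # one of two equally likely sides
print(p_heads)                              # 1/2
```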

Subjective Probability • Anyone’s opinion • What do you think the chances are that the U.S. economy will have steady expansion in the near future? • An economist’s answer • Bayesian analysis • Combines subjective probability with data to get results • Non-Bayesian “Frequentist” analysis computes using only the data • But subjective opinions (prior beliefs) can still play a background role, even when they are not introduced as numbers into a calculation, when they influence the choice of data and the methodology (model) used

Bayesian and Nonbayesian Analysis (Fig 6.3.3) • Bayesian Analysis • Data, Prior Probabilities, and Model go into the Bayesian Analysis, which produces Results • Frequentist (non-Bayesian) Analysis • Data and Model go into the Frequentist Analysis, which produces Results • Prior Beliefs stay in the background, influencing the choice of Data and Model

Combining Events • Complement of the event A • Happens whenever A does not happen • Union of events A and B • Happens whenever either A or B or both events happen • Intersection of A and B • Happens whenever both A and B happen • Conditional Probability of A Given B • The updated probability of A, possibly changed to reflect the fact that B happens

Complement of an Event • The event “not A” happens whenever A does not • Venn diagram: A (in circle), “not A” (shaded) • Prob(not A) = 1 – Prob(A) • If Prob(Succeed) = 0.7, then Prob(Fail) = 1 – 0.7 = 0.3

Union of Two Events • Union happens whenever either (or both) happen • Venn diagram: Union “A or B” (shaded) • e.g., A = “get Intel job offer”, B = “get GM job offer” • Did the union happen? Congratulations! You have a job • e.g., Did I have eggs or cereal for breakfast? “Yes”

Intersection of Two Events • Intersection happens whenever both events happen • Venn diagram: Intersection “A and B” (shaded) • e.g., A = “sign contract”, B = “get financing” • Did the intersection happen? Great! Project has been launched! • e.g., Did I have eggs and cereal for breakfast? “No”

Relationship Between “and” and “or” • Prob(A or B) = Prob(A) + Prob(B) – Prob(A and B) • Prob(A and B) = Prob(A) + Prob(B) – Prob(A or B) • Example: Customer purchases at appliance store • Prob(Washer) = 0.20 • Prob(Dryer) = 0.25 • Prob(Washer and Dryer) = 0.15 • Then we must have • Prob(Washer or Dryer) = 0.20 + 0.25 – 0.15 = 0.30
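The two formulas are mirror images of each other, which a short sketch makes concrete. The function names `prob_or` and `prob_and` are assumptions chosen for this illustration.

```python
def prob_or(p_a, p_b, p_a_and_b):
    """Prob(A or B) = Prob(A) + Prob(B) - Prob(A and B)."""
    return p_a + p_b - p_a_and_b

def prob_and(p_a, p_b, p_a_or_b):
    """Prob(A and B) = Prob(A) + Prob(B) - Prob(A or B)."""
    return p_a + p_b - p_a_or_b

# Appliance store example: Washer = 0.20, Dryer = 0.25, both = 0.15
p_washer_or_dryer = prob_or(0.20, 0.25, 0.15)
print(round(p_washer_or_dryer, 2))                          # 0.3

# Going the other way recovers the intersection.
print(round(prob_and(0.20, 0.25, p_washer_or_dryer), 2))    # 0.15
```

Subtracting Prob(A and B) corrects for the customers who bought both items, who would otherwise be counted twice.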

Conditional Probability • Examples • Prob(Win given Ahead at halftime) • Higher than Prob(Win) evaluated before the game began • Prob(Succeed given Good results in test market) • Higher than Prob(Succeed) evaluated before marketing study • Prob(Get job given Poor interview) • Lower than Prob(Get job given Good interview) • Prob(Have AIDS given Test positive) • Higher than Prob(Have AIDS) for the population-at-large

Conditional Probability (continued) • Given the extra information that B happens for sure, how must you change the probability for A to correctly reflect this new knowledge? • Prob(A given B) = Prob(A and B) / Prob(B) • This is a (conditional) probability about A • The event B gives information • Unconditional • The probability of A • Conditional • A new universe, since B must happen [Venn diagrams: unconditional, showing A and B; conditional, showing only B with “A and B” inside it]

Conditional Probability (continued) • Key words that may suggest conditional probability • By restricting attention to a particular situation where some condition holds (the given information) • Given … • Of those … • If … • When … • Within (this group) … • …

Conditional Probability (continued) • Example: appliance store purchases • Prob(Washer) = 0.20 • Prob(Dryer) = 0.25 • Prob(Washer and Dryer) = 0.15 • Conditional probability of buying a Dryer given that they bought a Washer • Prob(Dryer given Washer) • = Prob(Washer and Dryer)/Prob(Washer) = 0.15/0.20 = 0.75 • 75% of those buying a washer also bought a dryer • Conditional probability of Washer given Dryer • = Prob(Washer and Dryer)/Prob(Dryer) = 0.15/0.25 = 0.60 • 60% of those buying a dryer also bought a washer • Watch the denominator!
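The two calculations differ only in the denominator, as this sketch shows. The function name `prob_given` is an assumption; the division is undefined when the given event has probability 0, so the sketch rejects that case.

```python
def prob_given(p_a_and_b, p_b):
    """Prob(A given B) = Prob(A and B) / Prob(B); B is the given information."""
    if p_b <= 0:
        raise ValueError("the given event must have positive probability")
    return p_a_and_b / p_b

p_washer, p_dryer, p_both = 0.20, 0.25, 0.15

# Same numerator, different denominators: watch which event is "given".
print(round(prob_given(p_both, p_washer), 2))  # Dryer given Washer: 0.75
print(round(prob_given(p_both, p_dryer), 2))   # Washer given Dryer: 0.6
```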

Independent Events • Two events are Independent if information about one does not change the likelihood of the other • Three equivalent ways to check independence • Prob(A given B) = Prob(A) • Prob(B given A) = Prob(B) • Prob(A and B) = Prob(A) × Prob(B) • If independent, all three are true. Use any one to check • Two events are Dependent if not independent • e.g., Prob(Washer and Dryer) = 0.15 • Prob(Washer) × Prob(Dryer) = 0.20 × 0.25 = 0.05 • Not equal, so Washer and Dryer are not independent • They are dependent
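The third check, comparing Prob(A and B) with the product of the two probabilities, is the easiest to code. The sketch below is illustrative; the function name and the small tolerance (an allowance for floating-point rounding) are assumptions.

```python
import math

def are_independent(p_a, p_b, p_a_and_b, tol=1e-9):
    """Check whether Prob(A and B) equals Prob(A) x Prob(B), within a small tolerance."""
    return math.isclose(p_a_and_b, p_a * p_b, abs_tol=tol)

# Appliance store: 0.15 is not 0.20 x 0.25 = 0.05, so the events are dependent.
print(are_independent(0.20, 0.25, 0.15))  # False

# Had Prob(Washer and Dryer) been 0.05, the events would be independent.
print(are_independent(0.20, 0.25, 0.05))  # True
```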

Mutually Exclusive Events • Two events are Mutually Exclusive if they cannot both happen, that is, if Prob(A and B) = 0 • No overlap in Venn diagram • Examples • Profit and Loss (for a selected business division) • Green and Purple (for a manufactured product) • Country Squire and Urban Poor (marketing segments) • Mutually exclusive events are dependent events (when each has nonzero probability)

Probability Trees • A method for solving probability problems • Given probabilities for some events (perhaps union, intersection, or conditional) • Find probabilities for other events • Record the basic information on the tree • Usually three probability numbers are given • Perhaps two probability numbers if events are independent • The tree helps guide your calculations • Each column of circled probabilities adds up to 1 • Circled prob times conditional prob gives next probability • For each group of branches • Conditional probabilities add up to 1 • Circled probabilities at end add up to probability at start

Probability Tree (continued) • Shows probabilities and conditional probabilities • Event A: Yes, with probability P(A) • Then Event B: Yes, with P(B given A), ending at P(A and B) • Then Event B: No, with P(“not B” given A), ending at P(A and “not B”) • Event A: No, with probability P(not A) • Then Event B: Yes, with P(B given “not A”), ending at P(“not A” and B) • Then Event B: No, with P(“not B” given “not A”), ending at P(“not A” and “not B”)

Example: Appliance Purchases • First, record the basic information • Prob(Washer) = 0.20, Prob(Dryer) = 0.25 • Prob(Washer and Dryer) = 0.15 [Tree so far: Washer? Yes = 0.20; endpoint for Washer Yes and Dryer Yes = 0.15; the two “Dryer? Yes” endpoints add up to P(Dryer) = 0.25]

Example (continued) • Next, subtract: 1 – 0.20 = 0.80, 0.25 – 0.15 = 0.10 [Tree so far: Washer? Yes = 0.20 and No = 0.80; endpoints for Washer Yes and Dryer Yes = 0.15, Washer No and Dryer Yes = 0.10; the two “Dryer? Yes” endpoints add up to P(Dryer) = 0.25]

Example (continued) • Now subtract: 0.20 – 0.15 = 0.05, 0.80 – 0.10 = 0.70 [Tree so far: Washer? Yes = 0.20, with endpoints Dryer Yes = 0.15 and Dryer No = 0.05; Washer? No = 0.80, with endpoints Dryer Yes = 0.10 and Dryer No = 0.70]

Example (completed tree) • Now divide to find conditional probabilities • 0.15/0.20 = 0.75, 0.05/0.20 = 0.25 • 0.10/0.80 = 0.125, 0.70/0.80 = 0.875 [Completed tree: Washer? Yes = 0.20, then Dryer? Yes = 0.75 ending at 0.15 and Dryer? No = 0.25 ending at 0.05; Washer? No = 0.80, then Dryer? Yes = 0.125 ending at 0.10 and Dryer? No = 0.875 ending at 0.70]

Example (finding probabilities) • Finding probabilities from the completed tree • P(Washer) = 0.20 • P(Dryer) = 0.15 + 0.10 = 0.25 • P(Washer and Dryer) = 0.15 • P(Washer or Dryer) = 0.15 + 0.05 + 0.10 = 0.30 • P(Washer and not Dryer) = 0.05 • P(Dryer given Washer) = 0.75 • P(Dryer given not Washer) = 0.125 • P(Washer given Dryer) = 0.15/0.25 = 0.60 (using the conditional probability formula)
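The subtract-then-divide steps used to complete the tree can be sketched as one function. This is an illustration only: the function name `complete_tree` and the dictionary keys are assumptions, and the sketch assumes the three given numbers are P(A), P(B), and P(A and B) with P(A) strictly between 0 and 1.

```python
def complete_tree(p_a, p_b, p_a_and_b):
    """Fill in a two-event probability tree from P(A), P(B), and P(A and B)."""
    # Subtraction steps
    p_not_a = 1 - p_a
    p_a_not_b = p_a - p_a_and_b            # A happens, B does not
    p_not_a_b = p_b - p_a_and_b            # B happens, A does not
    p_not_a_not_b = p_not_a - p_not_a_b    # neither happens
    # Division steps: endpoint probability / starting probability
    return {
        "P(A)": p_a,
        "P(not A)": p_not_a,
        "P(A and B)": p_a_and_b,
        "P(A and not B)": p_a_not_b,
        "P(not A and B)": p_not_a_b,
        "P(not A and not B)": p_not_a_not_b,
        "P(B given A)": p_a_and_b / p_a,
        "P(not B given A)": p_a_not_b / p_a,
        "P(B given not A)": p_not_a_b / p_not_a,
        "P(not B given not A)": p_not_a_not_b / p_not_a,
    }

# A = Washer, B = Dryer
tree = complete_tree(0.20, 0.25, 0.15)
for name, value in tree.items():
    print(f"{name} = {value:.3f}")
```

With the appliance numbers, the four endpoint values come out to 0.15, 0.05, 0.10, and 0.70, and the conditional probabilities to 0.75, 0.25, 0.125, and 0.875, matching the completed tree.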

Example: Venn Diagram • Venn diagram probabilities correspond to right-hand endpoints of probability tree • P(Washer and Dryer) = 0.15 • P(Washer and “not Dryer”) = 0.05 • P(“not Washer” and Dryer) = 0.10 • P(“not Washer” and “not Dryer”) = 0.70

Example: Joint Probability Table • Shows probabilities for each event, their complements, and combinations using and • Note: rows add up, and columns add up

| | Washer: Yes | Washer: No | Total |
| --- | --- | --- | --- |
| Dryer: Yes | 0.15 = P(Washer and Dryer) | 0.10 = P(“not Washer” and Dryer) | 0.25 = P(Dryer) |
| Dryer: No | 0.05 = P(Washer and “not Dryer”) | 0.70 = P(“not Washer” and “not Dryer”) | 0.75 = P(not Dryer) |
| Total | 0.20 = P(Washer) | 0.80 = P(not Washer) | 1 |
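The joint probability table can be built from the same three given numbers, and the "rows add up, columns add up" note can be checked mechanically. This is a sketch; the function name `joint_table` and the row and column ordering are assumptions made for the illustration.

```python
def joint_table(p_a, p_b, p_a_and_b):
    """Rows: B yes, B no, total. Columns: A yes, A no, total."""
    return [
        [p_a_and_b, p_b - p_a_and_b, p_b],
        [p_a - p_a_and_b, 1 - p_a - p_b + p_a_and_b, 1 - p_b],
        [p_a, 1 - p_a, 1.0],
    ]

# A = Washer, B = Dryer
table = joint_table(0.20, 0.25, 0.15)
for label, row in zip(("Dryer: Yes", "Dryer: No", "Total"), table):
    print(label, [round(x, 2) for x in row])

# Each row's first two cells add up to its total, and likewise for each column.
rows_ok = all(abs(r[0] + r[1] - r[2]) < 1e-9 for r in table)
cols_ok = all(abs(table[0][j] + table[1][j] - table[2][j]) < 1e-9 for j in range(3))
print(rows_ok, cols_ok)  # True True
```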