Detecting Subtle Sequence Signals: a Gibbs Sampling Strategy for Multiple Alignment

Detecting Subtle Sequence Signals: a Gibbs Sampling Strategy for Multiple Alignment. Lawrence et al. 1993. Presented By: Manish Agrawal Slides adapted from Prof Sinha’s notes. To define a motif, lets say we know where the motif starts in the sequence

Detecting Subtle Sequence Signals: a Gibbs Sampling Strategy for Multiple Alignment

E N D

Presentation Transcript

Detecting Subtle Sequence Signals: a Gibbs Sampling Strategy for Multiple Alignment Lawrence et al. 1993 Presented By: Manish Agrawal Slides adapted from Prof Sinha’s notes.

To define a motif, lets say we know where the motif starts in the sequence The motif start positions in their sequences can be represented as s = (s1,s2,s3,…,st) A motif model Genes regulated by same transcription factor

a G g t a c T t C c A t a c g t Alignment a c g t T A g t a c g t C c A t C c g t a c g G _________________ A3 0 1 0 31 1 0 Matrix C24 0 0 14 0 0 G 0 1 4 0 0 0 31 T 0 0 0 5 1 0 14 _________________ Consensus A C G T A C G T Line up the patterns by their start indexes s = (s1, s2, …, st) Construct “position weight matrix” with frequencies of each nucleotide in columns Consensus nucleotide in each position has the highest frequency in column Motifs: Matrices and Consensus

Motif Finding Problem(Simplified) • Given a set of sequences, find the motif shared by all or most sequences, while its starting position in each sequence is unknown • Assumption: • Each motif appears exactly once in one sequence. • The motif has fixed length.

Generative Model • Suppose the sequences are aligned, the aligned regions are generated from a motif model. • Motif model is a PWM. A PWM is a position-specific multinomial distribution. • For each position i (from 1 to W), a multinomial distribution on amino acids, consisting of variables qi1, qi2,…..,qi20 • The unaligned regions are generated from a background model: p1,p2, ……, p20

Notations • Set of symbols: • Sequences: S = {S1, S2, …, SN} • Starting positions of motifs: A = {a1, a2, …, aN} • Motif model ( ) : qij = P(symbol at the i-th position = j) • Background model: pj = P(symbol = j) • Count of symbols in each column: cij= count of symbol, j, in the i-th column in the aligned region

Scoring Function • Maximize the log-odds ratio: • Is greater than zero if the data is a better match to the motif model than to the background model

Scoring function • A particular alignment “A” gives us the • counts cij. • In the scoring function “F”, use:

Scoring function • Thus, given an alignment A, we can calculate the scoring function F • We need to find A that maximizes this scoring function, which is a log-odds score

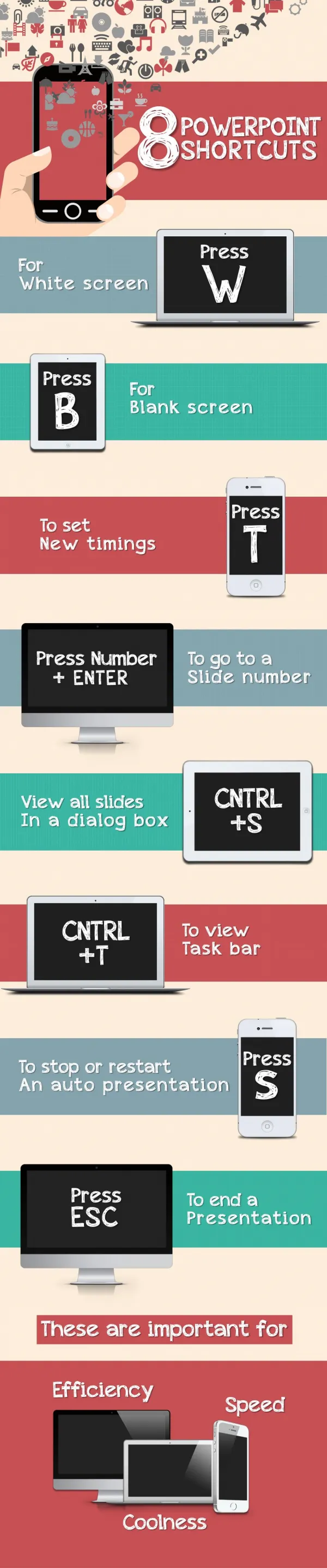

Optimization and Sampling • To maximize a function, f(x): • Brute force method: try all possible x • Sample method: sample x from probability distribution: p(x) ~ f(x) • Idea: suppose xmax is argmax of f(x), then it is also argmax of p(x), thus we have a high probability of selecting xmax

Markov Chain Sampling • To sample from a probability distribution p(x), we set up a Markov chain s.t. each state represents a value of x and for any two states, x and y, the transitional probabilities satisfy: • This would then imply:

Gibbs sampling to maximize F • Gibbs sampling is a special type of Markov chain sampling algorithm • Our goal is to find the optimal A = (a1,…aN) • The Markov chain we construct will only have transitions from A to alignments A’ that differ from A in only one of the ai • In round-robin order, pick one of the ai to replace • Consider all A’ formed by replacing ai with some other starting position ai’ in sequence Si • Move to one of these A’ probabilistically • Iterate the last three steps

Algorithm Randomly initialize A0; Repeat: (1) randomly choose a sequence z from S; A* = At \ az; compute θt from A*; (2) sample az according to P(az = x), which is proportional to Qx/Px; update At+1 = A* x; Select At that maximizes F; Qx: the probability of generating x according to θt; Px: the probability of generating x according to the background model

Algorithm Current solution At

Algorithm Choose one az to replace

Algorithm x For each candidate site xin sequence z, calculate Qx and Px: Probabilities of sampling x from motif model and background model resp.

Algorithm x Among all possible candidates, choose one (say x) with probability proportional to Qx/Px

Algorithm x Set At+1 = A* x

Algorithm x Repeat

Local optima • The algorithm may not find the “global” or true maximum of the scoring function • Once “At” contains many similar substrings, others matching these will be chosen with higher probability • Algorithm will “get locked” into a “local optimum” • all neighbors have poorer scores, hence low chance of moving out of this solution

Phase shifts • After every M iterations, compare the current At with alignments obtained by shifting every aligned substring ai by some amount, either to left or right

Pattern Width • The algorithm described so far requires pattern width(W) to be input. • We can modify the algorithm so that it executes for a range of plausible widths. • The function F is not immediately useful for this purpose as its optimal value always increases with increasing W.

Pattern Width • Another function based on the incomplete-data log-probability ratio G can be used. • Dividing G by the number of free parameters needed to specify the pattern (19W in the case of proteins) produced a statistic useful for choosing pattern width. This quantity can be called information per parameter.

Examples • The algorithm was applied to locate helix-turn-helix (HTH) motif, which represent a large class of sequence-specific DNA binding structures involved in numerous cases of gene regulation. • Detection and alignment of HTH motifs is a well recognized problem because of the great sequence variation.

HTH Motif Complete Sequences Non-site seq Random seq

Time complexity analysis • For a typical protein sequence, it was found that, for a single pattern width, each input sequence needs to be sampled fewer than T = 100 times before convergence. • L*W multiplications are performed in Step2 of the algorithm. • Total multiplications to execute the algorithm = TNLavgW • Linear Time complexity has been observed in applications

Motif finding • The Gibbs sampling algorithm was originally applied to find motifs in amino acid sequences • Protein motifs represent common sequence patterns in proteins, that are related to certain structure and function of the protein • Gibbs sampling is extensively used to find motifs in DNA sequence, i.e., transcription factor binding sites