Resource Representations in GENI: A path forward


This presentation discusses strategies for improving resource representation mechanisms in GENI. It covers network link slicing, agreement on resource representations, and why interoperability of those representations matters. It surveys several types of resources, including Ethernet links, edge compute/storage resources, and wireless technologies. It also stresses the importance of describing connectivity consistently across layers and outlines practical solutions for advertising and requesting edge resources. The aim is to ease communication and format conversion among GENI control frameworks so that resources can be used across platforms.


Resource Representations in GENI: A path forward
Ilia Baldine, Yufeng Xin
ibaldin@renci.org, yxin@renci.org
Renaissance Computing Institute, UNC-CH

Agreements: resource representation cycle
[Figure: cycle diagram with the stages "Possibly fuzzy request", "Collective, possibly filtered manifest", "Ads from substrate providers", "Detailed manifest from substrate provider", and "More specific request to substrate provider(s)"]
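One way to read the cycle: substrate providers publish ads, the experimenter submits a possibly fuzzy request, that request is refined into more specific requests to individual providers, each provider returns a detailed manifest, and the experimenter receives a collective, possibly filtered manifest. That ordering is an interpretation of the diagram labels, not a defined GENI protocol. The sketch below assumes simple dictionary shapes for ads and requests; `Manifest`, `refine_request`, `collect`, and the first-fit refinement policy are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Manifest:
    """Detailed manifest returned by one substrate provider (hypothetical shape)."""
    provider: str
    resources: dict[str, dict[str, str]] = field(default_factory=dict)


def refine_request(
    fuzzy: dict[str, int], ads: dict[str, dict[str, int]]
) -> dict[str, dict[str, int]]:
    """Turn a fuzzy request ({node_type: count}) into per-provider requests.

    Uses the ads ({provider: {node_type: available}}) and fills each node type
    first-fit in ad order; the first-fit policy is an assumption.
    """
    specific: dict[str, dict[str, int]] = {}
    for node_type, wanted in fuzzy.items():
        for provider, available in ads.items():
            if wanted == 0:
                break
            take = min(wanted, available.get(node_type, 0))
            if take > 0:
                specific.setdefault(provider, {})[node_type] = take
                wanted -= take
        if wanted > 0:
            raise ValueError(f"ads cannot satisfy {node_type}: {wanted} short")
    return specific


def collect(manifests: list[Manifest], hidden: set[str]) -> dict[str, dict[str, str]]:
    """Merge detailed manifests into one collective manifest.

    Attributes named in `hidden` are dropped (the 'possibly filtered' step).
    Resource names are prefixed with the provider to keep them unique.
    """
    merged: dict[str, dict[str, str]] = {}
    for m in manifests:
        for name, attrs in m.resources.items():
            merged[f"{m.provider}:{name}"] = {
                k: v for k, v in attrs.items() if k not in hidden
            }
    return merged
```

In this reading, the ads feed `refine_request`, each provider answers its specific request with a `Manifest`, and `collect` produces the view the experimenter sees.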

GENI resource representation mechanisms
• 'Traditional' network resources
  • Ethernet links, tunnels, VLANs
• Edge compute/storage resources
• Measurement resources
  • Including collected measurement data objects
• Wireless resources
  • WiFi, WiMAX, motes, etc.
• Lack of agreement on what resources represent will be a significant impediment to interoperability
• Agreement on a format is important, but can be dealt with at the engineering level
[Figure: existing representation formats: PG RSpec v1, PG RSpec v2, PL RSpec, ORCA NDL-OWL, OMF]

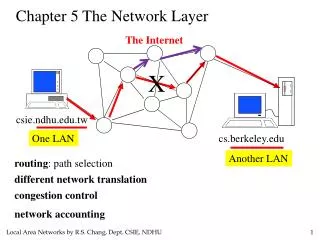

Network resources
• Key distinctions
  • Number of layers
  • Describing adaptations between layers
  • Syntax
  • Tools
[Figure: PL, NDL-OWL, PG]

Aside: why are adaptations critical?
• Network adaptations are part of the description of stitching capability
  • Needed for properly computing paths between aggregates connected by network providers at different layers
  • E.g. if a host has an interface with an Ethernet-to-VLAN adaptation, this interface is capable of stitching to VLANs
  • A consistent way to describe connectivity across layers (tunnels, DWDM, optical)
• Also
  • Important for matching requests to substrate capabilities
  • E.g. creating a VM is a process of 'adaptation' of real hardware to the virtualization layer
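To make the stitching argument concrete, the sketch below treats adaptations as directed (from_layer, to_layer) edges and asks whether an interface can present the layer a network provider expects. It is a minimal sketch under assumptions: the layer names, the `Adaptation` pair encoding, and the functions `reachable_layers` and `can_stitch` are hypothetical and do not reflect the actual NDL-OWL adaptation vocabulary.

```python
from collections import deque

# Hypothetical encoding: (from_layer, to_layer) pairs an interface supports,
# e.g. ("Ethernet", "VLAN") for an Ethernet port that can carry tagged VLANs.
Adaptation = tuple[str, str]


def reachable_layers(native: str, adaptations: set[Adaptation]) -> set[str]:
    """All layers an interface can present, following adaptations transitively (BFS)."""
    seen = {native}
    queue = deque([native])
    while queue:
        layer = queue.popleft()
        for src, dst in adaptations:
            if src == layer and dst not in seen:
                seen.add(dst)
                queue.append(dst)
    return seen


def can_stitch(native: str, adaptations: set[Adaptation], provider_layer: str) -> bool:
    """True if the interface can hand off traffic at the provider's layer."""
    return provider_layer in reachable_layers(native, adaptations)
```

With `{("Ethernet", "VLAN")}`, an Ethernet interface can stitch to a VLAN provider but not to a DWDM one; a path computation between aggregates would run this check at every provider boundary.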

Network resources: a practical solution
• Stay primarily within the Ethernet layer
• Accept one format to be used between control frameworks
• Perform bi-directional format conversion
  • Only partial conversion may be possible
• Hosted services that perform conversion on demand
  • E.g. NS2/RSpec v1 and v2 request converter to NDL-OWL
  • http://geni-test.renci.org:11080/ndl_conversion/request.jsp
  • Works well for several types of links, nodes, interfaces, IP addresses and simple link attributes
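The kind of request conversion described above can be sketched as a walk over the request's nodes, interfaces, IP addresses, and links that emits graph triples. This is not the RENCI converter: the namespace-free XML is a simplified stand-in loosely modeled on RSpec request elements, and the predicate names (`hasInterface`, `connectedTo`, and so on) are illustrative placeholders rather than the real NDL-OWL ontology.

```python
import xml.etree.ElementTree as ET

# A simplified, namespace-free request loosely modeled on RSpec elements
# (node, interface, ip, link, interface_ref). Real RSpecs carry namespaces
# and many more attributes; this sketch ignores them.
REQUEST = """
<rspec type="request">
  <node client_id="a">
    <interface client_id="a:if0"><ip address="10.0.0.1" netmask="255.255.255.0"/></interface>
  </node>
  <node client_id="b">
    <interface client_id="b:if0"><ip address="10.0.0.2" netmask="255.255.255.0"/></interface>
  </node>
  <link client_id="ab">
    <interface_ref client_id="a:if0"/>
    <interface_ref client_id="b:if0"/>
  </link>
</rspec>
"""


def to_triples(xml_text: str) -> list[tuple[str, str, str]]:
    """Convert the simplified request into (subject, predicate, object) triples.

    Predicate names are illustrative stand-ins, not the NDL-OWL vocabulary.
    """
    root = ET.fromstring(xml_text.strip())
    triples: list[tuple[str, str, str]] = []
    for node in root.findall("node"):
        nid = node.get("client_id")
        triples.append((nid, "type", "Node"))
        for iface in node.findall("interface"):
            iid = iface.get("client_id")
            triples.append((nid, "hasInterface", iid))
            ip = iface.find("ip")
            if ip is not None:
                triples.append((iid, "hasIPAddress", ip.get("address")))
                triples.append((iid, "hasNetmask", ip.get("netmask")))
    for link in root.findall("link"):
        lid = link.get("client_id")
        triples.append((lid, "type", "NetworkConnection"))
        for ref in link.findall("interface_ref"):
            triples.append((lid, "connectedTo", ref.get("client_id")))
    return triples
```

Because conversion may be only partial, a real service would also need to report elements it could not map instead of silently skipping them, as this sketch does.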

Agreeing on wire format
• RSpec v2 with extensions is a viable possibility
• Conversion from RSpec v2 is relatively straightforward, pending agreement on edge resources
• Conversion to RSpec v2 is likely to be partial, but probably sufficient for the time being
• Nothing below Layer 2 is visible to the experimenter
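Why conversion to RSpec v2 can be partial yet sufficient: if nothing below Layer 2 is visible to the experimenter, a multi-layer path can be collapsed so that each run of sub-Layer-2 segments becomes a single Ethernet segment. The sketch below illustrates only that idea; the layer table, `Segment`, and `experimenter_view` are hypothetical, and the path is assumed to be contiguous.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical layer numbers; anything below 2 (Ethernet) is treated as
# invisible to the experimenter, following the slide.
LAYER = {"DWDM": 1, "SONET": 1, "Ethernet": 2, "VLAN": 2, "IP": 3}


@dataclass(frozen=True)
class Segment:
    src: str
    dst: str
    layer: str


def experimenter_view(path: list[Segment]) -> tuple[list[Segment], list[Segment]]:
    """Collapse each run of consecutive sub-Layer-2 segments into one Ethernet segment.

    Returns (visible, hidden). Assumes `path` is contiguous (each segment's
    dst is the next segment's src). The hidden detail is dropped, which is
    what makes this direction of conversion partial.
    """
    visible: list[Segment] = []
    hidden: list[Segment] = []
    run_start: Optional[str] = None
    run_end: Optional[str] = None
    for seg in path:
        if LAYER[seg.layer] < 2:
            hidden.append(seg)
            if run_start is None:
                run_start = seg.src
            run_end = seg.dst
            continue
        if run_start is not None:
            visible.append(Segment(run_start, run_end, "Ethernet"))
            run_start = None
        visible.append(seg)
    if run_start is not None:
        visible.append(Segment(run_start, run_end, "Ethernet"))
    return visible, hidden
```

A VLAN path that crosses a DWDM core, for example, appears to the experimenter as three Layer 2 hops, with the two optical segments reported as hidden.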

Next challenge: edge compute resources
• Ads:
  • Aggregates can produce (adapt to) different types of nodes
    • E.g. PL VServer, PG bare hardware node types, ORCA's Xen/KVM virtual machines (and hardware nodes)
  • Constraints are possible on disk and memory size, and on the number and type of CPU cores
  • Properties may include location, ownership, etc.
  • Note: internal topology may or may not be part of the site ad. E.g. clouds have no inherent topology that needs to be advertised
• Requests:
  • Based on constraints on type of node, disk and memory size, core type and count, and location

Advertising edge resources
• A server can be an individual node or a cloud of servers
• A site may choose to advertise individual servers/nodes or server clouds
  • Clouds have no inherent topology, just constraints on the type of topology they can produce and adaptations for nodes
• Servers/nodes or server clouds are adaptable to different types of nodes, distinguished by
  • Virtualization (Xen, KVM, VServer, None, etc.)
  • Possibly memory and disk sizes, core types and counts, OS
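A site ad along these lines could be modeled as a site that lists individual servers (which may carry topology, such as interfaces) and clouds (which carry only capacity and node adaptations). The sketch below is one possible data model, not an RSpec or NDL-OWL schema; every class and field name is hypothetical, and `max_instances` in particular is an assumed stand-in for a cloud's capacity constraint.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class NodeAdaptation:
    """One type of node a server or cloud can produce (hypothetical fields)."""
    virtualization: str  # e.g. "Xen", "KVM", "VServer", "None"
    max_memory_mb: Optional[int] = None
    max_disk_gb: Optional[int] = None
    core_types: tuple[str, ...] = ()
    os_images: tuple[str, ...] = ()


@dataclass
class ServerAd:
    """An individual server/node; may expose topology such as its interfaces."""
    name: str
    adaptations: list[NodeAdaptation]
    interfaces: list[str] = field(default_factory=list)


@dataclass
class CloudAd:
    """A cloud of servers: no inherent topology, only capacity and adaptations."""
    name: str
    adaptations: list[NodeAdaptation]
    max_instances: int  # assumed capacity constraint


@dataclass
class SiteAd:
    site: str
    location: Optional[str] = None
    owner: Optional[str] = None
    servers: list[ServerAd] = field(default_factory=list)
    clouds: list[CloudAd] = field(default_factory=list)

    def virtualizations(self) -> set[str]:
        """Every virtualization type the site can produce, from any server or cloud."""
        offered: set[str] = set()
        for item in [*self.servers, *self.clouds]:
            offered.update(a.virtualization for a in item.adaptations)
        return offered
```

Keeping servers and clouds as separate lists lets a site choose either advertising style, while both share the same adaptation description that requests are matched against.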

Requesting edge resources
• A request for a node specifies multiple constraints on that node
  • Type of virtualization preferred
  • Memory and disk size
  • CPU type
  • Core count
  • OS
• This allows policy to pick the best sites based on the request and resource availability
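The request-plus-policy idea can be sketched as two steps: filter site offers on the hard constraints, then let a policy rank what remains. Here virtualization is treated as a preference (as the slide's "preferred" suggests) and the remaining fields as hard constraints. The `Offer` and `NodeRequest` shapes and the ranking policy (matching virtualization first, then most free slots) are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Offer:
    """One node type a site can currently produce (hypothetical, flattened view)."""
    site: str
    virtualization: str
    memory_mb: int
    disk_gb: int
    cpu_type: str
    cores: int
    os: str
    free_slots: int


@dataclass
class NodeRequest:
    """Constraints on a requested node; None or 0 means 'no constraint'."""
    preferred_virtualization: Optional[str] = None
    min_memory_mb: int = 0
    min_disk_gb: int = 0
    cpu_type: Optional[str] = None
    min_cores: int = 0
    os: Optional[str] = None


def feasible(offer: Offer, req: NodeRequest) -> bool:
    """Hard constraints: availability, memory, disk, CPU type, cores, OS."""
    return (
        offer.free_slots > 0
        and offer.memory_mb >= req.min_memory_mb
        and offer.disk_gb >= req.min_disk_gb
        and (req.cpu_type is None or offer.cpu_type == req.cpu_type)
        and offer.cores >= req.min_cores
        and (req.os is None or offer.os == req.os)
    )


def pick_site(offers: list[Offer], req: NodeRequest) -> Optional[str]:
    """Example policy: among feasible offers, prefer the requested
    virtualization, then the offer with the most free slots."""
    candidates = [o for o in offers if feasible(o, req)]
    if not candidates:
        return None
    best = max(
        candidates,
        key=lambda o: (o.virtualization == req.preferred_virtualization, o.free_slots),
    )
    return best.site
```

Swapping the `key` function is where a different site policy (e.g. locality or ownership) would plug in, without changing how constraints are checked.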

Semantic shortcut examples
[Figure: PlanetLabCluster produces PL nodes; ProtoGeniCluster produces PG nodes]
• Semantic shortcuts
  • PL node
    • Virtualization: VServer
  • Simple PG node
    • Virtualization: None
    • CPU type: x86 or ?? or ??
  • EC2M1Small
    • Virtualization: KVM or Xen
    • CPU count: 1
    • Memory size: 128M
  • EC2M1Large
    • Virtualization: KVM or Xen
    • CPU count: 2
    • Memory size: 512M
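A semantic shortcut is essentially a named bundle of request constraints. The sketch below encodes the slide's examples as a lookup table that expands into explicit constraints, with caller-supplied fields overriding the defaults. The constraint keys and the `expand` helper are hypothetical; the simple PG node's CPU type is left out because the slide's list of options is incomplete.

```python
# Semantic shortcuts from the slide, expanded into explicit node constraints.
# Sets hold alternatives where the slide lists them ("KVM or Xen").
SHORTCUTS: dict[str, dict[str, object]] = {
    "PL node": {"virtualization": {"VServer"}},
    # CPU type options on the slide ("x86 or ?? or ??") are incomplete,
    # so no CPU constraint is encoded for the simple PG node.
    "Simple PG node": {"virtualization": {"None"}},
    "EC2M1Small": {"virtualization": {"KVM", "Xen"}, "cpu_count": 1, "memory_mb": 128},
    "EC2M1Large": {"virtualization": {"KVM", "Xen"}, "cpu_count": 2, "memory_mb": 512},
}


def expand(shortcut: str, **overrides: object) -> dict[str, object]:
    """Return the constraints behind a shortcut; explicit overrides win."""
    if shortcut not in SHORTCUTS:
        raise KeyError(f"unknown shortcut: {shortcut}")
    constraints = dict(SHORTCUTS[shortcut])  # copy so the table is not mutated
    constraints.update(overrides)
    return constraints
```

Expanding shortcuts before matching keeps the matcher working only on explicit constraints, so new shortcuts can be added without touching site-selection logic.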

Other considerations
• Emerging standards:
  • OVF: portable appliance images across heterogeneous environments
  • The control framework (CF) should be able to generate OVF from a combination of request data and substrate information
• Higher-level programming environments:
  • Google App Engine, AppScale
  • Distributed map/reduce
  • …

Next steps
• Align edge compute resource descriptions
• Enable conversion as a GENI-wide service
• Test full interoperation PG<->ORCA by GEC11
• Get the conversation started on aligning abstraction models for
  • Wireless resources
    • Max Ott, Hongwei Zhang, ?
  • Storage (physical and cloud)
    • Mike Zink, ?