Routing Prefix Caching in Network Processor Design

ICCCN’01, Oct. 15, 2001. Huan Liu, Department of Electrical Engineering, Stanford University. huanliu@stanford.edu, http://www.stanford.edu/~huanliu


Outline
Goal: Advocate routing prefix caching instead of IP address caching
• Why prefix caching?
• Prefix cache architecture
• How to guarantee correct lookup result
• Experimental result
• Summary

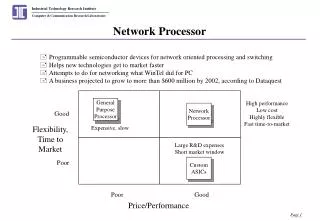

Motivation
• Why cache?
  • Small enough to be integrated on chip => lower on-chip delay than going off chip
  • Could use fast SRAM instead of DRAM
  • Smaller capacitance loading => faster circuit
• Why cache prefixes instead of IP addresses?
  • Solution space is smaller:
    • # of prefixes << # of IP addresses
    • # of prefixes at a router is even smaller
  • Prefixes can be compacted
  • Data centers host thousands of web servers

IP address caching
• Problem?
  • Spatial locality: none
  • Temporal locality: limited
Goal: Fully exploit temporal locality using a prefix cache
[Diagram labels: Network Processor with Micro Engine and IP cache (fully associative = CAM; IP address / Next hop); Routing Table (Routing Prefix / Next hop)]

Prefix cache architecture
[Diagram labels: Network Processor with Micro Engine and Prefix cache (TCAM; Prefix / Next hop); Prefix memory: ASIC with Routing Table (TCAM; Routing Prefix / Next hop)]

Alternative prefix cache architecture
[Diagram labels: Network Processor with Micro Engine and Prefix cache (TCAM; Prefix / Next hop); Prefix memory: Host CPU with RAM and SW Routing Table (Trie)]

Density comparison
• IP cache (CAM, 10T SRAM cell): 32 bit tag, tag comparing logic
• Prefix cache (TCAM, 16T SRAM cell): 32 bit tag, 32 bit mask, tag comparing logic, masking logic
• One implementation (Mosaid)
• Rough estimate: < 2x density difference
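The difference between the two cache entries can be sketched in a few lines of Python. This is only an illustration of the generic tag-plus-mask comparison a ternary entry performs; the helper names `tcam_entry` and `matches` are made up here, and a 6-bit address width is assumed so the values line up with the examples on the later slides.

```python
def tcam_entry(prefix_bits, width=32):
    """Build a (tag, mask) pair for a prefix given as a bit string, e.g. "01010".

    Hypothetical helper: the mask has a 1 for every bit that must match
    and a 0 for every "don't care" bit.
    """
    length = len(prefix_bits)
    if length == 0:
        return 0, 0
    mask = ((1 << length) - 1) << (width - length)
    tag = int(prefix_bits, 2) << (width - length)
    return tag, mask


def matches(addr, tag, mask):
    """Masking logic followed by tag comparison."""
    return (addr & mask) == tag


# Prefix cache entry for 01010* in a 6-bit address space (assumed width).
tag, mask = tcam_entry("01010", width=6)

# An IP cache entry is the special case where every bit must match.
ip_tag, ip_mask = tcam_entry("010100", width=6)
```

With these definitions the prefix entry matches both `0b010100` and `0b010101`, while the IP entry matches only `0b010100`, which is why one prefix entry can stand in for many IP entries.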

Key problem
• Not all prefixes are cacheable
• Prefix memory: 0*, 01010*
• Lookup 000000: matches 0*, and 0* is placed in the cache
• Lookup 010100: hits 0* in the cache. Wrong!! 01010* should be returned.
• 0* is non-cacheable
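The failure on this slide can be reproduced with a small Python sketch. The table contents follow the slide (0* and 01010*); the next-hop names "A" and "B", the class `NaivePrefixCache`, and the use of bit strings for addresses are assumptions made only for illustration.

```python
def longest_prefix_match(table, addr):
    """Return (prefix, next_hop) for the longest prefix of addr in table, or None."""
    for length in range(len(addr), -1, -1):
        prefix = addr[:length]
        if prefix in table:
            return prefix, table[prefix]
    return None


# Prefixes from the slide; next hops "A" and "B" are hypothetical.
ROUTES = {"0": "A", "01010": "B"}


class NaivePrefixCache:
    """Caches whatever prefix the routing table returned, with no safety check."""

    def __init__(self, routes):
        self.routes = routes
        self.cache = {}

    def lookup(self, addr):
        hit = longest_prefix_match(self.cache, addr)
        if hit is not None:
            return hit  # served from the cache
        result = longest_prefix_match(self.routes, addr)
        if result is not None:
            prefix, hop = result
            self.cache[prefix] = hop  # unconditionally cache the matched prefix
        return result


cache = NaivePrefixCache(ROUTES)
first = cache.lookup("000000")   # miss: 0* is fetched and cached
second = cache.lookup("010100")  # hit on the cached 0*, which is the wrong answer
correct = longest_prefix_match(ROUTES, "010100")
```

Here `second` is the cached 0* entry while `correct` is 01010*, so the cache has hidden a longer matching prefix.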

Solution #1
• Complete prefix tree expansion (CPTE)
• Prefix memory: 0* is expanded into 00*, 0100*, 01011*, 011*, alongside 01010*
• Lookup 000000: 00* is cached
• Lookup 010100: 01010* is cached
Problem: Routing table explosion
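A minimal sketch of complete expansion, assuming prefixes are represented as bit strings and that expansion means pushing each next hop down until no prefix contains another. The function name `cpte` and the next hops "A" and "B" are hypothetical; the recursion is one straightforward way to do this, not necessarily the one used in the talk.

```python
def cpte(routes):
    """Expand routes so that no prefix in the result is a prefix of another."""
    expanded = {}

    def has_descendant(prefix):
        # True if some other route lies strictly below this prefix.
        return any(p != prefix and p.startswith(prefix) for p in routes)

    def expand(prefix, inherited_hop):
        # A route at this node overrides the hop inherited from its ancestors.
        hop = routes.get(prefix, inherited_hop)
        if not has_descendant(prefix):
            if hop is not None:
                expanded[prefix] = hop  # leaf: safe to cache
            return
        expand(prefix + "0", hop)
        expand(prefix + "1", hop)

    expand("", None)
    return expanded


# Prefixes from the slide; next hops are hypothetical.
ROUTES = {"0": "A", "01010": "B"}
EXPANDED = cpte(ROUTES)
```

For this table the two original routes become five (00*, 0100*, 01010*, 01011*, 011*), which illustrates the table growth the slide warns about.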

Solution #2
• Cache IP address instead
• Prefix memory: 0*, 01010*
• Lookup 000000: matches the non-cacheable 0*, so the IP address 000000 is cached
• Lookup 010100: 01010* is cached
Problem:
1. Degrades to an IP cache
2. Extra logic to send the IP address when a non-cacheable prefix is matched
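The fallback can be sketched as follows, assuming a prefix counts as non-cacheable whenever the table holds a longer prefix beneath it. The class `HybridCache`, the helper `is_cacheable`, and the next hops are illustrative names, not taken from the talk.

```python
def longest_prefix_match(table, addr):
    """Return (prefix, next_hop) for the longest prefix of addr in table, or None."""
    for length in range(len(addr), -1, -1):
        prefix = addr[:length]
        if prefix in table:
            return prefix, table[prefix]
    return None


def is_cacheable(routes, prefix):
    # Assumption: a prefix is cacheable only if no longer route lies below it.
    return not any(p != prefix and p.startswith(prefix) for p in routes)


class HybridCache:
    """Caches the prefix when that is safe, otherwise the full IP address."""

    def __init__(self, routes):
        self.routes = routes
        self.cache = {}

    def lookup(self, addr):
        hit = longest_prefix_match(self.cache, addr)
        if hit is not None:
            return hit[1]
        result = longest_prefix_match(self.routes, addr)
        if result is None:
            return None
        prefix, hop = result
        key = prefix if is_cacheable(self.routes, prefix) else addr
        self.cache[key] = hop
        return hop


ROUTES = {"0": "A", "01010": "B"}  # next hops are hypothetical
cache = HybridCache(ROUTES)
hop1 = cache.lookup("000000")  # 0* is non-cacheable, so 000000 itself is stored
hop2 = cache.lookup("010100")  # 01010* is cacheable and stored as a prefix
```

After these two lookups the cache holds 000000 and 01010*, as on the slide. Every further address under 0* but outside 01010* costs its own entry, which is the sense in which this scheme degrades to an IP cache.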

Solution #3
• Partial Prefix Tree Expansion (PPTE) (#1 + #2)
• Prefix memory: 0*, 00*, 01010*
• Lookup 000000: 00* is cached
• Lookup 010100: 01010* is cached
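One possible reading of this slide, sketched in Python: each non-cacheable prefix gets one extra level of children, but only children with no existing route at or below them, and anything that still matches a non-cacheable prefix falls back to caching the IP address. The one-level depth is an assumption inferred from the slide's example (0* gains 00* only); the names `ppte` and `cache_key` and the next hops are hypothetical.

```python
def longest_prefix_match(table, addr):
    """Return (prefix, next_hop) for the longest prefix of addr in table, or None."""
    for length in range(len(addr), -1, -1):
        prefix = addr[:length]
        if prefix in table:
            return prefix, table[prefix]
    return None


def has_longer(table, prefix):
    return any(p != prefix and p.startswith(prefix) for p in table)


def ppte(routes):
    """Assumed one-level expansion of every non-cacheable prefix."""
    expanded = dict(routes)
    for prefix, hop in routes.items():
        if not has_longer(routes, prefix):
            continue  # already cacheable, nothing to expand
        for bit in "01":
            child = prefix + bit
            # Add the child only if no existing route sits at or below it.
            if not any(p.startswith(child) for p in routes):
                expanded[child] = hop
    return expanded


def cache_key(table, addr):
    """Return (key, next_hop) to install in the cache for addr, or None."""
    match = longest_prefix_match(table, addr)
    if match is None:
        return None
    prefix, hop = match
    # Fall back to the full IP address when the match is non-cacheable.
    key = addr if has_longer(table, prefix) else prefix
    return key, hop


ROUTES = {"0": "A", "01010": "B"}  # next hops are hypothetical
TABLE = ppte(ROUTES)
```

Under these assumptions the table grows by a single entry (00*), the two lookups on the slide install 00* and 01010*, and an address such as 011000 that still lands on 0* is cached as a full IP address.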

Summary
• Prefix caching outperforms IP address caching because temporal locality is fully exploited
• We show three ways to guarantee a correct lookup result
• Experimental result: more than 3x improvement with less than 2x more transistors