Systems Testing in the SDLC Dr. Robin Poston, Associate Director of the System Testing Excellence Program

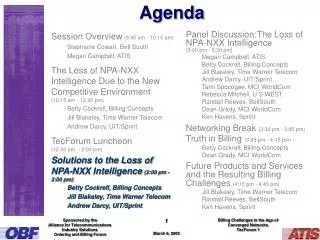

Agenda
• Testing in Structured Design
• Tester in the SDLC
• Testing in Rapid Application and Agile Development

Objectives
• Understand Testing in Structured Design
• Understand the role of the Tester in the SDLC
• Understand Testing in Rapid Application and Agile Development
• Understand Testing in Enterprise Systems Development
• Learn the System Testing Phases

Testing in Structured Design
• Communication: project initiation & requirements gathering
• Planning: estimating, scheduling, tracking
• Modeling: analysis & design
• Construction: code & test
• Deployment: delivery, support, feedback
Figures: The Waterfall Model; The V Model
Source: Modern Systems Analysis and Design by J. A. Hoffer, J. F. George, J. S. Valacich, Prentice Hall 2005

SDLC
SDLC Phases:
• Planning
• Analysis
• Design
• Implementation & Maintenance

Testing in Structured Design (cont’d.)
Source: Pressman, R.S. Software Engineering: A Practitioner's Approach, Sixth Edition, McGraw Hill, New York, 2005.
• Common life-cycle model of software development
• Development work proceeds in sequential phases down the left side of the V
• Test execution phases occur up the right side of the V
• Tests are planned and developed as soon as the corresponding development phase begins
• Ex: requirements are the basis for acceptance testing, so preparing for testing can start immediately after requirements are captured
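
The pairing of development phases with test levels can be sketched as a simple lookup. This is only an illustration: the slide states just the requirements-to-acceptance-testing pair, and the other three pairs are the conventional V-model pairings, added here as assumptions. The function name is hypothetical.

```python
# Development phase (left side of the V) -> test level it feeds (right side).
# Only the first pair comes from the slide; the remaining pairs are the
# conventional V-model pairings and are assumptions for illustration.
V_MODEL = {
    "requirements": "acceptance testing",
    "system design": "system testing",
    "detailed design": "integration testing",
    "coding": "unit testing",
}


def plannable_test_levels(captured_phases):
    """Return the test levels whose preparation can begin, in phase order."""
    return [level for phase, level in V_MODEL.items() if phase in captured_phases]


if __name__ == "__main__":
    # Once requirements are captured, acceptance-test preparation can start.
    print(plannable_test_levels({"requirements"}))
```

The point of the lookup is that test preparation is keyed to the development phase, not to the end of coding.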

The SDLC
Source: Li, 1990
• Generally, the SDLC consists of 3 major stages
  1. Analysis: Definition
  2. Design: Development & Installation
  3. Implementation: Operation
• These stages of the SDLC can be further decomposed into 10 phases
  1. Definition consists of 3 phases: service request/project viability analysis (SR/PVA), system requirements definition (SRD) and system design alternatives (SDA).
  2. Development consists of 4 phases: system external specifications (SES), system internal specifications (SIS), program development (PD) and testing (TST).
  3. Testing is interspersed throughout the entire SDLC.
  4. Installation and Operation begins with a conversion (CNV) phase, then implementation (IMPL), and ends with a post-implementation review/maintenance (PIR/MN) phase.

SDLC Considerations
Source: Li, 1990
• Sequential orientation: only when an SDLC phase is completed and signed off can the next phase proceed. How does this impact testing?
• The SDLC, a.k.a. the Waterfall model, is generally a document-driven model. How can this documentation orientation be used to facilitate testing?
• Tremendous time and cost are incurred by a "major" change in the document of an earlier SDLC phase. Is this a testing issue?
• Testing forces changes to earlier phases and documents.
• Advanced tools such as CASE tools, automated code generators, etc. have facilitated the SDLC. Has testing stayed abreast of these technologies?
• Variations of the SDLC that include agile concepts, rapid application methods, and joint development techniques have failed to reconsider the role of testing.

Tester Involvement in SDLC
Developers: Design -> Code Build 1 -> Code Build 2 -> ….. -> Code Build N -> Cleanup
Testers: Prepare Test Plans -> Test Build 1 -> Test Build 1&2 -> ….. -> Test Full System -> Acceptance Testing
• Combine system testing and acceptance testing
• Test group does functional testing: people with domain expertise
• Begin testing early, as soon as the first build is complete
• Testers test the most recently completed build while developers move on to the next
• Developers are responsible for low-level integration testing of each build before it is delivered to testers
• At the end, testers perform an end-to-end test
Source: NASA’s Software Engineering Laboratory

Thought Exercise
As a tester, what potential problems do you see with the Li model? Also think about potential solutions to these problems.

Risks of Structured Design
• Difficult to plan accurately that far in advance
• Requirements or resources for the project may change by the time testing starts
• 20% of medium-sized and 70-90% of large projects are delayed or cancelled
• Model is driven by schedule and budget risks, with quality taking a back seat
• If the project gets behind schedule, test time shortens
Source: Estimating Software Costs by Capers Jones, McGraw-Hill Companies, July 22, 1998, ISBN: 0079130941.

Risks of Structured Design
(Source: www.testing.com, Brian Marick)
• Testing should start early, with groups cooperating and giving feedback
• Test cycles must be planned realistically, including the number of cycles needed to find and fix errors/defects
• Entry/exit criteria are critical for project status
• Revisit features/requirements at entry points or use change controls

Testing in Rapid Application Development
• The Incremental Model (below)
• The Spiral Model
Source: Modern Systems Analysis and Design by J. A. Hoffer, J. F. George, J. S. Valacich, Prentice Hall 2005

Testing in RAD
(Source: www.testing.com, Brian Marick)
• Process progresses in a spiral along an iterative path
• More complete software is built as iteration occurs through all phases
• The first iteration is the most important: possible risk factors, constraints, and requirements are identified, and the following iterations move toward more complete software
• The software evolves towards a complete system

Advantages of Testing in RAD
(Source: www.testing.com, Brian Marick)
• RAD solves the Structured Design problem of predicting the future
• Features are not committed to until we know what is possible
• User interface prototypes get the users involved in early testing, which mitigates usability risks
• Users can get confused because RAD prototypes lead to redesigns, not a final product, at the end of each test period

Testing in Agile Development
• Extreme Programming (XP), a.k.a. Agile Development (below)
Source: Systems Analysis and Design: An Applied Approach, A. Dennis, B. H. Wixom, and R. M. Roth, 2006.

Testing in Agile Development
• Agile testing:
  • Treats developers as the customer of testing
  • Emphasizes a test-first way to create software
  • Is incremental because software expands from core features that are delivered on a predetermined date
  • Features are added in chunks to a stable core
  • As new features are added, the new system is stabilized through testing and debugging

Testing in Agile Development
• Agile testing:
  • Abandons the notion that testers get requirements and design documents, and give back test plans and bug reports
  • The documents used for testing are usually flawed: incomplete, incorrect, and ambiguous
  • Testers in the past have insisted that the documents be produced better
  • But "better" will never be good enough

Testing in Agile Development
• Design documents can't be an adequate representation of working code
• Agile methods encourage ongoing project conversations, where typically:
  • Testers and developers sit in the same bullpen, share offices, or work in neighboring cubicles
  • Many testers are assigned to help particular developers, rather than being assigned to test pieces of the product
  • The test plan grows through a series of short, low-preparation, informal discussions
  • This results in short memos about specific issues

Risks of Agile Development
• Need more testers
• Testing starts earlier because the core must be thoroughly tested before new increments are added
• Vs. Structured Design Model:
  • Entry criteria for system testing in the Structured Model: all features complete and unit tested, and requirements set
  • The Agile model can only ask that core features are complete and unit tested; requirements won’t be set until the end
• Automated regression tools can reduce the need for staff
• It is important to develop organized but flexible test processes and best practices
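
The difference between the two sets of entry criteria can be written down as a small check. This is a minimal sketch: the `BuildStatus` fields and the `ready_for_system_test` function are hypothetical names chosen for illustration, and a real project would track many more conditions.

```python
from dataclasses import dataclass


@dataclass
class BuildStatus:
    """Hypothetical snapshot of a build's state (illustrative fields only)."""
    core_features_complete: bool
    all_features_complete: bool
    unit_tested: bool
    requirements_set: bool


def ready_for_system_test(status, model):
    """Apply the system-test entry criteria for the given development model."""
    if model == "structured":
        # Structured model: everything is complete, unit tested, and fixed.
        return (status.all_features_complete
                and status.unit_tested
                and status.requirements_set)
    if model == "agile":
        # Agile model: only the core must be complete and unit tested.
        return status.core_features_complete and status.unit_tested
    raise ValueError(f"unknown model: {model!r}")


if __name__ == "__main__":
    early_build = BuildStatus(
        core_features_complete=True,
        all_features_complete=False,
        unit_tested=True,
        requirements_set=False,
    )
    print("agile:", ready_for_system_test(early_build, "agile"))
    print("structured:", ready_for_system_test(early_build, "structured"))
```

The same early build passes the agile gate and fails the structured one, which is why agile testing starts earlier and tends to need more tester time.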

Thought Exercise
Test-driven programmers create tests in technology-facing language. Their tests talk about programmatic objects, not business concepts. They learn about business needs through conversations with business experts, but it is hard to learn everything through these conversations. Very often business experts are surprised because something that seemed obvious was left out. There is no way to eliminate such surprises, and although agile projects make them easier to correct, they are still disappointing. How can we improve these conversations? (Source: www.testing.com, Brian Marick)

References
Black, R. 2002. Managing the Testing Process. Wiley Publishing, New York, NY.
Kaner, C., Bach, J., and Pettichord, B. 2002. Lessons Learned in Software Testing: A Context-Driven Approach. John Wiley & Sons, Inc., New York, NY.
Camarinha-Matos, L. M., and Afsarmanesh, H. 2008. "On Reference Models for Collaborative Networked Organizations," International Journal of Production Research (46:9), pp. 2453-2469.
Chapurlat, V., and Braesch, C. 2008. "Verification, Validation, Qualification, and Certification of Enterprise Models: Statements and Opportunities," Computers in Industry (59), pp. 711-721.
Kim, H. M., Fox, M. S., and Sengupta, A. 2007. "How to Build Enterprise Data Models to Achieve Compliance to Standards Or Regulatory Requirements," Journal of the AIS (8:2), pp. 105-128.
Kim, T. Y., Lee, S., Kim, K., and Kim, C. H. 2006. "A Modeling Framework for Agile and Interoperable Virtual Enterprises," Computers in Industry (57), pp. 204-217.

Systems Testing in the Review of Requirements Dr. Robin Poston, Associate Director of the System Testing Excellence Program

Agenda
• Business Requirements Specification
• Role of Requirements in Testing
• Tester Involvement
• Obtaining Requirements in Testing
• Heuristics and Other Test Tools
• Other Sources of Requirements
• Test-Driven Development
• Software Requirements Specification (SRS)
• Validation and Verification

Objectives
• Understand what a Business Requirements Specification is
• Understand the Role of Requirements in Testing
• Understand Tester Involvement in Requirements
• Learn How to Obtain Requirements in Testing
• Learn about Test-Driven Development
• Understand what a Software Requirements Specification is

Business Requirements Specification
What is a Requirement?
• Statement of what the system must do
• Statement of characteristics the system must have
• Focus is on business user needs during the analysis phase
• Requirements will change as the project moves from analysis -> design -> implementation
Source: Dennis, Wixom and Roth, Systems Analysis and Design, 2006, Wiley

Role of Requirements in Testing
• A requirement is “a quality or condition that matters to someone who matters” (Kaner et al., 2002)
• Requirements are interesting fictions: useful but never sufficient
• You won’t automatically receive all the requirements
• Don’t ask for items unless you will use them
• Requirements help guide the testing process

Tester Involvement with Requirements Analysis
• Requirements “analysis” occurs early in the SDLC
• It is a representation of users’ needs but usually is not completely accurate
• After requirements are set, designers build a picture of a solution through a “design” step

Tester Involvement (cont’d.)
(Source: www.testing.com, Brian Marick)
• At the requirements analysis stage, testers:
  • Design a few tests but add more after the design phase
  • Add requirements because they are incomplete
  • Make clerical checks for consistent, unambiguous and verifiable requirements, although designers can also do this
  • E.g., ‘the transaction shall be processed within three days’ is ambiguous: workdays or calendar days?
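
The "three days" ambiguity is easy to demonstrate: the two readings give different deadlines for the same transaction. The sketch below is illustrative only. The function names and the sample date are assumptions, and the workday version counts only Monday to Friday and ignores public holidays.

```python
from datetime import date, timedelta


def add_calendar_days(start, days):
    """Deadline if 'days' means calendar days."""
    return start + timedelta(days=days)


def add_workdays(start, days):
    """Deadline if 'days' means workdays (Mon-Fri; holidays ignored)."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            remaining -= 1
    return current


if __name__ == "__main__":
    received = date(2024, 3, 7)  # a Thursday (sample date, assumed)
    print("calendar-day deadline:", add_calendar_days(received, 3))
    print("workday deadline:     ", add_workdays(received, 3))
```

For a transaction received on a Thursday the two interpretations differ by two days, so a test written against one reading can pass while the other reading fails. That gap is what the clerical check is meant to surface before design starts.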

Tester Involvement (cont’d.)
(Source: www.testing.com, Brian Marick)
• At the requirements analysis stage, testers should:
  • Understand the business domain and create user stories
  • If untrained users create requirements, a tester is needed
  • If designers are weak or scarce, testers can compensate
  • Early involvement works as long as testers are trusted and respected by developers, are convincing when they find issues, and are able to envision the architectural implications; if not, wait to get involved

Obtaining Requirements in Testing
• 3 primary ways testers obtain requirements information
  • Conference
  • Inference
  • Reference
• It is your job to seek out the information you need for testing

Obtaining Requirements (cont’d.)
• Explicit Requirements Gathering
  • Acknowledged as authoritative by users
• Implicit Requirements Gathering
  • Useful source of information not acknowledged by users

Obtaining Requirements (cont’d.)
Implicit Requirements Gathering
• Authority comes from persuasiveness and credibility of content, not from the users
  • Competing & related products
  • Older versions of the same product
  • Email discussions about the project
  • Comments by customers
  • Magazine articles (old reviews of product)
  • Textbooks on related subjects
  • GUI style guides
  • O/S compatibility requirements
  • Your own experience

Heuristics
• Use heuristics to quickly generate ideas and tests
• Testing examples:
  • Test at the boundaries
  • Test every error message
  • Test configurations that are different from the programmer’s
  • Run tests that are annoying to set up
  • Avoid redundant tests
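
The first two heuristics, testing at the boundaries and testing every error message, can be sketched together. Everything in this example is hypothetical: the `validate_quantity` function, its 1 to 100 limits, and the message wording are made up for illustration and do not come from any real product.

```python
MIN_QTY, MAX_QTY = 1, 100  # hypothetical limits for an order quantity


def validate_quantity(qty):
    """Hypothetical function under test: accept MIN_QTY..MAX_QTY inclusive."""
    if qty < MIN_QTY:
        raise ValueError(f"quantity must be at least {MIN_QTY}")
    if qty > MAX_QTY:
        raise ValueError(f"quantity must be at most {MAX_QTY}")
    return qty


def boundary_values(low, high):
    """Values just outside, on, and just inside each boundary."""
    return [low - 1, low, low + 1, high - 1, high, high + 1]


def run_boundary_checks():
    """Exercise each boundary value and record the outcome or error message."""
    results = {}
    for qty in boundary_values(MIN_QTY, MAX_QTY):
        try:
            validate_quantity(qty)
            results[qty] = "accepted"
        except ValueError as exc:
            results[qty] = str(exc)
    return results


if __name__ == "__main__":
    for qty, outcome in run_boundary_checks().items():
        print(qty, "->", outcome)
```

Six values cover both boundaries and trigger both error messages, which also fits the "avoid redundant tests" heuristic: values deep inside the valid range add little.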

Other Test Tools
• Manage bias
• Confusion is a test tool
  • Is the requirement confusing?
  • Is the product confusing?
  • Is the user documentation confusing?
  • Is the underlying problem difficult to understand?
• Fresh eyes find failure
• Watch for other people’s bias
• One outcome of testing is a better, smarter tester
• Can’t master testing unless you reinvent it
• Use the user as a tester

Other Sources of Requirements
• If you don’t have good requirements, take advantage of other sources of information
  • User manual draft
  • Product marketing literature
  • Marketing presentations
  • Software change memos (new internal versions)
  • Internal memos
  • Published style guide and user interface standards
  • Published standards & regulations
  • Third-party product compatibility test suites
  • Bug reports (responses to them)
  • Interviews (development lead, tech writer, etc.)
  • Header files, source code, database table definitions
  • Prototypes and lab notes

Thought Exercise
(Source: www.testing.com, Michael Hunter, Microsoft Corporation)
A new jet fighter was being tested. The test pilot strapped in, started the engines, and flipped the switch to raise the landing gear. The plane wasn’t moving, but the avionics software dutifully raised the landing gear. The plane fell down and broke. It is reasonable to assume the bug was that some code was missing: “if the plane is on the ground, issue an error message”. This is an error/fault/bug caused by the omission of a requirement, and thus by a lack of adequate testing. Discuss with a partner how you would go about catching these types of “bugs” before the code is released to users.
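
One way to frame the discussion is to write the missing requirement as a test that a tester could have asked for. The sketch below is a toy model, not real avionics code: the `LandingGear` class, the `weight_on_wheels` flag, and the error type are all hypothetical names invented for illustration.

```python
class GearError(Exception):
    """Raised when a gear command is rejected (hypothetical error type)."""


class LandingGear:
    """Toy model of the gear controller; not real avionics logic."""

    def __init__(self, weight_on_wheels=True):
        self.weight_on_wheels = weight_on_wheels  # True while on the ground
        self.extended = True

    def raise_gear(self):
        # The guard the original software is assumed to have been missing.
        if self.weight_on_wheels:
            raise GearError("cannot raise landing gear while on the ground")
        self.extended = False


def test_gear_stays_down_on_the_ground():
    gear = LandingGear(weight_on_wheels=True)
    try:
        gear.raise_gear()
    except GearError:
        pass
    else:
        raise AssertionError("gear command should be rejected on the ground")
    assert gear.extended


def test_gear_retracts_in_flight():
    gear = LandingGear(weight_on_wheels=False)
    gear.raise_gear()
    assert not gear.extended


if __name__ == "__main__":
    test_gear_stays_down_on_the_ground()
    test_gear_retracts_in_flight()
    print("both checks pass")
```

The first test only exists if someone asks "what should happen when this command arrives in a state where it makes no sense?", which is the kind of question that uncovers omitted requirements.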

Test-Driven Development
(Source: www.testing.com, Brian Marick)
• Unit tests are written before coding
• Before a developer builds a new function, he or she first creates a test case that verifies it
• Test all inputs, outputs, boundary cases, error conditions
• Include unexpected inputs or error conditions
• Planning first for the ways code might fail results in catching more defects when coding the software
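
A minimal test-first sketch of these bullets follows. The tests appear first in the file because they were written first; the function `parse_age` and its 0 to 130 range are hypothetical and exist only to illustrate the workflow.

```python
# Step 1: write the tests before the function exists.
def test_typical_input():
    assert parse_age("42") == 42


def test_boundaries():
    assert parse_age("0") == 0
    assert parse_age("130") == 130


def test_error_conditions():
    # Out-of-range values and unexpected (non-numeric, empty) inputs.
    for bad in ("-1", "131", "abc", ""):
        try:
            parse_age(bad)
        except ValueError:
            continue
        raise AssertionError(f"{bad!r} should have been rejected")


# Step 2: write just enough code to make the tests pass.
def parse_age(text):
    """Parse an age in years; the 0..130 range is an assumed requirement."""
    age = int(text)  # int() raises ValueError for "abc" and ""
    if not 0 <= age <= 130:
        raise ValueError(f"age out of range: {age}")
    return age


if __name__ == "__main__":
    for check in (test_typical_input, test_boundaries, test_error_conditions):
        check()
    print("all tests pass")
```

Writing `test_error_conditions` first forces a decision about what happens with empty or negative input before any implementation exists.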

Test-Driven Development (cont’d.)
• Developer understands requirements better
• But it is more fun to jump in & start coding!
• Creating unit tests firms up requirements in the developer’s mind and shows what’s missing
• Ensures testing gets done
• Poor interface designs become apparent early
• A complete suite of unit tests makes it easier to refactor software
• Unit tests are run after refactoring to see if new defects were added in updating the code
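
The refactoring point can be illustrated by running one suite against the code before and after a rewrite. This is a sketch under assumptions: `total_price_v1`, `total_price_v2`, and the test cases are hypothetical, and in practice the refactored function would replace the original rather than sit beside it.

```python
def total_price_v1(items):
    """Original implementation: explicit loop over (price, quantity) pairs."""
    total = 0.0
    for price, qty in items:
        total = total + price * qty
    return total


def total_price_v2(items):
    """Refactored implementation: same behavior, expressed with sum()."""
    return sum(price * qty for price, qty in items)


# The unit-test suite: (input, expected result) pairs, written once.
CASES = [
    ([], 0),
    ([(2.5, 2)], 5.0),
    ([(1.0, 3), (4.0, 1)], 7.0),
]


def run_suite(fn):
    """Return one pass/fail flag per case for the given implementation."""
    return [fn(items) == expected for items, expected in CASES]


if __name__ == "__main__":
    print("before refactoring:", all(run_suite(total_price_v1)))
    print("after refactoring: ", all(run_suite(total_price_v2)))
```

If the refactored version had changed behavior for any case, the second run would report a failure immediately, which is what makes refactoring with a complete suite low-risk.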

Software Requirements Specification (SRS)
• Description of the behavior of the software to be developed, which includes:
  • Use cases describe interactions users have with the software
  • Functional requirements define internal workings of the software (calculations etc.)
  • Non-functional requirements define constraints on design and implementation (performance etc.)
• Coding and testing work is based on the SRS

SRS (cont’d.)
• Process followed to create the SRS document
• Performed by the system analyst
Source: Pfleeger and Atlee, Software Engineering: Theory and Practice, 2006, Pearson/Prentice Hall

SRS Checklist
Making Requirements Testable
• Fit criteria form objective standards for deciding whether a solution satisfies the requirements
  • Easy to set fit criteria for objective requirements
  • Hard for subjective quality requirements
• Three ways to help make requirements testable
  • Identify a quantitative description for each adverb and adjective
  • Replace pronouns with specific names of entities
  • Ensure every noun is defined in just one place in the requirements documents
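
As an illustration of replacing an adverb with a quantitative description, a vague requirement such as "the system shall respond quickly" could be given a fit criterion like "at least 95% of responses complete within 2 seconds". Both numbers and the function name below are assumptions made up for this sketch, not values from the source.

```python
def meets_fit_criterion(response_times_s, limit_s=2.0, required_percent=95):
    """Check a hypothetical fit criterion for 'responds quickly'.

    Passes when at least `required_percent` of the measured response times
    are at or under `limit_s` seconds. Both thresholds are assumed values.
    """
    if not response_times_s:
        return False  # no measurements means nothing has been demonstrated
    within = sum(1 for t in response_times_s if t <= limit_s)
    # Integer arithmetic avoids floating-point rounding at the threshold.
    return within * 100 >= required_percent * len(response_times_s)


if __name__ == "__main__":
    measured = [0.4] * 19 + [3.1]  # 19 of 20 samples within the limit
    print(meets_fit_criterion(measured))
```

Once the adverb has a number attached, the requirement has an objective pass/fail test, and any disagreement shifts to the thresholds, where it can be settled with the users.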

Validation and Verification
Requirements Review
• Examine the original goals / objectives of the system
• Compare the requirements with the goals / objectives
• Examine the environment of the system
• Examine the information flow and proposed functions
• Assess and document risks, discuss alternatives
• Assess how to test the system: how will the requirements be re-validated as they change?

Thought Exercise
What do you think it takes to be a great tester?