A 50-Gb/s IP Router

Authors: Craig Partridge et al.
IEEE/ACM TON, June 1998
Presenter: Srinivas R. Avasarala, CS Dept., Purdue University

Why a Gigabit Router?
• Transmission link bandwidths are improving at very fast rates
• Network usage is expanding
• Host adapters, operating systems, switches, and multiplexers also need to get faster for improved network performance
• The goal of the work is to show that routers can keep pace with other technologies

Goals of a Multi-Gigabit Router (MGR)
• Enough internal bandwidth to move packets between its interfaces at gigabit rates
• Enough packet processing power to forward several million packets per second (MPPS)
• Conformance to protocol standards
MGR achieves forwarding rates of up to 32 MPPS with 50 Gb/s of full duplex backplane capacity

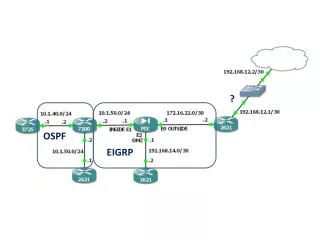

Router Architecture
• Multiple line cards, each with one or more network interfaces
• Forwarding Engine cards (FEs), to make packet forwarding decisions
• High speed switch
• Network processor

[Figure: Router Architecture]

Major Innovations
• Each FE has a complete set of the routing tables
• A switched fabric is used instead of the traditional shared bus
• FEs are on boards distinct from the line cards
• Use of an abstract link layer header
• QoS processing is included in the router

The Forwarding Engine Processor
• A 415-MHz DEC Alpha 21164 processor
• 64-bit, 32-register superscalar RISC processor
• 2 integer logic units: E0, E1
• 2 floating point logic units: FA, FM
• Each cycle, one instruction can be scheduled to each logic unit, so instructions are processed in groups of 4 (quads)

Forwarding Engine’s Caches
• 3 internal caches
  • First-level instruction cache (Icache): 8 kB
  • First-level data cache (Dcache): 8 kB
  • On-chip secondary cache (Scache): 96 kB, used as a cache of recent routes. Can store approximately 12000 routes at 64 bits (8 bytes) per route
• An external tertiary cache (Bcache): 16 MB
  • Divided into two 8 MB banks
  • One bank stores the entire forwarding table
  • The other is updated by the network processor via the PCI bus

Forwarding Engine Hardware
• Headers are placed in a request FIFO queue
• The Alpha reads from the queue head, examines the header, makes a routing decision, and informs the inbound card
• Each header consists of the first 24 or 56 bytes of the packet plus an 8-byte abstract link layer header; the Alpha reads a minimum of 32 bytes
• The Alpha writes out the updated header indicating the outbound interface to use (dispatching info)
• The updated header contains the outbound link layer address and a flow id used for packet scheduling
• Unique approach to ARP

Forwarding Engine Software
• A few hundred lines of code
• 85 instructions in the common case, taking a minimum of 42 cycles
• This gives a peak forwarding rate of 9.8 MPPS: 415 MHz / 42 cycles ≈ 9.8 MPPS
• The fast path of the code has 3 stages, each with about 20-30 instructions (10-15 cycles)
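The peak-rate arithmetic on this slide can be checked in a few lines of Python. This is only a sanity check of the quoted figures; the function name is made up for illustration.

```python
def peak_forwarding_rate_mpps(clock_mhz: float, cycles_per_packet: int) -> float:
    """Millions of packets per second if every packet takes the minimum cycle count."""
    return clock_mhz / cycles_per_packet


if __name__ == "__main__":
    # 415 MHz clock, 42 cycles per packet in the best case (figures from the slide)
    print(f"{peak_forwarding_rate_mpps(415, 42):.2f} MPPS")
```

The result is an upper bound: it assumes every packet hits the route cache and takes the 42-cycle minimum.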

Fast path of the code
• Stage 1
  • Basic error checking to see if the header is from an IP datagram
  • Confirm that packet and header lengths are reasonable
  • Confirm that the IP header has no options
  • Compute the hash offset into the route cache and load the route
  • Start loading the next header

Fast path of the code
• Stage 2
  • Check if the cached route matches the destination of the datagram
  • If not, do an extended lookup in the route table in the Bcache
  • Update the TTL and checksum fields
• Stage 3
  • Put the updated TTL, checksum, and route information into the IP header, along with link layer info from the forwarding table
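A rough Python sketch of the three stages may help make the control flow concrete. It is not the MGR code: the header is modeled as a dictionary, the hash function and cache size are invented, the full-table lookup is an exact-match dictionary rather than a real prefix lookup, and the checksum update is left out.

```python
from dataclasses import dataclass
from typing import Optional

CACHE_SLOTS = 4096  # assumption: cache size chosen only for illustration


@dataclass
class Route:
    dest: int         # destination IPv4 address as a 32-bit integer
    out_iface: int    # outbound interface to dispatch to
    link_addr: bytes  # outbound link layer address


def cache_slot(dest: int) -> int:
    """Hypothetical hash: fold the address and reduce it to a cache slot."""
    return (dest ^ (dest >> 16)) % CACHE_SLOTS


def fast_path(hdr: dict, cache: list, table: dict) -> Optional[dict]:
    """Return the updated header, or None to hand the packet to the slow path."""
    # Stage 1: basic checks, then hash into the route cache.
    if hdr["version"] != 4 or hdr["ihl"] != 5:  # not IPv4, or IP options present
        return None
    if hdr["total_len"] < 20:                   # length is not reasonable
        return None
    slot = cache_slot(hdr["dst"])
    route = cache[slot]

    # Stage 2: confirm the cached route; fall back to the full table on a miss.
    if route is None or route.dest != hdr["dst"]:
        route = table.get(hdr["dst"])  # assumption: exact match stands in for a real lookup
        if route is None:
            return None
        cache[slot] = route
    new_ttl = hdr["ttl"] - 1  # the matching checksum update is omitted in this sketch

    # Stage 3: write the updated fields plus the dispatching information.
    return {**hdr, "ttl": new_ttl, "out_iface": route.out_iface,
            "link_addr": route.link_addr}
```

A caller would create `cache = [None] * CACHE_SLOTS`, fill `table` with `Route` entries keyed by destination, and treat a `None` result as "send to the slow path".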

An exception
• The IP header checksum is not verified, only updated
• The incremental update algorithm is safe because if the checksum is bad, it remains bad
• Reason: checksum verification is expensive, a large penalty to pay for a rare error that can be caught end-to-end
• Verification would require 17 instructions taking a minimum of 14 cycles, increasing forwarding time by 21%
• IPv6 does not include a header checksum either
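The claim that a bad checksum stays bad under incremental update can be illustrated with the standard incremental formula from RFC 1624, HC' = ~(~HC + ~m + m'). The sketch below is illustrative Python, not the router's Alpha code; the sample header words and helper names are chosen for the example.

```python
def ones_complement_sum(words):
    """16-bit one's complement sum with end-around carry."""
    total = 0
    for w in words:
        total += w
        total = (total & 0xFFFF) + (total >> 16)
    return total


def full_checksum(words):
    """Checksum over header words in which the checksum field is zero."""
    return ~ones_complement_sum(words) & 0xFFFF


def incremental_update(old_cksum, old_word, new_word):
    """RFC 1624 update when one 16-bit word changes from old_word to new_word."""
    s = ones_complement_sum([~old_cksum & 0xFFFF, ~old_word & 0xFFFF, new_word])
    return ~s & 0xFFFF


def decrement_ttl(words, cksum):
    """Decrement TTL (high byte of word 4) and update the checksum incrementally."""
    old_word = words[4]
    new_word = old_word - 0x0100
    new_words = words[:4] + [new_word] + words[5:]
    return new_words, incremental_update(cksum, old_word, new_word)


# Sample IPv4 header as 16-bit words, checksum field (word 5) zeroed; TTL is 0x40.
HEADER = [0x4500, 0x0073, 0x0000, 0x4000, 0x4011, 0x0000,
          0xC0A8, 0x0001, 0xC0A8, 0x00C7]

if __name__ == "__main__":
    good = full_checksum(HEADER)
    words, updated = decrement_ttl(HEADER, good)
    print(updated == full_checksum(words))       # a good checksum stays good
    _, corrupted = decrement_ttl(HEADER, good ^ 0x0004)
    print(corrupted == full_checksum(words))     # a bad checksum stays bad
```

Because the update only adds the difference between the old and new word, any error already present in the checksum is carried through unchanged and can still be caught downstream.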

Some datagrams not handled in fast path
• Headers whose destination misses in the cache
• Headers with errors
• Headers with IP options
• Datagrams that require fragmentation
• Multicast datagrams
  • Require multicast routing, which is based on the source address and inbound link as well
  • Require multiple copies of the header to be sent to different line cards

Instruction set
• 27% of the instructions do bit, byte, or word manipulation, because various fields must be extracted from headers
• These instructions can only be executed in E0, resulting in contention (as in checksum verification)
• Floating point instructions account for 12% but have no impact on performance, as they only set SNMP values and can be interleaved
• Loads (6) and stores (4) are kept to a minimum

Issues in forwarding design
• Why not use an ASIC in place of the engine?
  • Since the IP protocol is stable, why not do it?
  • The answer depends on where the router will be deployed: a corporate LAN or an ISP’s backbone?
• How effective is a route cache?
  • A full route lookup is 5 times more expensive than a cache hit, so only modest hit rates are needed
  • Modest hit rates seem to be assured because of packet trains
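The route cache argument can be made concrete with a simple expected-cost model. This is a back-of-the-envelope sketch: it assumes a cache hit costs 1 unit and a full lookup costs 5 units, as the slide's ratio suggests, and ignores everything else in the fast path.

```python
def relative_lookup_cost(hit_rate: float, miss_penalty: float = 5.0) -> float:
    """Average lookup cost in units of one cache hit."""
    return hit_rate * 1.0 + (1.0 - hit_rate) * miss_penalty


if __name__ == "__main__":
    for rate in (0.5, 0.8, 0.9, 0.99):
        print(f"hit rate {rate:.2f}: {relative_lookup_cost(rate):.2f}x a cache hit")
```

Under this model, even a 50% hit rate keeps the average lookup at 3 times the cost of a hit instead of 5, which is why modest hit rates are enough to pay off.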

Abstract link layer header
• Designed to keep the forwarding engine and its code simple

The Switched Bus
• Instead of the conventional shared bus, MGR uses a 15-port point-to-point switch
• A limitation of a point-to-point switch is that it does not support one-to-many transfers
• The switch has 2 interfaces to each function card
  • Data interface: 75 input and 75 output pins, clocked at 51.84 MHz
  • Allocation interface: 2 request pins, 2 inhibit pins, 1 input status pin, and 1 output status pin, clocked at 25.92 MHz

Data transfer in the switch
• An epoch is 16 ticks of the data clock (8 ticks of the allocation clock)
• Up to 15 simultaneous transfers in an epoch
• Each transfer is 1024 bits of data + 176 auxiliary bits for parity and control
• Aggregate data bandwidth is 49.77 Gb/s (58.32 Gb/s including the auxiliary bits), or 3.3 Gb/s per line card
• The 1024 bits are sent as two 64-byte blocks
• Function cards are expected to wait several epochs for another 64-byte block to fill the transfer
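The bandwidth figures on this slide follow directly from the pin count, clock rate, and epoch length. The short Python check below reproduces them; the constant names are made up for the example.

```python
DATA_CLOCK_HZ = 51_840_000  # data interface clock
TICKS_PER_EPOCH = 16        # data clock ticks per epoch
DATA_PINS = 75              # pins per direction on the data interface
PORTS = 15                  # simultaneous transfers per epoch
DATA_BITS = 1024            # payload bits per transfer
AUX_BITS = 176              # parity and control bits per transfer

# 75 pins over 16 ticks carry exactly one transfer, payload plus auxiliary bits.
bits_per_epoch = DATA_PINS * TICKS_PER_EPOCH
epochs_per_second = DATA_CLOCK_HZ / TICKS_PER_EPOCH

per_card_bps = DATA_BITS * epochs_per_second
aggregate_bps = PORTS * per_card_bps
aggregate_with_aux_bps = PORTS * (DATA_BITS + AUX_BITS) * epochs_per_second

if __name__ == "__main__":
    print(bits_per_epoch == DATA_BITS + AUX_BITS)
    print(f"per card:  {per_card_bps / 1e9:.2f} Gb/s")
    print(f"aggregate: {aggregate_bps / 1e9:.2f} Gb/s")
    print(f"with aux:  {aggregate_with_aux_bps / 1e9:.2f} Gb/s")
```

The 1200 bits moved per epoch per port account for both the 1024 data bits and the 176 auxiliary bits, which is where the gap between 49.77 and 58.32 Gb/s comes from.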

Scheduling of the switch
• A minimum of 4 epochs is needed to schedule and complete a transfer, but scheduling is pipelined
  • Epoch 1: source card signals that it has data to send to the destination card
  • Epoch 2: switch allocator schedules the transfer
  • Epoch 3: source and destination cards are notified and told to configure themselves
  • Epoch 4: transfer takes place
• Flow control through inhibit pins

The Switch Allocator card
• Takes connection requests from function cards
• Takes inhibit requests from destination cards
• Computes a transfer configuration for each epoch
• 15 × 15 = 225 possible pairings, with 15! patterns
• Disadvantages of the simple allocator
  • Unfair: there is a preference for low-numbered sources
  • Requires evaluating 225 positions per epoch, which is too fast for an FPGA

[Figure: The Switch Allocator Card]

The Switch Allocator
• Solution to the unfairness problem: random shuffling of sources and destinations
• Solution to the timing problem: parallel evaluation of multiple locations
• Requests from forwarding engines get priority over those from line cards, to avoid header contention on line cards
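The fairness problem and the shuffling fix can be seen in a small Python model. This is an assumed, simplified greedy allocator written for illustration, not the MGR hardware algorithm: it scans sources in order, grants each one its first free requested destination, and optionally shuffles the scan order. It does not model parallel evaluation or forwarding engine priority.

```python
import random


def allocate(requests, inhibited=frozenset(), rng=None, ports=15):
    """Greedy allocation for one epoch.

    requests:  set of (source, destination) pairs
    inhibited: destinations that asked not to receive this epoch
    rng:       optional random.Random; when given, scan order is shuffled
    Returns a dict mapping each granted source to its destination.
    """
    sources = list(range(ports))
    dests = list(range(ports))
    if rng is not None:
        rng.shuffle(sources)
        rng.shuffle(dests)
    busy = set(inhibited)
    config = {}
    for src in sources:
        for dst in dests:
            if (src, dst) in requests and dst not in busy:
                config[src] = dst  # each source sends to at most one destination
                busy.add(dst)      # each destination receives from at most one source
                break
    return config


if __name__ == "__main__":
    contended = {(0, 3), (1, 3), (2, 3)}  # three cards all want destination 3
    print(allocate(contended))            # fixed order: source 0 always wins
    print(allocate(contended, rng=random.Random(1)))
```

Without shuffling, the lowest-numbered source wins every contended epoch; with shuffling, the winner varies from epoch to epoch.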

Line Card Design
• A line card in MGR can have up to 16 interfaces on it, all of the same type
• Total bandwidth of all interfaces on a card must not exceed 2.5 Gb/s. The difference between 2.5 and 3.3 Gb/s allows for transfer of headers to and from forwarding engines
• Can support:
  • 1 OC-48c 2.4 Gb/s SONET interface
  • 4 OC-12c 622 Mb/s SONET interfaces
  • 3 HIPPI 800 Mb/s interfaces
  • 16 100 Mb/s Ethernet or FDDI interfaces
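Each supported configuration can be checked against the 2.5 Gb/s per-card budget. The Python below is a small illustrative check using the nominal rates quoted on the slide; the names are invented for the example.

```python
CARD_LIMIT_MBPS = 2500  # total interface bandwidth allowed per line card

# (interface count, nominal rate in Mb/s) as listed on the slide
CONFIGS = {
    "OC-48c SONET": (1, 2400),
    "OC-12c SONET": (4, 622),
    "HIPPI": (3, 800),
    "Ethernet/FDDI": (16, 100),
}


def card_load_mbps(count: int, rate_mbps: int) -> int:
    """Total bandwidth of all interfaces on one card."""
    return count * rate_mbps


def fits_on_card(count: int, rate_mbps: int) -> bool:
    return card_load_mbps(count, rate_mbps) <= CARD_LIMIT_MBPS


if __name__ == "__main__":
    for name, (count, rate) in CONFIGS.items():
        print(f"{count:2d} x {name}: {card_load_mbps(count, rate)} Mb/s, "
              f"fits={fits_on_card(count, rate)}")
```

This also shows why the HIPPI limit is 3 interfaces: a fourth would push the card to 3200 Mb/s, above the budget.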

Line card: Inbound packet processing
• Assigns a packet id and breaks the data into a chain of 64-byte pages
• The first page is sent to the FE to get routing info
• 2 complications
  • Multicasting: the FE sends multiple copies of updated first pages for a single packet
  • ATM: cells are 53 bytes, so segmentation and reassembly (SAR) is needed. OAM cells between interfaces on the same card must be sent directly in a single page
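Breaking a packet into a chain of 64-byte pages can be sketched as follows. The tuple layout (packet id, sequence number, payload) is an assumption made for illustration; the slides do not describe the actual page format.

```python
PAGE_BYTES = 64  # page size used by the line cards and the switch


def paginate(packet_id: int, data: bytes) -> list:
    """Split a packet into (packet_id, sequence, payload) pages of up to 64 bytes."""
    return [
        (packet_id, seq, data[off:off + PAGE_BYTES])
        for seq, off in enumerate(range(0, len(data), PAGE_BYTES))
    ]


if __name__ == "__main__":
    pages = paginate(7, bytes(150))
    first_page = pages[0]  # this is the page that would go to the forwarding engine
    print(len(pages), [len(p[2]) for p in pages])
```

Only the first page carries the IP header, which is why it alone needs to visit the forwarding engine.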

Line card: Outbound packet processing
• Receives pages of a packet from the switch
• Assembles them in a list
• Creates a packet record pointing to the list
• Passes the packet record to the QoS processor (an FPGA), which does scheduling based on flow ids
• Any link layer scheduling is done separately later

Network processor, Routing tables
• 233-MHz Alpha 21064 processor
• Access to line cards through a PCI bridge
• Runs NetBSD 1.1 UNIX
• All routing protocols run on the network processor
• FEs have only small tables with minimal info
• The network processor periodically downloads new tables into the FEs
• FEs then switch memory banks and invalidate the route cache

Conclusions
• Makes 2 important contributions
  • Emphasizes examining every header, which improves robustness and security
  • Shows it is feasible to build routers that can serve in emerging high-speed networks
• In all, an excellent paper providing complete and intricate details about high-speed router design