CRT Development

CRT Development: Item specifications and analysis

Considerations for CRTs
• Unlike NRTs, individual CRT items are not ‘expendable’ because they have been written to assess specific areas of interest
• “If a criterion-referenced test doesn’t unambiguously describe just what it’s measuring, it offers no advantage over norm-referenced measures.” (Popham, 1984, p. 29)

Popham, W. J. (1984). Specifying the domain of content or behaviors. In R. A. Berk (Ed.), A guide to criterion-referenced test construction (pp. 29-48). Baltimore, MD: The Johns Hopkins University Press.

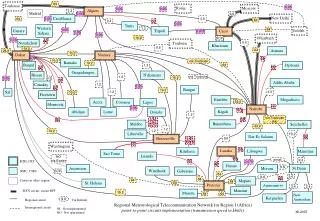

CRT score interpretation: ‘Good’ CRT vs. ‘Bad’ CRT (Popham, 1984, p. 31)

Test Specifications
• ‘Blueprints’ for creating test items
• Ensure that item content matches the objectives (or criteria) to be assessed
• Though usually associated with CRTs, can also be useful in NRT development (Davidson & Lynch, 2002)
• Recent criticism: Many CRT specs (and resulting tests) are too tied to specific item types and lead to ‘narrow’ learning

Specification components
• General Description (GD) – brief statement of the focus of the assessment
• Prompt Attributes (PA) – details what will be given to the test taker
• Response Attributes (RA) – describes what should happen when the test taker responds to the prompt
• Sample Item (SI)
• Specification Supplement (SS) – other useful information regarding the item or scoring
(Davidson & Lynch, 2002)

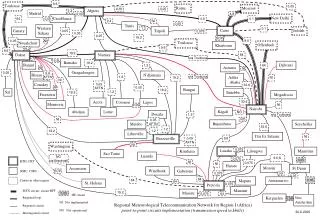

Item – specification congruence (Brown, 1996, p. 78)

CRT Statistical Item Analysis
• Based on criterion groups
• To select groups, ask: Who should be able to master the objectives and who should not?
• Logical group comparisons
   • Pre-instruction / post-instruction
   • Uninstructed / instructed
   • Contrasting groups
• The interpretation of the analysis will depend in part on the groups chosen

Pre-instruction / post-instruction
Advantages
• Individual as well as group gains can be measured
• Can give diagnostic information about progress and program
Disadvantages
• Requires post-test
• Potential for test effect

Berk, R. A. (1984). Conducting the item analysis. In R. A. Berk (Ed.), A guide to criterion-referenced test construction (pp. 97-143). Baltimore, MD: The Johns Hopkins University Press.

Uninstructed / instructed
Advantages
• Analysis can be conducted at one point in time
• Test can be used immediately for mastery / non-mastery decisions
Disadvantages
• Group identification might be difficult
• Group performance might be affected by a variety of factors (e.g., age, background)

Contrasting groups
Advantages
• Does not equate instruction with mastery
• Sample of masters is proportional to population
Disadvantages
• Defining ‘mastery’ can be difficult
• Individually creating each group is time consuming
• Extraneous variables

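Item discrimination for groups
• Criterion groups: uninstructed (non-masters) vs. instructed (masters)
• DI = IF (‘master’) – IF (‘non-master’), where IF is item facility (the proportion of a group answering the item correctly)
• Sometimes called DIFF (difference score)
(Berk, 1984, p. 194)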
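The difference index can be illustrated with a minimal sketch. It assumes each response is scored 1 (correct) or 0 (incorrect); the function names and the response data are hypothetical and only meant to show the arithmetic.

```python
from typing import Sequence


def item_facility(responses: Sequence[int]) -> float:
    """Proportion of a group answering the item correctly (1 = correct, 0 = incorrect)."""
    if not responses:
        raise ValueError("need at least one response")
    return sum(responses) / len(responses)


def difference_index(masters: Sequence[int], non_masters: Sequence[int]) -> float:
    """DI = IF(masters) - IF(non-masters) for a single item."""
    return item_facility(masters) - item_facility(non_masters)


# Hypothetical responses to one item from two criterion groups.
masters = [1, 1, 1, 0, 1, 1, 1, 1, 0, 1]      # 8 of 10 correct, IF = 0.8
non_masters = [0, 1, 0, 0, 1, 0, 0, 1, 0, 0]  # 3 of 10 correct, IF = 0.3

di = difference_index(masters, non_masters)
print(f"DI = {di:.2f}")
```

A positive DI means the item was answered correctly more often by the group expected to have mastered the objective; a value near zero or below suggests the item does not separate the two groups.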

Item analysis interpretation (Berk, 1984, p. 125)

Distractor efficiency analysis
• Each distractor should be selected by more students in the uninstructed (or incompetent) group than in the instructed (or competent) group.
• At least a few uninstructed (or incompetent) students (5–10%) should choose each distractor.
• No distractor should receive as many responses by the instructed (or competent) group as the correct answer.
(Berk, 1984, p. 127)
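The three distractor guidelines can be checked mechanically, as in the sketch below. It makes two assumptions that are not in the source: groups are compared by proportion rather than raw count (so unequal group sizes do not distort the first check), and the lower bound of the 5–10% range is used as the default threshold. The function name, option labels, and response data are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Sequence


def check_distractors(
    uninstructed: Sequence[str],
    instructed: Sequence[str],
    key: str,
    options: Sequence[str],
    min_share: float = 0.05,  # assumed threshold: lower end of the 5-10% range
) -> Dict[str, List[str]]:
    """Return, for each distractor that breaks a guideline, the list of problems found."""
    if not uninstructed or not instructed:
        raise ValueError("both groups need at least one response")
    un, ins = Counter(uninstructed), Counter(instructed)
    problems: Dict[str, List[str]] = {}
    for opt in options:
        if opt == key:
            continue  # only distractors are checked
        p_un = un[opt] / len(uninstructed)
        p_in = ins[opt] / len(instructed)
        flags = []
        if p_un <= p_in:
            flags.append("not chosen more often by the uninstructed group")
        if p_un < min_share:
            flags.append("chosen by too few uninstructed students")
        if ins[opt] >= ins[key]:
            flags.append("as popular as the key in the instructed group")
        if flags:
            problems[opt] = flags
    return problems


# Hypothetical responses to one four-option item whose key is "B".
uninstructed = ["A"] * 6 + ["B"] * 6 + ["C"] * 8
instructed = ["A"] * 2 + ["B"] * 15 + ["C"] * 1 + ["D"] * 2

problems = check_distractors(uninstructed, instructed, key="B", options="ABCD")
for option, flags in problems.items():
    print(option, flags)
```

In this made-up data only option D is flagged: no uninstructed student chose it, yet some instructed students did, which suggests it should be revised.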

The B-index
• Item discrimination index calculated from a single test administration
• The criterion groups are defined by whether test takers pass or fail the test
• Failing is defined as falling below a predetermined cut score
• The validity of the cut score decision will affect the validity of the B-index
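As the B-index is commonly defined, it is the item facility of the test takers who passed minus the item facility of those who failed. The sketch below assumes that definition, treats a total score at or above the cut score as passing, and uses hypothetical names and data.

```python
from typing import Sequence


def b_index(item_scores: Sequence[int], total_scores: Sequence[float], cut_score: float) -> float:
    """B = IF(passers) - IF(failers) for one item, from a single administration.

    item_scores: 1/0 score on the item for each test taker.
    total_scores: each test taker's total test score, in the same order.
    """
    if len(item_scores) != len(total_scores):
        raise ValueError("item_scores and total_scores must be the same length")
    passed = [i for i, t in zip(item_scores, total_scores) if t >= cut_score]
    failed = [i for i, t in zip(item_scores, total_scores) if t < cut_score]
    if not passed or not failed:
        raise ValueError("the cut score must leave at least one passer and one failer")
    return sum(passed) / len(passed) - sum(failed) / len(failed)


# Hypothetical data: eight test takers, a 10-point test, cut score of 7.
item_scores = [1, 1, 1, 0, 1, 0, 0, 1]
total_scores = [9, 8, 7, 7, 6, 5, 4, 3]

b = b_index(item_scores, total_scores, cut_score=7)
print(f"B-index = {b:.2f}")
```

Because the groups come from the cut score itself, moving the cut score changes who counts as a passer and therefore changes the index, which is why the validity of the cut score matters.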