Evaluation-Function Based Monte-Carlo LOA

Evaluation-Function Based Monte-Carlo LOA. Mark H.M. Winands and Yngvi Björnsson. Outline. Background MC-LOA Evaluators and Simulations Experiments Conclusions. Introduction. Monte-Carlo Tree Search (Kocsis et al., 2006, Coulom 2007) Revolution in Go

Evaluation-Function Based Monte-Carlo LOA

E N D

Presentation Transcript

Evaluation-Function Based Monte-Carlo LOA Mark H.M. Winands and Yngvi Björnsson

Outline • Background • MC-LOA • Evaluators and Simulations • Experiments • Conclusions

Introduction • Monte-Carlo Tree Search (Kocsis et al., 2006, Coulom 2007) • Revolution in Go • Applied in Amazons, Clobber, Havannah, Hex, GGP etc • Here we focus on the sudden-death game Lines of Action (LOA)

Test Domain: Lines of Action (LOA) • Two-person zero-sum chess-like connection game • A move takes place in a straight line, exactly as many squares as there are pieces of either colour anywhere along the line of movement • Goal is to group your pieces into one connected unit

Computer LOA • 1st LOA program, Stanford AI laboratory 1975 • Since 2000, it is also played at the Computer Olympiad • Several programs: YL (Björnnson), Mona (Billings), Bing (Helmstetter), T-T (JAIST Lab), Lola (Coulom) • All programs use αβ search + evaluation function • αβ search: null-move, multi-cut, RPS • Evaluation functions are quite advanced

Play-out Monte-Carlo Tree Search (MCTS) • A best-first search guided by the results of Monte-Carlo simulations • We will discuss the steps for MC-LOA

Selection Step • Traversal from the root until we reach a position which is not part of the tree • Selection Strategy: Controls exploitation and exploration balance • UCT (Kocsis, 2006) and Progressive BIAS (PB) (Chaslot et al. 2008) • Selects the child k of the node p according: • UCT: vi is the value of the node i, niis the visit count of i, and np is the visit count of p. C is a coefficient • PB: Pmc is the transition probability of a move category mc, W is a constant UCT PB

Progressive Bias: Transition Probabilities • Transition Probabilities originally used in Realization Probability Search (Tsuruoka, 2002) • Transition Probability: Probability that a move belonging to a category mc will be played • Statistics are retrieved from self-play

Progressive Bias: Move Categories • Move categories in LOA: • Captures or non-captures • Origin and destination of the move’s from and to squares • Number of squares traveled from- or towards to the centre-of-mass • 277 move categories

Simulation / Play-out • If the node has been visited fewer times than a threshold T, the next move is selected pseudo-randomly according to the simulation strategy • Their move category weights • At a leaf node: • All moves are generated and checked for a mate in one • Play-out step: outside the tree, self-play

Backpropagation Step • The result of a simulated game k is back-propagated from the leaf node L, through the previously traversed path • (+1 win, 0 draw, -1 loss) • The value v of a node is computed by taking the average of the results of all simulated games made through this node

MCTS – Solver: Backpropagation • MCTS-Solver (Winands et al. 2008) • For terminal positions assign the values ∞ (Win) or -∞ (Loss) • The internal nodes are assigned in a minimax way

Simulation Strategies • MCTS does not require a positional evaluation function, but is based on the results of simulations • Not good enough for LOA • How to use a positional evaluation function? • Four different strategies • Evaluation Cut-off • Corrective • Greedy • Mixed

Evaluation function: (MIA 2006) • Concentration • Centralisation • Centre-of-mass position • Quads • Mobility • Walls • Connectedness • Uniformity • Variance

Evaluation Cut-Off • Stops a simulated game before a terminal state is reached if, according to a heuristic knowledge, the game is judged to be effectively over • Starting at second ply in the play-out, every three ply the evaluation function is called • If the value exceeds a certain threshold, a win is scored (700) • If the value is below a certain threshold, a loss is scored (-700) • Function can return a quite trustworthy score, more so than even elaborate simulation strategies • The game can thus be safely terminated both earlier and with a more accurate score than if continuing the simulation

Corrective • Minimizing the risk of choosing an obviously bad move • First, we evaluate the position for which we are choosing a move • Next, we generate the moves and scan them to get their weights • If the move leads to a successor which has a lower evaluation score than its parent, we set the weight of a move to a preset minimum value (close to zero) • If a move leads to a win, it will be immediately played

Greedy • The move leading to the position with the highest evaluation score is selected • We evaluate only moves that have a good potential for being the best • The k-best moves according to their move category weights are fully evaluated (k = 7) • When a move leads to a position with an evaluation over a preset threshold, the play-out is stopped and scored as a win • The remaining moves — which are not heuristically evaluated — are checked for a mate • Evaluation function has small a random factor

Mixed • Greedy strategy could be too deterministic • The Mixed strategy combines the Corrective strategy and the Greedy strategy • The Corrective strategy is used in the selection step, i.e., at tree nodes where a simulation strategy is needed (i.e., n < T ) as well as in the first position entered in the play-out step • For the remainder of the play-out the Greedy strategy is applied

Experiments: MIA • Test the strength against a non-MC program: MIA • Won the 8th, 9th and 11th Computer Olympiad • αβ depth-first iterative-deepening search in the PVS frame work • Enhancements: Transposition Table, ETC, killer moves, relative history heuristic etc. • Variable depth: Multi-cut, (adaptive) null move, Enhanced realization probability search, quiescence search

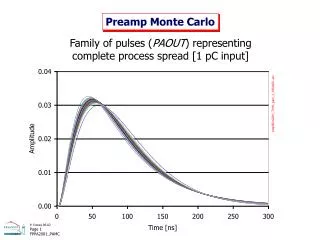

Parameter Tuning: Evaluation Cut-off • Tune the bounds of Evaluation Cut-off Strategy • 3 programs: No-Bound, MIA III and MIA 4.5 • 1000 game results, 1 sec per move

Round-Robin Tournament Results • 1000 Games • 1 sec per move

MC-LOA vs MIA • Mixed Strategy • 5 sec a move • Root-Parallelization

MC-LOA vs MIA • No parallel version of MIA • Two-threaded MC-LOA program competed against MIA, where MIA was given 50% more time • Simulating a search-efficiency increase of 50% if MIA were to be given two processors • A 1,000 game match resulted in a 52% winning percentage for MC-LOA

Conclusions (1) • Applying an evaluation function to stop simulations, increases the playing strength • Mixed strategy of playing greedily in the play-out phase, but exploring more in the earlier selection phase, works the best

Conclusions (2) • MCTS using simple root-parallelization outperforms MIA • MCTS programs are competitive with αβ-based programs in the game LOA