Monte Carlo Simulation

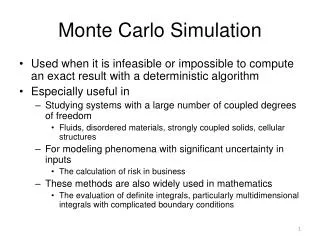

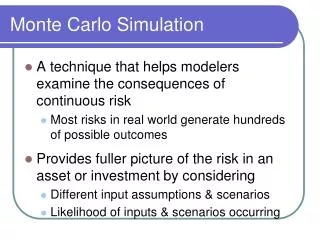

Monte Carlo Simulation. Used when it is infeasible or impossible to compute an exact result with a deterministic algorithm Especially useful in Studying systems with a large number of coupled degrees of freedom Fluids, disordered materials, strongly coupled solids, cellular structures

Monte Carlo Simulation

E N D

Presentation Transcript

Monte Carlo Simulation • Used when it is infeasible or impossible to compute an exact result with a deterministic algorithm • Especially useful in • Studying systems with a large number of coupled degrees of freedom • Fluids, disordered materials, strongly coupled solids, cellular structures • For modeling phenomena with significant uncertainty in inputs • The calculation of risk in business • These methods are also widely used in mathematics • The evaluation of definite integrals, particularly multidimensional integrals with complicated boundary conditions

Monte Carlo Simulation • No single approach, multitude of different methods • Usually follows pattern • Define a domain of possible inputs • Generate inputs randomly from the domain • Perform a deterministic computation using the inputs • Aggregate the results of the individual computations into the final result • Example: calculate Pi

Monte Carlo: Algorithm for Pi • The value of PI can be calculated in a number of ways. Consider the following method of approximating PI Inscribe a circle in a square • Randomly generate points in the square • Determine the number of points in the square that are also in the circle • Let r be the number of points in the circle divided by the number of points in the square • PI ~ 4 r • Note that the more points generated, the better the approximation • Algorithm : npoints = 10000 circle_count = 0 do j = 1,npoints generate 2 random numbers between 0 and 1 xcoordinate = random1 ; ycoordinate = random2 if (xcoordinate, ycoordinate) inside circle then circle_count = circle_count + 1 end do PI = 4.0*circle_count/npoints

OpenMP Pi Calculation Initialize variables Initialize OpenMP parallel environment N WorkerThreads Master Thread Generate random X,Y Generate random X,Y Generate random X,Y Calculate Z=X^2+Y^2 Calculate Z =X^2+Y^2 Calculate Z =X^2+Y^2 N N If point lies within the circle If point lies within the circle If point lies within the circle N Y Y Y Count ++ Count ++ Count ++ Reduction ∑ Calculate PI Print value of pi

OpenMP Calculating Pi #include <omp.h> #include <stdlib.h> #include <stdio.h> #include <time.h> #define SEED 42 main(intargc, char* argv) { int niter=0; double x,y; inti,tid,count=0; /* # of points in the 1st quadrant of unit circle */ double z; double pi; time_trawtime; struct tm * timeinfo; printf("Enter the number of iterations used to estimate pi: "); scanf("%d",&niter); time ( &rawtime ); timeinfo = localtime ( &rawtime ); Seed for generating random number http://www.umsl.edu/~siegelj/cs4790/openmp/pimonti_omp.c.HTML

OpenMP Calculating Pi printf ( "The current date/time is: %s", asctime (timeinfo) ); /* initialize random numbers */ srand(SEED); #pragma omp parallel for private(x,y,z,tid) reduction(+:count) for ( i=0; i<niter; i++) { x = (double)rand()/RAND_MAX; y = (double)rand()/RAND_MAX; z = (x*x+y*y); if (z<=1) count++; if (i==(niter/6)-1) { tid = omp_get_thread_num(); printf(" thread %i just did iteration %i the count is %i\n",tid,i,count); } if (i==(niter/3)-1) { tid = omp_get_thread_num(); printf(" thread %i just did iteration %i the count is %i\n",tid,i,count); } if (i==(niter/2)-1) { tid = omp_get_thread_num(); printf(" thread %i just did iteration %i the count is %i\n",tid,i,count); } Initialize random number generator; srand is used to seed the random number generated by rand() Initialize OpenMP parallel for with reduction(∑) Randomly generate x,y points Calculate x^2+y^2 and check if it lies within the circle; if yes then increment count http://www.umsl.edu/~siegelj/cs4790/openmp/pimonti_omp.c.HTML

Calculating Pi if (i==(2*niter/3)-1) { tid = omp_get_thread_num(); printf(" thread %i just did iteration %i the count is %i\n",tid,i,count); } if (i==(5*niter/6)-1) { tid = omp_get_thread_num(); printf(" thread %i just did iteration %i the count is %i\n",tid,i,count); } if (i==niter-1) { tid = omp_get_thread_num(); printf(" thread %i just did iteration %i the count is %i\n",tid,i,count); } } time ( &rawtime ); timeinfo = localtime ( &rawtime ); printf ( "The current date/time is: %s", asctime (timeinfo) ); printf(" the total count is %i\n",count); pi=(double)count/niter*4; printf("# of trials= %d , estimate of pi is %g \n",niter,pi); return 0; } Calculate PI based on the aggregate count of the points that lie within the circle http://www.umsl.edu/~siegelj/cs4790/openmp/pimonti_omp.c.HTML

Demo : OpenMP Pi [LSU760000@n00 PA1]$ ./omcpi Enter the number of iterations used to estimate pi: 100000 The current date/time is: Mon Mar 15 23:36:25 2010 thread 1 just did iteration 16665 the count is 3262 thread 5 just did iteration 66665 the count is 3263 thread 2 just did iteration 33332 the count is 6564 thread 6 just did iteration 83332 the count is 6596 thread 3 just did iteration 49999 the count is 9769 thread 7 just did iteration 99999 the count is 9810 The current date/time is: Mon Mar 15 23:36:25 2010 the total count is 78534 # of trials= 100000 , estimate of pi is 3.14136

Creating Custom Communicators Communicators define groups and the access patterns among them Default communicator is MPI_COMM_WORLD Some algorithms demand more sophisticated control of communications to take advantage of reduction operators MPI permits creation of custom communicators MPI_Comm_create

MPI Monte Carlo Pi Computation Server Master Worker Initialize MPI Environment Initialize MPI Environment Initialize MPI Environment Broadcast Error Bound Receive Error Bound Send Request to Server Receive Request Send Request to Server Receive Random Array Receive Random Array Compute Random Array Perform Computations Perform Computations Propagate Number of Points (Allreduce) Send Array to Requestor Propagate Number of Points (Allreduce) Output Partial Result Last Request? N Stop Condition Satisfied? Stop Condition Satisfied? N N Y Y Y Finalize MPI Print Statistics Finalize MPI Finalize MPI

Monte Carlo : MPI - Pi (source code) #include <stdio.h> #include <math.h> #include "mpi.h“ #define CHUNKSIZE 1000 #define INT_MAX 1000000000 #define REQUEST 1 #define REPLY 2 int main( int argc, char *argv[] ) { int iter; int in, out, i, iters, max, ix, iy, ranks[1], done, temp; double x, y, Pi, error, epsilon; int numprocs, myid, server, totalin, totalout, workerid; int rands[CHUNKSIZE], request; MPI_Comm world, workers; MPI_Group world_group, worker_group; MPI_Status status; MPI_Init(&argc,&argv); world = MPI_COMM_WORLD; MPI_Comm_size(world,&numprocs); MPI_Comm_rank(world,&myid); Initialize MPI environment

Monte Carlo : MPI - Pi (source code) server = numprocs-1; /* last proc is server */ if (myid == 0) sscanf( argv[1], "%lf", &epsilon ); MPI_Bcast( &epsilon, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD ); MPI_Comm_group( world, &world_group ); ranks[0] = server; MPI_Group_excl( world_group, 1, ranks, &worker_group ); MPI_Comm_create( world, worker_group, &workers ); MPI_Group_free(&worker_group); if (myid == server) { do { MPI_Recv(&request, 1, MPI_INT, MPI_ANY_SOURCE, REQUEST, world, &status); if (request) { for (i = 0; i < CHUNKSIZE; ) { rands[i] = random(); if (rands[i] <= INT_MAX) i++; }/* Send random number array*/ MPI_Send(rands, CHUNKSIZE, MPI_INT, status.MPI_SOURCE, REPLY, world); } }while( request>0 ); } else { /* Begin Worker Block */ request = 1; done = in = out = 0; max = INT_MAX; /* max int, for normalization */ MPI_Send( &request, 1, MPI_INT, server, REQUEST, world ); MPI_Comm_rank( workers, &workerid ); iter = 0; Broadcast Error Bounds: epsilon Create a custom communicator Server process : 1. Receives request to generate a random ,2. Computes the random number array, 3. Send array to requestor Worker process : Request the server to generate a random number array

Monte Carlo : MPI - Pi (source code) while (!done) { iter++; request = 1; /* Recv. random array from server*/ MPI_Recv( rands, CHUNKSIZE, MPI_INT, server, REPLY, world, &status ); for (i=0; i<CHUNKSIZE-1; ) { x = (((double) rands[i++])/max) * 2 - 1; y = (((double) rands[i++])/max) * 2 - 1; if (x*x + y*y < 1.0) in++; else out++; } MPI_Allreduce(&in, &totalin, 1, MPI_INT, MPI_SUM, workers); MPI_Allreduce(&out, &totalout, 1, MPI_INT, MPI_SUM, workers); Pi = (4.0*totalin)/(totalin + totalout); error = fabs( Pi-3.141592653589793238462643); done = (error < epsilon || (totalin+totalout) > 1000000); request = (done) ? 0 : 1; if (myid == 0) { /* If “Master” : Print current value of PI */ printf( "\rpi = %23.20f", Pi ); MPI_Send( &request, 1, MPI_INT, server, REQUEST, world ); } else { /* If “Worker” : Request new array if not finished */ if (request) MPI_Send(&request, 1, MPI_INT, server, REQUEST, world); } } MPI_Comm_free(&workers); } Worker : Receive random number array from the Server Worker: For each pair of x,y in the random number array, calculate the coordinates Determine if the number is inside or out of the circle Compute the value of pi and Check if error is within threshhold Print current value of PI and request for more work

Monte Carlo : MPI - Pi (source code) if (myid == 0) { /* If “Master” : Print Results */ printf( "\npoints: %d\nin: %d, out: %d, <ret> to exit\n", totalin+totalout, totalin, totalout ); getchar(); } MPI_Finalize(); } Print the final value of PI

Demo : MPI Monte Carlo, Pi [LSU760000@n00 PA1]$ mpiexec-np 4 ./monte 1e-7 pi = 3.14159265262020515053 points: 12957000 in: 10176404, out: 2780596