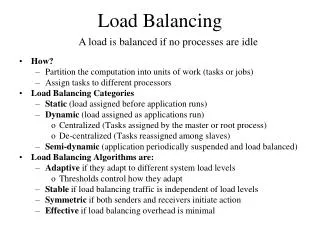

Load-Balancing

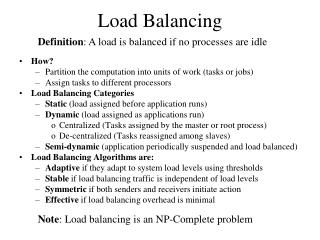

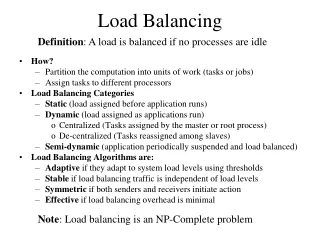

Load-Balancing. Load-Balancing. What is load-balancing? Dividing up the total work between processes when running codes on a parallel machine Load-balancing constraints Minimize interprocess communication Also called: partitioning, mesh partitioning, (domain decomposition).

Load-Balancing

E N D

Presentation Transcript

Load-Balancing High Performance Computing 1

Load-Balancing • What is load-balancing? • Dividing up the total work between processes when running codes on a parallel machine • Load-balancing constraints • Minimize interprocess communication • Also called: • partitioning, mesh partitioning, (domain decomposition) High Performance Computing 1

Know your data and memory • Memory is organized by banks. Between access to any bank, there is a latency period. • Matrix entries are stored column-wise in FORTRAN. High Performance Computing 1

Matrix addressing in FORTRAN is addressed High Performance Computing 1

Addressing Memory • For illustration purposes, lets imagine 8 banks [128 or 256 common on chips today], with bank busy time (bbt) of 8 cycles between accesses. Thus we have: data a13 a23 a33 a43 a14 a24 a34 a44 data a11 a21 a31 a41 a12 a22 a32 a42 bank 1 2 3 4 5 6 7 8 High Performance Computing 1

Addressing Memory • If we access data column-wise, we proceed through each bank in order. By the time we call a13, we (just) avoid bbt. • On the other hand, if we access data row-wise, we get a11 in bank 1, a12 in bank 5, a13 in bank 1 again - so instead of access on clock cycle 3, we have to wait until cycle 9. Then we get a14 in bank 5 again on cycle 10, etc. High Performance Computing 1

Indirect addressing • If addressing is indirect we may wind up jumping all over, and suffer performance hits because of it. High Performance Computing 1

Shared Memory • Bank conflicts depend on granularity of memory • If N memory refs per cycle, p processors, memory with b cycles bbt, need p*N*b memory banks to see uninterrupted access of data • With B banks, granularity is g = B/(p*N*b) High Performance Computing 1

Moral • Separate selection of data from its processing • Each subtask requires its own data structure. Be prepared to change structures between tasks High Performance Computing 1

Load-balancing nomenclature Objects get distributed among different processes Edges represent information that need to be shared between objects Object Edge High Performance Computing 1

Partitioning • Divides up the work • 5 & 4 objects assigned to processes • Creates “edge-cuts” • Necessary communications between processes High Performance Computing 1

Work/Edge Weights • Need a good measure of what the expected work may be • Molecular dynamics: • number of molecules • regions • FEM/finite difference/finite volume, etc: • Degrees of freedom • Cells/elements • If edge weights are used, also need a good measure on how strongly objects are coupled to each other High Performance Computing 1

Static/Dynamic Load-Balancing • Static load-balancing • Done as a “preprocessing” step before the actual calculation • If the objects and edges don’t change very much or at all, can do static load-balancing • Dynamic load-balancing • Done during the calculation • Significant changes in the objects and/or edges High Performance Computing 1

Dynamic Load-Balancing Example • h-adapted mesh • Workload is changing as the computation proceeds • Calculate a new partition • Need to migrate the elements to their assigned process High Performance Computing 1

Static vs. Dynamic Load Balancing • Static partitioning insufficient for many applications • Adaptive mesh refinement • Multi-phase/Multi-physics computations • Particle simulations • Crash simulations • Parallel mesh generation • Heterogeneous computers • Need dynamic load balancing High Performance Computing 1

Dynamic Load-Balancing Constraints • Minimize load-balancing time • Memory constraints • Minimize data migration -- incremental partitions • Small changes in the computation should result in small changes in the partitioning • Calculating new partition and data migration should take less time than the amount of time saved by performing computations on new grid • Done in parallel High Performance Computing 1

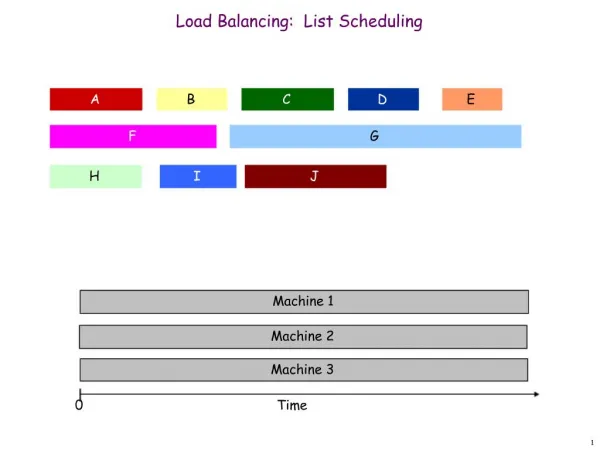

Methods of Load-Balancing • Geometric • Based on geometric location • Faster load-balancing time with medium quality results • Graph-based • Create a graph to represent the objects and their connections • Slower load-balancing time but high quality results • Incremental methods • Use graph representation and “shuffle” around objects High Performance Computing 1

Choosing a Load-Balancing Algorithm/Method No algorithm/method is appropriate for all applications! • Graph load-balancing algorithms for: • Static load-balancing • Computations where computation to load-balancing time ratio is high • Implicit schemes with a linear and non-linear solution scheme High Performance Computing 1

Choosing a Load-Balancing Algorithm/Method • Geometric load-balancing algorithms for: • Computations where computation to load-balancing time ratio is low • For explicit time stepping calculations with many time steps and varying workload (MD, FEM crash simulations, etc.) • Problems with many load-balancing objects High Performance Computing 1

Geometric Load-Balancing • Based on the objects’ coordinates • Want a unique coordinate associated with an object • Node coordinates, element centroid, molecule coordinate/centroid, etc. • Partition “space” which results in a partition of the load-balancing objects • Edge cuts are usually not explicitly dealt with High Performance Computing 1

Geometric Load-Balancing Assumptions • Objects that are close will likely need to share information • Want compact partitions • High volume to surface area or high area to perimeter length ratios • Coordinate information • Bounded domain High Performance Computing 1

Geometric Load-Balancing Algorithms • Recursive Coordinate Bisection (RCB) • Berger & Bokhari • Recursive Inertial Bisection (RIB) • Taylor & Nour-Omid • Space Filling Curves (SFC) • Warren & Salmon, Ou, Ranka, & Fox, Baden & Pilkington • Octree Partitioning/Refinement-tree Partitioning • Loy & Flaherty, Mitchell High Performance Computing 1

Recursive Coordinate Bisection • Choose an axis for the cut • Find the proper location of the cut • Group objects together according to location relative to cut • If more partitions are needed, go to step 1 High Performance Computing 1

Recursive Inertial Bisection • Choose a direction for the cut • Find the proper location of the cut • Group objects together according to location relative to cut • If more partitions are needed, go to step 1 High Performance Computing 1

Space Filling Curves A Space Filling Curve is a 1-dimensional curve which passes through every point in an n-dimensional domain High Performance Computing 1

Load-Balancing with Space Filling Curves • The SFC gives a 1-dimensional ordering of objects located in an n-dimensional domain • Easier to work with objects in 1 dimension than in n dimensions • Algorithm: • Sort objects by their location on the SFC • Calculate cuts along the SFC High Performance Computing 1

Tree based algorithms for applications with multiple levels of data, simulation accuracy, etc. Tree is usually built from specific computational schemes Tightly coupled with the simulation Octree Partitioning/Refinement-Tree Partitioning High Performance Computing 1

Comparisons of RCB, RIB, and SFC • RCB and RIB usually give slightly better partitions than SFC • SFC is usually a little faster • SFC is a little better for incremental partitions • RIB can be real unstable for incremental partitions High Performance Computing 1

Load-Balancing Libraries • There are many load-balancing libraries downloadable from the web • Mostly graph partitioning libraries • Static: Chaco, Metis, Party, Scotch • Dynamic: ParMetis, DRAMA, Jostle, Zoltan • Zoltan (www.cs.sandia.gov/Zoltan) • Dynamic load-balancing library with: • SFC, RCB, RIB, Octree, ParMetis, Jostle • Same interface to all load-balancing algorithms High Performance Computing 1

Methods to Avoid Communication • Avoiding load-balancing • Load-balancing not needed every time the workload and/or edge connectivity changes • Ghost cells • Predictive load-balancing High Performance Computing 1

Accessing Information on Other Processors • Need communication between processors • Use ‘ghost’ cells – need to maintain consistency of data in ghost cells High Performance Computing 1

Ghost Cells • Copies of cells assigned to other processors • Make needed information available • No solution values are computed at the ghost cells • Ghost cell information needs to be updated whenever necessary • Ghost cells need to be calculated dynamically because of changing mesh and dynamic load-balancing High Performance Computing 1

Predictive Load-Balancing • Predict the workload and/or edge connectivity and load-balance with that information • Assumes that you can predict the workload and/or edge connectivity • Still need to perform communication but reduces data migration High Performance Computing 1

Predictive Load-Balancing • Refine then load-balance – 4 objects migrated • Predictive load-balance then refine – 1 object migrated High Performance Computing 1