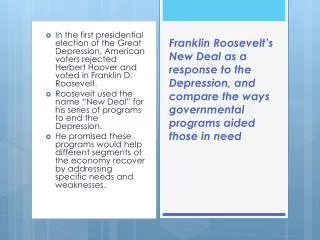

Putting Pointer Analysis to Work

Putting Pointer Analysis to Work. Rakesh Ghiya and Laurie J. Hendren. Presented by Shey Liggett & Jason Bartkowiak. Introduction. Paper addresses the problem of how to apply pointer analysis to a wide variety of compiler applications.


Putting Pointer Analysis to Work
Rakesh Ghiya and Laurie J. Hendren
Presented by Shey Liggett & Jason Bartkowiak

Introduction
• The paper addresses the problem of how to apply pointer analysis to a wide variety of compiler applications.
• It shows how to put points-to analysis and connection analysis to work.
• Compute read/write sets for indirections:
  • stack-directed pointers: points-to information
  • heap-directed pointers: connection analysis + anchor handles
• Based on the read/write sets, extend traditional optimizations.

Stack vs. Heap Directed Pointers
• Stack-directed pointers: pointers to stack objects.
  • Objects on the stack have variable names: int t, *pt1; pt1 = &t;
• Heap-directed pointers: pointers to heap objects.
  • Dynamically allocated objects have no names: int *pt2; pt2 = malloc(sizeof(int));
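The distinction above can be sketched in a few lines of C. This is only an illustration: the function name `stack_and_heap_demo` and the values written are assumptions made for the example, not something taken from the paper or the slides.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical demo: writes through one stack-directed and one
   heap-directed pointer, then returns the sum of both targets. */
int stack_and_heap_demo(void) {
    int t = 0;                        /* named stack object */
    int *pt1 = &t;                    /* stack-directed: the target is "t" */
    int *pt2 = malloc(sizeof *pt2);   /* heap-directed: the target has no name */
    if (pt2 == NULL) {
        return -1;                    /* allocation failed */
    }
    *pt1 = 3;                         /* same effect as t = 3 */
    *pt2 = 4;                         /* writes the anonymous heap object */
    assert(t == 3);                   /* the write through pt1 reached t */
    int sum = t + *pt2;
    free(pt2);
    return sum;
}
```

Calling `stack_and_heap_demo()` from a `main` function returns 7 when the allocation succeeds. The write through `pt1` can be described by the name `t`, while the write through `pt2` can only be described by an abstract heap location.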

Approach
• Resolve all pointer relationships on the stack using points-to analysis.
• Further analyze heap pointers using connection analysis.
• Examine how the combination of the two analyses can be used to compute information useful to compiler applications.

Pointer Analysis in General
• Identify the set of locations read/written by a given statement or program region.
  S: x = y + z;    Read(S) = {y, z}     Write(S) = {x}
  T: p = *q;       Read(T) = {q, *q}    Write(T) = {p}
  …
  U: *q = y;       Read(U) = {y, q}     Write(U) = {*q}
• To relate the read/write sets of statements, resolve indirect references into a set of static locations.
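As a rough sketch of why the indirect reference *q has to be resolved, the read/write sets of S, T and U can be encoded as bit sets and compared. The bit encoding, the `resolve` helper and the `may_interfere` test are assumptions made for this example; they are not the paper's implementation.

```c
#include <assert.h>
#include <stdbool.h>

/* One bit per abstract location (illustrative encoding). */
#define LOC_X       0x01u
#define LOC_Y       0x02u
#define LOC_Z       0x04u
#define LOC_P       0x08u
#define LOC_Q       0x10u
#define LOC_DEREF_Q 0x20u   /* the unresolved indirection *q */

typedef struct { unsigned read, write; } RWSet;

/* Read/write sets of the three statements. */
const RWSet STMT_S = { LOC_Y | LOC_Z,       LOC_X };        /* x = y + z */
const RWSet STMT_T = { LOC_Q | LOC_DEREF_Q, LOC_P };        /* p = *q    */
const RWSet STMT_U = { LOC_Y | LOC_Q,       LOC_DEREF_Q };  /* *q = y    */

/* Replace the symbolic *q by the static locations q may point to. */
RWSet resolve(RWSet s, unsigned q_targets) {
    assert((q_targets & LOC_DEREF_Q) == 0);  /* targets must be concrete */
    if (s.read & LOC_DEREF_Q) {
        s.read = (s.read & ~LOC_DEREF_Q) | q_targets;
    }
    if (s.write & LOC_DEREF_Q) {
        s.write = (s.write & ~LOC_DEREF_Q) | q_targets;
    }
    return s;
}

/* Two statements interfere if one writes a location the other
   reads or writes. */
bool may_interfere(RWSet a, RWSet b) {
    return (a.write & (b.read | b.write)) != 0 || (b.write & a.read) != 0;
}
```

If q may point to x, `may_interfere(STMT_S, resolve(STMT_T, LOC_X))` is true because S writes x and T reads it. If q can only point to z, the same query for S and T is false, while S and U still interfere because U writes z and S reads it.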

Points-to Analysis (as explained by Emami)
• Approximate relationships between named objects (store-based).
• Calculate pointer targets in terms of triplets of the form (x, y, D) / (x, y, P): variable x definitely/possibly contains the address of the location corresponding to y.
• Heap locations are abstracted as one symbolic stack location named heap.
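A minimal sketch of how such triplets might be stored and queried is shown below. The `PointsTo` struct, the `points_to` lookup and the example facts are hypothetical names chosen for illustration. The heap fact is assumed to be a possible (P) relationship, since the single location heap summarizes many objects.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

typedef struct {
    const char *ptr;     /* x: the pointer variable */
    const char *target;  /* y: the named location it may point to */
    char kind;           /* 'D' = definitely, 'P' = possibly */
} PointsTo;

/* Assumed facts for: int t, *pt1, *pt2; pt1 = &t; pt2 = malloc(...); */
const PointsTo EXAMPLE_FACTS[] = {
    { "pt1", "t",    'D' },
    { "pt2", "heap", 'P' },
};
const size_t EXAMPLE_COUNT = sizeof EXAMPLE_FACTS / sizeof EXAMPLE_FACTS[0];

/* Returns 'D' or 'P' if the triplet (x, y, _) is present, 0 otherwise. */
char points_to(const PointsTo *facts, size_t n, const char *x, const char *y) {
    assert(facts != NULL && x != NULL && y != NULL);
    for (size_t i = 0; i < n; i++) {
        if (strcmp(facts[i].ptr, x) == 0 && strcmp(facts[i].target, y) == 0) {
            return facts[i].kind;
        }
    }
    return 0;
}
```

With these facts, `points_to(EXAMPLE_FACTS, EXAMPLE_COUNT, "pt1", "t")` yields 'D', and a query for ("pt1", "heap") yields 0, so an indirection through pt1 can be replaced by the name t in a read/write set.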

Points-to Analysis (cont.)
C: (s, ptA, D), (t, ptB, D)
Mapping:
U: (c, 1_c, D), (d, 1_d, D)
Read(U) = {c, d, 1_c.x, 1_d.y}    Write(U) = {1_c.x}
Read(V) = {c, d, 1_c.y, 1_d.x}    Write(V) = {1_c.y}
Read(sum) = Read(U) + Read(V)     Write(sum) = Write(U) + Write(V)

Points-to Analysis (cont.)
Read(sum) = {c, d, 1_c.x, 1_d.y, 1_c.y, 1_d.x}    Write(sum) = {1_c.x, 1_c.y}
Unmapping:
Read(C) = {s, t, ptA.x, ptB.y, ptA.y, ptB.x}      Write(C) = {ptA.x, ptA.y}

Points-to Analysis (cont.)
• D: (s, heap, P), (t, heap, P); mapping: flip: (a, heap, P), (b, heap, P)
• Read(S) = Read(T) = {b, a, heap}    Write(S) = Write(T) = {heap}
• False dependence between S and T

Connection Analysis
• Computes connection relationships between pointers (instead of explicitly computing the potential targets of pointers).
• Performed after points-to analysis.
• Focuses on heap-directed pointers.
• Two heap-directed pointers are connected if they possibly point to heap objects belonging to the same data structure.
• They are NOT connected if they definitely point to objects belonging to disjoint data structures.
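The connection relationship can be pictured as a symmetric boolean matrix over heap-directed pointers. The sketch below uses simplified update rules for three statement forms; these rules, the pointer numbering and every name in the code are assumptions made for illustration and may differ from the transfer functions in the paper.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

#define NPTR 4   /* hypothetical pointer ids 0..NPTR-1 */

typedef struct { bool c[NPTR][NPTR]; } ConnMatrix;

void conn_init(ConnMatrix *m) { memset(m, 0, sizeof *m); }

/* Forget everything known about pointer p. */
static void conn_clear(ConnMatrix *m, int p) {
    for (int i = 0; i < NPTR; i++) {
        m->c[p][i] = m->c[i][p] = false;
    }
}

/* p = malloc(...): p points to a fresh structure of its own. */
void conn_alloc(ConnMatrix *m, int p) {
    assert(p >= 0 && p < NPTR);
    conn_clear(m, p);
    m->c[p][p] = true;
}

/* p = q: p joins whatever structure q may point into. */
void conn_copy(ConnMatrix *m, int p, int q) {
    assert(p >= 0 && p < NPTR && q >= 0 && q < NPTR);
    if (p == q) return;
    conn_clear(m, p);
    for (int i = 0; i < NPTR; i++) {
        if (m->c[q][i]) m->c[p][i] = m->c[i][p] = true;
    }
    m->c[p][p] = m->c[q][q];
}

/* p->f = q: the two structures are linked, so every pointer connected
   to p becomes connected to every pointer connected to q. */
void conn_store(ConnMatrix *m, int p, int q) {
    bool with_p[NPTR], with_q[NPTR];
    assert(p >= 0 && p < NPTR && q >= 0 && q < NPTR);
    for (int i = 0; i < NPTR; i++) {
        with_p[i] = m->c[p][i];
        with_q[i] = m->c[q][i];
    }
    for (int i = 0; i < NPTR; i++) {
        for (int j = 0; j < NPTR; j++) {
            if (with_p[i] && with_q[j]) m->c[i][j] = m->c[j][i] = true;
        }
    }
}

bool conn_connected(const ConnMatrix *m, int p, int q) { return m->c[p][q]; }
```

For example, after two separate allocations for pointers 0 and 1 and a copy of pointer 0 into pointer 2, pointers 2 and 1 are not connected. A later store of pointer 1 into a field reached through pointer 0 connects them.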

Connection Analysis (cont.) • Problem: computing read/write sets based on connection analysis

Introducing Anchor Handles
• Motivation: the same programmer-defined name may refer to different objects at different program points.
• Solution: invent enough new names, called anchor handles.
• Calculating read/write sets: anchor handle p is read/written each time any pointer connected to p is read/written.

Introducing Anchor Handles (cont.)
HeapWrite(S) = {a@t_flip->x, a@S->x}
HeapRead(T) = {a@t_flip->x, a@S->x}
Detect flow dependence from S to T

Introducing Anchor Handles (cont.)
Function-level information:
HeapWrite(t_flip) = HeapRead(t_flip) = {a@t_flip->x, b@t_flip->y}
• Useful to prefetch a->x and b->y (but not a->y and b->x)
• No changes to the "listness" of the data structure

Introducing Anchor Handles (cont.)
• Select the locations to be anchored.
• Generate anchor handles for each:
  • heap-directed formal parameter
  • heap-directed global pointer accessed in the function
  • call site that can read/write a heap location
  • heap-related indirect reference: *p if (p, heap, P)
• Use SSA numbers to further reduce the number of anchors:
  • a@t_flip and a@S anchor the same location (pointer a hasn't been updated between them)
  • the same handle can be used to anchor all indirect references involving a given definition of a pointer.

Applications: extend several scalar compiler optimizations
• Loop Invariant Removal (LIR)
  • Expressions whose value does not change inside a loop (they always evaluate to the same value) are moved out of the loop.
• Location Invariant Removal (LcIR)
  • A memory reference that accesses the same memory location in all iterations of a loop can be held in a temporary for the duration of the loop.
• Common Sub-expression Elimination (CSE)
  • Computations that are always performed at least twice on a given execution path. Eliminate the second and later occurrences.

Example of LIR (Loop Invariants)

(a) Original:

    for (I = ...)
    {
        Array[I] = *a;
    }

(b) After LIR:

    temp = *a;
    for (I = ...)
    {
        Array[I] = temp;
    }
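The following sketch shows why LIR on an indirect reference needs pointer information. It is a variant of the slide's loop (the loop body computes `*a * 2` so that aliasing has a visible effect), and all function names are hypothetical. Hoisting is only valid when no write in the loop can reach `*a`.

```c
#include <assert.h>
#include <string.h>

/* (a) Original: *a is re-read on every iteration. */
void scale_original(int *array, int n, const int *a) {
    assert(array != NULL && a != NULL);
    for (int i = 0; i < n; i++) {
        array[i] = *a * 2;
    }
}

/* (b) After LIR: valid only if a does not point into array. */
void scale_hoisted(int *array, int n, const int *a) {
    assert(array != NULL && a != NULL);
    int temp = *a * 2;
    for (int i = 0; i < n; i++) {
        array[i] = temp;
    }
}

/* a points to a separate stack variable: both versions agree. */
int lir_agrees_when_disjoint(void) {
    int x[3] = {1, 2, 3}, y[3] = {1, 2, 3}, v = 5;
    scale_original(x, 3, &v);
    scale_hoisted(y, 3, &v);
    return memcmp(x, y, sizeof x) == 0;
}

/* a points into the array being written: the versions differ. */
int lir_agrees_when_aliased(void) {
    int x[3] = {1, 2, 3}, y[3] = {1, 2, 3};
    scale_original(x, 3, &x[1]);   /* x becomes {4, 4, 8} */
    scale_hoisted(y, 3, &y[1]);    /* y becomes {4, 4, 4} */
    return memcmp(x, y, sizeof x) == 0;
}
```

`lir_agrees_when_disjoint()` returns 1 and `lir_agrees_when_aliased()` returns 0. A compiler may only apply the transformation when its read/write sets show that `Write(loop body)` and `{*a}` do not overlap.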

Example of LcIR (Location Invariants)

(a) Original:

    for (I = ...)
    {
        r->t = p->I;
    }

(b) After LcIR:

    temp = r->t;
    for (I = ...)
    {
        temp = p->I;
    }
    r->t = temp;
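A runnable variant of this transformation is sketched below. It uses an accumulation loop rather than the slide's exact statement so that the effect of aliasing between r and p is observable; the `Rec` type and all function names are assumptions made for the example. The transformation is safe when r and p are known not to refer to the same location, for instance because they are not connected.

```c
#include <assert.h>
#include <stddef.h>

typedef struct { int t; } Rec;

/* (a) Original: r->t is loaded and stored on every iteration. */
void accumulate_original(Rec *r, const Rec *p, int n) {
    assert(r != NULL && p != NULL);
    for (int i = 0; i < n; i++) {
        r->t = r->t + p->t;
    }
}

/* (b) After LcIR: r->t lives in temp during the loop.
   Valid only if p->t cannot be the same location as r->t. */
void accumulate_lcir(Rec *r, const Rec *p, int n) {
    assert(r != NULL && p != NULL);
    int temp = r->t;
    for (int i = 0; i < n; i++) {
        temp = temp + p->t;
    }
    r->t = temp;
}

/* r and p are distinct records: both versions agree. */
int lcir_agrees_when_disjoint(void) {
    Rec r1 = {1}, r2 = {1}, p = {1};
    accumulate_original(&r1, &p, 3);   /* 1 + 1 + 1 + 1 = 4 */
    accumulate_lcir(&r2, &p, 3);       /* 4 */
    return r1.t == r2.t;
}

/* r and p are the same record: the versions differ. */
int lcir_agrees_when_aliased(void) {
    Rec r1 = {1}, r2 = {1};
    accumulate_original(&r1, &r1, 3);  /* 1 -> 2 -> 4 -> 8 */
    accumulate_lcir(&r2, &r2, 3);      /* stale p->t: 1 + 1 + 1 + 1 = 4 */
    return r1.t == r2.t;
}
```

`lcir_agrees_when_disjoint()` returns 1 and `lcir_agrees_when_aliased()` returns 0, which is the situation heap read/write sets are meant to rule out before LcIR is applied.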

Example of CSE (Common Sub-expression Elimination)

(a) Original:

    for (I = ...)
    {
        Array[I] = (a*b)/c;
        Array2[I] = (a*b)/c;
    }

(b) After CSE:

    temp = (a*b)/c;
    for (I = ...)
    {
        Array[I] = temp;
        Array2[I] = temp;
    }
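When an operand of the common sub-expression is reached through a pointer, the store between the two occurrences matters. The sketch below is an illustrative variant in which `a` is read through a pointer; the function names and values are assumptions, not code from the paper.

```c
#include <assert.h>
#include <stddef.h>

/* (a) Original: the expression is evaluated twice, with a store between. */
int second_original(int *out1, const int *a, int b, int c) {
    assert(out1 != NULL && a != NULL && c != 0);
    *out1 = (*a * b) / c;
    return (*a * b) / c;
}

/* (b) After CSE: valid only if the store to *out1 cannot change *a. */
int second_cse(int *out1, const int *a, int b, int c) {
    assert(out1 != NULL && a != NULL && c != 0);
    int temp = (*a * b) / c;
    *out1 = temp;
    return temp;
}

/* out1 and a are different variables: both versions return 4. */
int cse_agrees_when_disjoint(void) {
    int a = 6, o1 = 0, o2 = 0;
    return second_original(&o1, &a, 2, 3) == second_cse(&o2, &a, 2, 3);
}

/* out1 and a are the same variable: the original returns 2, CSE returns 4. */
int cse_agrees_when_aliased(void) {
    int a1 = 6, a2 = 6;
    return second_original(&a1, &a1, 2, 3) == second_cse(&a2, &a2, 2, 3);
}
```

`cse_agrees_when_disjoint()` returns 1 and `cse_agrees_when_aliased()` returns 0, so the second occurrence can only be eliminated when the intervening write set does not include `*a`.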

Experimental Results
Analysis Efficiency (UltraSparc)
• Quite efficient for moderate-size benchmarks.
• The average number of anchor handles per indirect reference is 0.50.

Experimental Results (cont.)
Optimization Opportunities
• Invariant expressions cannot always be identified without pointer analysis.
• Limited applications of LcIR; numerous applications of CSE and LIR.

Experimental Results (cont.)
Benefits of using heap read/write sets
• LIR and CSE: the number of optimizations increases moderately for all benchmarks. Stack analysis alone is able to detect most of them (heap read/write information brings no added advantage for address-exposed variables, or if the code fragment does not involve any write to the heap).
• LcIR: the number of optimizations increases in two applications.

Experimental Results (cont.)
Runtime Improvement
• Measure the additional benefits of the analyses over a state-of-the-art optimizing compiler.

Experimental Results (cont.)
Runtime Improvement
• Optimized versions achieve a significant reduction in the number of memory references (7% to 35.56%).
• There may not always be a direct correlation between the number of times optimizations are applied and the actual run time improvement.
• The percentage decrease is always equal or higher for Hopt compared to Sopt.
• Some of the applications show significant speedup over "gcc -O3".

More Applications
• Improving array dependence tests. In C, arrays are mostly implemented using pointers to dynamically allocated storage. Pointer-based array references pose problems for array dependence testers. Pointer analyses can make them more effective.
• Program understanding/debugging. Based on the summary of read/write sets, one can make observations about the effect of a function on the data structures passed to it (which fields are or are not updated by the function).
• Guiding data prefetching for recursive heap data structures.

Contributions
• Provided a new method for computing read/write sets for connection analysis, introducing the notion of anchor handles.
• Demonstrated a variety of applications: extending standard scalar compiler optimizations, array dependence testers and program understanding.
• Provided extensive measurements, demonstrating up to 10% improvement over gcc -O3.

Conclusions
• Pointer analysis is an important part of an optimizing C compiler, and one can achieve significant benefits from such an analysis.
• Future work will be in three major directions:
  • Effect of stack and heap read/write sets on fine-grain parallelism and instruction scheduling
  • Benefits of context-sensitive, flow-sensitive analyses vs. flow-insensitive analyses
  • Continuing to develop new transformations for pointer-intensive programs