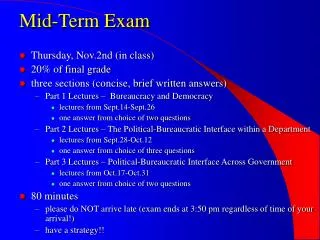

Midterm Exam



Midterm Exam: 03/04, in class

Project
• It is team work
• No more than 2 people per team
• Define a project of your own
• Otherwise, I will assign you to a “tough” project
• Important dates
  • 03/23: project proposal
  • 04/27 and 04/29: presentations
  • 05/02: final report

Project Proposal
• Introduction: describe the research problem
• Related work: describe the existing approaches and their deficiencies
• Proposed approaches: describe your approaches and their potential to overcome the shortcomings of existing approaches
• Plan: the plan for this project (code development, data sets, and evaluation)
• Format: it should look like a research paper
  • The required format (both Microsoft Word and LaTeX) can be downloaded from www.cse.msu.edu/~cse847/assignments/format.zip
  • Warning: any submission that does not follow the format will receive a score of zero.

Project Report
• The same format as the proposal
• Expand the proposal with a detailed description of your algorithm and your evaluation results
• Presentation
  • 25-minute presentation
  • 5-minute discussion

Introduction to Information Theory
Rong Jin

Information
• Information ≠ knowledge
• Information: reduction in uncertainty
• Example:
  • #1: flip a coin
  • #2: roll a die
• #2 is more uncertain than #1
• Therefore, the outcome of #2 provides more information than the outcome of #1

Definition of Information
• Let E be some event that occurs with probability P(E). If we are told that E has occurred, then we say we have received $I(E) = \log_2 \frac{1}{P(E)}$ bits of information
• Example:
  • Result of a fair coin flip: $\log_2 2 = 1$ bit
  • Result of a fair die roll: $\log_2 6 \approx 2.585$ bits
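The definition is a one-liner to compute. The sketch below (the function name `self_information` is ours, not from the slides) reproduces the two worked examples.

```python
import math

def self_information(p: float) -> float:
    """Bits received when an event of probability p occurs: I(E) = log2(1 / P(E))."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    return math.log2(1.0 / p)

print(f"fair coin flip: {self_information(1/2):.3f} bits")  # 1.000
print(f"fair die roll:  {self_information(1/6):.3f} bits")  # 2.585
```

A certain event (p = 1) carries 0 bits, matching the idea that information is a reduction in uncertainty.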

Entropy
A zero-memory information source S is a source that emits symbols from an alphabet $\{s_1, s_2, \dots, s_k\}$ with probabilities $\{p_1, p_2, \dots, p_k\}$, respectively, where the emitted symbols are statistically independent. Entropy is the average amount of information obtained by observing the output of S:
$$H(P) = \sum_{i=1}^{k} p_i \log_2 \frac{1}{p_i}$$

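Entropy
• $0 \le H(P) \le \log_2 k$
• Measures the uniformness of a distribution P: the further P is from uniform, the lower the entropy
• For any other probability distribution $\{q_1, \dots, q_k\}$ (Gibbs' inequality):
$$H(P) = \sum_{i=1}^{k} p_i \log_2 \frac{1}{p_i} \le \sum_{i=1}^{k} p_i \log_2 \frac{1}{q_i}$$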
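Both properties can be checked numerically. This is a sketch under our own assumptions: the helper names (`entropy`, `cross_entropy`, `random_distribution`) are hypothetical, and 1,000 random pairs stand in for "any" P and Q. It computes $H(P) = \sum_i p_i \log_2(1/p_i)$ and asserts both $0 \le H(P) \le \log_2 k$ and $H(P) \le \sum_i p_i \log_2(1/q_i)$.

```python
import math
import random

def entropy(p):
    """H(P) = sum_i p_i log2(1/p_i), in bits; terms with p_i = 0 contribute 0."""
    return sum(pi * math.log2(1.0 / pi) for pi in p if pi > 0)

def cross_entropy(p, q):
    """sum_i p_i log2(1/q_i): outcomes drawn from P, scored with Q's probabilities (q_i > 0)."""
    return sum(pi * math.log2(1.0 / qi) for pi, qi in zip(p, q) if pi > 0)

def random_distribution(k, rng):
    """A random probability vector of length k with strictly positive entries."""
    w = [rng.random() + 1e-9 for _ in range(k)]
    total = sum(w)
    return [x / total for x in w]

k = 5
rng = random.Random(0)
uniform = [1.0 / k] * k
peaked = [0.96, 0.01, 0.01, 0.01, 0.01]
print(entropy(uniform), math.log2(k))  # the uniform distribution attains the upper bound
print(entropy(peaked))                 # far from uniform, so much lower entropy

for _ in range(1000):
    p, q = random_distribution(k, rng), random_distribution(k, rng)
    assert 0.0 <= entropy(p) <= math.log2(k) + 1e-12  # 0 <= H(P) <= log2 k
    assert entropy(p) <= cross_entropy(p, q) + 1e-12  # Gibbs' inequality
```

The small `1e-12` slack only absorbs floating-point rounding; it is not part of the inequalities.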

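A Distance Measure Between Distributions
• Kullback-Leibler distance between distributions P and Q:
$$D(P, Q) = \sum_{i=1}^{k} p_i \log_2 \frac{p_i}{q_i}$$
• $D(P, Q) \ge 0$, with equality iff P = Q
• The smaller D(P, Q), the more similar Q is to P
• Non-symmetric: in general, $D(P, Q) \ne D(Q, P)$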
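A minimal sketch of the Kullback-Leibler distance in bits (the function name is ours), assuming $q_i > 0$ wherever $p_i > 0$. A fair coin and a biased coin are enough to show that the measure is not symmetric.

```python
import math

def kl_divergence(p, q):
    """D(P, Q) = sum_i p_i log2(p_i / q_i); assumes q_i > 0 wherever p_i > 0."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

p = [0.5, 0.5]  # fair coin
q = [0.9, 0.1]  # biased coin
print(kl_divergence(p, p))  # 0.0: identical distributions
print(kl_divergence(p, q))  # about 0.737
print(kl_divergence(q, p))  # about 0.531, so D(P, Q) != D(Q, P)
```

Because of this asymmetry, the KL "distance" is not a metric in the strict sense.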

Mutual Information
$$I(X;Y) = \sum_{x,y} p(x,y) \log_2 \frac{p(x,y)}{p(x)\,p(y)}$$
• Indicates the amount of information shared between two random variables
• Symmetric: I(X;Y) = I(Y;X)
• Zero iff X and Y are independent
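The listed properties can be seen on small joint tables. This sketch assumes the joint distribution is given as a table `joint[a][b] = P(X=a, Y=b)`; the function names and the three example tables are ours.

```python
import math

def mutual_information(joint):
    """I(X;Y) = sum_{x,y} p(x,y) log2[p(x,y) / (p(x) p(y))], with joint[a][b] = P(X=a, Y=b)."""
    px = [sum(row) for row in joint]        # marginal of X (row sums)
    py = [sum(col) for col in zip(*joint)]  # marginal of Y (column sums)
    return sum(
        pxy * math.log2(pxy / (px[a] * py[b]))
        for a, row in enumerate(joint)
        for b, pxy in enumerate(row)
        if pxy > 0
    )

def transpose(joint):
    """Swap the roles of X and Y."""
    return [list(col) for col in zip(*joint)]

independent = [[0.25, 0.25], [0.25, 0.25]]  # p(x, y) = p(x) p(y)
y_equals_x = [[0.5, 0.0], [0.0, 0.5]]       # Y is an exact copy of a fair bit X
skewed = [[0.3, 0.1], [0.2, 0.4]]           # dependent, and not a symmetric table

print(mutual_information(independent))  # 0.0
print(mutual_information(y_equals_x))   # 1.0
print(mutual_information(skewed), mutual_information(transpose(skewed)))  # equal up to rounding
```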

Maximum Entropy
Rong Jin

Motivation
• Consider a translation example
• English ‘in’ → French {dans, en, à, au-cours-de, pendant}
• Goal: estimate p(dans), p(en), p(à), p(au-cours-de), p(pendant)
• Case 1: no prior knowledge about the translation
• Case 2: 30% of the time, either dans or en is used

Maximum Entropy Model: Motivation
• Case 3: 30% of the time, dans or en is used, and 50% of the time, dans or à is used
• We need a measure of the uniformness of a distribution

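Maximum Entropy Principle (MaxEnt)
• Among all distributions that satisfy the known constraints, choose the one with maximum entropy
• For Case 3, this gives approximately:
  • p(dans) = 0.2, p(à) = 0.3, p(en) = 0.1
  • p(au-cours-de) = 0.2, p(pendant) = 0.2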
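The MaxEnt solution for Case 3 can also be found numerically. In this sketch (the parameterization and helper names are ours), every distribution satisfying p(dans) + p(en) = 0.3 and p(dans) + p(à) = 0.5 is written in terms of d = p(dans); the leftover mass is split evenly between au-cours-de and pendant, since nothing distinguishes them. Entropy is concave in d, so a ternary search over d in [0, 0.3] finds the maximum.

```python
import math

def entropy(p):
    """H(P) = sum_i p_i log2(1/p_i), in bits."""
    return sum(x * math.log2(1.0 / x) for x in p if x > 0)

def case3_distribution(d):
    """Case 3 distributions parameterized by d = p(dans):
    p(en) = 0.3 - d, p(à) = 0.5 - d, and the remaining mass 0.2 + d is split
    evenly between au-cours-de and pendant."""
    rest = (0.2 + d) / 2
    return {"dans": d, "en": 0.3 - d, "à": 0.5 - d, "au-cours-de": rest, "pendant": rest}

def maxent_case3(iters=200):
    """Ternary search for the d in [0, 0.3] that maximizes entropy (concave in d)."""
    lo, hi = 0.0, 0.3
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if entropy(case3_distribution(m1).values()) < entropy(case3_distribution(m2).values()):
            lo = m1  # the maximum lies to the right of m1
        else:
            hi = m2  # the maximum lies to the left of m2
    return case3_distribution((lo + hi) / 2)

p = maxent_case3()
for word, prob in p.items():
    print(f"p({word}) = {prob:.3f}")
```

This prints p(dans) ≈ 0.186, p(en) ≈ 0.114, p(à) ≈ 0.314, and ≈ 0.193 for each of au-cours-de and pendant, so the slide's figures appear to be rounded. Its entropy is slightly higher than that of the rounded values, which also satisfy both constraints.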

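MaxEnt for Classification
• The objective is to learn p(y|x) with maximum conditional entropy on the training data $x_1, \dots, x_N$:
$$\max_{p(y|x)} \; -\frac{1}{N}\sum_{i=1}^{N} \sum_{y} p(y|x_i) \log p(y|x_i)$$
• Constraints
  • Appropriate normalization: $\sum_{y} p(y|x_i) = 1$ for every $x_i$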

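MaxEnt for Classification
• Constraints: consistent with the data
  • Feature functions: $f_j(x)$, $j = 1, \dots, m$
  • Model mean of feature functions: $\frac{1}{N}\sum_{i=1}^{N} p(y|x_i)\, f_j(x_i)$
  • Empirical mean of feature functions: $\frac{1}{N}\sum_{i=1}^{N} \delta(y, y_i)\, f_j(x_i)$
  • For every class y and every feature j, the model mean must equal the empirical mean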
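The two means can be computed directly from a training set. In this sketch, row `X[i]` holds the feature values $f_1(x_i), \dots, f_m(x_i)$, `probs[i][y]` holds a model's $p(y|x_i)$, and the toy data and function names are hypothetical.

```python
def empirical_means(X, labels, classes):
    """emp[y][j] = (1/N) sum_i delta(y, y_i) f_j(x_i), where X[i] = [f_1(x_i), ..., f_m(x_i)]."""
    n, m = len(X), len(X[0])
    return {y: [sum(X[i][j] for i in range(n) if labels[i] == y) / n for j in range(m)]
            for y in classes}

def model_means(X, probs, classes):
    """mod[y][j] = (1/N) sum_i p(y | x_i) f_j(x_i), where probs[i][y] is the model's p(y | x_i)."""
    n, m = len(X), len(X[0])
    return {y: [sum(probs[i][y] * X[i][j] for i in range(n)) / n for j in range(m)]
            for y in classes}

def satisfies_constraints(X, labels, probs, classes, tol=1e-9):
    """True when model and empirical means agree for every class and every feature."""
    emp = empirical_means(X, labels, classes)
    mod = model_means(X, probs, classes)
    return all(abs(emp[y][j] - mod[y][j]) <= tol for y in classes for j in range(len(X[0])))

# Hypothetical toy data: three examples, two features, two classes.
X = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
labels = ["a", "a", "b"]
classes = ["a", "b"]
uniform = [{"a": 0.5, "b": 0.5} for _ in X]                        # ignores x entirely
one_hot = [{y: float(y == yi) for y in classes} for yi in labels]  # copies the labels

print(model_means(X, uniform, classes))                    # 0.25 for every class and feature
print(satisfies_constraints(X, labels, uniform, classes))  # False
print(satisfies_constraints(X, labels, one_hot, classes))  # True
```

Copying the training labels satisfies the constraints but has zero entropy; MaxEnt instead picks the highest-entropy p(y|x) among all models that satisfy them.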

MaxEnt for Classification
• No assumption about the form of p(y|x) (non-parametric)
• Only the empirical means of the feature functions are needed

MaxEnt for Classification Feature function

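Solution to MaxEnt
• Identical to the conditional exponential model, with feature functions $f_j(x)$ and one weight $w_{y,j}$ per class and feature:
$$p(y|x) = \frac{\exp\left(\sum_{j} w_{y,j} f_j(x)\right)}{\sum_{y'} \exp\left(\sum_{j} w_{y',j} f_j(x)\right)}$$
• Solve for W by maximum likelihood estimation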
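A sketch of the conditional exponential model and the log-likelihood that maximum likelihood estimation maximizes. Here `W[y]` is the weight vector of class y and `x` is the list of feature values; the toy data and the hand-picked `W_fit` are illustrative only, not fitted weights.

```python
import math

def cond_prob(W, x):
    """p(y | x) = exp(sum_j W[y][j] f_j(x)) / Z(x); x = [f_1(x), ..., f_m(x)]."""
    scores = {y: sum(w_j * f_j for w_j, f_j in zip(w, x)) for y, w in W.items()}
    top = max(scores.values())  # subtract the max score to avoid overflow in exp
    unnorm = {y: math.exp(s - top) for y, s in scores.items()}
    z = sum(unnorm.values())    # Z(x), scaled by the same shift, which cancels
    return {y: u / z for y, u in unnorm.items()}

def log_likelihood(W, X, labels):
    """sum_i log p(y_i | x_i): the objective maximized when estimating W."""
    return sum(math.log(cond_prob(W, x)[y]) for x, y in zip(X, labels))

X = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]  # hypothetical toy data
labels = ["a", "a", "b"]
W_zero = {"a": [0.0, 0.0], "b": [0.0, 0.0]}  # all-zero weights give a uniform p(y | x)
W_fit = {"a": [1.0, 0.0], "b": [0.0, 1.0]}   # hand-picked weights favoring the observed labels

print(cond_prob(W_zero, X[0]))  # {'a': 0.5, 'b': 0.5}
print(log_likelihood(W_zero, X, labels), log_likelihood(W_fit, X, labels))  # second is larger
```

Finding the W that actually maximizes `log_likelihood` is left to an optimizer; the point here is only the form of the model and of the objective.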

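Iterative Scaling (IS) Algorithm
• Assume all feature functions are non-negative and sum to a constant: $f_j(x) \ge 0$ and $\sum_{j} f_j(x) = C$ for every input x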

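Iterative Scaling (IS) Algorithm
• Compute the empirical mean of every feature for every class
• Initialize W
• Repeat
  • Compute p(y|x_i) for each training example $(x_i, y_i)$ using W
  • Compute the model mean of every feature for every class
  • Update W, where C is the constant sum of the features:
$$w_{y,j} \leftarrow w_{y,j} + \frac{1}{C} \log \frac{\text{empirical mean of } f_j \text{ for class } y}{\text{model mean of } f_j \text{ for class } y}$$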
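The loop above can be sketched end to end. The update used here is the Generalized Iterative Scaling (GIS) rule, $w_{y,j} \mathrel{+}= \frac{1}{C}\log(\text{empirical}/\text{model})$; we assume that is the update the slide intends. Other assumptions, all ours: W starts at zero, "Repeat" runs a fixed number of iterations, every empirical mean is positive (a zero would push its weight to minus infinity), and the toy data is hypothetical. The function also records the training log-likelihood before each update, so its behavior can be observed.

```python
import math

def gis(X, labels, classes, iters=500):
    """Generalized Iterative Scaling for p(y|x) = exp(sum_j W[y][j] f_j(x)) / Z(x).

    Assumes f_j(x) >= 0, sum_j f_j(x) = C for every x, and all empirical means > 0.
    Returns the weights and the log-likelihood recorded before each update."""
    n, m = len(X), len(X[0])
    C = sum(X[0])
    assert all(f >= 0 for x in X for f in x), "features must be non-negative"
    assert all(abs(sum(x) - C) < 1e-9 for x in X), "features must sum to the same constant C"

    def cond_prob(W, x):
        scores = {y: sum(w * f for w, f in zip(W[y], x)) for y in classes}
        top = max(scores.values())
        unnorm = {y: math.exp(s - top) for y, s in scores.items()}
        z = sum(unnorm.values())
        return {y: u / z for y, u in unnorm.items()}

    # Empirical mean of every feature for every class: (1/N) sum_i delta(y, y_i) f_j(x_i).
    emp = {y: [sum(X[i][j] for i in range(n) if labels[i] == y) / n for j in range(m)]
           for y in classes}
    W = {y: [0.0] * m for y in classes}  # initialize W: all zeros, i.e. a uniform p(y|x)
    history = []
    for _ in range(iters):  # "Repeat" with a fixed iteration budget, for simplicity
        probs = [cond_prob(W, x) for x in X]  # p(y | x_i) under the current W
        history.append(sum(math.log(p[y]) for p, y in zip(probs, labels)))
        # Model mean of every feature for every class: (1/N) sum_i p(y | x_i) f_j(x_i).
        mod = {y: [sum(probs[i][y] * X[i][j] for i in range(n)) / n for j in range(m)]
               for y in classes}
        for y in classes:  # GIS update: w += (1/C) log(empirical / model)
            for j in range(m):
                W[y][j] += math.log(emp[y][j] / mod[y][j]) / C
    return W, history

# Hypothetical toy data: two non-negative features per example, each row summing to C = 1.
X = [[0.9, 0.1], [0.7, 0.3], [0.6, 0.4], [0.3, 0.7], [0.2, 0.8]]
labels = ["a", "a", "b", "a", "b"]
W, history = gis(X, labels, ["a", "b"])
print(history[0], history[-1])  # log-likelihood at the start vs. before the last update
```

The recorded `history` never decreases from one iteration to the next. The two asserts at the top make the algorithm's assumptions explicit instead of silently producing wrong updates.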

Iterative Scaling (IS) Algorithm
• It guarantees that the likelihood increases monotonically: no iteration ever decreases it

Iterative Scaling (IS) Algorithm
• What about features that can take both positive and negative values?
• What if the sum of the features is not a constant?