MATLAB Neural Network Toolbox Overview

Dive into the basics of neural networks in MATLAB using simple abstractions modeled for supervised learning, from perceptrons to adaptive filters. Explore topics for designing and training neural networks.

Neural Network Toolbox COMM2M Harry R. Erwin, PhD University of Sunderland

Resources • Martin T. Hagan, Howard B. Demuth & Mark Beale, 1996, Neural Network Design, Martin/Hagan (Distributed by the University of Colorado) • http://www.mathworks.com/access/helpdesk/help/pdf_doc/nnet/nnet.pdf • This can be found in the COMM2M Lectures folder as NNET.PDF.

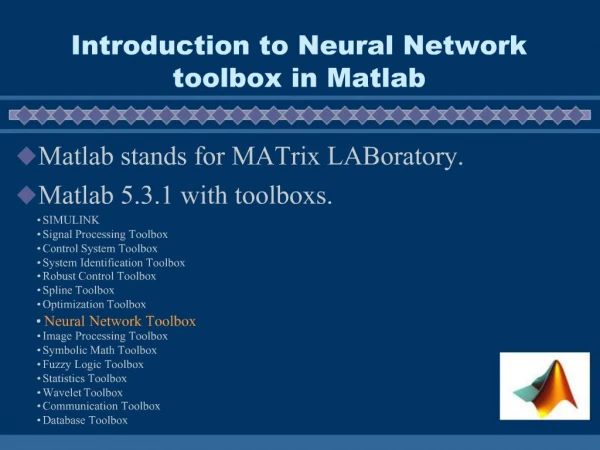

Neural Networks in MATLAB • The MATLAB Neural Network Toolbox does not model biological neural networks. • Instead, it models simple abstractions corresponding to biological models, typically trained using some sort of supervised learning, although unsupervised learning and direct design are also supported.

Notation • It helps if you have some understanding of mathematical notation and systems analysis. • Synaptic weights are typically represented in matrices {w_i,j}. Sparse matrices (i.e., mostly zero) are the most biologically realistic. • Biases are used to control spiking probabilities or rates relative to some nominal monotonic function of membrane potential at the soma (cell body).

How Does This Relate to Biological Neural Networks? • The inputs correspond to action potentials (or AP rates) received by the dendritic tree. • The weights correspond to: • Conductance density in the post-synaptic membrane • Signal strength reduction between the synapse and the cell soma • The output corresponds to action potentials or spiking rates at the axon hillock of the soma. • The neurons are phasic, not tonic.

Assumptions • Linearity is important. The membrane potential at the soma is a weighted linear sum of the activations at the various synapses. • The weightings reflect both synaptic conductances and the transmission loss between the synapse and the soma. • Time is usually quantized.

Topics Covered in the User’s Guide • Neuron models. • Perceptrons • Linear filters • Back-propagation • Control systems • Radial basis networks • Self-organizing and LVQ function networks • Recurrent networks • Adaptive filters

Neuron models. • Scalar input with bias. The membrane potential at the soma (the net input) is the weighted scalar input plus the bias. • The output is computed by applying a monotonic transfer function to the net input. This can be hard-limit, linear, threshold linear, log-sigmoid, or various others. • A ‘layer’ is a layer of transfer functions. This often corresponds to a layer of cells, but local non-linearities can create multi-layer cells.
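
The neuron model on this slide can be sketched in a few lines. This is plain Python rather than toolbox code; the names hardlim, purelin, and logsig are borrowed from the toolbox's transfer functions, but the implementations and the helper neuron() are illustrative assumptions, as are the example numbers.

```python
import math

def hardlim(n):
    """Hard-limit transfer function: 1 if the net input is >= 0, else 0."""
    return 1.0 if n >= 0 else 0.0

def purelin(n):
    """Linear transfer function: the output equals the net input."""
    return n

def logsig(n):
    """Log-sigmoid transfer function, squashing the net input into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-n))

def neuron(p, w, b, transfer):
    """Net input n = w . p + b (the 'membrane potential'), output = transfer(n)."""
    n = sum(wi * pi for wi, pi in zip(w, p)) + b
    return transfer(n)

# Hypothetical example values: net input is 0.5*2 + 1*(-1) + 0.5 = 0.5.
p = [2.0, -1.0]
w = [0.5, 1.0]
b = 0.5
for f in (hardlim, purelin, logsig):
    print(f.__name__, neuron(p, w, b, f))
```

The same net input gives 1.0 under hardlim, 0.5 under purelin, and roughly 0.62 under logsig, which is the only difference between these neuron types.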

Network Architectures • Neural network architectures usually consist of multiple layers of cells. • A common architecture consists of three layers (input, hidden, and output). • This has at least a notional correspondence to how neocortex is organized in your brain. • Dynamics of these networks can be analyzed mathematically.

Perceptrons • Perceptron neurons perform hard-limit (hardlim) transformations on linear combinations of their inputs. • The hardlim transformation means that a perceptron classifies vector inputs into two subsets separated by a hyperplane (the classes must be linearly separable). The bias moves the hyperplane away from the origin. • Smooth transfer functions can be used in place of hardlim. • A perceptron architecture is a single layer of neurons.

Learning Rules • A learning rule is a procedure for modifying the weights of a neural network: • Based on examples and desired outputs in supervised learning, • Based on input values only in unsupervised learning. • Perceptrons are trained using supervised learning. The perceptron rule converges in a finite number of steps when the classes are linearly separable.
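
As a minimal sketch of supervised learning for a single perceptron, the classic perceptron rule adds the error times the input to the weights. The code is plain Python, not toolbox code; train_perceptron and the logical-AND dataset are assumptions chosen for illustration.

```python
def hardlim(n):
    """Hard-limit transfer function."""
    return 1 if n >= 0 else 0

def train_perceptron(samples, epochs=20):
    """Perceptron rule: w += e * p and b += e, where e = target - output."""
    w = [0.0] * len(samples[0][0])
    b = 0.0
    for _ in range(epochs):
        mistakes = 0
        for p, t in samples:
            a = hardlim(sum(wi * pi for wi, pi in zip(w, p)) + b)
            e = t - a
            if e != 0:
                w = [wi + e * pi for wi, pi in zip(w, p)]
                b += e
                mistakes += 1
        if mistakes == 0:  # every example classified correctly: stop early
            break
    return w, b

# Logical AND is linearly separable, so the rule is guaranteed to converge.
samples = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
w, b = train_perceptron(samples)
predictions = [hardlim(sum(wi * pi for wi, pi in zip(w, p)) + b) for p, _ in samples]
print(predictions)
```

After training, the predictions match the targets for all four input pairs. The same loop would never settle on XOR, which is not linearly separable.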

Linear filters • Neural networks similar to perceptrons, but with linear transfer functions, are called linear filters. • Limited to linearly separable problems. • Error is minimized using the Widrow-Hoff (least mean squares, LMS) algorithm. • Minsky and Papert criticized single-layer networks for this limitation.
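
The Widrow-Hoff idea can be sketched as an incremental update that moves the weights along the error times the input. This is plain Python under stated assumptions: train_lms, the learning rate, and the target function t = 2*p1 - p2 + 0.5 are hypothetical, and scaling conventions for the learning rate vary between texts.

```python
def train_lms(samples, lr=0.1, epochs=1000):
    """Widrow-Hoff (LMS) rule for one linear neuron: w += lr * e * p, b += lr * e."""
    w = [0.0] * len(samples[0][0])
    b = 0.0
    for _ in range(epochs):
        for p, t in samples:
            a = sum(wi * pi for wi, pi in zip(w, p)) + b  # linear transfer function
            e = t - a
            w = [wi + lr * e * pi for wi, pi in zip(w, p)]
            b += lr * e
    return w, b

# Hypothetical noise-free data generated by t = 2*p1 - p2 + 0.5.
inputs = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
samples = [(p, 2.0 * p[0] - 1.0 * p[1] + 0.5) for p in inputs]
w, b = train_lms(samples)
print(w, b)
```

Because the data are exactly linear, the weights approach [2, -1] and the bias approaches 0.5. With noisy data the rule would instead hover around the least-squares solution.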

Back-propagation • Invented by Paul Werbos (now at NSF). • Allows multi-layer perceptrons with non-linear differentiable transfer functions to be trained. • The magic is that errors can be propagated backward through the network to control weight adjustment.
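
To make the backward propagation of errors concrete, here is a small sketch for a network with two log-sigmoid hidden neurons and one linear output, using a squared-error loss. It is plain Python, not toolbox code; the function names, the initial weights, and the single training example are all assumptions for illustration. The analytic gradient is compared with a finite-difference estimate as a sanity check.

```python
import math

def logsig(n):
    return 1.0 / (1.0 + math.exp(-n))

def forward(params, p):
    """Two-layer network: logsig hidden layer, one linear output neuron."""
    W1, b1, W2, b2 = params
    a1 = [logsig(sum(w * x for w, x in zip(row, p)) + b) for row, b in zip(W1, b1)]
    a2 = sum(w * a for w, a in zip(W2, a1)) + b2
    return a1, a2

def loss(params, p, t):
    return 0.5 * (t - forward(params, p)[1]) ** 2

def backprop(params, p, t):
    """Gradient of the loss with respect to every weight and bias."""
    W1, b1, W2, b2 = params
    a1, a2 = forward(params, p)
    d2 = a2 - t  # error signal at the linear output
    # Send the error back through W2 and the logsig derivative a * (1 - a).
    d1 = [d2 * w * a * (1.0 - a) for w, a in zip(W2, a1)]
    gW1 = [[d * x for x in p] for d in d1]
    return gW1, d1, [d2 * a for a in a1], d2

def step(params, grads, lr):
    """One gradient-descent weight adjustment."""
    W1, b1, W2, b2 = params
    gW1, gb1, gW2, gb2 = grads
    return ([[w - lr * g for w, g in zip(r, gr)] for r, gr in zip(W1, gW1)],
            [b - lr * g for b, g in zip(b1, gb1)],
            [w - lr * g for w, g in zip(W2, gW2)],
            b2 - lr * gb2)

# Hypothetical starting weights and one training example.
params = ([[0.3, -0.2], [0.1, 0.4]], [0.05, -0.1], [0.7, -0.5], 0.2)
p, t = [1.0, 2.0], 1.0
grads = backprop(params, p, t)

# Finite-difference estimate of the gradient for the first hidden weight.
eps = 1e-6
def with_w11(value):
    W1, b1, W2, b2 = params
    return ([[value, W1[0][1]], W1[1]], b1, W2, b2)
numeric = (loss(with_w11(0.3 + eps), p, t) - loss(with_w11(0.3 - eps), p, t)) / (2 * eps)

loss_before = loss(params, p, t)
loss_after = loss(step(params, grads, lr=0.1), p, t)
print(grads[0][0][0], numeric, loss_before, loss_after)
```

The analytic and numeric gradients should agree closely, and one small step along the negative gradient lowers the loss on this example.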

Control systems • Neural networks can be used in predictive control. • Basically, the neural network is trained to represent forward dynamics of the plant. Having that neural network model allows the system to predict the effects of control value changes. • Useful in real computer applications. • Would be very useful in modeling reinforcement learning in biological networks if we could identify how forward models are learned and stored in the brain.

Radial basis networks • Perceptron networks work for linearly separable data. Suppose the data is locally patchy instead. RBF networks were invented for that. • The input to the transfer function is the bias times the distance between a preferred vector of the cell and the input vector. • The transfer function is e^(-n^2), where n is the input. • Two-layer networks. Usually trained by exact design or by adding neurons until the error falls below a threshold.
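
A single radial basis neuron, as described on this slide, can be sketched as follows. This is plain Python; radbas mirrors the toolbox's function name, while rbf_neuron, the preferred vector, and the choice of spread are assumptions for illustration.

```python
import math

def radbas(n):
    """Radial basis transfer function exp(-n^2)."""
    return math.exp(-n * n)

def rbf_neuron(p, w, b):
    """Output = radbas(b * distance between preferred vector w and input p)."""
    dist = math.sqrt(sum((wi - pi) ** 2 for wi, pi in zip(w, p)))
    return radbas(b * dist)

center = [1.0, 1.0]  # hypothetical preferred vector of the cell
spread = 1.0         # distance at which the response should fall to 0.5
b = math.sqrt(math.log(2.0)) / spread  # bias chosen so radbas(b * spread) = 0.5

at_center = rbf_neuron([1.0, 1.0], center, b)
at_spread = rbf_neuron([2.0, 1.0], center, b)
far_away = rbf_neuron([4.0, 1.0], center, b)
print(at_center, at_spread, far_away)
```

The neuron responds fully at its preferred vector, at half strength one spread away, and hardly at all at three spreads, which is what makes it suited to locally patchy data.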

Self-organizing and LVQ function networks • The neurons move around in input space until the set of inputs is uniformly covered. • They can also be trained in a supervised manner (LVQ). • Kohonen networks.
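
The way neurons move around in input space can be sketched with the basic competitive (Kohonen) update: the neuron closest to an input wins and moves a fraction of the way toward it. This plain Python sketch omits the neighborhood function that a full self-organizing map uses; the function names, the two-cluster dataset, and the starting weights are assumptions.

```python
import math

def distance(a, b):
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

def nearest(weights, p):
    """Index of the neuron whose weight vector is closest to input p."""
    dists = [distance(w, p) for w in weights]
    return dists.index(min(dists))

def train_competitive(weights, inputs, lr=0.1, epochs=100):
    """Kohonen rule: only the winning neuron moves, w += lr * (p - w)."""
    weights = [list(w) for w in weights]
    for _ in range(epochs):
        for p in inputs:
            i = nearest(weights, p)
            weights[i] = [wi + lr * (pi - wi) for wi, pi in zip(weights[i], p)]
    return weights

# Hypothetical inputs: one cluster around (0, 0) and one around (1, 1).
inputs = [[0.0, 0.1], [0.1, 0.0], [-0.1, 0.0], [0.0, -0.1],
          [1.0, 1.1], [1.1, 1.0], [0.9, 1.0], [1.0, 0.9]]
weights = train_competitive([[0.4, 0.4], [0.6, 0.6]], inputs)
print(weights)
```

Each neuron ends up near the center of one cluster without any target labels being supplied, which is the unsupervised behavior the slide describes.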

Recurrent networks • Elman and Hopfield networks • These model how neocortical principal cells are believed to function. • Elman networks are two-layer back-propagation networks with recurrence. Can learn temporal patterns. Whether they’re sufficiently general to model how the brain does the same thing is a research question. • Hopfield networks give you autoassociative memory.
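
Autoassociative memory can be illustrated with the classical Hopfield construction: store patterns with a Hebbian outer-product rule and recall by repeatedly thresholding each unit. This is a plain Python sketch of that textbook variant and is not necessarily the design method the toolbox uses; the function names and the two stored patterns are assumptions.

```python
def train_hopfield(patterns):
    """Hebbian outer-product weights with a zero diagonal."""
    n = len(patterns[0])
    W = [[0.0] * n for _ in range(n)]
    for x in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    W[i][j] += x[i] * x[j]
    return W

def recall(W, state, max_sweeps=10):
    """Update units one at a time until the state stops changing."""
    s = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            h = sum(W[i][j] * s[j] for j in range(len(s)))
            new = s[i] if h == 0 else (1 if h > 0 else -1)
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:
            break
    return s

# Two hypothetical orthogonal patterns of +1/-1 values.
x1 = [1, 1, 1, 1, -1, -1, -1, -1]
x2 = [1, -1, 1, -1, 1, -1, 1, -1]
W = train_hopfield([x1, x2])

probe = [-1] + x1[1:]  # x1 with its first element flipped
restored = recall(W, probe)
print(restored == x1)
```

The corrupted probe settles back onto the stored pattern, so the network retrieves a memory from a partial or noisy version of itself.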

Adaptive filters • Similar to perceptrons with linear transfer functions. Limited to linearly separable problems. • Powerful learning rule. • Used in signal processing and control systems.
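
In signal processing the linear neuron is typically fed from a tapped delay line and adapted sample by sample. The plain Python sketch below uses an LMS-style update to identify an unknown two-tap filter; lms_filter, the sinusoidal input, and the "unknown" coefficients 0.5 and -0.3 are assumptions made for illustration.

```python
import math

def lms_filter(x, d, n_taps=2, lr=0.1):
    """Adapt a linear neuron on a tapped delay line, one sample at a time."""
    w = [0.0] * n_taps
    delay_line = [0.0] * n_taps
    errors = []
    for xk, dk in zip(x, d):
        delay_line = [xk] + delay_line[:-1]  # shift the newest sample in
        y = sum(wi * ui for wi, ui in zip(w, delay_line))
        e = dk - y
        w = [wi + lr * e * ui for wi, ui in zip(w, delay_line)]
        errors.append(e)
    return w, errors

# Hypothetical system to identify: d[k] = 0.5 * x[k] - 0.3 * x[k-1].
x = [math.sin(k) for k in range(2000)]
d = [0.5 * x[k] - 0.3 * (x[k - 1] if k > 0 else 0.0) for k in range(2000)]
w, errors = lms_filter(x, d)
print(w, errors[-1])
```

The adapted weights approach the coefficients of the unknown system and the prediction error shrinks toward zero. Unlike batch training, the filter keeps adapting as each new sample arrives.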

Conclusions • Neural networks are powerful but sophisticated (gorilla in a dinner jacket). • They’re also a good deal simpler than biological neural networks. • One thing to do is to learn how to use the MATLAB toolbox functions; another is to learn how to extend the toolbox.

Tutorial • Poirazi, Brannon, and Mel, 2003, “Pyramidal Neuron as a Two-Layer Neural Network,” Neuron, 37:989-999, March 27, 2003, suggests that cortical pyramidal cells can be validly modeled as two-layer neural networks. • Tutorial assignment: investigate that, using some variant of back-propagation to train the network to recognize digits. Remember that the weights of the individual branches are constant; only the synaptic weights are trained. • A test and training dataset is provided (Numbers.ppt).