Efficient Association Analysis for Itemset Generation

Learn how to efficiently generate itemsets, prune infrequent candidates, utilize hash structures, and generate association rules based on confidence thresholds. Includes detailed examples and algorithms.

Efficient Association Analysis for Itemset Generation

E N D

Presentation Transcript

C1 F1 Itemset Itemset Sup. count {I1} {I1} 6 {I2} {I2} 7 {I3} {I3} 6 {I4} {I4} 2 {I5} {I5} 2 Example Min_sup_count = 2

C2 Itemset {I1,I2} {I1,I3} {I1,I4} {I1,I5} {I2,I3} {I2,I4} {I2,I5} {I3,I4} {I3,I5} {I4,I5} Generate C2 from F1F1 Min_sup_count = 2 F1

Generate C3 from F2F2 Min_sup_count = 2 F2 Prune F3

Generate C4 from F3F3 Min_sup_count = 2 C4 {I1,I2,I3,I5} is pruned because {I2,I3,I5} is infrequent F3

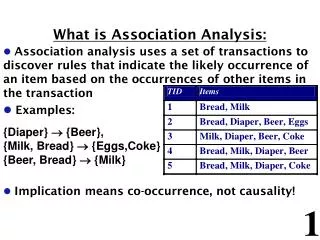

Transactions TID Items 1 Bread, Milk 2 Bread, Diaper, Beer, Eggs 3 Milk, Diaper, Beer, Coke 4 Bread, Milk, Diaper, Beer 5 Bread, Milk, Diaper, Coke Candidate support counting • Scan the database of transactions to determine the support of each candidate itemset • Brute force: Match each transaction against every candidate. • Too many comparisons! • Better method: Store the candidate itemsets in a hash structure • A transaction will be tested for match only against candidates contained in a few buckets Hash Structure k N Buckets

Generate Hash Tree Hash function 3,6,9 1,4,7 2,5,8 2 3 4 5 6 7 3 6 7 3 6 8 1 4 5 3 5 6 3 5 7 6 8 9 3 4 5 1 3 6 1 2 4 4 5 7 1 2 5 4 5 8 1 5 9 • You need: • A hash function (e.g. p mod 3) • Max leaf size: max number of itemsets stored in a leaf node (if number of candidate itemsets exceeds max leaf size, split the node) • Suppose you have 15 candidate itemsets of length 3 and leaf size is 3: • {1 4 5}, {1 2 4}, {4 5 7}, {1 2 5}, {4 5 8}, {1 5 9}, {1 3 6}, {2 3 4}, {5 6 7}, {3 4 5}, {3 5 6}, {3 5 7}, {6 8 9}, {3 6 7}, {3 6 8}

Hash function 3,6,9 1,4,7 2,5,8 Generate Hash Tree Suppose you have 15 candidate itemsets of length 3 and leaf size is 3: {1 4 5}, {1 2 4}, {4 5 7}, {1 2 5}, {4 5 8}, {1 5 9}, {1 3 6}, {2 3 4}, {5 6 7}, {3 4 5}, {3 5 6}, {3 5 7}, {6 8 9}, {3 6 7}, {3 6 8} 2 3 4 5 6 7 1 4 5 1 3 6 1 2 4 4 5 7 1 2 5 4 5 8 1 5 9 3 5 6 3 5 7 6 8 9 3 4 5 3 6 7 3 6 8 Split nodes with more than 3 candidates using the second item

Hash function 3,6,9 1,4,7 2,5,8 Generate Hash Tree Suppose you have 15 candidate itemsets of length 3 and leaf size is 3: {1 4 5}, {1 2 4}, {4 5 7}, {1 2 5}, {4 5 8}, {1 5 9}, {1 3 6}, {2 3 4}, {5 6 7}, {3 4 5}, {3 5 6}, {3 5 7}, {6 8 9}, {3 6 7}, {3 6 8} 2 3 4 5 6 7 3 5 6 3 5 7 6 8 9 3 4 5 3 6 7 3 6 8 1 4 5 1 3 6 1 2 4 4 5 7 1 2 5 4 5 8 1 5 9 Now split nodes using the third item

Hash function 3,6,9 1,4,7 2,5,8 Generate Hash Tree Suppose you have 15 candidate itemsets of length 3 and leaf size is 3: {1 4 5}, {1 2 4}, {4 5 7}, {1 2 5}, {4 5 8}, {1 5 9}, {1 3 6}, {2 3 4}, {5 6 7}, {3 4 5}, {3 5 6}, {3 5 7}, {6 8 9}, {3 6 7}, {3 6 8} 2 3 4 5 6 7 3 5 6 3 5 7 6 8 9 3 4 5 3 6 7 3 6 8 1 4 5 1 3 6 1 2 4 4 5 7 1 2 5 4 5 8 1 5 9 Now, split this similarly.

Subset Operation Given a (lexicographically ordered) transaction t, say {1,2,3,5,6} how can we enumerate the possible subsets of size 3?

3 + 2 + 1 + 5 6 1 2 3 5 6 3 5 6 2 3 5 6 1 4 5 4 5 8 1 2 5 2 3 4 1 2 4 3 6 7 3 6 8 6 8 9 3 5 7 3 5 6 5 6 7 4 5 7 Subset Operation Using Hash Tree Hash Function transaction 1,4,7 3,6,9 2,5,8 1 3 6 3 4 5 1 5 9

1 5 + 2 + 3 + 1 3 + 1 2 + 1 + 6 5 6 1 2 3 5 6 5 6 3 5 6 3 5 6 2 3 5 6 1 4 5 4 5 8 3 6 8 3 6 7 1 2 4 2 3 4 1 2 5 3 5 6 3 5 7 6 8 9 4 5 7 5 6 7 Subset Operation Using Hash Tree Hash Function transaction 1,4,7 3,6,9 2,5,8 1 3 6 3 4 5 1 5 9

Hash Function 2 + 1 5 + 1 + 3 + 1 3 + 1 2 + 6 3 5 6 5 6 5 6 1 2 3 5 6 2 3 5 6 3 5 6 1,4,7 3,6,9 2,5,8 1 4 5 4 5 8 1 2 4 2 3 4 3 6 8 3 6 7 1 2 5 3 5 7 3 5 6 6 8 9 4 5 7 5 6 7 Subset Operation Using Hash Tree transaction 1 3 6 3 4 5 1 5 9 Match transaction against 7 out of 15 candidates

Rule Generation • An association rule can be extracted by partitioning a frequent itemset Y into two nonempty subsets, X and Y -X, such that XY-X satisfies the confidence threshold. • Each frequent k-itemset, Y, can produce up to 2k-2 association rules • ignoring rules that have empty antecedents or consequents. Example Let Y = {1, 2, 3} be a frequent itemset. Six candidate association rules can be generated from Y: {1, 2} {3}, {1, 3}{2}, {2, 3} {1}, {1}{2, 3}, {2} {1, 3}, {3} {1, 2}. Computing the confidence of an association rule does not require additional scans of the transactions. Consider {1, 2}{3}. The confidence is ({1, 2, 3}) / ({1, 2}) Because {1, 2, 3} is frequent, the antimonotone property of support ensures that {1, 2} must be frequent, too, and we know the supports of frequent itemsets.

Confidence-Based Prunning I Theorem. If a rule XY – X does not satisfy the confidence threshold, then any rule X ’Y – X ’, where X ’ is a subset of X, cannot satisfy the confidence threshold as well. Proof. Consider the following two rules: X ’ Y – X ’ and XY – X, where X ’X. The confidence of the rules are (Y ) / (X ’) and (Y ) / (X), respectively. Since X ’ is a subset of X, (X ’) (X). Therefore, the former rule cannot have a higher confidence than the latter rule.

Confidence-Based Prunning II • Observe that: X ’Ximplies thatY – X ’ Y – X X’ X Y

Confidence-Based Prunning III • Initially, all the highconfidence rules that have only one item in the rule consequent are extracted. • These rules are then used to generate new candidate rules. • For example, if • {acd} {b} and {abd} {c} are highconfidence rules, then the candidate rule {ad} {bc} is generated by merging the consequents of both rules.

Confidence-Based Prunning IV {Bread,Milk}{Diaper} (confidence = 3/3)threshold=50% {Bread,Diaper}{Milk} (confidence = 3/3) {Diaper,Milk}{Bread} (confidence = 3/3) Items (1-itemsets) Pairs (2-itemsets) Triplets (3-itemsets)

Confidence-Based Prunning V Merge: {Bread,Milk}{Diaper} {Bread,Diaper}{Milk} {Bread}{Diaper,Milk} (confidence = 3/4) …

Compact Representation of Frequent Itemsets • Some itemsets are redundant because they have identical support as their supersets • Number of frequent itemsets • Need a compact representation

Maximal Frequent Itemsets An itemset is maximal frequent if none of its immediate supersets is frequent Maximal Itemsets Maximal frequent itemsets form the smallest set of itemsets from which all frequent itemsets can be derived. Infrequent Itemsets Border

Maximal Frequent Itemsets • Despite providing a compact representation, maximal frequent itemsets do not contain the support information of their subsets. • For example, the support of the maximal frequent itemsets {a, c, e}, {a, d}, and {b,c,d,e} do not provide any hint about the support of their subsets. • An additional pass over the data set is therefore needed to determine the support counts of the nonmaximal frequent itemsets. • It might be desirable to have a minimal representation of frequent itemsets that preserves the support information. • Such representation is the set of the closed frequent itemsets.

Closed Itemset • An itemset is closed if none of its immediate supersets has the same support as the itemset. • Put another way, an itemset X is not closed if at least one of its immediate supersets has the same support count as X. • An itemset is a closed frequent itemset if it is closed and its support is greater than or equal to minsup.

Lattice Transaction Ids Not supported by any transactions

Maximal vs. Closed Itemsets Closed but not maximal Minimum support = 2 Closed and maximal # Closed = 9 # Maximal = 4

Maximal vs Closed Itemsets All maximal frequent itemsets are closed because none of the maximal frequent itemsets can have the same support count as their immediate supersets.

Deriving Frequent Itemsets From Closed Frequent Itemsets • Consider {a, d}. • It is frequent because {a, b, d} is. • Since it isn't closed, its support count must be identical to one of its immediate supersets. • The key is to determine which superset among {a, b, d}, {a, c, d}, or {a, d, e} has exactly the same support count as {a, d}. • The Apriori principle states that: • Any transaction that contains the superset of {a, d} must also contain {a, d}. • However, any transaction that contains {a, d} does not have to contain the supersets of {a, d}. • So, the support for {a, d} must be equal to the largest support among its supersets. • Since {a, c, d} has a larger support than both {a, b, d} and {a, d, e}, the support for {a, d} must be identical to the support for {a, c, d}.

Algorithm Let C denote the set of closed frequent itemsets Let kmax denote the maximum length of closed frequent itemsets Fkmax ={f | fC, | f | = kmax } {Find all frequent itemsets of size kmax} fork = kmax – 1 downto 1 do Set Fk to be all sub-itemsets of length k from the frequent itemsets in Fk+1 plus the closed frequent itemsets of size k. for eachfFkdo iffCthen f.support = max{f’.support | f’Fk+1, f f’} end if end for end for

Example C = {ABC:3, ACD:4, CE:6, DE:7} kmax=3 F3 = {ABC:3, ACD:4} F2 = {AB:3, AC:4, BC:3, AD:4, CD:4, CE:6, DE:7} F1 = {A:4, B:3, C:6, D:7, E:7}

Computing Frequent Closed Itemsets • How? • Use the Apriori Algorithm. • After computing, say Fk and Fk+1, check whether there is some itemset in Fk which has a support equal to the support of one of its supersets in Fk+1. Purge all such itemsets from Fk.