Feature Selection as Relevant Information Encoding

This presentation describes an approach to feature selection that emphasizes extracting relevant information from data without making generative modeling assumptions. It introduces the Information Bottleneck method and shows how mutual information serves as a measure of the relationship between variables. It covers iterative algorithms such as the generalized Blahut-Arimoto algorithm and their application to document classification, data clustering, and bioinformatics. By building on information theory, the approach aims to unify learning, feature extraction, and prediction.

Presentation Transcript

Feature Selection as Relevant Information Encoding. Naftali Tishby, School of Computer Science and Engineering, The Hebrew University, Jerusalem, Israel. NIPS 2001

Many thanks to: Noam Slonim Amir Globerson Bill Bialek Fernando Pereira Nir Friedman

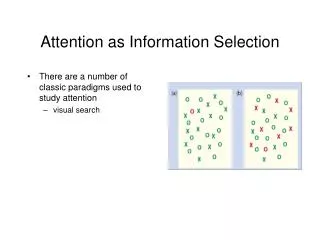

Feature Selection? • NOT generative modeling! • no assumptions about the source of the data • Extracting relevant structure from data • functions of the data (statistics) that preserve information • Information about what? • Approximate Sufficient Statistics • Need a principle that is both general and precise. • Good Principles survive longer!

A new compact representation The document clusters preserve the relevant information between the documents and words

[Figure labels: Documents, Words]

Mutual information: how much does X tell us about Y? $I(X;Y)$ is a function of the joint probability distribution $p(x,y)$: the minimal number of yes/no questions (bits) we need to ask about x in order to learn all we can about Y. It is the uncertainty removed about X when we know Y: $I(X;Y) = H(X) - H(X|Y) = H(Y) - H(Y|X)$. [Figure labels: I(X;Y), H(X|Y), H(Y|X)]
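The identity on this slide can be checked numerically. The following is a minimal sketch, assuming a small hand-made joint distribution stored as a nested list `joint[x][y]`; the function names and the toy numbers are illustrative and do not come from the talk.

```python
import math

def entropy(p):
    """Shannon entropy in bits of a probability vector (0 * log 0 is taken as 0)."""
    return -sum(q * math.log2(q) for q in p if q > 0.0)

def mutual_information(joint):
    """I(X;Y) in bits, computed directly from the joint distribution joint[x][y]."""
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    mi = 0.0
    for x, row in enumerate(joint):
        for y, p_xy in enumerate(row):
            if p_xy > 0.0:
                mi += p_xy * math.log2(p_xy / (p_x[x] * p_y[y]))
    return mi

def conditional_entropy_x_given_y(joint):
    """H(X|Y) = H(X,Y) - H(Y)."""
    p_y = [sum(col) for col in zip(*joint)]
    h_xy = entropy([p for row in joint for p in row])
    return h_xy - entropy(p_y)

# Toy joint distribution (an assumption for illustration): X and Y agree 80% of the time.
joint = [[0.4, 0.1],
         [0.1, 0.4]]
p_x = [sum(row) for row in joint]

mi_direct = mutual_information(joint)
mi_from_entropies = entropy(p_x) - conditional_entropy_x_given_y(joint)
print(mi_direct, mi_from_entropies)
```

Both routes give the same number of bits, which is the statement $I(X;Y) = H(X) - H(X|Y)$ on the slide.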

Relevant Coding • What are the questions that we need to ask about X in order to learn about Y? • Need to partition X into relevant domains, or clusters, between which we really need to distinguish... [Figure labels: X, Y, y1, y2, X|y1, X|y2, P(x|y1), P(x|y2)]

Bottlenecks and Neural Nets • Auto association: forcing compact representations • $\hat{X}$ is a relevant code of X w.r.t. Y [Figure labels: Input, Output; Sample 1, Sample 2; Past, Future]

Q: How many bits are needed to determine the relevant representation? • We need to index the maximal number of non-overlapping green blobs inside the blue blob: $2^{H(X)} / 2^{H(X|\hat{X})} = 2^{I(X;\hat{X})}$ (mutual information!)

The idea: find a compressed signal $\hat{X}$ that needs a short encoding (small $I(X;\hat{X})$) while preserving as much as possible of the information about the relevant signal (large $I(\hat{X};Y)$).

A Variational Principle We want a short representation of X that keeps the information about another variable, Y, if possible.
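The slide's formula did not survive in this transcript. In the standard Information Bottleneck formulation, which is assumed here, the trade-off is written as a functional of the stochastic mapping $p(\hat{x}|x)$ from X to its compressed representation $\hat{X}$, with a Lagrange multiplier $\beta \ge 0$:

```latex
\min_{p(\hat{x}\mid x)} \; \mathcal{L}\big[p(\hat{x}\mid x)\big]
  \;=\; I(X;\hat{X}) \;-\; \beta\, I(\hat{X};Y)
```

Under this reading, small $\beta$ favors compression (few bits about X are kept), while large $\beta$ favors preserving information about Y at the cost of a longer code.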

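The Self Consistent Equations • Marginal: $p(\hat{x}) = \sum_x p(x)\, p(\hat{x}|x)$ • Markov condition: $p(y|\hat{x}) = \sum_x p(y|x)\, p(x|\hat{x})$ • Bayes’ rule: $p(x|\hat{x}) = p(\hat{x}|x)\, p(x) / p(\hat{x})$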

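The effective distortion measure that emerges: $d(x,\hat{x}) = D_{KL}\big[\,p(y|x)\,\|\,p(y|\hat{x})\,\big]$ • Regular if $p(y|x)$ is absolutely continuous w.r.t. $p(y|\hat{x})$ • Small if $\hat{x}$ predicts y as well as x does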
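The two bullet points can be made concrete with a small sketch. It assumes the distortion is the Kullback-Leibler divergence between $p(y|x)$ and $p(y|\hat{x})$, as in the standard Information Bottleneck formulation; the function name and the example distributions are illustrative only.

```python
import math

def kl_divergence(p, q):
    """D_KL[p || q] in bits.

    Returns math.inf when p is not absolutely continuous w.r.t. q,
    i.e. when p puts mass on an outcome to which q assigns probability zero.
    """
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i > 0.0:
            if q_i == 0.0:
                return math.inf
            total += p_i * math.log2(p_i / q_i)
    return total

# Hypothetical predictive distributions over a binary Y.
p_y_given_x = [0.9, 0.1]   # what x itself predicts about y
good_cluster = [0.85, 0.15]  # a cluster that predicts y almost as well as x
bad_cluster = [0.1, 0.9]     # a cluster that predicts the opposite
degenerate = [0.0, 1.0]      # assigns zero probability to an outcome x allows

d_good = kl_divergence(p_y_given_x, good_cluster)
d_bad = kl_divergence(p_y_given_x, bad_cluster)
d_degenerate = kl_divergence(p_y_given_x, degenerate)
print(d_good, d_bad, d_degenerate)
```

The distortion is small when the cluster predicts y about as well as x, large when it does not, and infinite (not regular) when absolute continuity fails.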

The iterative algorithm (generalized Blahut-Arimoto)
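The slide's update equations are not preserved in this transcript. The sketch below assumes the usual form of the iteration: alternate between recomputing the cluster marginal and predictive distributions, and updating the assignment as $p(\hat{x}|x) \propto p(\hat{x}) \exp(-\beta\, D_{KL}[p(y|x)\|p(y|\hat{x})])$. All names (`iterative_ib`, `decoder`, the toy joint table) are hypothetical, and the code assumes $\beta > 0$ and that every x has positive probability.

```python
import math
import random

def kl(p, q):
    """D_KL[p || q] in nats; math.inf if p puts mass where q has none."""
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i > 0.0:
            if q_i <= 0.0:
                return math.inf
            total += p_i * math.log(p_i / q_i)
    return total

def decoder(p_x, p_y_given_x, q):
    """From q[x][c] = p(c|x), compute the marginal p(c) and the predictor p(y|c)."""
    n_x, n_c, n_y = len(q), len(q[0]), len(p_y_given_x[0])
    p_c = [sum(p_x[x] * q[x][c] for x in range(n_x)) for c in range(n_c)]
    p_y_given_c = [
        [sum(p_y_given_x[x][y] * q[x][c] * p_x[x] for x in range(n_x))
         / max(p_c[c], 1e-300)  # guard against an emptied cluster
         for y in range(n_y)]
        for c in range(n_c)
    ]
    return p_c, p_y_given_c

def iterative_ib(joint, n_clusters, beta, n_iter=200, seed=0):
    """Alternating updates for a fixed beta, started from a random soft assignment."""
    rng = random.Random(seed)
    p_x = [sum(row) for row in joint]
    p_y_given_x = [[v / p_x[x] for v in row] for x, row in enumerate(joint)]
    q = []
    for _ in joint:
        w = [rng.random() + 0.1 for _ in range(n_clusters)]
        q.append([v / sum(w) for v in w])
    for _ in range(n_iter):
        p_c, p_y_given_c = decoder(p_x, p_y_given_x, q)
        new_q = []
        for x in range(len(joint)):
            # p(c|x) is proportional to p(c) * exp(-beta * KL[p(y|x) || p(y|c)])
            w = [p_c[c] * math.exp(-beta * kl(p_y_given_x[x], p_y_given_c[c]))
                 for c in range(n_clusters)]
            z = sum(w)
            new_q.append([v / z for v in w])
        q = new_q
    return q

# Toy joint p(x, y): x = 0, 1 mostly predict y = 0; x = 2, 3 mostly predict y = 1.
joint = [[0.20, 0.05],
         [0.20, 0.05],
         [0.05, 0.20],
         [0.05, 0.20]]
q = iterative_ib(joint, n_clusters=2, beta=10.0)
labels = [row.index(max(row)) for row in q]
print(labels)
```

On this toy table the two x values with the same predictive distribution end up in the same cluster. Which cluster gets which index depends on the random start, and in general the iteration only reaches a local optimum.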

The Information Bottleneck Algorithm: “free energy”

The information plane: the optimal $I(\hat{X};Y)$ for a given $I(X;\hat{X})$ is a concave function. [Figure labels: impossible region, possible phase]
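As a hedged note on why part of the plane is impossible: assuming the compressed variable $\hat{X}$ depends on Y only through X (the Markov chain $Y \to X \to \hat{X}$), the data processing inequality bounds the relevant information, and in the standard formulation the slope of the optimal curve is the inverse of the trade-off parameter $\beta$:

```latex
0 \;\le\; I(\hat{X};Y) \;\le\; \min\big\{\, I(X;\hat{X}),\; I(X;Y) \,\big\},
\qquad
\frac{\partial I(\hat{X};Y)}{\partial I(X;\hat{X})} \;=\; \beta^{-1}
```

Points above the concave curve cannot be reached by any representation. Points on or below it can.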

Manifold of relevance. The self consistent equations: assuming a continuous manifold for $\hat{X}$, they become coupled (local in $\hat{x}$) eigenfunction equations, with $\beta$ as an eigenvalue.

Multivariate Information Bottleneck • Complex relationship between many variables • Multiple unrelated dimensionality reduction schemes • Trade between known and desired dependencies • Express IB in the language of Graphical Models • Multivariate extension of Rate-Distortion Theory

Multivariate Information Bottleneck: Extending the dependency graphs (Multi-information)

Sufficient Dimensionality Reduction (with Amir Globerson) • Exponential families have sufficient statistics • Given a joint distribution $p(x,y)$, find an approximation of the exponential form: This can be done by alternating maximization of entropy under the constraints: The resulting functions are our relevant features at rank d.

Summary • We present a general information theoretic approach for extracting relevant information. • It is a natural generalization of Rate-Distortion theory with similar convergence and optimality proofs. • Unifies learning, feature extraction, filtering, and prediction... • Applications (so far) include: • Word sense disambiguation • Document classification and categorization • Spectral analysis • Neural codes • Bioinformatics,… • Data clustering based on multi-distance distributions • …