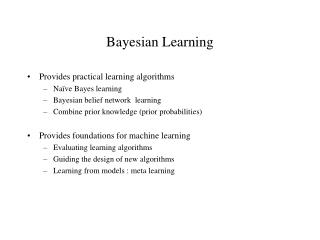

Bayesian Learning

• Provides practical learning algorithms
  • Naïve Bayes learning
  • Bayesian belief network learning
  • Combine prior knowledge (prior probabilities)
• Provides foundations for machine learning
  • Evaluating learning algorithms
  • Guiding the design of new algorithms
  • Learning from models: meta-learning



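Basic Approach

• P(h) = prior probability of hypothesis h
• P(D) = prior probability of training data D
• P(h|D) = probability of h given D (posterior probability)
• P(D|h) = probability of D given h (likelihood of D given h)

Bayes rule:

$$P(h \mid D) = \frac{P(D \mid h)\,P(h)}{P(D)}$$

The goal of Bayesian learning: find the most probable hypothesis given the training data (the maximum a posteriori, or MAP, hypothesis):

$$h_{MAP} = \arg\max_{h \in H} P(h \mid D) = \arg\max_{h \in H} \frac{P(D \mid h)\,P(h)}{P(D)} = \arg\max_{h \in H} P(D \mid h)\,P(h)$$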

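Basic Formulas for Probabilities

• Product rule: probability P(A ∧ B) of a conjunction of two events A and B:

$$P(A \wedge B) = P(A \mid B)\,P(B) = P(B \mid A)\,P(A)$$

• Sum rule: probability of a disjunction of two events A and B:

$$P(A \vee B) = P(A) + P(B) - P(A \wedge B)$$

• Theorem of total probability: if events A1, …, An are mutually exclusive with $\sum_{i=1}^{n} P(A_i) = 1$, then

$$P(B) = \sum_{i=1}^{n} P(B \mid A_i)\,P(A_i)$$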

An Example

Does the patient have cancer or not?

A patient takes a lab test and the result comes back positive. The test returns a correct positive result in only 98% of the cases in which the disease is actually present, and a correct negative result in only 97% of the cases in which the disease is not present. Furthermore, .008 of the entire population have this cancer.
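The example can be worked through numerically with Bayes rule. The sketch below uses only the three figures stated above; the variable names are illustrative, and P(+) is obtained from the theorem of total probability.

```python
# Quantities stated in the example.
p_cancer = 0.008               # P(cancer)
p_pos_given_cancer = 0.98      # P(+ | cancer)
p_neg_given_healthy = 0.97     # P(- | no cancer)

# Complements.
p_healthy = 1 - p_cancer                        # P(no cancer)
p_pos_given_healthy = 1 - p_neg_given_healthy   # P(+ | no cancer)

# Unnormalised posteriors P(+ | h) P(h) for the two hypotheses.
score_cancer = p_pos_given_cancer * p_cancer
score_healthy = p_pos_given_healthy * p_healthy

# P(+) by total probability, then the normalised posterior P(cancer | +).
p_pos = score_cancer + score_healthy
posterior_cancer = score_cancer / p_pos

h_map = "cancer" if score_cancer > score_healthy else "no cancer"
print(f"P(+|cancer)P(cancer)       = {score_cancer:.5f}")
print(f"P(+|no cancer)P(no cancer) = {score_healthy:.5f}")
print(f"P(cancer | +)              = {posterior_cancer:.3f}")
print(f"MAP hypothesis: {h_map}")
```

Even after a positive test, the MAP hypothesis is "no cancer" (the posterior probability of cancer is roughly 0.21), because the disease is rare in the population.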

MAP Learner

• For each hypothesis h in H, calculate the posterior probability

$$P(h \mid D) = \frac{P(D \mid h)\,P(h)}{P(D)}$$

• Output the hypothesis h_MAP with the highest posterior probability

$$h_{MAP} = \arg\max_{h \in H} P(h \mid D)$$

Comments:
• Computationally intensive
• Provides a standard for judging the performance of learning algorithms
• Choosing P(h) and P(D|h) reflects our prior knowledge about the learning task
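A minimal sketch of the brute-force MAP learner described above. The function name `map_learner`, the coin-bias hypothesis space, the prior and the data are all hypothetical choices made for illustration; they are not part of the slides.

```python
from math import prod

def map_learner(hypotheses, prior, likelihood, data):
    """Brute-force MAP: score every h by P(D|h) P(h), normalise by P(D)."""
    scores = {h: likelihood(data, h) * prior[h] for h in hypotheses}
    p_data = sum(scores.values())          # P(D) = sum over h of P(D|h) P(h)
    posterior = {h: s / p_data for h, s in scores.items()}
    h_map = max(posterior, key=posterior.get)
    return h_map, posterior

# Hypothetical task: each hypothesis is a candidate probability of heads.
def coin_likelihood(flips, p_heads):
    """P(D | h) for independent coin flips."""
    return prod(p_heads if f == "H" else 1 - p_heads for f in flips)

hypotheses = [0.3, 0.5, 0.8]
prior = {0.3: 0.25, 0.5: 0.5, 0.8: 0.25}   # assumed prior favouring a fair coin
data = "HHTHHHTH"                           # 6 heads, 2 tails

h_map, posterior = map_learner(hypotheses, prior, coin_likelihood, data)
print("h_MAP =", h_map)
for h, p in posterior.items():
    print(f"P(h={h} | D) = {p:.3f}")
```

The loop over every hypothesis is what makes the approach computationally intensive: the cost grows with the size of H, which is why the MAP learner is more often a yardstick than a practical algorithm.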

Minimum Description Length Principle

Occam's razor: "choose the shortest explanation for the observed data": short trees, minimal rules, …

MML: an abstraction of syntactic simplicity:
• Given a data set D
• Given a set of hypotheses {h1, h2, …, hn} that describe D
• MML principle: always choose the hi for which the quantity Mlength(hi) + Mlength(D | hi) is minimised
• MML: the best hypothesis is the one that allows the most compact encoding of the given data

Example:
• Represent the set {2, 4, 6, …, 100}
• Solution 1: list all 50 integers
• Solution 2: "all even numbers <= 100 and >= 2"
• MML: Solution 2 is worth something
• As the size of the set increases, the cost of Solution 1 increases much more rapidly than the cost of Solution 2
• If the set is random, then the cost of Solution 1 is the minimum

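Bayesian Interpretation of MML

From information theory: the optimal code (shortest expected coding length) for an object (event) with probability p uses $-\log_2 p$ bits.

So:

$$h_{MAP} = \arg\max_{h \in H} P(D \mid h)\,P(h) = \arg\max_{h \in H} \left[ \log_2 P(D \mid h) + \log_2 P(h) \right] = \arg\min_{h \in H} \left[ -\log_2 P(D \mid h) - \log_2 P(h) \right]$$

Here $-\log_2 P(h)$ is the length of h under the optimal code, and $-\log_2 P(D \mid h)$ is the length of D given h under the optimal code.

Think about the decision tree example: prefer the hypothesis that minimizes mlength(h) + mlength(misclassifications).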

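Bayes Optimal Classifier

Question: given a new instance x, what is its most probable classification? h_MAP(x) is not necessarily the most probable classification!

Example: let P(h1|D) = .4, P(h2|D) = .3, P(h3|D) = .3. Given new data x, we have h1(x) = +, h2(x) = −, h3(x) = −. What is the most probable classification of x?

Bayes optimal classification:

$$\arg\max_{v_j \in V} \sum_{h_i \in H} P(v_j \mid h_i)\,P(h_i \mid D)$$

Example:
• P(h1|D) = .4, P(−|h1) = 0, P(+|h1) = 1
• P(h2|D) = .3, P(−|h2) = 1, P(+|h2) = 0
• P(h3|D) = .3, P(−|h3) = 1, P(+|h3) = 0

Therefore $\sum_{h_i} P(+ \mid h_i)\,P(h_i \mid D) = .4$ and $\sum_{h_i} P(- \mid h_i)\,P(h_i \mid D) = .6$, so the Bayes optimal classification of x is −.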
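The three-hypothesis example can be checked with a short Python sketch. The posteriors and predictions are the ones given in the example; the function names are illustrative only.

```python
# Posteriors P(h_i | D) and the label each hypothesis assigns to x.
posterior = {"h1": 0.4, "h2": 0.3, "h3": 0.3}
prediction = {"h1": "+", "h2": "-", "h3": "-"}

def p_value_given_h(v, h):
    """P(v | h): each hypothesis here is deterministic, so this is 0 or 1."""
    return 1.0 if prediction[h] == v else 0.0

def bayes_optimal(values, posterior):
    """Return argmax over v of sum_h P(v | h) P(h | D), plus all the sums."""
    votes = {
        v: sum(p_value_given_h(v, h) * p for h, p in posterior.items())
        for v in values
    }
    return max(votes, key=votes.get), votes

label, votes = bayes_optimal(["+", "-"], posterior)
h_map = max(posterior, key=posterior.get)

print("weighted votes:", votes)
print("h_MAP =", h_map, "predicts", prediction[h_map])
print("Bayes optimal classification:", label)
```

The single most probable hypothesis h1 predicts +, but the posterior-weighted vote over all hypotheses favours −.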

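Naïve Bayes Learner

Assume a target function f: X → V, where each instance x is described by attributes <a1, a2, …, an>. The most probable value of f(x) is:

$$v_{MAP} = \arg\max_{v_j \in V} P(v_j \mid a_1, \ldots, a_n) = \arg\max_{v_j \in V} \frac{P(a_1, \ldots, a_n \mid v_j)\,P(v_j)}{P(a_1, \ldots, a_n)} = \arg\max_{v_j \in V} P(a_1, \ldots, a_n \mid v_j)\,P(v_j)$$

Naïve Bayes assumption (attributes are conditionally independent given the target value):

$$P(a_1, \ldots, a_n \mid v_j) = \prod_{i} P(a_i \mid v_j)$$

which gives the naïve Bayes classifier:

$$v_{NB} = \arg\max_{v_j \in V} P(v_j) \prod_{i} P(a_i \mid v_j)$$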

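Example: Naïve Bayes

Predict whether tennis is played on a day with the conditions <sunny, cool, high, strong>, i.e. P(v | o = sunny, t = cool, h = high, w = strong), using the following training data:

| Day | Outlook | Temperature | Humidity | Wind | Play Tennis |
|---|---|---|---|---|---|
| 1 | Sunny | Hot | High | Weak | No |
| 2 | Sunny | Hot | High | Strong | No |
| 3 | Overcast | Hot | High | Weak | Yes |
| 4 | Rain | Mild | High | Weak | Yes |
| 5 | Rain | Cool | Normal | Weak | Yes |
| 6 | Rain | Cool | Normal | Strong | No |
| 7 | Overcast | Cool | Normal | Strong | Yes |
| 8 | Sunny | Mild | High | Weak | No |
| 9 | Sunny | Cool | Normal | Weak | Yes |
| 10 | Rain | Mild | Normal | Weak | Yes |
| 11 | Sunny | Mild | Normal | Strong | Yes |
| 12 | Overcast | Mild | High | Strong | Yes |
| 13 | Overcast | Hot | Normal | Weak | Yes |
| 14 | Rain | Mild | High | Strong | No |

We have:

$$P(\text{yes})\,P(\text{sunny} \mid \text{yes})\,P(\text{cool} \mid \text{yes})\,P(\text{high} \mid \text{yes})\,P(\text{strong} \mid \text{yes}) = \tfrac{9}{14} \cdot \tfrac{2}{9} \cdot \tfrac{3}{9} \cdot \tfrac{3}{9} \cdot \tfrac{3}{9} \approx 0.0053$$

$$P(\text{no})\,P(\text{sunny} \mid \text{no})\,P(\text{cool} \mid \text{no})\,P(\text{high} \mid \text{no})\,P(\text{strong} \mid \text{no}) = \tfrac{5}{14} \cdot \tfrac{3}{5} \cdot \tfrac{1}{5} \cdot \tfrac{4}{5} \cdot \tfrac{3}{5} \approx 0.0206$$

so the naïve Bayes prediction is Play Tennis = No.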
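A minimal Python sketch of the calculation for this query, using plain relative-frequency estimates counted from the 14 training rows. Exact fractions are used so the counts stay visible; the helper name `score` is illustrative.

```python
from fractions import Fraction

# (Outlook, Temperature, Humidity, Wind, PlayTennis) for days 1..14.
data = [
    ("Sunny", "Hot", "High", "Weak", "No"),
    ("Sunny", "Hot", "High", "Strong", "No"),
    ("Overcast", "Hot", "High", "Weak", "Yes"),
    ("Rain", "Mild", "High", "Weak", "Yes"),
    ("Rain", "Cool", "Normal", "Weak", "Yes"),
    ("Rain", "Cool", "Normal", "Strong", "No"),
    ("Overcast", "Cool", "Normal", "Strong", "Yes"),
    ("Sunny", "Mild", "High", "Weak", "No"),
    ("Sunny", "Cool", "Normal", "Weak", "Yes"),
    ("Rain", "Mild", "Normal", "Weak", "Yes"),
    ("Sunny", "Mild", "Normal", "Strong", "Yes"),
    ("Overcast", "Mild", "High", "Strong", "Yes"),
    ("Overcast", "Hot", "Normal", "Weak", "Yes"),
    ("Rain", "Mild", "High", "Strong", "No"),
]

query = ("Sunny", "Cool", "High", "Strong")

def score(label):
    """P(v) * product over attributes of P(a_i | v), estimated by counting."""
    rows = [r for r in data if r[4] == label]
    s = Fraction(len(rows), len(data))                   # P(v)
    for i, value in enumerate(query):
        matches = sum(r[i] == value for r in rows)
        s *= Fraction(matches, len(rows))                # P(a_i | v)
    return s

scores = {v: score(v) for v in ("Yes", "No")}
v_nb = max(scores, key=scores.get)
p_no = scores["No"] / sum(scores.values())               # normalised

for v, s in scores.items():
    print(f"score({v}) = {s} = {float(s):.4f}")
print("prediction:", v_nb, f"(normalised probability {float(p_no):.3f})")
```

Normalising the two scores gives a probability of about 0.795 for Play Tennis = No.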

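Naïve Bayes Algorithm

Naïve_Bayes_Learn(examples):
• For each target value vj:
  • estimate P(vj)
  • for each attribute value ai of each attribute a, estimate P(ai | vj)

Classify_New_Instance(x):

$$v_{NB} = \arg\max_{v_j \in V} P(v_j) \prod_{a_i \in x} P(a_i \mid v_j)$$

Typical estimation of P(ai | vj):

$$P(a_i \mid v_j) = \frac{n_c + m\,p}{n + m}$$

where
• n: number of training examples with v = vj
• nc: number of examples with v = vj and a = ai
• p: prior estimate for P(ai | vj)
• m: weight given to the prior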
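The two procedures can be sketched in Python as follows. This is one possible reading of the pseudocode, not a reference implementation: the function names, the toy data set, and the choice of a uniform prior p = 1/k (k being the number of distinct values seen for the attribute) are assumptions made for illustration.

```python
from collections import Counter, defaultdict

def naive_bayes_learn(examples, m=1.0):
    """examples: list of (attribute_tuple, target_value) pairs."""
    class_counts = Counter(v for _, v in examples)        # n for each v_j
    n_attrs = len(examples[0][0])
    # Distinct values per attribute, used for the assumed uniform prior p.
    values = [sorted({x[i] for x, _ in examples}) for i in range(n_attrs)]
    counts = defaultdict(int)                             # (i, a_i, v_j) -> n_c
    for x, v in examples:
        for i, a in enumerate(x):
            counts[(i, a, v)] += 1
    priors = {v: c / len(examples) for v, c in class_counts.items()}

    def cond(i, a, v):
        """m-estimate of P(a_i | v_j) = (n_c + m p) / (n + m)."""
        p = 1.0 / len(values[i])                          # assumption: uniform p
        return (counts[(i, a, v)] + m * p) / (class_counts[v] + m)

    return priors, cond

def classify_new_instance(x, priors, cond):
    """Return argmax over v of P(v) * product of P(a_i | v), plus all scores."""
    scores = {}
    for v, pv in priors.items():
        s = pv
        for i, a in enumerate(x):
            s *= cond(i, a, v)
        scores[v] = s
    return max(scores, key=scores.get), scores

# Hypothetical toy data: (Outlook, Wind) -> PlayTennis.
examples = [
    (("Sunny", "Strong"), "No"),
    (("Sunny", "Weak"), "No"),
    (("Rain", "Weak"), "Yes"),
    (("Overcast", "Weak"), "Yes"),
    (("Overcast", "Strong"), "Yes"),
]

priors, cond = naive_bayes_learn(examples, m=1.0)
label, scores = classify_new_instance(("Rain", "Strong"), priors, cond)
print(label, scores)
```

With m = 0 the estimate reduces to the raw frequency nc / n, which is zero for any attribute value never seen with a class; a positive m keeps such a single zero from wiping out the whole product.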

Bayesian Belief Networks

• The naïve Bayes assumption of conditional independence is too restrictive
• But learning is intractable without some such assumptions
• A Bayesian belief network (Bayesian net) describes conditional independence among subsets of variables (attributes), combining prior knowledge about dependencies among variables with observed training data

Bayesian net:
• Node = variable
• Arc = dependency
• DAG, with the direction of each arc representing causality

Bayesian Networks: Multi-variables with Dependency

• A Bayesian belief network (Bayesian net) describes conditional independence among subsets of variables (attributes), combining prior knowledge about dependencies among variables with observed training data
• Bayesian net:
  • Node = variable; each variable has a finite set of mutually exclusive states
  • Arc = dependency
  • DAG, with the direction of each arc representing causality
  • To each variable A with parents B1, …, Bn there is attached a conditional probability table P(A | B1, …, Bn)

Bayesian Belief Networks

• Age, Occupation and Income determine whether a customer will buy this product.
• Given that the customer buys the product, whether there is interest in insurance is independent of Age, Occupation and Income.
• The joint distribution therefore factorises as

$$P(\text{Age}, \text{Occ}, \text{Inc}, \text{Buy}, \text{Ins}) = P(\text{Age})\,P(\text{Occ})\,P(\text{Inc})\,P(\text{Buy} \mid \text{Age}, \text{Occ}, \text{Inc})\,P(\text{Ins} \mid \text{Buy})$$

• Current state of the art: given structure and probabilities, existing algorithms can handle inference with categorical values and a limited representation of numerical values.

[Figure: network in which Occ, Age and Income point to "Buy X", which points to "Interested in Insurance"]

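Nodes as Functions

A node in a BN is a conditional distribution function:
• input: the state values of its parents
• output: a distribution over its own values

Example: node X with states l, m, h and parents A and B, with table P(X | A, B):

| X | ab | ~ab | a~b | ~a~b |
|---|---|---|---|---|
| l | 0.1 | 0.4 | 0.2 | 0.7 |
| m | 0.3 | 0.4 | 0.5 | 0.2 |
| h | 0.6 | 0.2 | 0.3 | 0.1 |

For the input A = a, B = b the node outputs P(X | A = a, B = b) = (l: 0.1, m: 0.3, h: 0.6).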
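The "node as function" view maps directly onto a lookup table. The sketch below assumes the twelve table values are read as one distribution over l, m, h per parent configuration, in the order ab, ~ab, a~b, ~a~b (each such triple sums to 1, which supports this reading). The names `CPT_X` and `node_x` are illustrative.

```python
# Conditional probability table for P(X | A, B).
# Keys are (a, b) truth values; each value is a distribution over X's states.
CPT_X = {
    (True, True):   {"l": 0.1, "m": 0.3, "h": 0.6},   # ab
    (False, True):  {"l": 0.4, "m": 0.4, "h": 0.2},   # ~ab
    (True, False):  {"l": 0.2, "m": 0.5, "h": 0.3},   # a~b
    (False, False): {"l": 0.7, "m": 0.2, "h": 0.1},   # ~a~b
}

def node_x(a: bool, b: bool) -> dict:
    """Input: parent state values. Output: distribution over X's own values."""
    return CPT_X[(a, b)]

dist = node_x(True, True)
print(dist)

# Every column of the table must be a proper distribution.
for parents, d in CPT_X.items():
    assert abs(sum(d.values()) - 1.0) < 1e-9, parents
```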

Special Case: Naïve Bayes

[Figure: node h with an arc to each of e1, e2, …, en]

$$P(e_1, e_2, \ldots, e_n, h) = P(h)\,P(e_1 \mid h) \cdots P(e_n \mid h)$$

Inference in Bayesian Networks

How likely are elderly rich people to buy the Sun?

P(paper = Sun | Age > 60, Income > 60k)

[Figure: Bayesian network with nodes Age, Income, House Owner, Living Location, Newspaper Preference, EU Voting Pattern]

Inference in Bayesian Networks

How likely are elderly rich people who voted Labour to buy the Daily Mail?

P(paper = DM | Age > 60, Income > 60k, v = labour)

[Figure: Bayesian network with nodes Age, Income, House Owner, Living Location, Newspaper Preference, EU Voting Pattern]

Bayesian Learning

[Figure: network with nodes Burglary, Earthquake, Alarm, Newscast, Call]

Data cases:

| B | E | A | C | N |
|---|---|---|---|---|
| ~b | e | a | c | n |
| b | ~e | ~a | ~c | n |
| … | … | … | … | … |

• Input: fully or partially observable data cases
• Output: parameters and also structure
• Learning methods:
  • EM (Expectation Maximisation)
    • use the current approximation of the parameters to estimate the filled-in data
    • use the filled-in data to update the parameters (ML)
  • Gradient ascent training
  • Gibbs sampling (MCMC)
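The two EM steps can be illustrated on the smallest possible case. The sketch below is a simplification, not the full five-node network: it assumes a hypothetical two-node fragment B → A with binary variables, where B is missing (None) in some data cases. The data, starting values and function name `em` are invented for illustration, and only parameters (not structure) are learned.

```python
def em(cases, theta_b=0.5, theta_a=(0.4, 0.6), iterations=50):
    """EM for a two-node net B -> A with some values of B unobserved.

    cases:   list of (b, a) with b in {0, 1, None} and a in {0, 1}
    theta_b: initial guess for P(B=1)
    theta_a: initial guesses for (P(A=1 | B=0), P(A=1 | B=1))
    """
    a0, a1 = theta_a
    n = len(cases)
    for _ in range(iterations):
        # E-step: fill in each missing B with P(B=1 | A=a) under current params.
        weights = []
        for b, a in cases:
            if b is None:
                like1 = (a1 if a else 1 - a1) * theta_b
                like0 = (a0 if a else 1 - a0) * (1 - theta_b)
                weights.append(like1 / (like1 + like0))
            else:
                weights.append(float(b))
        # M-step: maximum-likelihood update from the expected (filled-in) counts.
        n1 = sum(weights)                  # expected number of cases with B=1
        n0 = n - n1                        # expected number of cases with B=0
        theta_b = n1 / n
        a1 = sum(w * a for w, (_, a) in zip(weights, cases)) / n1
        a0 = sum((1 - w) * a for w, (_, a) in zip(weights, cases)) / n0
    return theta_b, (a0, a1)

# Hypothetical data cases; None marks an unobserved value of B.
cases = [(1, 1), (1, 1), (0, 0), (0, 0), (0, 1),
         (None, 1), (None, 0), (None, 1)]

theta_b, (a0, a1) = em(cases)
print(f"P(B=1) = {theta_b:.3f}, P(A=1|B=0) = {a0:.3f}, P(A=1|B=1) = {a1:.3f}")
```

With fully observed data the E-step does nothing and a single M-step is just counting; the iteration only matters when some cases are partially observed.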