"Stated Preference Theory (Conjoint Analysis) is the Best Way to Assess Health-Related Quality of Life for Economic Assessment of Drugs and Medical Interventions: An Application in Alzheimer Disease" • Joel Hay, PhD • Department of Pharmaceutical Economics and Policy, University of Southern California • April 21, 2006 • Presented at the ISPOR Student Teleconference • Research supported by the State of California Alzheimer Disease Research Centers Network • JHay@usc.edu

Overview • Measuring Health-Related QOL for Cost-Effectiveness Analysis • Problems with CE ratios in making decisions • Justifications for Net Monetary Benefits based on willingness to pay

Overview • Introduction to Conjoint Analysis • Alzheimer Conjoint Experiment Design • Results for the Alzheimer CA Experiment • Conclusions & Future Directions

Stated Preference Elicitation Methods • Important to the design of successful drugs and other interventions • Can reduce R&D costs enormously by focusing on the treatment effects and disease symptoms that matter most • Can be used to weight the utility of outcomes in cost-effectiveness analysis

Stated Preference Methods • Willingness to Pay / Stated Value • Standard Gamble / Time Trade-off • Global Assessment / Visual Analog • Health Status Assessment / Multi-attribute Scales • Conjoint Analysis / Discrete Choice Experiments

Stated Preference Methods • Stated preference is not revealed preference • All stated preference methods ask the subject to make hypothetical choices • All stated preference methods require the subject (patient) to consider or imagine the value of health states they don’t actually experience

Denominator of CEA Ratio • [Cost(A) - Cost(B)] / [QALY(A) - QALY(B)] • How are QALYs determined? • Patient or subject survey of health states • EQ-5D, HUI, QWB, SF-## • Results converted into QOL-weighted time • Uses someone’s utility weighting algorithm • Utility translation imprecision is generally ignored
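To make the ratio concrete, here is a minimal sketch that computes QALYs as utility-weighted time and then the CE ratio for two therapies A and B. The utility weights, durations, costs and function names are hypothetical illustrations, not values from this study.

```python
# Minimal sketch: QALYs as utility-weighted time, then the CE ratio.
# All inputs below are hypothetical illustrative values.

def qalys(health_states):
    """Sum of (utility weight x years spent in that health state)."""
    return sum(utility * years for utility, years in health_states)

def ce_ratio(cost_a, cost_b, qaly_a, qaly_b):
    """[Cost(A) - Cost(B)] / [QALY(A) - QALY(B)]; undefined when the QALY difference is 0."""
    delta_e = qaly_a - qaly_b
    if delta_e == 0:
        raise ZeroDivisionError("CE ratio is undefined when QALY(A) == QALY(B)")
    return (cost_a - cost_b) / delta_e

# Hypothetical therapy A: 2 years at utility 0.8, then 1 year at utility 0.5 (about 2.1 QALYs)
qaly_a = qalys([(0.8, 2.0), (0.5, 1.0)])
# Hypothetical therapy B: 2 years at utility 0.6, then 1 year at utility 0.4 (about 1.6 QALYs)
qaly_b = qalys([(0.6, 2.0), (0.4, 1.0)])
ratio = ce_ratio(30000, 20000, qaly_a, qaly_b)
print(round(ratio))  # 20000
```

Every step in the sketch depends on the utility weights fed into `qalys`. That dependence is exactly the "someone’s utility weighting algorithm" issue the slide raises.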

Problems with QALYs • Doesn’t accurately reflect individual utilities • Implies that people have a constant preference for healthy years • Ignores risky behaviors • Ignores life expectancy constraints • Ignores alternative consumption opportunities • Creates serious analytic problems in a CE ratio

STOCHASTIC UNCERTAINTY / VARIABILITY • Fieller’s Theorem • Probability Ellipses
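Fieller’s theorem gives a confidence set for a ratio of two (approximately jointly normal) means without assuming that the ratio itself is normal. The sketch below is minimal and assumes you already have the mean incremental cost and effect, together with their variances and covariance; the helper name and the inputs are hypothetical. The bounds are the roots of a quadratic in the ratio R, obtained from (ΔC − R·ΔE)² ≤ z²·(Var ΔC − 2R·Cov + R²·Var ΔE). When ΔE is not clearly different from zero, the set stops being a bounded interval.

```python
import math

def fieller_ci(dc, de, var_c, var_e, cov_ce, z=1.96):
    """Fieller confidence set for the ratio dc / de (hypothetical helper).

    Returns one of:
      ("interval", lo, hi)   the bounded interval [lo, hi]
      ("exclusive", lo, hi)  the set (-inf, lo] U [hi, inf), with lo < hi
      ("unbounded",)         the whole real line
    The boundary case a == 0 (a half-line) is not handled in this sketch.
    """
    # Quadratic a*R^2 - 2*b*R + k <= 0 from (dc - R*de)^2 <= z^2 * Var(dc - R*de)
    a = de**2 - z**2 * var_e
    b = dc * de - z**2 * cov_ce
    k = dc**2 - z**2 * var_c
    disc = b**2 - a * k
    if a > 0:
        # The point estimate dc/de always satisfies the inequality, so disc >= 0 here
        r = math.sqrt(max(disc, 0.0))
        return ("interval", (b - r) / a, (b + r) / a)
    if a == 0:
        raise ValueError("degenerate case a == 0 not handled")
    if disc > 0:
        # a < 0: the inequality holds outside the two roots
        r = math.sqrt(disc)
        lo, hi = sorted(((b - r) / a, (b + r) / a))
        return ("exclusive", lo, hi)
    return ("unbounded",)

# Hypothetical inputs: mean incremental cost 1000, SD 200; incremental effect SD 0.02
print(fieller_ci(1000, 0.1, 200**2, 0.02**2, 0.0))   # bounded interval around 10000
print(fieller_ci(1000, 0.01, 200**2, 0.02**2, 0.0))  # effect not clearly nonzero: exclusive set
print(fieller_ci(0, 0.01, 200**2, 0.02**2, 0.0))     # ("unbounded",)
```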

Analyzing CEA Ratio Results • Computing a 95% CI around the mean ratio is plagued with many problems [Figure: cost-effectiveness plane with axes ΔC and ΔE]

Analyzing CEA Ratio Results • Computing a 95% CI around the mean ratio is plagued with many problems • The CI can include undefined values • ΔC / ΔE • What if ΔE = 0? • CI = (LL, ∞) ∪ (−∞, UL), with UL < LL [Figure: cost-effectiveness plane with axes ΔC and ΔE]

Analyzing CEA Ratio Results • The CI can be undefined • Computing a 95% CI around the mean ratio is plagued with many problems

Analyzing CEA Ratio Results • Computing a 95% CI around the mean ratio is plagued with many problems • The same CER value can have 2 completely different meanings • a1 = a2, but many prefer a2 • b1 = b2, but in reality b1 << b2 [Figure: points a1, a2, b1 and b2 on the cost-effectiveness plane (axes ΔC and ΔE)]
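The following small numeric illustration of the point above uses hypothetical increments, not study data. Two interventions can share exactly the same CE ratio even though one lies in the north-east quadrant (more costly, more effective) and the other in the south-west quadrant (cheaper, less effective). Net monetary benefit at a given willingness to pay λ separates them. Here NMB is written in the common λ·ΔE − ΔC form, so larger is better, and λ = 50,000 per QALY is an assumed threshold.

```python
# Hypothetical increments versus a common comparator: (delta_cost, delta_qaly)
ne_point = (10000.0, 0.5)     # costs more, gains QALYs
sw_point = (-10000.0, -0.5)   # saves money, loses QALYs

def ce_ratio(dc, de):
    return dc / de

def nmb(dc, de, wtp):
    # Net monetary benefit: monetary value of the health change minus the extra cost
    return wtp * de - dc

wtp = 50000.0  # assumed willingness to pay per QALY
for name, (dc, de) in [("NE", ne_point), ("SW", sw_point)]:
    print(name, ce_ratio(dc, de), nmb(dc, de, wtp))
# NE 20000.0 15000.0
# SW 20000.0 -15000.0
```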

Analyzing CEA Ratio Results • Computing a 95% CI around the mean ratio is plagued with many problems • Ratios are not properly ordered outside the trade-off quadrants • b is better than c • c is better than a • a and b have equal ratios, when in reality b >> a [Figure: points a, b and c on the cost-effectiveness plane (axes ΔC and ΔE)]
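The same ordering problem can be reproduced with hypothetical points in the quadrant where the new therapy is both cheaper and more effective. With the made-up increments below, a and b share the same (negative) ratio, although b saves ten times as much money and yields ten times the QALY gain. Ranking by net monetary benefit (λ·ΔE − ΔC, with λ assumed to be 50,000 per QALY) recovers the ordering b > c > a.

```python
# Hypothetical (delta_cost, delta_qaly) points, all cheaper AND more effective
points = {
    "a": (-100.0, 1.0),
    "b": (-1000.0, 10.0),
    "c": (-500.0, 1.0),
}
wtp = 50000.0  # assumed willingness to pay per QALY

ratios = {k: dc / de for k, (dc, de) in points.items()}
nmbs = {k: wtp * de - dc for k, (dc, de) in points.items()}

print(ratios)                                    # a and b both -100.0
print(sorted(nmbs, key=nmbs.get, reverse=True))  # ['b', 'c', 'a']
```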

Analyzing CEA Ratio Results • Acceptability Curves

Acceptability Curves • Cost-Effectiveness Acceptability Curves • Show the probability that the new therapy will be cost-effective as a function of the societal willingness-to-pay threshold (per QALY) • Get around the problems of CEA confidence intervals • Main advantage: adopts the natural perspective / interpretation of decision makers: “how likely is it that the intervention will be cost-effective?”
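An acceptability curve is usually built from simulated or bootstrapped pairs of (ΔC, ΔE). At each threshold λ, the curve is the share of draws whose net monetary benefit λ·ΔE − ΔC is positive. The sketch below is minimal and assumes normally distributed draws with made-up means and standard deviations in place of real bootstrap output.

```python
import random

def ceac(draws, thresholds):
    """Share of (delta_cost, delta_qaly) draws that are cost-effective at each threshold."""
    curve = []
    for wtp in thresholds:
        n_ce = sum(1 for dc, de in draws if wtp * de - dc > 0)
        curve.append(n_ce / len(draws))
    return curve

rng = random.Random(42)
# Hypothetical joint draws; in practice these would come from a bootstrap or a PSA
draws = [(rng.gauss(5000, 2000), rng.gauss(0.2, 0.1)) for _ in range(5000)]
thresholds = [0, 10000, 25000, 50000, 100000]
curve = ceac(draws, thresholds)
for wtp, p in zip(thresholds, curve):
    print(f"lambda={wtp:>6}: P(cost-effective)={p:.2f}")
```

Because the curve is simply a proportion of draws at each λ, it is always defined, even where the ratio’s confidence interval is not.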

Acceptability Curves

Acceptability Curves • Analysts have had a natural tendency to interpret the results as the probability that the cost-effectiveness ratio is lower than or equal to a specific threshold (given the data) • ACs are not always monotonically increasing! Their shape depends on the data; they can go up and down! • 95% intervals cannot always be defined

Using Net Monetary Benefits to Make Decisions • The incremental net benefit (INB) was introduced to estimate whether a treatment is cost-effective • INB = IC − λ × IE (IC = incremental cost, IE = incremental effect) • λ is the decision maker’s willingness to pay for a QALY • A therapy for which the INB is lower than zero may be considered cost-effective • However, even when the acceptability curve indicates that the new treatment is cost-effective at a λ the decision maker is willing to pay, there is still a probability that the decision to reimburse the new treatment is wrong
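Note the sign convention here: INB is defined as IC − λ·IE, so a negative INB favours the new therapy. Much of the literature uses the opposite sign (λ·ΔE − ΔC, where positive means cost-effective). The following minimal sketch follows the slide’s convention with hypothetical inputs. It also uses simulated draws, which are assumptions, to estimate the probability that a decision to adopt is wrong.

```python
import random

def inb(inc_cost, inc_effect, wtp):
    # Slide's convention: INB = IC - lambda * IE (negative => cost-effective)
    return inc_cost - wtp * inc_effect

wtp = 50000.0  # hypothetical decision-maker willingness to pay per QALY
print(inb(8000.0, 0.2, wtp))  # -2000.0, so the therapy may be considered cost-effective

# Hypothetical uncertainty around IC and IE (e.g., bootstrap draws)
rng = random.Random(1)
draws = [(rng.gauss(8000, 3000), rng.gauss(0.2, 0.08)) for _ in range(10000)]
values = [inb(ic, ie, wtp) for ic, ie in draws]
mean_inb = sum(values) / len(values)
# If we adopt because mean INB < 0, the decision is wrong in draws where INB > 0
p_wrong = sum(v > 0 for v in values) / len(values)
print(round(mean_inb), round(p_wrong, 2))
```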

Using Net Monetary Benefits to Make Decisions • Incr Net Ben = Incr Cost − λ × Incr Effect • λ reflects the decision maker’s willingness to pay for the new therapy • Why are we using subjects to generate HRQOL and QALYs but not using subjects to generate λ (willingness to pay for QALYs)? • The CEA researcher is throwing away the opportunity to capture subject willingness to pay FOR NO REASON!!

EXPECTED VALUE OF PERFECT INFORMATION • Is quantifying uncertainty useful to decision makers? • The focus should be on determining the value of collecting new information (= the cost of uncertainty) • New information is valued in terms of expected reductions in decision errors (NMB loss in money) • See Claxton K. The irrelevance of inference: a decision-making approach to the stochastic evaluation of health care technologies. Journal of Health Economics 1999; 18:341-364.
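One standard way to put a number on this is the per-decision expected value of perfect information. It is the expected net benefit with perfect information minus the expected net benefit of the best decision under current information: EVPI = E[max_j NB_j] − max_j E[NB_j]. The Monte Carlo sketch below is minimal and rests on several assumptions: two options (current care vs. new therapy), NMB written as λ·ΔE − ΔC relative to current care, and hypothetical normal draws.

```python
import random

def evpi(net_benefit_draws):
    """EVPI = E[max_j NB_j] - max_j E[NB_j], from draws of per-option net benefit.

    net_benefit_draws: one tuple per simulation draw, holding the
    net monetary benefit of each decision option.
    """
    n = len(net_benefit_draws)
    n_options = len(net_benefit_draws[0])
    # With perfect information we could pick the best option in every draw
    ev_perfect = sum(max(draw) for draw in net_benefit_draws) / n
    # With current information we pick the option that is best on average
    ev_current = max(sum(draw[j] for draw in net_benefit_draws) / n for j in range(n_options))
    return ev_perfect - ev_current

rng = random.Random(7)
wtp = 50000.0  # hypothetical willingness to pay per QALY
draws = []
for _ in range(20000):
    dc, de = rng.gauss(8000, 3000), rng.gauss(0.2, 0.08)  # hypothetical increments
    draws.append((0.0, wtp * de - dc))  # NMB of current care (reference = 0) and of the new therapy
value = evpi(draws)
print(round(value))  # expected NMB loss per decision due to current uncertainty
```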

Why not Capture Willingness to Pay Directly? • Gives a more accurate and precise measure of subject trade-off between money and health states • Doesn’t force health utilities into an artificial QOL framework • Allows direct assessment of Net Benefits rather than artificial CEA ratios

Why not Capture Willingness to Pay Directly? • Even if data come from current HRQOL measures, it’s possible to develop WTP conversion scales • Need to subject existing HRQOL instruments to WTP assessment • Conjoint Analysis is the way to do this

Advantages of Conjoint Analysis • Rigorous behavioral choice model: • Random Utility Theory • Actually estimates utility parameters • Experimental design efficiently maps choice preferences in multi-attribute space • Subjects can’t “game” responses

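Random Utility Maximization • $U_{iq} = V_{iq} + \varepsilon_{iq}$ • Individual q chooses alternative i if $U_{iq} > U_{jq}$ for all $j \neq i$, $i, j \in A$ (A = the choice set) • $P_{iq} = \Pr[(V_{iq} - V_{jq}) > (\varepsilon_{jq} - \varepsilon_{iq})\ \forall j \neq i,\ j \in A]$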
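A common assumption is that the error terms ε_iq are independent and identically distributed type I extreme value (Gumbel). Under that assumption, the choice probability above takes the multinomial logit form P_iq = exp(V_iq) / Σ_j exp(V_jq). The sketch below uses hypothetical systematic utilities. It computes those logit probabilities and then checks them by simulating the random-utility choice rule directly.

```python
import math
import random

def logit_probabilities(v):
    """MNL choice probabilities exp(V_i) / sum_j exp(V_j), computed stably."""
    m = max(v)
    expv = [math.exp(x - m) for x in v]
    total = sum(expv)
    return [e / total for e in expv]

def gumbel(rng):
    """Standard type I extreme value draw via the inverse CDF: -log(-log(U))."""
    u = rng.random()
    while u == 0.0:  # random() can return 0.0, and log(0) is undefined
        u = rng.random()
    return -math.log(-math.log(u))

def simulate_choice_shares(v, n_draws, seed=0):
    """Apply the rule 'choose i if U_i > U_j for all j' with U_i = V_i + eps_i."""
    rng = random.Random(seed)
    counts = [0] * len(v)
    for _ in range(n_draws):
        u = [vi + gumbel(rng) for vi in v]
        counts[u.index(max(u))] += 1
    return [c / n_draws for c in counts]

v = [1.0, 0.5, 0.0]  # hypothetical systematic utilities V_iq for three alternatives
probs = logit_probabilities(v)
shares = simulate_choice_shares(v, 100_000)
print([round(p, 3) for p in probs])  # [0.506, 0.307, 0.186]
print(shares)                        # close to the logit probabilities
```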

Stated Preference Elicitation in Alzheimer Disease • AD treatments involve trade-offs across different functional domains • Important, since AD patients have difficulty making and expressing choices • Relies on proxy responders, usually AD caregivers

Stated Preference Elicitation in Alzheimer Disease • Develop a CA experiment to elicit preferences across functional domains for hypothetical AD patients • Include treatment costs to estimate willingness to pay for improvements or declines in daily function

Stated Preference Elicitation in Alzheimer Disease • Validate the CA design in a sample of pharmacy students • Apply the CA experiment to a sample of AD caregivers • Compare results

CA Experiment • Attributes & levels from the Health Utilities Index Mark 3 (HUI-3) • This instrument is widely used and validated • It was developed to map into a standard gamble utility scale valid for cost-effectiveness analysis • Utility scores from the HUI can be compared with random utility theory (RUT) scores

Survey • PILOT STUDY • The CA design was validated in a sample of pharmacy students: • HUI functional domain attributes were shown to be significant and consistent predictors of choice • The total HUI utility score is a much better predictor of choice than the individual components

Survey • DEMOGRAPHICS • The survey was administered to 74 AD caregivers enrolled in the California AD Research Centers. • STATED PREFERENCE SCENARIOS • HUI-3 for the AD PATIENT, as PROXIED by the CAREGIVER • HUI-3 for the CAREGIVER • SF-36 for the CAREGIVER

Survey • STATED PREFERENCE SCENARIOS • Fractional factorial experimental design • Attributes and levels taken from the Health Utilities Index Mark 3 • Levels: best / 3rd / worst • 243 combinations of comparisons that were not dominant or dominated choices • 25 × 10 choice sets each • 61 × 4 choice sets each • Cost of each choice = $100 / $50 / $0
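The slide reports that only comparisons in which neither alternative dominated the other were kept. The sketch below illustrates that filtering step under several hypothetical assumptions: two-alternative choice sets, three HUI-style attributes each at three levels coded 0 = best, 1 = 3rd level, 2 = worst, and the slide’s $0/$50/$100 cost levels. It does not reproduce the study’s actual fractional factorial design or its count of 243.

```python
from itertools import combinations, product

ATTRIBUTE_LEVELS = [0, 1, 2]   # hypothetical coding: 0 = best, 1 = 3rd level, 2 = worst
COSTS = [0, 50, 100]           # cost levels from the slide, in dollars
N_ATTRIBUTES = 3               # hypothetical: e.g. speech, ambulation, dexterity

def dominates(x, y):
    """True if profile x is at least as good as y on every attribute (a lower
    level and a lower cost are better) and strictly better on at least one."""
    return all(a <= b for a, b in zip(x, y)) and any(a < b for a, b in zip(x, y))

def non_dominated_pairs():
    # Each profile is (level_1, ..., level_k, cost)
    profiles = [levels + (cost,)
                for levels in product(ATTRIBUTE_LEVELS, repeat=N_ATTRIBUTES)
                for cost in COSTS]
    return [(x, y) for x, y in combinations(profiles, 2)
            if not dominates(x, y) and not dominates(y, x)]

pairs = non_dominated_pairs()
print(len(pairs), pairs[0])
```

A fractional factorial design would then select a subset of such candidate pairs and block them into questionnaires.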

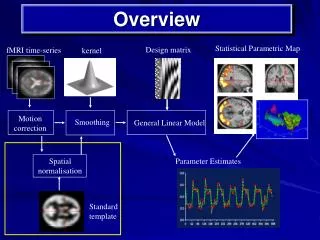

Speech Ambulation Dexterity JHay@usc.edu

Scoring the HUI • Patient HUI as proxied by the caregiver (CG) • Caregiver HUI • HUI of each choice set • Multi-attribute utility function: $u^* = 1.371\,(b_1 b_2 b_3 b_4 b_5 b_6 b_7 b_8) - 0.371$
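The multi-attribute formula can be sketched directly. The eight b values are the single-attribute multiplicative weights for the respondent’s level on each HUI-3 attribute: vision, hearing, speech, ambulation, dexterity, emotion, cognition and pain. The published level-to-weight tables are not reproduced here, so the example inputs below are hypothetical placeholders.

```python
import math

def hui3_utility(b):
    """HUI-3 multi-attribute utility: u* = 1.371 * (b1 * ... * b8) - 0.371.

    b: eight single-attribute multiplicative weights, each in [0, 1],
    where 1.0 corresponds to the best (normal) level on that attribute.
    """
    if len(b) != 8:
        raise ValueError("HUI-3 has eight attributes")
    return 1.371 * math.prod(b) - 0.371

print(round(hui3_utility([1.0] * 8), 3))  # 1.0 (perfect health)
# Hypothetical weights for someone with impairments in ambulation and cognition
print(round(hui3_utility([1.0, 1.0, 1.0, 0.73, 1.0, 1.0, 0.86, 1.0]), 3))
```

With every b equal to 1.0 the formula returns 1.0, so perfect health anchors the top of the utility scale.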

Analysis Plan • Demographics • Multinomial logit (MNL) model of HUI score and cost in predicting choice • Strength of each attribute in determining subject choice • Subject WTP for various choice attributes, determined relative to the strength of the cost attribute • Estimate subject utility • Health utility of AD patients • Mapping of the HUI-3 to the SF-36 for AD
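The sketch below is a minimal illustration of the MNL step and of how WTP falls out of it. It assumes that each choice set has two alternatives described only by an HUI score and a cost. With two alternatives, the conditional (multinomial) logit reduces to a binary logit on the attribute differences. The data are simulated from hypothetical "true" coefficients rather than taken from the survey responses. Marginal WTP for the HUI score is the coefficient ratio −β_HUI / β_cost.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def simulate_choices(n, beta_hui, beta_cost, seed=0):
    """Simulate n two-alternative choice sets; return ((x_A - x_B), chose_A) pairs."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        hui_a, hui_b = rng.uniform(0.2, 1.0), rng.uniform(0.2, 1.0)
        cost_a, cost_b = rng.choice([0, 50, 100]), rng.choice([0, 50, 100])
        dx = (hui_a - hui_b, cost_a - cost_b)
        p_a = sigmoid(beta_hui * dx[0] + beta_cost * dx[1])
        data.append((dx, 1 if rng.random() < p_a else 0))
    return data

def fit_logit(data, iterations=25):
    """Maximum likelihood for the two coefficients via Newton-Raphson."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for (x0, x1), y in data:
            p = sigmoid(b0 * x0 + b1 * x1)
            w = p * (1.0 - p)
            g0 += (y - p) * x0          # gradient of the log-likelihood
            g1 += (y - p) * x1
            h00 += w * x0 * x0          # information matrix X'WX
            h01 += w * x0 * x1
            h11 += w * x1 * x1
        det = h00 * h11 - h01 * h01
        # Newton step: beta += (X'WX)^{-1} * gradient
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
    return b0, b1

# Hypothetical "true" coefficients: utility per unit of HUI score and per dollar
data = simulate_choices(5000, beta_hui=4.0, beta_cost=-0.02)
b_hui, b_cost = fit_logit(data)
wtp_per_hui_point = -b_hui / b_cost        # dollars per 1.0 gain in HUI score
print(round(b_hui, 2), round(b_cost, 4), round(wtp_per_hui_point * 0.01, 2))  # last: WTP for +0.01 HUI
```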

Results: Descriptives

Results: Correlations

Results: Regression

Results • In a multinomial logistic regression, treatment choice was positively related to the HUI score of the chosen intervention and negatively related to treatment cost (P < 0.01). • At the margin, caregivers would be willing to spend an additional $5 to $7 per month for any AD intervention that increased patient HUI utility scores by 1%. • The highest-ranked improvements were in ambulation, emotion and cognition.

Results • While HUI scores are related to SF-36 scores, the magnitude of the relationship is fairly small. • Neither HUI score (patient or caregiver) is related to SF-36 Vitality, and the caregiver HUI score is not significantly related to SF-36 Physical Role Function. • Regression based on the HUI multi-attribute score was superior to any single-attribute model and non-inferior to models allowing unconstrained parameter weights for each HUI attribute domain.

Conclusions • Conjoint Analysis is a useful method for benchmarking the potential value of AD treatment trade-offs in terms of their costs and their impacts on patient functioning. • As found in prior studies, the HUI is a useful scale for characterizing proxy-reported patient utility levels.

Conclusions • Consistent with utility theory, the results provide a methodologically independent and innovative validation of the HUI utility scale as a strong predictor of subject health state preferences. • Demonstrates that we can convert HUI, SF-36 and other HRQOL scales directly into willingness-to-pay values for alternative health states • There is no need to add imprecision and difficulty by using Cost-Effectiveness Ratios

Conclusions • These methods will become even more relevant as we increasingly use brain scanning methods to map the utility of health states and the utility of money directly • Functional Magnetic Resonance Imaging • Positron Emission Tomography
