Review

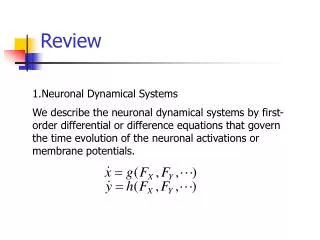

Review Rong Jin

Comparison of Different Classification Models • The goal of all classifiers • Predicting the class label y for an input x • Estimating p(y|x)

K Nearest Neighbor (kNN) Approach • Probability interpretation: estimate p(y|x) as the fraction of the points in the neighborhood N(x) that carry label y • (Figure panels: k=1 and k=4)
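
The probability interpretation can be sketched in a few lines. This is a minimal illustration, assuming Euclidean distance and a toy data set; the function name `knn_posterior` and the data are made up for the example and do not come from the slides.

```python
import math
from collections import Counter

def knn_posterior(train, x, k):
    """Estimate p(y|x) as the fraction of the k nearest training points with label y.

    train is a list of (features, label) pairs; x is a feature tuple.
    """
    # Sort training points by Euclidean distance to the query and keep the k closest.
    neighbors = sorted(train, key=lambda item: math.dist(item[0], x))[:k]
    counts = Counter(label for _, label in neighbors)
    return {label: n / k for label, n in counts.items()}

# Hypothetical toy data: two "a" points near the origin, one stray "b" nearby.
train = [((0.0, 0.0), "a"), ((0.1, 0.0), "a"), ((1.0, 1.0), "b"),
         ((0.9, 1.0), "b"), ((0.2, 0.1), "b")]
p1 = knn_posterior(train, (0.0, 0.1), k=1)
p4 = knn_posterior(train, (0.0, 0.1), k=4)
```

With k=1 the estimate is a hard 0/1 decision; a larger k gives a smoother but less local estimate, which is why the neighborhood size matters.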

K Nearest Neighbor Approach (KNN) • What is the appropriate size for the neighborhood N(x)? • Leave-one-out approach • Weighted K nearest neighbor • Neighbor is defined through a weight function • Estimate p(y|x) • How to estimate the appropriate value for σ²?

Weighted K Nearest Neighbor • Leave-one-out + maximum likelihood • Estimate the leave-one-out probability • Leave-one-out likelihood of the training data • Search for the optimal σ² by maximizing the leave-one-out likelihood
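
A rough sketch of the leave-one-out search follows. The slide's weight function did not survive extraction, so a Gaussian weight with bandwidth `sigma` is assumed here; the candidate grid and the data are hypothetical.

```python
import math

def loo_log_likelihood(train, sigma):
    """Leave-one-out log-likelihood of the training labels under weighted kNN.

    Assumes a Gaussian weight exp(-||xi - xj||^2 / (2 sigma^2)); this choice
    is an assumption of the sketch, not taken from the slides.
    """
    total = 0.0
    for i, (xi, yi) in enumerate(train):
        same, all_w = 0.0, 0.0
        for j, (xj, yj) in enumerate(train):
            if i == j:
                continue  # leave example i out of its own estimate
            w = math.exp(-math.dist(xi, xj) ** 2 / (2.0 * sigma ** 2))
            all_w += w
            if yj == yi:
                same += w
        p = same / all_w if all_w > 0.0 else 0.0
        total += math.log(max(p, 1e-12))  # floor avoids log(0)
    return total

# Hypothetical 1-D data: two well separated clusters.
train = [((0.0,), "a"), ((0.2,), "a"), ((0.4,), "a"),
         ((2.0,), "b"), ((2.2,), "b"), ((2.4,), "b")]
candidates = [0.1, 0.5, 5.0]
best_sigma = max(candidates, key=lambda s: loo_log_likelihood(train, s))
```

A very large bandwidth makes every point a neighbor of every other point, so the leave-one-out likelihood drops; the grid search simply picks the candidate with the highest value.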

Gaussian Generative Model • p(y|x) ∝ p(x|y) p(y): posterior ∝ likelihood × prior • Estimate p(x|y) and p(y) • Allocate a separate set of parameters for each class • θ = {θ1, θ2, …, θc} • p(x|y; θ) = p(x; θy) • Maximum likelihood estimation
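
As an illustration, here is a minimal one-dimensional sketch: each class gets its own mean, variance and prior by maximum likelihood, and Bayes' rule turns them into p(y|x). The function names and the data are assumptions made for the example.

```python
import math
from collections import defaultdict

def fit_gaussian_classes(xs, ys):
    """Maximum likelihood estimate of (mean, variance, prior) for each class."""
    groups = defaultdict(list)
    for x, y in zip(xs, ys):
        groups[y].append(x)
    params = {}
    for y, vals in groups.items():
        mu = sum(vals) / len(vals)
        var = sum((v - mu) ** 2 for v in vals) / len(vals)  # MLE divides by n
        params[y] = (mu, var, len(vals) / len(xs))
    return params

def posterior(params, x):
    """p(y|x) proportional to p(x|y) p(y), normalized over the classes."""
    joint = {}
    for y, (mu, var, prior) in params.items():
        lik = math.exp(-(x - mu) ** 2 / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)
        joint[y] = lik * prior
    z = sum(joint.values())
    return {y: v / z for y, v in joint.items()}

# Hypothetical data: class "a" centered at 1, class "b" centered at 6.
xs = [0.0, 1.0, 2.0, 5.0, 6.0, 7.0]
ys = ["a", "a", "a", "b", "b", "b"]
params = fit_gaussian_classes(xs, ys)
post = posterior(params, 1.0)
```

Note that the parameters of each class are fitted from that class's data alone, which is the point the later slides raise about discriminative power.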

Gaussian Generative Model • Difficult to estimate p(x|y) if x is of high dimensionality • Naïve Bayes: assume the features are independent given the class • Essentially a linear model • How to make a Gaussian generative model discriminative? • (μm, σm) of each class are estimated only from the data belonging to that class, hence a lack of discriminative power

Gaussian Generative Model • Maximum likelihood estimation • How to optimize this objective function?

Gaussian Generative Model • Bound optimization algorithm

Gaussian Generative Model • We have decomposed the interaction of parameters between different classes • Question: how to handle x with multiple features?

Logistic Regression Model • A linear decision boundary: w·x + b = 0 • A probabilistic model p(y|x) • Maximum likelihood approach for estimating the weights w and the threshold b

Logistic Regression Model • Overfitting issue • Example: text classification • A word that appears in only one document will be assigned an infinitely large weight • Solution: add a regularization term
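
The overfitting effect can be reproduced on a tiny example. The sketch below assumes labels in {-1, +1}, the model p(y|x) = 1 / (1 + exp(-y (w x + b))) and a squared penalty `lam * w**2`; the slide's exact regularizer is not preserved, so this form is an assumption.

```python
import math

def train_logreg(data, lam, steps=2000, lr=0.5):
    """Gradient descent on sum_i log(1 + exp(-y_i (w x_i + b))) + lam * w^2 (1-D input)."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        gw, gb = 2.0 * lam * w, 0.0  # gradient of the penalty term
        for x, y in data:
            # d/dz log(1 + exp(-z)) = -1 / (1 + exp(z)), with z = y (w x + b)
            g = -y / (1.0 + math.exp(y * (w * x + b)))
            gw += g * x
            gb += g
        w -= lr * gw
        b -= lr * gb
    return w, b

# A perfectly separating feature, like a word seen in only one document.
data = [(1.0, +1), (-1.0, -1)]
w_short, _ = train_logreg(data, lam=0.0, steps=200)
w_long, _ = train_logreg(data, lam=0.0, steps=2000)
w_reg, _ = train_logreg(data, lam=0.1, steps=2000)
```

Without the penalty the weight keeps growing the longer training runs; with the penalty it settles at a finite value.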

Non-linear Logistic Regression Model • Kernelize logistic regression model

Non-linear Logistic Regression Model • Hierarchical Mixture Expert Model • Group linear classifiers into a tree structure • Products generate nonlinearity in the prediction function • (Figure: a router r(x) feeds a group layer with gates g1(x) and g2(x); each group feeds an expert layer with experts m1,1(x), m1,2(x) and m2,1(x), m2,2(x))

Non-linear Logistic Regression Model • Assuming that all data points can be fitted by a single linear model may be too crude • But it is usually appropriate to assume a locally linear model • KNN can be viewed as a localized model without any parameters • Can we extend the KNN approach by introducing a localized linear model?

Localized Logistic Regression Model • Similar to the weighted KNN • Weigh each training example by a weight function of its distance to the query point • Build a logistic regression model using the weighted examples

Conditional Exponential Model • An extension of the logistic regression model to the multi-class case • A different set of weights wy and threshold by for each class y • Translation invariance
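
Translation invariance can be checked numerically. The sketch assumes the usual softmax form p(y|x) ∝ exp(wy·x + by); adding the same vector to every wy and the same constant to every by leaves the probabilities unchanged. All names and numbers are illustrative.

```python
import math

def conditional_exponential(weights, biases, x):
    """p(y|x) proportional to exp(w_y . x + b_y), one (w_y, b_y) pair per class."""
    scores = [sum(wj * xj for wj, xj in zip(w, x)) + b
              for w, b in zip(weights, biases)]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]

weights = [(1.0, 0.0), (0.0, 1.0), (-1.0, -1.0)]
biases = [0.0, 0.5, -0.5]
x = (0.3, -0.2)

# Shift every class by the same weight vector and the same threshold offset.
shift_w, shift_b = (2.0, -3.0), 1.5
shifted_weights = [tuple(wj + sj for wj, sj in zip(w, shift_w)) for w in weights]
shifted_biases = [b + shift_b for b in biases]

p = conditional_exponential(weights, biases, x)
p_shifted = conditional_exponential(shifted_weights, shifted_biases, x)
```

Because of this invariance the parameters are not uniquely determined, so one class's parameters can be fixed (or a regularizer added) without losing expressiveness.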

Maximum Entropy Model • Finding the simplest model that matches the data • Maximize entropy: prefer a uniform distribution • Constraints: enforce the model to be consistent with the observed data • Iterative scaling methods for optimization

Support Vector Machine • Classification margin • Maximum margin principle: • Separate data far away from the decision boundary • Two objectives • Minimize the classification error over the training data • Maximize the classification margin • Support vectors • Only the support vectors affect the location of the decision boundary • (Figure: two classes of points, labeled +1 and -1, with the classification margin and the support vectors marked)

Support Vector Machine • Separable case • Noisy case • Both cases are quadratic programming problems
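
The slide's formulas were lost in extraction. For reference, the standard textbook forms of the two problems are given below; the symbols C and ξ are the conventional names and are assumed here rather than taken from the slides.

Separable case:

$$
\min_{w,\,b}\ \tfrac{1}{2}\lVert w\rVert^2
\quad \text{s.t.} \quad y_i\,(w \cdot x_i + b) \ge 1, \quad i = 1,\dots,n
$$

Noisy case, with slack variables:

$$
\min_{w,\,b,\,\xi}\ \tfrac{1}{2}\lVert w\rVert^2 + C \sum_{i=1}^{n} \xi_i
\quad \text{s.t.} \quad y_i\,(w \cdot x_i + b) \ge 1 - \xi_i, \quad \xi_i \ge 0
$$

Both have a quadratic objective and linear constraints, which is what makes them quadratic programs.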

Logistic Regression Model vs. Support Vector Machine • Logistic regression model • Support vector machine • The two objectives share identical terms • They use different loss functions for punishing mistakes

Logistic Regression Model vs. Support Vector Machine • Logistic regression differs from the support vector machine only in the loss function
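
The two losses can be compared directly as functions of the margin z = y (w·x + b). This is a small sketch using the standard logistic and hinge losses; the function names are illustrative.

```python
import math

def logistic_loss(margin):
    """Loss used by logistic regression: log(1 + exp(-z))."""
    return math.log(1.0 + math.exp(-margin))

def hinge_loss(margin):
    """Loss used by the support vector machine: max(0, 1 - z)."""
    return max(0.0, 1.0 - margin)

margins = [-1.0, 0.0, 1.0, 2.0]
table = [(z, logistic_loss(z), hinge_loss(z)) for z in margins]
```

The hinge loss is exactly zero once the margin reaches 1, so well classified points stop influencing the solution; the logistic loss stays positive for every finite margin.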

Kernel Tricks • Introducing nonlinearity into the discriminative models • Diffusion kernel • A graph Laplacian L for local similarity • Propagates local similarity information into a global one
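
The kernel formula itself is missing from the transcript. A common definition of the diffusion kernel, stated here as an assumption about what the slide showed, uses the matrix exponential of the graph Laplacian L = D - A (degree matrix minus adjacency matrix) with a diffusion parameter β > 0:

$$
K_\beta = \exp(-\beta L) = \sum_{k=0}^{\infty} \frac{(-\beta)^k}{k!}\, L^k
$$

The higher powers of L connect nodes through longer paths, which is how purely local similarity is spread across the whole graph.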

Fisher Kernel • Derive a kernel function from a generative model • Key idea • Map a point x in the original input space into the model space • The similarity of two data points is measured in the model space • (Figure: original input space mapped to model space)

Kernel Methods in Generative Model • Usually, kernels can be introduced to a generative model through a Gaussian process • Define a “kernelized” covariance matrix • Positive semi-definite, similar to Mercer’s condition

Multi-class SVM • SVMs can only handle two-class outputs • One-against-all • Learn N SVMs • SVM 1 learns “Output==1” vs “Output != 1” • SVM 2 learns “Output==2” vs “Output != 2” • … • SVM N learns “Output==N” vs “Output != N”
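
The one-against-all wrapper is independent of the binary learner, so it can be sketched with any two-class scorer. To keep the example short and deterministic, a simple centroid-based scorer stands in for the SVM below; that substitution, the function names and the data are all assumptions of the sketch.

```python
import math

def train_centroid_scorer(points, labels):
    """Stand-in binary learner (not an SVM): higher score means closer to the +1 centroid."""
    def centroid(rows):
        return tuple(sum(col) / len(rows) for col in zip(*rows))
    pos = centroid([p for p, y in zip(points, labels) if y == +1])
    neg = centroid([p for p, y in zip(points, labels) if y == -1])
    return lambda x: math.dist(x, neg) - math.dist(x, pos)

def train_one_against_all(points, classes, train_binary):
    """Learn one binary model per class: 'Output == c' vs 'Output != c'."""
    models = {}
    for c in sorted(set(classes)):
        binary_labels = [+1 if y == c else -1 for y in classes]
        models[c] = train_binary(points, binary_labels)
    return models

def predict(models, x):
    """Pick the class whose binary model gives the highest score."""
    return max(models, key=lambda c: models[c](x))

# Hypothetical data: three clusters at the corners of a triangle.
points = [(0.0, 0.0), (0.5, 0.0), (0.0, 0.5),
          (4.0, 0.0), (4.5, 0.0), (4.0, 0.5),
          (0.0, 4.0), (0.5, 4.0), (0.0, 4.5)]
classes = [1, 1, 1, 2, 2, 2, 3, 3, 3]
models = train_one_against_all(points, classes, train_centroid_scorer)
predictions = [predict(models, x) for x in [(0.2, 0.2), (4.2, 0.2), (0.2, 4.2)]]
```

Swapping in a real SVM only requires a `train_binary` function that returns a scoring function such as the signed distance to the decision boundary.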

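Error Correcting Output Code (ECOC) • Encode each class into a bit vector • (Figure: a code table with one column per binary classifier S1, S2, S3, S4 and an example bit vector predicted for an input x)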
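
Decoding is usually done by picking the class whose codeword is closest in Hamming distance to the predicted bits. The slide's code table is not recoverable, so the sketch below uses a hypothetical 5-bit codebook whose codewords differ in at least three positions, which lets it correct any single wrong classifier output.

```python
def hamming(a, b):
    """Number of positions where two bit vectors differ."""
    return sum(x != y for x, y in zip(a, b))

def ecoc_decode(codebook, bits):
    """Return the class whose codeword is nearest to the predicted bit vector."""
    return min(codebook, key=lambda c: hamming(codebook[c], bits))

# Hypothetical codebook: one codeword per class, one bit per binary classifier.
codebook = {
    "c1": (0, 0, 0, 0, 0),
    "c2": (1, 1, 1, 0, 0),
    "c3": (0, 0, 1, 1, 1),
    "c4": (1, 1, 0, 1, 1),
}

clean = ecoc_decode(codebook, (1, 1, 1, 0, 0))
one_error = ecoc_decode(codebook, (1, 1, 1, 0, 1))  # last classifier is wrong
```

The redundancy in the codewords is what gives the scheme its error-correcting behavior: a few mistaken binary classifiers do not change the decoded class.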

Ordinal Regression • A special class of multi-class classification problem • There is a natural ordinal relationship between the classes • Maximum margin principle • The computation of the margin involves multiple classes • (Figure: the classes ‘good’, ‘OK’ and ‘bad’ ordered along a direction w’)

Decision Tree From slides of Andrew Moore

Decision Tree • A greedy approach for generating a decision tree • Choose the most informative feature • Using mutual information as the measure • Split the data set according to the values of the selected feature • Recurse until each data item is classified correctly • Attributes with real values • Quantize the real value into a discrete one
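
The feature selection step can be sketched as follows, using the mutual information between a discrete feature and the label, I(F; Y) = H(Y) - H(Y|F). The helper names and the toy data are illustrative only.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy of a list of labels, in bits."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def mutual_information(feature_values, labels):
    """I(F; Y) = H(Y) - H(Y | F) for one discrete feature."""
    n = len(labels)
    conditional = 0.0
    for v in set(feature_values):
        subset = [y for f, y in zip(feature_values, labels) if f == v]
        conditional += len(subset) / n * entropy(subset)
    return entropy(labels) - conditional

def most_informative_feature(rows, labels):
    """Index of the feature with the highest mutual information with the label."""
    return max(range(len(rows[0])),
               key=lambda j: mutual_information([r[j] for r in rows], labels))

# Hypothetical data: feature 0 determines the label, feature 1 is irrelevant.
rows = [("sunny", "hot"), ("sunny", "cool"), ("rainy", "hot"), ("rainy", "cool")]
labels = ["yes", "yes", "no", "no"]
mi0 = mutual_information([r[0] for r in rows], labels)
mi1 = mutual_information([r[1] for r in rows], labels)
best = most_informative_feature(rows, labels)
```

A full tree builder would split the rows on the chosen feature and call the same selection step on each subset until the labels in a subset are pure.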

Decision Tree • The overfitting problem • Tree pruning • Reduced error pruning • Rule post-pruning

Generalized Decision Tree • Each node is a linear classifier • (Figure, axes Attribute 1 and Attribute 2: a decision tree with simple data partition compared with a decision tree using classifiers for data partition)