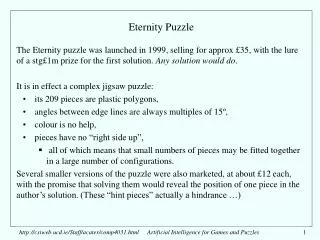

Eternity Puzzle

Eternity Puzzle. The Eternity puzzle was launched in 1999, selling for approx £35, with the lure of a stg£1m prize for the first solution. Any solution would do . It is in effect a complex jigsaw puzzle: its 209 pieces are plastic polygons,

Eternity Puzzle

E N D

Presentation Transcript

Eternity Puzzle • The Eternity puzzle was launched in 1999, selling for approx £35, with the lure of a stg£1m prize for the first solution. Any solution would do. • It is in effect a complex jigsaw puzzle: • its 209 pieces are plastic polygons, • angles between edge lines are always multiples of 15º, • colour is no help, • pieces have no “right side up”, • all of which means that small numbers of pieces may be fitted together in a large number of configurations. • Several smaller versions of the puzzle were also marketed, at about £12 each, with the promise that solving them would reveal the position of one piece in the author’s solution. (These “hint pieces” actually a hindrance …) http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

The 209 pieces - “polydudes”, “dodecadudes” • The pieces all covered the same area - 12 “tridrafters” - but with different shapes. • Each one could be imagined as a clump of interlocking equilateral triangles with half-triangles arranged on the outside, with two restrictions: • no angles of 15º or 345º • no “narrow necks”, less than triangle altitude - for manufacturing reasons? http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

The area to be filled • The area to be filled was a dodecahedron, exactly the right size for 209 pieces, symmetrical but slightly irregular: • proceeding clockwise, its edges alternate between • “full equilateral triangles” • “half equilateral triangles” http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Filling a region of size 4 http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Filling a region of size 4 http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Filling a region of size 4 http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Filling a region of size 4 http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Filling a region of size 4 http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Alex Selby’s winning solution The publishers stated that in September 2000, and every year thereafter, scrutineers would inspect all claimed solutions. To their horror, this correct solution was submitted in time for Sept 2000. (spot the parallelogram?) Selby won the £1m prize. http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Guenter Stertenbrink’s non-winning solution This different correct solution was also submitted in time for Sept 2000. He left with nothing! The two solutions were found by independent programs working on generally the same principles. http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Complexity • Mailing list contributors estimated that • a search of the entire space - not stopping after finding one solution but instead seeking all solutions - would generate approx 10300 partial layouts • small puzzles of this type are easy, larger ones rapidly get more difficult • but a puzzle of this type has a critical size: • beyond that size, a puzzle of this type will tend to have multiple solutions, not just one solution planted by the puzzle’s creator • this puzzle was too big: • critical size ~70-80 pieces: with 209 there would be ~1095 solutions • Even so, with ~1095 solutions spread around a tree with ~10300 nodes, there still are ~10205 nodes expected before finding the first solution. • Far too much time required for depth-first search. • Far too much space needed for breadth-first search. http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

The puzzle creator’s mistake • It is believed that the creator of the puzzle, C. Monckton, generated the puzzle: • using all 770 possible dodecadudes to find a tiling of the specified area, • then discarding the 551 not used in the solution. • A program set up to solve this kind of problem would show a dramatic increase in problem space complexity as the size of region to be tiled grew larger, from say 10 to 20 to 30. • Probably the creator assumed that this growth in complexity would continue, so that 209 pieces would be, in practical terms, impossible to solve. • … unaware of the existence of a critical size beyond which puzzles get easier • … unaware that hints about his solution were worse than useless http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Two phases of search • With DFS and BFS overwhelmed, some heuristic search would be required. • Several mailing-list participants pursued the following line of reasoning: • When there are many pieces available, it is relatively easy to find a piece to fill a particular site; • When only a few pieces remain, it becomes much harder to fill a site. • Some pieces are intrinsically easier than others to place in contact with neighbouring pieces in tiling arrangements. • A heuristic search that preferred to use up awkward pieces early, leaving nicer pieces for later, would have better chance of success. • Therefore, use a heuristically-guided beam search for the early stages of filling the area, followed by an exhaustive search when only a relatively small region (size 30 perhaps) and small number of pieces remain. http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Quantifying the awkwardness of pieces • Selby, Stertenbrink, and others, assessed piece awkwardness as follows: • For each of a number of smallish regions (size 24, say), • For each of several randomly chosen relatively large subsets of the 209 pieces (say 90 pieces per collection) • Find all possible ways of tiling the area with the pieces. • For each such solution, for each piece it contains, increment a count of the number of solutions the piece appears in. • The greater the number, the nicer the piece; the less the number, the more awkward the piece. • Selby used a logarithmic formula for Niceness of a piece. http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

The pieces according to (Selby’s) “niceness” or “tilability” The graph plots piece niceness against the pieces’ position in the ranking, so it is by definition monotonically nondecreasing Nicest piece has score ~+0.66 Nastiest has score ~-2.8 http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

From “the superpiece” to “the beast piece” http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Site selection - Selby • Selby’s program used • a vector-based representation of pieces, • a vector-based representation of the edge of the area currently filled. • a tabulation of • eleven-vector subsequences capable of being matched on both sides, • pieces that fit the vertex in the middle of the eleven • Placement of a piece is rejected outright if the edge it forms cannot be tiled with the remaining pieces - using the tabulation. (2-ply lookahead an option) • The tabulation also allows identification of the most constrained site along the edge of a partially tiled area. • Program aimed (in both beam-search and DFS) to fill the most constrained site. http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Selby’s Site Selection There is no authority for this example! It is meant to illustrate the principle of site selection. The area to the left of the line might have been filled, the area to the right remains to be filled, the most constrained site is obvious! http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Stertenbrink’s site selection • Stertenbrink partitioned the board into two regions: • an “endgame” region, with a shape with no nasty edge points • the rest of the area • A fixed ordering of sites in the larger area was used in the beam-search phase, • Whenever that was filled, the remaining (generally easier) pieces were used to tile the endgame region, with an exhaustive depth-first search. • Months before Selby found his solution, Stertenbrink began finding 208-piece partial solutions by this method - but that last piece would never fit the space available dammit! http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Stertenbrink’s endgame This is one of several “good endgames”, size 64. #35 #51 #52 #53 #54 http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Hybrid search • In both cases, a beam search was used for the opening phase: • width of beam is a parameter: • widths around 100-10000 were tried, (and 1M later), widening beam gives more chance of a solution but at greater cost in memory and greater move-generation cost • tradeoff between effort spent in the endgame and getting to the endgame • When the endgame is sufficiently small, exhaustive search (by a fast program) becomes feasible. • Exhaustive enumeration of solutions to numerous small underconstrained problems allows generation of piece niceness data which can be used for heuristically guided search. http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles

Reference • http://www.archduke.demon.co.uk/eternity/talk/notes.html • Caution: • if inventing a puzzle, think very hard before offering a big prize. • News: • The Eternity II puzzle has been on sale since Summer 2007, with $2M prize. http://csiweb.ucd.ie/Staff/acater/comp30260.html Artificial Intelligence for Games and Puzzles