Online Learning for Matrix Factorization and Sparse Coding

Online Learning for Matrix Factorization and Sparse Coding. Julien Mairal, Francis Bach, Jean Ponce and Guillermo Sapiro. Journal of Machine Learning Research, 2010. Introduction: this paper focuses on the large-scale matrix factorization problem, including dictionary learning for sparse coding.

Presentation Transcript

Online Learning for Matrix Factorization and Sparse Coding
Julien Mairal, Francis Bach, Jean Ponce and Guillermo Sapiro
Journal of Machine Learning Research, 2010

Introduction
• This paper focuses on the large-scale matrix factorization problem, including:
  • Dictionary learning for sparse coding
  • Non-negative matrix factorization (NMF)
  • Sparse principal component analysis (SPCA)
• Contributions of this paper:
  • An iterative online algorithm is proposed for large-scale matrix factorization.
  • The algorithm is proved to converge almost surely to a stationary point of the objective function.
  • The algorithm is shown in the experiments to be much faster than previous methods.

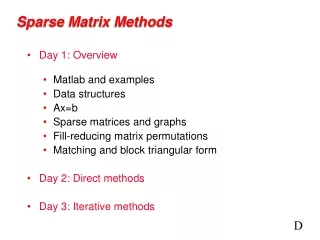

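Problem Statement
• Classical dictionary learning problem: given a finite training set $X = [x_1, \dots, x_n] \in \mathbb{R}^{m \times n}$, the objective is to minimize over the dictionary $D \in \mathbb{R}^{m \times k}$ the empirical cost $f_n(D) = \frac{1}{n} \sum_{i=1}^{n} \ell(x_i, D)$, where $\ell(x, D) = \min_{\alpha \in \mathbb{R}^k} \frac{1}{2} \|x - D\alpha\|_2^2 + \lambda \|\alpha\|_1$ and each column of $D$ satisfies $\|d_j\|_2 \le 1$.
• Online learning: the algorithm processes one sample (or a mini-batch) at a time and sequentially minimizes the surrogate function $\hat{f}_t(D) = \frac{1}{t} \sum_{i=1}^{t} \left( \frac{1}{2} \|x_i - D\alpha_i\|_2^2 + \lambda \|\alpha_i\|_1 \right)$, where $\alpha_i$ is the sparse code computed for $x_i$ at step $i$.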
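The online scheme on this slide can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the sparse coding step uses plain ISTA (the paper relies on a LARS-based solver), the dictionary is initialized from randomly chosen training samples, and every function name and default here (`soft_threshold`, `sparse_code_ista`, `update_dictionary`, `online_dictionary_learning`, `n_iter`, `eps`) is hypothetical. The matrices `A` and `B` accumulate the sums of `alpha alpha^T` and `x alpha^T`, which is all the dictionary update needs from past samples.

```python
import numpy as np

def soft_threshold(v, thresh):
    """Elementwise soft-thresholding, the proximal operator of the l1 norm."""
    return np.sign(v) * np.maximum(np.abs(v) - thresh, 0.0)

def sparse_code_ista(x, D, lam, n_iter=100):
    """Approximately solve min_a 0.5*||x - D a||^2 + lam*||a||_1 with ISTA.

    Assumption: ISTA stands in for the LARS-based solver used in the paper.
    """
    L = np.linalg.norm(D, 2) ** 2 + 1e-12  # Lipschitz constant of the gradient
    a = np.zeros(D.shape[1])
    for _ in range(n_iter):
        grad = D.T @ (D @ a - x)
        a = soft_threshold(a - grad / L, lam / L)
    return a

def update_dictionary(D, A, B, n_passes=1, eps=1e-10):
    """Block coordinate descent over the columns of D, using only A and B."""
    for _ in range(n_passes):
        for j in range(D.shape[1]):
            if A[j, j] < eps:
                continue  # atom not used by any code so far; leave it unchanged
            u = D[:, j] + (B[:, j] - D @ A[:, j]) / A[j, j]
            D[:, j] = u / max(np.linalg.norm(u), 1.0)  # project onto the unit ball
    return D

def online_dictionary_learning(X, k, lam, n_steps, seed=0):
    """Draw one column of X per step, code it, and refresh the dictionary."""
    rng = np.random.default_rng(seed)
    m, n = X.shape
    # Hypothetical initialization: k random training samples, scaled to unit norm.
    D = X[:, rng.choice(n, size=k, replace=False)].astype(float)
    D /= np.maximum(np.linalg.norm(D, axis=0), 1e-12)
    A = np.zeros((k, k))
    B = np.zeros((m, k))
    for _ in range(n_steps):
        x = X[:, rng.integers(n)]
        a = sparse_code_ista(x, D, lam)
        A += np.outer(a, a)
        B += np.outer(x, a)
        D = update_dictionary(D, A, B)
    return D
```

A call such as `online_dictionary_learning(X, k=5, lam=0.1, n_steps=20)` on a data matrix `X` with one sample per column returns a dictionary whose columns have norm at most one.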

Optimizing the Algorithm
• Handling fixed-size data sets
• Scaling the “past” data
• Mini-batch extension
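Two of these refinements only change how the accumulated statistics are updated, which the sketch below illustrates. It assumes the update rules take the form given in the JMLR paper: past statistics are down-weighted by a factor `beta = (1 - 1/t)**rho`, and a mini-batch of `eta` samples contributes the average of its outer products. The function name `update_statistics` and its argument names are hypothetical.

```python
import numpy as np

def update_statistics(A, B, X_batch, codes, t, rho=0.0):
    """Return updated (A, B) after processing one mini-batch at step t >= 1.

    X_batch: (m, eta) samples; codes: (k, eta) sparse codes of those samples.
    Assumed form: past data scaled by beta = (1 - 1/t)**rho, and the
    mini-batch contribution averaged over its eta samples.
    """
    eta = X_batch.shape[1]
    beta = (1.0 - 1.0 / t) ** rho  # rho = 0 gives beta = 1 (no rescaling)
    A_new = beta * A + (codes @ codes.T) / eta
    B_new = beta * B + (X_batch @ codes.T) / eta
    return A_new, B_new
```

With `rho=0` and a single-sample batch this reduces to the plain accumulation of `alpha alpha^T` and `x alpha^T`; a larger `rho` makes older samples, which were coded with a less accurate dictionary, count for less.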

Proof of Convergence
• Assumptions:
• Main results

Extensions to Matrix Factorization
• Non-negative matrix factorization (NMF)
• Non-negative sparse coding (NNSC)
• Sparse principal component analysis (SPCA)
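For the non-negative variants, one way to adapt the dictionary update is to clip each candidate column at zero before rescaling it. The sketch below illustrates that idea under the assumption that non-negativity of the dictionary is enforced by this simple projection. The function name `update_dictionary_nonneg` is hypothetical, and the non-negativity constraint on the codes themselves is not shown.

```python
import numpy as np

def update_dictionary_nonneg(D, A, B, eps=1e-10):
    """One block-coordinate pass over the columns of D with a non-negativity projection.

    A (k, k) and B (m, k) are the accumulated statistics of the codes and samples.
    Assumption: clipping at zero followed by rescaling keeps each column
    non-negative with norm at most one.
    """
    D = D.copy()
    for j in range(D.shape[1]):
        if A[j, j] < eps:
            continue  # column not used by any code; keep it as is
        u = D[:, j] + (B[:, j] - D @ A[:, j]) / A[j, j]
        u = np.maximum(u, 0.0)                     # projection onto the non-negative orthant
        D[:, j] = u / max(np.linalg.norm(u), 1.0)  # keep the norm at most one
    return D
```

Because each column is treated separately, the extra projection adds only one elementwise operation per column to the unconstrained update.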

Data for Experiments
• 1.25 million patches from the Pascal VOC’06 image database

Online vs. Batch
• Training data size: 1 million
• OL1:
• OL2:
• OL3:

Comparison with NMF and NNSC
• NMF
• NNSC

Inpainting Results
• Image size: 12 megapixels
• Dictionary with 256 elements
• Training data: 7 million 12 × 12 color patches

Conclusion
• The paper introduces a new online algorithm for learning dictionaries adapted to sparse coding tasks and proves its convergence.
• Experiments demonstrate that this algorithm is significantly faster than existing batch methods.
• The algorithm can be extended to other matrix factorization problems, such as non-negative matrix factorization and sparse principal component analysis.