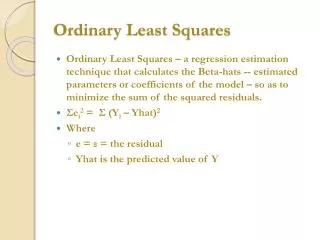

Ordinary Least Squares

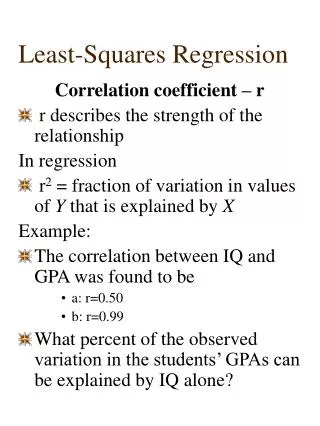

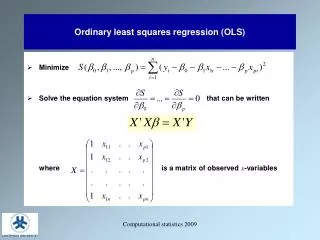

Ordinary Least Squares. Ordinary Least Squares – a regression estimation technique that calculates the Beta-hats -- estimated parameters or coefficients of the model – so as to minimize the sum of the squared residuals. Σ e i 2 = Σ (Y i – Yhat) 2 Where e = ε = the residual

Ordinary Least Squares

E N D

Presentation Transcript

Ordinary Least Squares • Ordinary Least Squares – a regression estimation technique that calculates the Beta-hats -- estimated parameters or coefficients of the model – so as to minimize the sum of the squared residuals. • Σei2 = Σ (Yi – Yhat)2 • Where • e = ε = the residual • Yhat is the predicted value of Y

Why OLS? • OLS is relative easy to use. For a model with one or two independent variables you one can run OLS with a simple spreadsheet (without using the regression function). • The goal of minimizing the sum of squared residuals is appropriate from a theoretical point of view. • OLS estimates have a number of useful characteristics.

Why not minimize residuals? • Residuals can be positive and negative. Just minimizing residuals can produce an estimator with large positive and negative errors that cancel each other out. • Minimizing the absolute value of the sum of residuals poses mathematical problems. Plus, we wish to minimize the possibility of very large errors.

Useful Characteristics of OLS • Estimated regression line goes through the means of Y and X. In equation form Mean of Y = Β0 + Β1(Mean of X) • The sum of residuals is exactly zero. • OLS estimators, under a certain set of assumptions (which we discuss later), are BLUE (Best Linear Unbiased Estimators). Note: OLS is the estimator, the coefficients or parameters are the estimates.

Classical Assumption 1 • The error term has a zero population mean. • We impose this assumption via the constant term. • The constant term equals the fixed portion of the Y that cannot be explained by the independent variables. • The error term equals the stochastic portion of the unexplained value of Y.

Classical Assumption 2 • The error term has a constant variance • Heteroskedasticity • Where does this most often occur? Cross-sectional data • Why does this occur in cross-sectional data?

Classical Assumption 3 • Observations of the error term are uncorrelated with each other. • Serial Correlation or Autocorrelation • Where does this most often occur? Time-series data • Why does this occur in time-series data?

Classical Assumption 4-5 • The data for the dependent variable and independent variable(s) do not have significant measurement errors. • The regression model is linear in the coefficients, is correctly specified, and has an additive error term.

Classical Assumption 6 • The error term is normally distributed • This is an optional assumption, but a good idea. Why? • One cannot use the t-statistic or F-statistic unless this holds (will explain these later).

Five More Assumptions • All explanatory variables are uncorrelated with the error term. • When would this not be the case? Then a system of equations is needed (i.e. supply and demand). • What are the consequences? Estimation of slope coefficient for correlated X terms is biased. • No explanatory variable is a perfect linear function of any other explanatory variable. • Perfect collinearity or multicollinearity • Consequence: OLS cannot distinguish the impact of each X on Y. • X values are fixed in repeated sampling. • The number of observations n must be greater than the number of parameters to be estimated. • There must be variability in X and Y values.

The Gauss-Markov Theorem • Given Classical Assumptions, the OLS estimator βk is the minimum variance estimator from among the set of all linear unbiased estimators of βk. • In other words, OLS is BLUE • Best Linear Unbiased Estimator • Where Best = Minimum Variance

Given assumptions… • The OLS coefficient estimators will be • unbiased • have minimum variance • are consistent • are normally distributed. • The last characteristic is important if we wish to conduct statistical tests of these estimators, the topic of the next chapter.

Unbiased Estimator and Small Variance • Unbiased estimator – an estimator whose sampling distributions has as its expected value the true value of β. • In other words... the mean value of the distribution of estimates equals the true mean of the item being estimated. • In addition to an unbiased estimate, we also prefer a “small” variance.

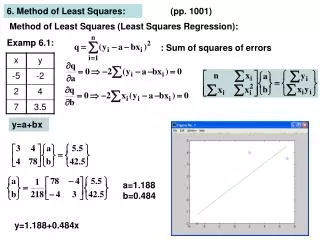

How does OLS Work?The Univariate Model • Y = β0 + β1X + ε • For Example: Wins = β0 + β1Payroll + ε • How do we calculate β1? • Intuition: β1 equals the joint variation of X and Y (around their means) divided by the variation of X around its mean. Thus it measures the portion of the variation in Y that is associated with variation in X. • In other words, the formula for the slope is: • Slope = COV(X,Y)/V(X) • or the covariance of the two variables divided by the variance of X.

How do we calculate β1?Some Simple Math β1 = Σ [(Xi - mean of X) * (Yi - mean of Y)] / Σ (Xi - mean of X)2 • If • xi = Xi - mean of X and • yi = Yi - mean of Y then • β1 = Σ[(xi*yi)] / Σ(xi)2

How do we calculate β0?Some Simple Math • β0 = mean of Y – β1*mean of X • β0 is defined to ensure that the regression equation does indeed pass through the means of Y and X.

Multivariate Regression • Multivariate regression – an equation with more than one independent variable. • Multivariate regression is necessary if one wishes to impose “ceteris paribus.” • Specifically, a multivariate regression coefficient indicates the change in the dependent variable associated with a one-unit increase in the independent variable in question holding constant the other independent variables in the equation.

Omitted Variables, again • If you do not include a variable in your model, then your coefficient estimate is not calculated with the omitted variable held constant. • In other words, if the variable is not in the model it was not held constant. • Then again.... there is the Principle of Parsimony or Occam’s razor (that descriptions be kept as simple as possible until proven inadequate). • So we don’t typically estimate regressions with “hundreds” of independent variables.

The Multivariate Model • Y = β0 + β1X1 + β2X2 + ....... βnXn + ε • For Example, a model where n=2: • Wins = β0 + β1PTS + β2DPTS + ε • Where • PTS = Points scored in a season • DPTS = Points surrendered in a season

How do we calculate β1 and β2?Some Less Simple Math Remember: xi = Xi - mean of X and yi = Yi - mean of Y • β1 = [Σ(x1*yi)*Σ(x2)2 - Σ(x2*yi)*Σ(x1*x2)] / [Σ(x1)2 * Σ(x2)2 - Σ(x1*x2 )2] • β2 = [Σ(x2*yi)*Σ(x1)2 - Σ(x1*yi)*Σ(x1*x2 )] / [Σ(x1)2 * Σ(x2)2 - Σ(x1*x2 )2] • β0 = mean of Y – β1*mean of X – β2*mean of X

Issues to Consider when reviewing regression results • Is the equation supported by sound theory? • How well does the estimated regression fit the data? • Is the data set reasonably large and accurate? • Is OLS the best estimator for this equation? • How well do the estimated coefficients correspond to our expectations? • Are all the obviously important variables included in the equation? • Has the correct functional form been used? • Does the regression appear to be free of major econometric problems? NOTE: This is just a sample of questions one can ask.