Enhancing Paraphrase Identification with Attention Mechanisms | Overview, Applications, and Impact


Presentation Transcript

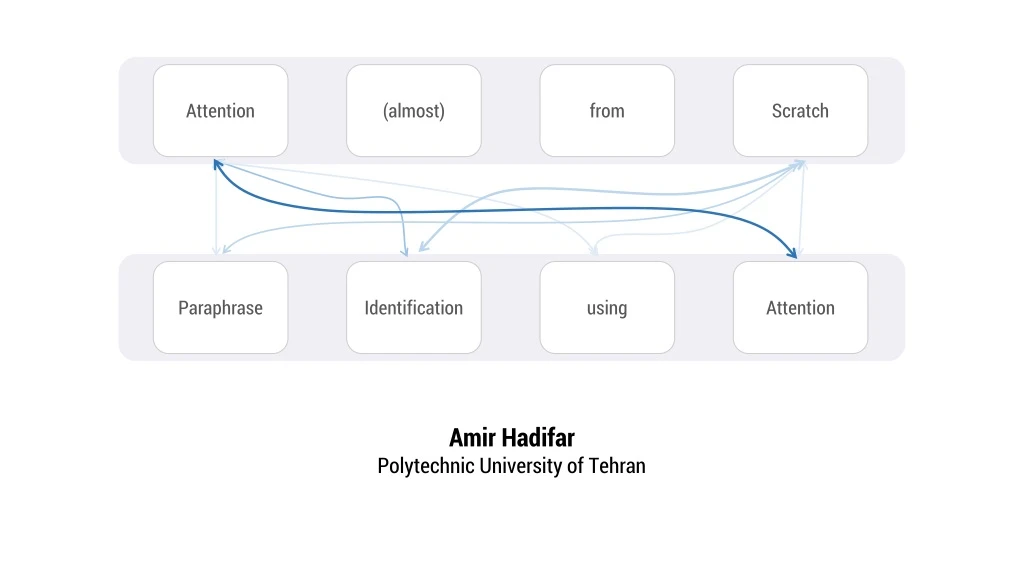

Attention (almost) from Scratch: Paraphrase Identification using Attention. Amir Hadifar, Polytechnic University of Tehran

Overview • What problem does attention solve? • What is attention? • Some applications

[Recurrent Models of Visual Attention by Mnih et al. 2014] [Photo from videoblocks.com]

[Diagram: an RNN of cells A reading the words "I am a Sentence"] [Cho et al. 2014; Sutskever et al. 2014; Goldberg 2017; Olah 2015] [RNN & Attention diagrams derived from distill.pub]

[Diagram: RNN cells A read "This is Good" with scores 1, 3, 1.2; a softmax turns the scores into weights 0.10, 0.77, 0.13]
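The softmax step on this slide can be checked by hand. The snippet below is a minimal sketch in plain Python, assuming the three scores 1, 3, 1.2 belong to the three words in order; the function name `softmax` is just an illustrative helper.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    largest = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Scores from the slide, one per word of "This is Good".
weights = softmax([1.0, 3.0, 1.2])
rounded = [round(w, 2) for w in weights]
print(rounded)  # [0.1, 0.77, 0.13]
```

The largest score dominates the result, which is what lets the model focus on one position while still giving the others some weight.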

[Diagram: RNN cell A] [Cho et al. 2014; Sutskever et al. 2014; Goldberg 2017; Olah 2015]

[Diagram: encoder cells A and decoder cells B translating between "Hello World" and its Persian equivalent "سلام دنیا"] [Bahdanau et al. 2015]

[Diagram: attention connecting decoder cells B to encoder cells A …] [Bahdanau et al. 2015]
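A rough sketch of additive attention in the style of Bahdanau et al. 2015 may help here. It assumes the slide's A cells are encoder states and the B cell is the current decoder state; the weight matrices `W_s`, `W_h`, the vector `v`, and all numbers are hypothetical toy values, not taken from the talk.

```python
import math

def softmax(scores):
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def additive_attention(decoder_state, encoder_states, W_s, W_h, v):
    """Additive scoring: score_i = v . tanh(W_s s + W_h h_i)."""
    projected_state = matvec(W_s, decoder_state)
    scores = []
    for h in encoder_states:
        projected_h = matvec(W_h, h)
        hidden = [math.tanh(a + b) for a, b in zip(projected_state, projected_h)]
        scores.append(sum(vi * ui for vi, ui in zip(v, hidden)))
    weights = softmax(scores)
    size = len(encoder_states[0])
    # The context vector is the attention-weighted sum of the encoder states.
    context = [sum(w * h[d] for w, h in zip(weights, encoder_states)) for d in range(size)]
    return weights, context

# Toy values (assumptions for illustration only).
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_state = [0.5, -0.5]
W_s = [[1.0, 0.0], [0.0, 1.0]]
W_h = [[1.0, 0.0], [0.0, 1.0]]
v = [1.0, 1.0]

weights, context = additive_attention(decoder_state, encoder_states, W_s, W_h, v)
```

In a trained model `W_s`, `W_h`, and `v` are learned jointly with the encoder and decoder, and the context vector is recomputed at every decoding step.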

[Diagram: attention connecting decoder cells B to encoder cells A …] [Luong et al. 2015]
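For comparison, Luong et al. 2015 describe multiplicative scoring functions, the simplest being a plain dot product between the decoder state and each encoder state. The sketch below assumes that "dot" variant; the states are hypothetical toy vectors.

```python
import math

def softmax(scores):
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def dot_attention(decoder_state, encoder_states):
    """Dot-product scoring: score_i = s . h_i, followed by a softmax."""
    weights = softmax([dot(decoder_state, h) for h in encoder_states])
    size = len(encoder_states[0])
    context = [sum(w * h[d] for w, h in zip(weights, encoder_states)) for d in range(size)]
    return weights, context

# Toy values (assumptions for illustration only).
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_state = [2.0, 0.0]

weights, context = dot_attention(decoder_state, encoder_states)
```

Dot-product scoring has no extra parameters, so it is cheaper than the additive form, but it requires encoder and decoder states of the same size.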



Premise: "It rained yesterday" · Hypothesis: "The weather was cloudy" · Labels: Entails / Contradicts / Neither [Images are from blog.fastforwardlabs.com; KDNuggets.com]

[Diagram: word encoder cells A … with a word attention layer on top] [Li et al. 2015; Yang et al. 2016]


[Diagram: sentence encoder cells B … with a sentence attention layer on top, followed by a softmax] [Li et al. 2015; Yang et al. 2016]

[Hierarchical Attention Networks for Document Classification by Yang et al. 2016]
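The two attention levels can be sketched with one shared pooling function in the form used by Yang et al. 2016: u_t = tanh(W h_t + b), weights = softmax(u_t · u_context), output = weighted sum of h_t. This is a simplified illustration, not the paper's full model: the word and sentence encoders are skipped, the same `W` and `b` are reused at both levels for brevity, and every number is a hypothetical toy value.

```python
import math

def softmax(scores):
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention_pool(states, W, b, context):
    """Score each state against a context vector, then return the weighted sum."""
    hidden = [[math.tanh(dot(row, h) + bias) for row, bias in zip(W, b)] for h in states]
    weights = softmax([dot(u, context) for u in hidden])
    size = len(states[0])
    pooled = [sum(w * h[d] for w, h in zip(weights, states)) for d in range(size)]
    return pooled, weights

# Toy parameters (assumptions; a trained model would learn these).
W = [[1.0, 0.0], [0.0, 1.0]]
b = [0.0, 0.0]
word_context = [1.0, 0.0]
sentence_context = [0.0, 1.0]

# A document of two sentences; each inner list stands in for one encoded word.
document = [
    [[0.1, 0.3], [0.9, 0.2], [0.4, 0.4]],
    [[0.2, 0.8], [0.3, 0.1]],
]

# Word level: pool the words of each sentence into one sentence vector.
sentence_vectors = [attention_pool(words, W, b, word_context)[0] for words in document]
# Sentence level: pool the sentence vectors into one document vector.
document_vector, sentence_weights = attention_pool(sentence_vectors, W, b, sentence_context)
```

The document vector would then feed the softmax classifier at the top of the hierarchy.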

[Diagram: cells A reading the words of two Persian questions, "reducing body fat" and "lowering the BMI value"]


[Diagram: the two questions, "reducing body fat" and "lowering the BMI value", are each padded with 0 to a common length, encoded by cells B, and passed through a pooling layer]
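Padding and pooling can be sketched as follows. The slide does not say which pooling is used, so max pooling over the non-padded positions is an assumption here, and the token ids and state vectors are hypothetical.

```python
PAD = 0  # id reserved for padding, matching the 0 shown on the slide

def pad_to(ids, length):
    """Right-pad a list of token ids with PAD up to the given length."""
    return ids + [PAD] * (length - len(ids))

def masked_max_pool(states, ids):
    """Max over time for each dimension, ignoring padded positions."""
    kept = [state for state, token in zip(states, ids) if token != PAD]
    return [max(column) for column in zip(*kept)]

# Two three-word questions padded to length 4 (hypothetical token ids).
question_a = pad_to([11, 12, 13], 4)
question_b = pad_to([21, 22, 23], 4)

# Hypothetical encoder outputs for question_a; the last row sits on a PAD position.
states_a = [[0.2, -0.1], [0.7, 0.3], [0.1, 0.9], [5.0, 5.0]]
pooled_a = masked_max_pool(states_a, question_a)
print(pooled_a)  # [0.7, 0.9]
```

Masking matters: without it, the large values on the padded position would leak into the pooled vector.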

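[Diagram: full model, bottom to top: the two zero-padded questions ("reducing body fat", "lowering the BMI value") → encoder cells B → attention layer → feed-forward network → output "Duplicate: Yes/No"]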
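The pipeline on this slide can be approximated in a few lines. This is only a sketch under stated assumptions, not the presenter's implementation: the encoder is replaced by hand-written state vectors, the attention layer is a dot product against a hypothetical query vector, the pair features (absolute difference and element-wise product) are one common choice, and all parameters are untrained toy values.

```python
import math

def softmax(scores):
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention_pool(states, query):
    """Attention layer: weight each encoder state by its match with the query."""
    weights = softmax([dot(h, query) for h in states])
    return [sum(w * h[d] for w, h in zip(weights, states)) for d in range(len(query))]

def feed_forward(features, W1, b1, w2, b2):
    """One ReLU hidden layer followed by a sigmoid output."""
    hidden = [max(0.0, dot(row, features) + bias) for row, bias in zip(W1, b1)]
    return 1.0 / (1.0 + math.exp(-(dot(w2, hidden) + b2)))

def duplicate_probability(states_a, states_b, params):
    a = attention_pool(states_a, params["query"])
    b = attention_pool(states_b, params["query"])
    # Symmetric pair features, so the order of the two questions does not matter.
    features = [abs(x - y) for x, y in zip(a, b)] + [x * y for x, y in zip(a, b)]
    return feed_forward(features, params["W1"], params["b1"], params["w2"], params["b2"])

# Untrained toy parameters (assumptions for illustration only).
params = {
    "query": [1.0, 0.5],
    "W1": [[0.5, -0.5, 1.0, 0.0], [-1.0, 0.5, 0.0, 1.0]],
    "b1": [0.0, 0.1],
    "w2": [1.0, -1.0],
    "b2": 0.0,
}

# Hypothetical encoder outputs for the two questions.
question_a = [[0.2, 0.1], [0.9, 0.4], [0.3, 0.8]]
question_b = [[0.1, 0.2], [0.8, 0.5], [0.4, 0.7]]

p = duplicate_probability(question_a, question_b, params)
label = "Yes" if p >= 0.5 else "No"
```

With untrained weights the label itself is meaningless; the point is the data flow from encoded questions through attention and the feed-forward network to a single duplicate decision.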

Performance for paraphrase identification on the Quora dataset [Rows 2 to 8 are taken from Tomar et al. 2017]

Error analysis for paraphrase identification on the Quora dataset. According to Quora, the ground-truth labels contain some amount of noise. [www.data.quora.com/First-Quora-Dataset-Release-Question-Pairs]

Last words • Still to be explored • Skip connections • Other family members • Neural Turing Machine • Adaptive Computation Time [distill.pub/2016/augmented-rnns/]

References
• Y. Goldberg. (2017). Neural Network Methods for Natural Language Processing
• D. Bahdanau, K. Cho, and Y. Bengio. (2015). Neural Machine Translation by Jointly Learning to Align and Translate
• Z. Yang, D. Yang, C. Dyer, X. He, A. Smola, and E. Hovy. (2016). Hierarchical Attention Networks for Document Classification
• I. Sutskever, O. Vinyals, and Q. Le. (2014). Sequence to Sequence Learning with Neural Networks
• K. Cho, B. van Merriënboer, C. Gulcehre, D. Bahdanau, F. Bougares, H. Schwenk, and Y. Bengio. (2014). Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation
• C. Olah. (2015). Understanding LSTM Networks. colah.github.io/posts/2015-08-Understanding-LSTMs
• M. Luong, H. Pham, and C. Manning. (2015). Effective Approaches to Attention-based Neural Machine Translation
• J. Li, M. Luong, and D. Jurafsky. (2015). A Hierarchical Neural Autoencoder for Paragraphs and Documents
• Z. Wang, W. Hamza, and R. Florian. (2017). Bilateral Multi-Perspective Matching for Natural Language Sentences
• G. Tomar, T. Duque, O. Täckström, J. Uszkoreit, and D. Das. (2017). Neural Paraphrase Identification of Questions with Noisy Pretraining

Any questions? Thanks for your attention. [Diagram: cells A reading "Thanks for your", with attention producing the word "Attention"]