Data Analysis: Simple Statistical Tests



Goals • Understand confidence intervals and p-values • Learn to use basic statistical tests, including chi-square tests and ANOVA

Types of Variables • The type of variable determines which estimates you can calculate and which statistical tests you should use • Continuous variables: • Always numeric • Generally summarized with measures such as the mean, median and standard deviation • Categorical variables: • Information that can be sorted into categories • In field investigations, we are often interested in dichotomous or binary (2-level) categorical variables • You cannot calculate a mean or median for these variables, but you can calculate risk

Measures of Association • Describe the strength of the association between two variables, such as an exposure and a disease • The two measures of association used most often are the relative risk, or risk ratio (RR), and the odds ratio (OR) • Whether to calculate an RR or an OR depends on the study design • Interpretation of RR and OR: • RR or OR = 1: exposure has no association with disease • RR or OR > 1: exposure may be positively associated with disease • RR or OR < 1: exposure may be negatively associated with disease

Risk Ratio or Odds Ratio? • Risk ratio • Used when comparing outcomes of those who were exposed to something with those who were not exposed • Calculated in cohort studies • Cannot be calculated in case-control studies because the entire population at risk is not included in the study • Odds ratio • Used in case-control studies • Odds of exposure among cases divided by odds of exposure among controls • Provides a rough estimate of the risk ratio; the approximation is best when the disease is rare

Analysis Tool: 2x2 Table • Commonly used with dichotomous variables to compare groups of people • Table puts one dichotomous variable across the rows and another dichotomous variable along the columns • Useful in determining the association between a dichotomous exposure and a dichotomous outcome

Calculating an Odds Ratio Table 1. Sample 2x2 table for Hepatitis A at Restaurant A • Table displays data from a case-control study conducted in Pennsylvania in 2003 (2) • We can calculate the odds ratio:

$$\mathrm{OR} = \frac{ad}{bc} = \frac{(218)(85)}{(45)(21)} \approx 19.6$$
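The cross-product calculation above can be checked with a few lines of Python. This is a minimal sketch that assumes the usual 2x2 layout (a = exposed cases, b = exposed controls, c = unexposed cases, d = unexposed controls), since Table 1 itself is not reproduced here; the function name `odds_ratio` is hypothetical.

```python
def odds_ratio(a: int, b: int, c: int, d: int) -> float:
    """Cross-product odds ratio ad/bc for a 2x2 table.

    Assumed layout (rows = exposure, columns = case status):
        a = exposed cases,   b = exposed controls
        c = unexposed cases, d = unexposed controls
    """
    if b == 0 or c == 0:
        raise ValueError("the odds ratio is undefined when b or c is zero")
    return (a * d) / (b * c)


# Cell counts as they appear in the hepatitis A formula: a=218, b=45, c=21, d=85
print(round(odds_ratio(218, 45, 21, 85), 1))  # 19.6
```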

Confidence Intervals • Point estimate – a calculated estimate (like risk or odds) or measure of association (risk ratio or odds ratio) • The confidence interval (CI) of a point estimate describes the precision of the estimate • The CI represents a range of values on either side of the estimate • The narrower the CI, the more precise the point estimate (3)

Confidence Intervals - Example • Example—large bag of 500 red, green and blue marbles: • You want to know the percentage of green marbles but don’t want to count every marble • Shake up the bag and select 50 marbles to give an estimate of the percentage of green marbles • Sample of 50 marbles: • 15 green marbles, 10 red marbles, 25 blue marbles

Confidence Intervals - Example • Marble example continued: • Based on the sample we conclude that 30% (15 out of 50) of the marbles are green • 30% = point estimate • How confident are we in this estimate? • The actual percentage of green marbles could be higher or lower, i.e., a sample of 50 may not reflect the distribution in the entire bag of marbles • We can calculate a confidence interval to describe the degree of uncertainty

Calculating Confidence Intervals • How do you calculate a confidence interval? • Can do so by hand or use a statistical program • Epi Info, SAS, STATA, SPSS and Episheet are common statistical programs • Default is usually 95% confidence interval but this can be adjusted to 90%, 99% or any other level

Confidence Intervals • The most commonly used confidence interval is the 95% interval • A 95% CI means that if we repeated the sampling many times, about 95% of the intervals calculated this way would contain the true population value • Assume that the 95% CI for our bag of marbles example is 17-43% • We estimated that 30% of the marbles are green: • The CI tells us that the true percentage of green marbles is most likely between 17 and 43% • Because intervals calculated this way miss the true value about 5% of the time, there is a small chance that this range (17-43%) does not contain the true percentage of green marbles

Confidence Intervals • If we want less chance of error we could calculate a 99% confidence interval • A 99% CI will have only a 1% chance of error but will have a wider range • 99% CI for green marbles is 13-47% • If a higher chance of error is acceptable we could calculate a 90% confidence interval • 90% CI for green marbles is 19-41%

Confidence Intervals • Very narrow confidence intervals indicate a very precise estimate • Can get a more precise estimate by taking a larger sample • 100 marble sample with 30 green marbles • Point estimate stays the same (30%) • 95% confidence interval is 21-39% (rather than 17-43% for original sample) • 200 marble sample with 60 green marbles • Point estimate is 30% • 95% confidence interval is 24-36% • CI becomes narrower as the sample size increases
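The marble intervals above are consistent with the normal-approximation (Wald) interval for a proportion, p ± z·sqrt(p(1 − p)/n). The method is our assumption, since the slides do not say how the intervals were calculated; other methods (for example the Wilson interval) give slightly different limits, and no finite-population correction is applied for the 500-marble bag. A minimal standard-library sketch, with a hypothetical function name:

```python
import math
from statistics import NormalDist


def proportion_ci(successes: int, n: int, level: float = 0.95) -> tuple[float, float]:
    """Normal-approximation (Wald) confidence interval for a proportion."""
    p = successes / n
    # Two-sided critical value, e.g. about 1.96 for a 95% interval
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    se = math.sqrt(p * (1 - p) / n)  # standard error of the sample proportion
    return p - z * se, p + z * se


# Marble samples from the slides: (green marbles, sample size, confidence level)
for green, n, level in [(15, 50, 0.95), (15, 50, 0.99), (15, 50, 0.90),
                        (30, 100, 0.95), (60, 200, 0.95)]:
    lo, hi = proportion_ci(green, n, level)
    print(f"{green}/{n}, {level:.0%} CI: {lo:.0%} to {hi:.0%}")
```

Rounded to whole percentages, this prints 17% to 43%, 13% to 47%, 19% to 41%, 21% to 39% and 24% to 36%, which shows the interval narrowing as the sample size grows while the point estimate stays at 30%.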

Confidence Intervals • Returning to the example of Hepatitis A in a Pennsylvania restaurant: • Odds ratio = 19.6 • 95% confidence interval of 11.0-34.9 • The lower bound of the CI in this example is 11.0 (i.e., >1), so the entire interval lies above 1 • An odds ratio of 1 means there is no difference between the two groups; OR > 1 indicates a greater risk among the exposed • Conclusion: people who ate salsa were significantly more likely to become ill than those who did not eat salsa
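One common way to compute a CI for an odds ratio is the Woolf (log) method: take ln(OR) ± z·sqrt(1/a + 1/b + 1/c + 1/d) and exponentiate the limits. Using Woolf here is our assumption; it happens to reproduce the reported 11.0-34.9 to one decimal place with the counts from the Table 1 formula, but the original analysis may have used another method, such as an exact interval. A minimal sketch with a hypothetical function name:

```python
import math
from statistics import NormalDist


def odds_ratio_ci(a: int, b: int, c: int, d: int, level: float = 0.95):
    """Odds ratio ad/bc with a Woolf (log-based) confidence interval."""
    or_ = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)  # SE of ln(OR)
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    lo = math.exp(math.log(or_) - z * se_log)
    hi = math.exp(math.log(or_) + z * se_log)
    return or_, lo, hi


or_, lo, hi = odds_ratio_ci(218, 45, 21, 85)
print(f"OR = {or_:.1f}, 95% CI {lo:.1f}-{hi:.1f}")  # OR = 19.6, 95% CI 11.0-34.9
print("Interval excludes 1:", lo > 1)
```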

Confidence Intervals • Always report CIs with your point estimates to give a sense of the precision of your estimates • Examples: • Outbreak of gastrointestinal illness at 2 primary schools in Italy (4) • Children who ate corn/tuna salad had 6.19 times the risk of becoming ill compared with children who did not eat the salad • 95% confidence interval: 4.81-7.98 • Pertussis outbreak in Oregon (5) • Case-patients had 6.4 times the odds of living with a 6-10 year-old child compared with controls • 95% confidence interval: 1.8-23.4 • Conclusion: in both examples the CI excludes 1, so the association between exposure and disease is statistically significant, although the much wider pertussis interval indicates a less precise estimate

Analysis of Categorical Data • Measure of association (risk ratio or odds ratio) • Confidence interval • Chi-square test • A formal statistical test to determine whether results are statistically significant

Chi-Square Statistics • A common analysis is whether Disease X occurs as much among people in Group A as it does among people in Group B • People are often sorted into groups based on their exposure to some disease risk factor • We then perform a test of the association between exposure and disease in the two groups

Chi-Square Test: Example • Hypothetical outbreak of Salmonella on a cruise ship • Retrospective cohort study conducted • All 300 people on the cruise ship were interviewed; 60 had symptoms consistent with Salmonella • Questionnaires indicate many of the case-patients ate tomatoes from the salad bar

Chi-Square Test: Example (cont.) Table 2a. Cohort study: Exposure to tomatoes and Salmonella infection • To see whether there is a statistically significant difference in the proportion of people who became ill between those who ate tomatoes (41/130) and those who did not (19/170), we could conduct a chi-square test
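Because the cruise ship investigation is a cohort study, the same counts also give the attack rates and the risk ratio described earlier. A minimal sketch using the counts stated in the text (41 of 130 who ate tomatoes and 19 of 170 who did not became ill); the function name `risk_ratio` is hypothetical:

```python
def risk_ratio(ill_exposed: int, n_exposed: int, ill_unexposed: int, n_unexposed: int):
    """Attack rates in each group and their ratio (exposed / unexposed)."""
    risk_exposed = ill_exposed / n_exposed
    risk_unexposed = ill_unexposed / n_unexposed
    return risk_exposed, risk_unexposed, risk_exposed / risk_unexposed


r1, r0, rr = risk_ratio(41, 130, 19, 170)
print(f"Ate tomatoes: {r1:.0%} ill; did not: {r0:.0%} ill; RR = {rr:.2f}")
# Ate tomatoes: 32% ill; did not: 11% ill; RR = 2.82
```

The risk ratio describes the strength of the association; the chi-square test is then used to judge whether the difference in attack rates is statistically significant.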

Chi-Square Test: Example (cont.) • To conduct a chi-square test the following conditions should be met: • There must be a total of at least 30 observations (people) in the table • Each cell must have an expected count of 5 or more • To conduct a chi-square test we compare the observed data (from study results) with the data we would expect to see if there were no association between exposure and illness

Chi-Square Test: Example (cont.) Table 2b. Row and column totals for tomatoes and Salmonella infection • The totals give the overall distribution of tomato consumption and of illness • Based on these distributions we can fill in the empty cells with the expected values

Chi-Square Test: Example (cont.) • Expected value for each cell:

$$\text{Expected value} = \frac{\text{Row total} \times \text{Column total}}{\text{Grand total}}$$

• For the first cell, people who ate tomatoes and became ill:

$$\text{Expected value} = \frac{130 \times 60}{300} = 26$$

• The same formula can be used to calculate the expected values for each of the cells

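Chi-Square Test: Example (cont.) Table 2c. Expected values for exposure to tomatoes • To calculate the chi-square statistic, use the observed values from Table 2a and the expected values from Table 2c • For each cell of the table, divide the squared difference between the observed and expected values by the expected value, then add the results for all cells:

$$\chi^2 = \sum_{\text{cells}} \frac{(\text{Observed} - \text{Expected})^2}{\text{Expected}}$$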

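Chi-Square Test: Example (cont.) Table 2d. Expected values for exposure to tomatoes • The chi-square (χ²) for this example is 19.2 • 8.7 + 2.2 + 6.6 + 1.7 = 19.2 • These cell values are rounded to one decimal place; summing the unrounded values gives 19.09 (about 19.1)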
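The expected values and the cell-by-cell chi-square calculation can be reproduced in a short Python sketch. The observed table is an assumption reconstructed from the counts in the text: 41 of 130 tomato eaters and 19 of 170 non-eaters were ill, so 89 and 151 were not ill. The function name is hypothetical, and the sketch computes the uncorrected (Pearson) statistic.

```python
def chi_square_2x2(observed):
    """Pearson chi-square for a 2x2 table given as [[a, b], [c, d]].

    Returns (expected table, per-cell contributions, chi-square statistic).
    """
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    grand_total = sum(row_totals)
    # Expected value = row total x column total / grand total
    expected = [[r * c / grand_total for c in col_totals] for r in row_totals]
    # (Observed - Expected)^2 / Expected for every cell
    contributions = [[(o - e) ** 2 / e for o, e in zip(obs_row, exp_row)]
                     for obs_row, exp_row in zip(observed, expected)]
    statistic = sum(sum(row) for row in contributions)
    return expected, contributions, statistic


# Rows: ate tomatoes / did not; columns: ill / not ill
observed = [[41, 89], [19, 151]]
expected, contributions, chi2 = chi_square_2x2(observed)
print(expected)  # [[26.0, 104.0], [34.0, 136.0]]
print([[round(x, 1) for x in row] for row in contributions])  # [[8.7, 2.2], [6.6, 1.7]]
print(round(chi2, 2))  # 19.09
```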

Chi-Square Test • What does the chi-square tell you? • In general, the higher the chi-square value, the greater the likelihood that there is a statistically significant difference between the two groups you are comparing • To decide, you need to find the p-value, using a chi-square table or statistical software • We will discuss p-values after a discussion of different types of chi-square tests

Types of Chi-Square Tests • Many computer programs give different types of chi-square tests • Each test is best suited to certain situations • Most commonly calculated chi-square test is Pearson’s chi-square • Use Pearson’s chi-square for a fairly large sample (>100)

Using Statistical Tests: Examples from Actual Studies • In each study, investigators chose the type of test that best applied to the situation (Note: while the chi-square value is used to determine the corresponding p-value, often only the p-value is reported.) • Pearson (Uncorrected) Chi-Square: A North Carolina study investigated 955 individuals who had been identified as partners of someone who tested positive for HIV. The study found that the proportion of partners who got tested for HIV differed significantly by race/ethnicity (p-value <0.001). The study also found that HIV-positive rates did not differ by race/ethnicity among the 610 who were tested (p = 0.4). (6)

Using Statistical Tests: Examples from Actual Studies • Additional examples: • Yates (Corrected) Chi-Square: In an outbreak of Salmonella gastroenteritis associated with eating at a restaurant, 14 of 15 ill patrons studied had eaten the Caesar salad, while 0 of 11 well patrons had eaten the salad (p-value <0.01). The dressing on the salad was made from raw eggs that were probably contaminated with Salmonella. (7) • Fisher’s Exact Test: A study of Group A Streptococcus (GAS) among children attending daycare found that 7 of 11 children who spent 30 or more hours per week in daycare had laboratory-confirmed GAS, while 0 of 4 children spending less than 30 hours per week in daycare had GAS (p-value <0.01). (8)
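The Caesar salad counts can be laid out as a 2x2 table (ill patrons: 14 ate the salad, 1 did not; well patrons: 0 ate it, 11 did not). The sketch below applies the standard Yates continuity-corrected formula, N(|ad − bc| − N/2)² / [(a+b)(c+d)(a+c)(b+d)]. The report does not say exactly how its p-value was computed, so this is an illustration only; the function name is hypothetical, and clamping the corrected difference at zero is a common safeguard we chose, not part of the source.

```python
def yates_chi_square(a: int, b: int, c: int, d: int) -> float:
    """Yates continuity-corrected chi-square for a 2x2 table [[a, b], [c, d]]."""
    n = a + b + c + d
    # Subtract N/2 from |ad - bc|; clamp at 0 so the correction never overshoots
    corrected = max(0.0, abs(a * d - b * c) - n / 2)
    numerator = n * corrected ** 2
    denominator = (a + b) * (c + d) * (a + c) * (b + d)
    return numerator / denominator


# Rows: ill / well patrons; columns: ate Caesar salad / did not
chi2 = yates_chi_square(14, 1, 0, 11)
print(round(chi2, 2))  # 18.65
# 6.635 is the chi-square critical value for p = 0.01 with 1 degree of freedom
print("p < 0.01:", chi2 > 6.635)
```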

P-Values • Using our hypothetical cruise ship Salmonella outbreak: • 32% of people who ate tomatoes got Salmonella as compared with 11% of people who did not eat tomatoes • How do we know whether the difference between 32% and 11% is a “real” difference? • In other words, how do we know that our chi-square value (calculated as 19.2) indicates a statistically significant difference? • The p-value is our indicator

P-Values • Many statistical tests give both a numeric result (e.g., a chi-square value) and a p-value • The p-value ranges between 0 and 1 • What does the p-value tell you? • The p-value is the probability of getting a result at least as extreme as the one you got, assuming that the two groups you are comparing are actually the same

P-Values • Start by assuming there is no difference in outcomes between the groups • Look at the test statistic and p-value to see if they indicate otherwise • A low p-value means that (assuming the groups are the same) the probability of observing results this extreme by chance is very small • The difference between the two groups is statistically significant • A high p-value means that the observed difference is consistent with chance; it does not prove that the groups are the same • A p-value of 1 means that the observed data showed no difference between the two groups

P-Values • Generally, if the p-value is less than 0.05, the difference observed is considered statistically significant, i.e., a difference this large would be unlikely to arise by chance alone if the groups were truly the same • You may use a number of statistical tests to obtain the p-value • The test used depends on the type of data you have

Chi-Squares and P-Values • If the chi-square statistic is small, the observed and expected data were not very different and the p-value will be large • If the chi-square statistic is large, this generally means the p-value is small, and the difference could be statistically significant • Example: Outbreak of E. coli O157:H7 associated with swimming in a lake (1) • Case-patients much more likely than controls to have taken lake water in their mouth (p-value =0.002) and swallowed lake water (p-value =0.002) • Because p-values were each less than 0.05, both exposures were considered statistically significant risk factors
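The link between a chi-square value and its p-value can be computed directly for a 2x2 table, which has 1 degree of freedom: the upper-tail probability equals erfc(sqrt(χ²/2)). A chi-square of about 3.84 corresponds to p = 0.05. A minimal standard-library sketch; the function name is hypothetical, and the formula applies only to 1 degree of freedom:

```python
import math


def chi_square_p_value_1df(chi2: float) -> float:
    """Upper-tail p-value for a chi-square statistic with 1 degree of freedom
    (the degrees of freedom for a 2x2 table)."""
    return math.erfc(math.sqrt(chi2 / 2))


# Small, moderate and large statistics, including the cruise ship value of 19.2
for chi2 in (1.0, 6.0, 19.2):
    p = chi_square_p_value_1df(chi2)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"chi-square = {chi2:5.2f} -> p = {p:.5f} ({verdict} at 0.05)")
```

As the slide describes, the small statistic gives a large p-value and the large statistic gives a very small one.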

Note: Assumptions • Statistical tests such as the chi-square assume that the observations are independent • Independence: the value of one observation does not influence the value of another • If this assumption is not true, you should not use the chi-square test • Do not use chi-square tests with: • Repeat observations of the same group of people (e.g., pre- and post-tests) • Matched-pair designs in which cases and controls are matched on variables such as sex and age

Analysis of Continuous Data • Data do not always fit into discrete categories • Continuous numeric data may be of interest in a field investigation such as: • Clinical symptoms between groups of patients • Average age of patients compared to average age of non-patients • Respiratory rate of those exposed to a chemical vs. respiratory rate of those who were not exposed

ANOVA • May compare continuous data through the Analysis Of Variance (ANOVA) test • Most statistical software programs will calculate ANOVA • Output varies slightly in different programs • For example, using Epi Info software: • Generates 3 pieces of information: ANOVA results, Bartlett’s test and Kruskal-Wallis test

ANOVA • When comparing continuous variables between groups of study subjects: • Use a t-test for comparing 2 groups • Use an F-test for comparing 3 or more groups • Both tests result in a p-value • ANOVA produces an F statistic; with 2 groups, the F statistic equals the square of the t statistic, so the two tests give the same p-value • Example: testing age differences between 2 groups • If the groups have similar average ages and a similar distribution of age values, the t-statistic will be small and the p-value will not be significant • If the average ages of the 2 groups are different, the t-statistic will be larger and the p-value will be smaller (a p-value <0.05 indicates the two groups have significantly different ages)
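The relationship between the two statistics can be seen in a short sketch. It computes the one-way ANOVA F statistic (between-group mean square divided by within-group mean square) and the pooled-variance two-sample t statistic. The ages are hypothetical, made up for illustration only, and the function names are also hypothetical. P-values, which need the t or F distribution, are left to statistical software.

```python
from statistics import mean


def one_way_anova_f(*groups):
    """One-way ANOVA F statistic: between-group mean square / within-group mean square."""
    values = [x for g in groups for x in g]
    grand_mean = mean(values)
    means = [mean(g) for g in groups]
    k, n = len(groups), len(values)
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


def pooled_t(g1, g2):
    """Two-sample t statistic assuming equal variances (pooled variance)."""
    m1, m2 = mean(g1), mean(g2)
    n1, n2 = len(g1), len(g2)
    ss = sum((x - m1) ** 2 for x in g1) + sum((x - m2) ** 2 for x in g2)
    pooled_var = ss / (n1 + n2 - 2)
    return (m1 - m2) / (pooled_var * (1 / n1 + 1 / n2)) ** 0.5


# Hypothetical ages, for illustration only
cases = [34, 41, 29, 52, 47, 38]
non_cases = [25, 31, 28, 36, 30, 27]
t = pooled_t(cases, non_cases)
f = one_way_anova_f(cases, non_cases)
print(round(t, 3), round(f, 3), round(t ** 2, 3))  # with 2 groups, F equals t squared
```

The same `one_way_anova_f` function accepts three or more groups, which is the situation where the F-test is needed.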

ANOVA and Bartlett’s Test • Critical assumption with t-tests and F-tests: the groups have similar variances (e.g., “spread” of age values) • As part of the ANOVA analysis, the software conducts a separate test to compare variances: Bartlett’s test for equality of variance • Bartlett’s test: • Produces a p-value • If Bartlett’s p-value >0.05 (not significant), it is OK to use the ANOVA results • If Bartlett’s p-value <0.05, the variances in the groups are NOT the same and you should not use the ANOVA results
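For readers who want to see what Bartlett’s test computes, here is a sketch of the textbook Bartlett statistic, which is compared with a chi-square distribution with k − 1 degrees of freedom (k = number of groups). This is not Epi Info’s implementation. The helper `chi2_sf` is hypothetical and uses closed-form tail formulas that are valid only for whole-number degrees of freedom; the sample data in the usage lines are also hypothetical.

```python
import math
from statistics import variance


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability of a chi-square distribution with integer df."""
    half = x / 2
    if df % 2 == 0:
        # Even df: finite Poisson-type sum
        return math.exp(-half) * sum(half ** i / math.factorial(i) for i in range(df // 2))
    # Odd df: erfc term plus a finite series
    total = math.erfc(math.sqrt(half))
    for i in range(1, (df - 1) // 2 + 1):
        total += math.exp(-half) * half ** (i - 0.5) / math.gamma(i + 0.5)
    return total


def bartlett(*groups):
    """Bartlett's test for equality of variances; returns (statistic, p-value)."""
    k = len(groups)
    sizes = [len(g) for g in groups]
    n = sum(sizes)
    variances = [variance(g) for g in groups]  # sample variances (n - 1 denominator)
    pooled = sum((ni - 1) * v for ni, v in zip(sizes, variances)) / (n - k)
    numerator = (n - k) * math.log(pooled) - sum(
        (ni - 1) * math.log(v) for ni, v in zip(sizes, variances))
    correction = 1 + (sum(1 / (ni - 1) for ni in sizes) - 1 / (n - k)) / (3 * (k - 1))
    stat = numerator / correction
    return stat, chi2_sf(stat, k - 1)


# Hypothetical data: similar spread vs. very different spread
print(bartlett([1, 2, 3], [4, 5, 6]))                # statistic 0, p = 1
print(bartlett([1, 2, 3, 4, 5], [10, 20, 30, 40, 50]))  # large statistic, small p
```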

Kruskal-Wallis Test • Kruskal-Wallis test: generated by Epi Info software • Used only if Bartlett’s test shows that the variances are too dissimilar to use ANOVA • Does not assume equal variances; it ranks all values together and compares the distribution of ranks between groups • Generates a p-value • If p-value >0.05 there is not a significant difference between groups • If p-value <0.05 there is a significant difference between groups
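The rank-based idea behind the Kruskal-Wallis test can be sketched as follows: all values are ranked together (tied values share their average rank), and the H statistic measures how far each group’s rank sum departs from what would be expected if the groups were the same. For reasonably large samples, H is compared with a chi-square distribution with k − 1 degrees of freedom to get the p-value. This is the textbook formula with the usual tie correction, not Epi Info’s implementation, and the function name is hypothetical.

```python
def kruskal_wallis_h(*groups):
    """Kruskal-Wallis H statistic with the usual correction for ties."""
    pooled = sorted(x for g in groups for x in g)
    n = len(pooled)
    rank_of = {}  # average rank for each distinct value (ranks start at 1)
    tie_term = 0
    i = 0
    while i < n:
        j = i
        while j + 1 < n and pooled[j + 1] == pooled[i]:
            j += 1
        rank_of[pooled[i]] = (i + j + 2) / 2  # mean of ranks i+1 .. j+1
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1
    h = 12 / (n * (n + 1)) * sum(
        sum(rank_of[x] for x in g) ** 2 / len(g) for g in groups) - 3 * (n + 1)
    # Tie correction (undefined if every value is identical)
    return h / (1 - tie_term / (n ** 3 - n))


print(kruskal_wallis_h([1, 2, 3], [4, 5, 6]))  # completely separated groups
```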

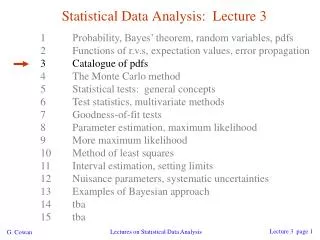

Analysis of Continuous Data
Figure 1. Decision tree for analysis of continuous data.
Bartlett’s test for equality of variance: p-value >0.05?
• YES: use the ANOVA test
  • ANOVA p<0.05: difference between groups is statistically significant
  • ANOVA p>0.05: difference between groups is NOT statistically significant
• NO: use the Kruskal-Wallis test
  • Kruskal-Wallis p<0.05: difference between groups is statistically significant
  • Kruskal-Wallis p>0.05: difference between groups is NOT statistically significant
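The Figure 1 decision tree can be written as a small function that takes the three p-values reported by the software (Bartlett, ANOVA and Kruskal-Wallis). This is a sketch with a hypothetical function name. The figure uses strict “>0.05” and “<0.05” branches, so treating a p-value of exactly 0.05 as “not significant” (and a Bartlett p-value of exactly 0.05 as pointing to Kruskal-Wallis) is our assumption.

```python
def continuous_data_decision(bartlett_p: float, anova_p: float,
                             kruskal_wallis_p: float, alpha: float = 0.05) -> str:
    """Follow the Figure 1 decision tree for comparing continuous data between groups."""
    # Step 1: Bartlett's test decides which result to use
    if bartlett_p > alpha:
        test, p = "ANOVA", anova_p          # variances similar enough for ANOVA
    else:
        test, p = "Kruskal-Wallis", kruskal_wallis_p  # variances differ
    # Step 2: interpret the chosen test's p-value
    if p < alpha:
        return f"{test}: difference between groups is statistically significant"
    return f"{test}: difference between groups is NOT statistically significant"


print(continuous_data_decision(bartlett_p=0.30, anova_p=0.01, kruskal_wallis_p=0.02))
print(continuous_data_decision(bartlett_p=0.01, anova_p=0.01, kruskal_wallis_p=0.20))
```

Note that in the second call the ANOVA p-value is ignored, because Bartlett’s test indicates the variances are too different for ANOVA.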

Conclusion • In field epidemiology a few calculations and tests make up the core of analytic methods • Learning these methods will provide a good set of field epidemiology skills. • Confidence intervals, p-values, chi-square tests, ANOVA and their interpretations • Further data analysis may require methods to control for confounding including matching and logistic regression

References 1. Bruce MG, Curtis MB, Payne MM, et al. Lake-associated outbreak of Escherichia coli O157:H7 in Clark County, Washington, August 1999. Arch Pediatr Adolesc Med. 2003;157:1016-1021. 2. Wheeler C, Vogt TM, Armstrong GL, et al. An outbreak of hepatitis A associated with green onions. N Engl J Med. 2005;353:890-897. 3. Gregg MB. Field Epidemiology. 2nd ed. New York, NY: Oxford University Press; 2002. 4. Aureli P, Fiorucci GC, Caroli D, et al. An outbreak of febrile gastroenteritis associated with corn contaminated by Listeria monocytogenes. N Engl J Med. 2000;342:1236-1241.

References 5. Schafer S, Gillette H, Hedberg K, Cieslak P. A community-wide pertussis outbreak: an argument for universal booster vaccination. Arch Intern Med. 2006;166:1317-1321. 6. Centers for Disease Control and Prevention. Partner counseling and referral services to identify persons with undiagnosed HIV -- North Carolina, 2001. MMWR Morb Mortal Wkly Rep. 2003;52:1181-1184. 7. Centers for Disease Control and Prevention. Outbreak of Salmonella Enteritidis infection associated with consumption of raw shell eggs, 1991. MMWR Morb Mortal Wkly Rep. 1992;41:369-372. 8. Centers for Disease Control and Prevention. Outbreak of invasive group A streptococcus associated with varicella in a childcare center -- Boston, Massachusetts, 1997. MMWR Morb Mortal Wkly Rep. 1997;46:944-948.