Final Exam Announcement and Homework Due Dates for Image Processing Course


Attention students! The final exam timeline and programming deadline have been set for our image processing course. The final exam will be posted no later than 4 PM on specified dates, with all written homework also due. Key topics covered include basic signal processing, image features, segmentation, camera calibration, and edge detection. Ensure all assignments are submitted via email for confirmation. Be prepared to demonstrate your understanding of concepts such as Fourier transforms, Gaussian smoothing, and edge localization. Good luck!


Presentation Transcript

Review April 24

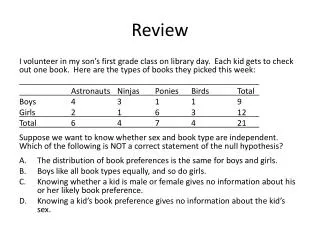

Timeline • Final exam will be posted no later than 4pm. • Calendar (M Tu W Th F Sa Su): 23 24 25 26 27 28 29 / 1 2 3 4 5 6 / 7 • Programming #3 due. • Final exam due no later than 4pm! All written homework due. • Send me an email to confirm that I have received your final exam.

Topics Covered • Basic signal processing • Image features and segmentation • Camera calibration • Geometry reconstruction • Shape from shading • Motion (optical flows) • Pattern classification Past 4 weeks Short chapter in Horn

Image Formation

Image Formation Image irradiance is proportional to scene radiance!

Signal Processing Image Smoothing and Filtering Problem: Given an image I corrupted by noise n, attenuate n as much as possible (ideally, eliminate it altogether) without changing I significantly. Linear filter: convolve the image with a constant matrix, called a mask or kernel. Analyzing this process requires Fourier analysis. Main idea: decompose the signal (image) into frequency components.

Example: Mean Filter If the entries of the kernel A are non-negative, the filter performs average smoothing. Mean filter: take A to be the following matrix (with m = 3): $A = \frac{1}{9}\begin{bmatrix} 1 & 1 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{bmatrix}$. Effect: replaces a pixel value with the mean of its m × m neighborhood. Intuitively, averaging takes out small variations: averaging m² independent noise values divides the standard deviation of the noise by $\sqrt{m^2} = m$.
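The noise-reduction claim above can be checked numerically. The following is a minimal sketch, assuming NumPy is available; the function name `mean_filter` and the choice of replicated borders are illustrative assumptions, not part of the slides.

```python
import numpy as np

def mean_filter(image, m=3):
    """Replace each pixel by the mean of its m x m neighborhood (m odd)."""
    pad = m // 2
    h, w = image.shape
    # Assumption: replicate border pixels so the output keeps the input shape.
    padded = np.pad(image.astype(float), pad, mode="edge")
    out = np.zeros((h, w))
    # Sum the m*m shifted copies of the image, then divide by m^2.
    for dy in range(m):
        for dx in range(m):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (m * m)

rng = np.random.default_rng(0)
noise = rng.normal(0.0, 1.0, size=(256, 256))  # white Gaussian noise, sigma = 1
smoothed = mean_filter(noise, m=3)
# Ratio of standard deviations; for independent noise it should be near m = 3.
ratio = noise.std() / smoothed.std()
```

The ratio is only approximately m, because border pixels average repeated values and the sample is finite.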

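Fourier Transform We can expand the function in the “Fourier” basis: $f(x, y) = \iint F(u, v)\, e^{2\pi i (ux + vy)}\, du\, dv$ (using the convention with $2\pi$ in the exponent). The “coefficient function” is given by the Fourier transform of f(x, y): $F(u, v) = \iint f(x, y)\, e^{-2\pi i (ux + vy)}\, dx\, dy$. Recall the convolution between two functions, f and h: $(f * h)(x, y) = \iint f(x', y')\, h(x - x', y - y')\, dx'\, dy'$.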

Spatial domain (the domain on which the function is defined) → Fourier transform → Frequency domain (coefficients of the wave components) → Inverse Fourier transform → Spatial domain

Important properties of the Fourier transform 1. The Fourier transform of a convolution is a product: convolution in the spatial domain becomes multiplication in the frequency domain. Using the inverse transform, we have the converse: a product in the spatial domain becomes convolution in the frequency domain. 2. Rayleigh’s theorem (Parseval’s theorem): the total energy of the signal is the same in both domains (up to the normalization convention).
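Both properties can be verified on small arrays. This is a sketch for the discrete, periodic case only (NumPy's DFT implies circular convolution and an unnormalized forward transform); the array names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(1)
f = rng.normal(size=(16, 16))
h = rng.normal(size=(16, 16))
n = f.shape[0]

# Circular convolution from its definition:
# (f * h)[x, y] = sum over (u, v) of f[u, v] * h[x - u, y - v], indices wrap.
direct = np.zeros_like(f)
for u in range(n):
    for v in range(n):
        shifted_h = np.roll(np.roll(h, u, axis=0), v, axis=1)
        direct += f[u, v] * shifted_h

# Property 1: transform, multiply pointwise, transform back.
via_fft = np.real(np.fft.ifft2(np.fft.fft2(f) * np.fft.fft2(h)))
convolution_matches = np.allclose(direct, via_fft)

# Property 2 (Rayleigh/Parseval): with NumPy's unnormalized forward DFT,
# the frequency-domain energy must be divided by the number of samples.
energy_space = np.sum(f ** 2)
energy_freq = np.sum(np.abs(np.fft.fft2(f)) ** 2) / f.size
```

For continuous signals the same statements hold with integrals in place of sums, under the chosen normalization of the transform.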

Gaussian smoothing: the kernel is a 2-D Gaussian. The Fourier transform of a Gaussian is still a Gaussian and has no secondary lobes. This makes the Gaussian kernel a better low-pass filter than the mean filter. Another important fact about the Gaussian kernel: it is separable. In practice, this means that the 2-D convolution can be computed by first convolving all rows and then all columns with a 1-D Gaussian having the same standard deviation.
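Separability can be illustrated by comparing the two-pass result with a direct 2-D filtering. This is a sketch under stated assumptions: the kernel is truncated at 3 standard deviations, borders are zero-padded, and all function names are hypothetical.

```python
import numpy as np

def gaussian_kernel_1d(sigma):
    """Sampled 1-D Gaussian, truncated at 3 sigma and normalized to sum to 1."""
    radius = int(np.ceil(3 * sigma))
    x = np.arange(-radius, radius + 1)
    g = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return g / g.sum()

def smooth_separable(image, sigma):
    """Convolve every row, then every column, with the same 1-D Gaussian."""
    g = gaussian_kernel_1d(sigma)
    rows = np.apply_along_axis(np.convolve, 1, image.astype(float), g, mode="same")
    return np.apply_along_axis(np.convolve, 0, rows, g, mode="same")

def smooth_full_2d(image, sigma):
    """Filter with the full 2-D kernel (outer product of the 1-D kernel)."""
    g = gaussian_kernel_1d(sigma)
    kernel = np.outer(g, g)
    r = len(g) // 2
    h, w = image.shape
    padded = np.pad(image.astype(float), r)  # zero padding, like mode="same"
    out = np.zeros((h, w))
    for dy in range(len(g)):
        for dx in range(len(g)):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out

rng = np.random.default_rng(2)
img = rng.normal(size=(32, 32))
same_result = np.allclose(smooth_separable(img, 1.5), smooth_full_2d(img, 1.5))
```

The two-pass version costs on the order of 2k multiplications per pixel for a kernel of width k, against k² for the full 2-D kernel.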

Image Features Corners and Edges Edges (or Edge points) are pixels at or around which the image values undergo a sharp variation. Edge Detection: Given an image corrupted by acquisition noise, locate the edges most likely to be generated by scene elements, not by noise.

Edge Formation: • Occluding Contours • Two regions are images of two different surfaces • Discontinuity in surface orientation or reflectance properties

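More realistically, due to blurring and noise, we generally have edges that are not ideal steps. Image Gradients The gradient $\nabla I = (\partial I / \partial x,\ \partial I / \partial y)$ points in the direction in which the values of the function change most rapidly. Its magnitude gives you the rate of change.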

Three Steps of Edge Detection • Noise Smoothing: Suppress as much of the image noise as possible. In the absence of specific information, assume the noise is white and Gaussian. • Edge Enhancement: Design a filter responding to edges. The filter’s output is large at edge pixels and low elsewhere. Edges can be located as the local maxima in the filter’s output. • Edge Localization: Decide which local maxima in the filter’s output are edges and which are just caused by noise. This involves: • Thinning wide edges to 1-pixel width (nonmaximum suppression); • Establishing the minimum value to declare a local maximum an edge (thresholding).

Edge Descriptors (the output of an edge detector) • Edge normal: The direction of the maximum intensity variation at the edge point. • Edge direction: The direction tangent to the edge. • Edge position: The location of the edge in the image. • Edge strength: A measure of local image contrast, i.e., how significant the intensity variation is across the edge.
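The descriptors above can be computed from the image gradient. The sketch below is deliberately simplified: it uses central differences (`np.gradient`) as the enhancement filter and plain thresholding for localization, and it omits both the smoothing step and nonmaximum suppression. The function name `edge_descriptors` is an assumption for illustration.

```python
import numpy as np

def edge_descriptors(image, threshold):
    """Return an edge mask plus strength, normal angle and tangent angle."""
    # np.gradient returns derivatives along rows (y) first, then columns (x).
    Iy, Ix = np.gradient(image.astype(float))
    strength = np.hypot(Ix, Iy)       # edge strength: gradient magnitude
    normal = np.arctan2(Iy, Ix)       # edge normal: direction of max variation
    direction = normal + np.pi / 2    # edge direction: tangent to the edge
    edges = strength >= threshold     # edge position: thresholded pixels
    return edges, strength, normal, direction

# Ideal vertical step edge between columns 7 and 8.
step = np.zeros((8, 16))
step[:, 8:] = 1.0
edges, strength, normal, direction = edge_descriptors(step, threshold=0.25)
edge_columns = np.nonzero(edges.any(axis=0))[0].tolist()
```

Without thinning, the central difference responds on both sides of the step, so the detected edge here is two pixels wide; nonmaximum suppression is the step that would reduce it to one.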

Image Segmentation Active Contour • How to represent contours? • Explicit scheme • Implicit scheme • How to characterize the desired contour? • As the (global) minimum of a cost function. • How to deform the contour? • Discrete optimization (typically for the explicit scheme) • Partial differential equation (for the implicit scheme).

Contour Representation (explicit scheme) • A contour is represented as • A collection of control points • An interpolating scheme • Linear interpolation gives a piecewise linear curve • Other interpolation schemes, such as splines, give smoother curves. MATLAB has several routines for splines and polynomial interpolation.

Contour Representation (implicit scheme) Represent a contour as the zero level set of a continuous function. No need to keep track of the control points. Given a contour, one such continuous function is the signed distance function from the contour. The magnitude of the value at each point is the distance to the given contour. Exterior points are assigned positive values and interior points have negative values.
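As a concrete instance of the implicit scheme, here is a minimal sketch of the signed distance function for a circular contour, sampled on a pixel grid. The function name and the (x, y) ordering of `center` are illustrative assumptions.

```python
import numpy as np

def signed_distance_circle(shape, center, radius):
    """Signed distance to a circle: negative inside, zero on it, positive outside."""
    yy, xx = np.mgrid[0:shape[0], 0:shape[1]]
    return np.hypot(xx - center[0], yy - center[1]) - radius

psi = signed_distance_circle((41, 41), center=(20, 20), radius=10)
inside = psi < 0            # interior of the contour
on_contour = psi == 0       # grid points lying exactly on the zero level set
```

No control points are stored: the contour is recovered whenever needed as the set where the function changes sign.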

What is the desired contour? Variational framework: Express the desired contour as the minimum of an energy functional (cost function). s is the arc-length parameterization of the contour C = C(s). Each term in the energy functional corresponds to a force acting on the contour. The weighting coefficients control the relative strength of the corresponding energy terms, and they may vary along the contour.

The first two terms, the continuity and smoothness constraints, are internal to the contour. The third term, an external force, accounts for the edge attraction, dragging the contour toward the closest image edge. The first term (which minimizes contour length) is written in discrete form, or, better, in a modified form that prevents the control points from clustering.

The second term minimizes the curvature to avoid zigzags or oscillations. What is curvature? Curvature measures how fast the tangents of the contour vary. Curvature is related to the second derivatives, and, using central differences, the second derivative at control point $p_i$ can be approximated by $p_{i-1} - 2p_i + p_{i+1}$.

Edge Attraction term This term depends only on the contour and is independent of its derivatives. In general, the weighting coefficients are set to constants, and their values will influence the result.
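The equations on these slides did not survive extraction. For orientation, a commonly used form of the snake energy is written out below. Treat it as an assumption rather than the slides' exact notation: the weight names $\alpha$, $\beta$, $\gamma$ and the term names $E_{cont}$, $E_{curv}$, $E_{image}$ are chosen here (the $b_i$ in the algorithm slide is read as $\beta_i$), and $\bar{d}$ denotes the average distance between neighboring control points.

```latex
% Energy functional: three weighted terms integrated along the contour C(s)
E = \int \Big( \alpha(s)\, E_{cont} + \beta(s)\, E_{curv} + \gamma(s)\, E_{image} \Big)\, ds

% Discrete forms at control point p_i (assumed, standard choices):
E_{cont}  = \lVert p_i - p_{i-1} \rVert^2
            \quad \text{or, to prevent clustering,} \quad
            \big( \bar{d} - \lVert p_i - p_{i-1} \rVert \big)^2
E_{curv}  = \lVert p_{i-1} - 2 p_i + p_{i+1} \rVert^2
E_{image} = -\lVert \nabla I(p_i) \rVert
```

The minus sign in the image term makes strong gradients (edges) correspond to low energy, which is what pulls the contour toward them.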

Algorithm Snake (using explicit representation) Inputs: image I and a chain of points on the image; f, the minimum fraction of snake points that must move in each iteration; U(p), a small neighborhood of p; and d, the average distance between consecutive snake points. Repeat while at least a fraction f of the snake points move: • For each i = 1, …, N, find the location in U(p_i) for which the energy functional is minimum and move the control point p_i to that point. • For each i = 1, …, N, estimate the curvature and look for local maxima. • Set b_i = 0 for all p_i at which the curvature has a local maximum or exceeds some user-defined value. • Update the value of the average distance, d.
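The greedy update in the first step can be sketched as follows. This is a simplified illustration, not the full algorithm: it assumes a closed chain, a 3 x 3 neighborhood for U(p), constant weights named `alpha`, `beta`, `gamma` (assumed names), no per-neighborhood normalization of the energy terms, and it omits the curvature-based step that sets b_i = 0 at corners.

```python
import numpy as np

def greedy_snake(grad_mag, points, alpha=1.0, beta=1.0, gamma=1.2,
                 f=0.05, max_iter=200):
    """Greedy snake on a closed chain of integer (row, col) control points."""
    pts = np.array(points, dtype=int)
    n = len(pts)
    h, w = grad_mag.shape
    # The current position (0, 0) is tried first, so a point only moves
    # when a neighbor has strictly lower energy.
    offsets = [(0, 0)] + [(dy, dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1)
                          if (dy, dx) != (0, 0)]
    for _ in range(max_iter):
        # d: average distance between consecutive points, updated each pass.
        d = np.mean(np.linalg.norm(pts - np.roll(pts, 1, axis=0), axis=1))
        moved = 0
        for i in range(n):
            prev, nxt = pts[i - 1], pts[(i + 1) % n]
            best, best_e = pts[i].copy(), np.inf
            for dy, dx in offsets:
                q = pts[i] + np.array((dy, dx))
                if not (0 <= q[0] < h and 0 <= q[1] < w):
                    continue
                e_cont = (d - np.linalg.norm(q - prev)) ** 2  # even spacing
                e_curv = np.sum((prev - 2 * q + nxt) ** 2)    # low curvature
                e_img = -grad_mag[q[0], q[1]]                 # edge attraction
                e = alpha * e_cont + beta * e_curv + gamma * e_img
                if e < best_e:
                    best, best_e = q, e
            if np.any(best != pts[i]):
                moved += 1
            pts[i] = best
        if moved < f * n:  # fewer than a fraction f of the points moved
            break
    return pts

# Synthetic "edge map": strongest response on a circle of radius 10.
center = 20
yy, xx = np.mgrid[0:41, 0:41]
ring = -np.abs(np.hypot(yy - center, xx - center) - 10.0)
theta = np.linspace(0, 2 * np.pi, 12, endpoint=False)
init = np.stack([np.rint(center + 14 * np.sin(theta)),
                 np.rint(center + 14 * np.cos(theta))], axis=1).astype(int)
snake = greedy_snake(ring, init)
```

Because the energy terms are not normalized here, the balance between the weights depends on the scale of `grad_mag`; in practice the terms are usually rescaled within each neighborhood before being combined.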

Main problem with explicit scheme Keeping track of control points can be cumbersome. In particular, topological change is difficult to execute correctly.

Implicit Scheme is considerably better with topological change. • Transition from Active Contours: • contour v(t) → front γ(t) • contour energy → forces F_A, F_C • image energy → speed function k_I • Level set: • The level set c_0 at time t of a function ψ(x,y,t) is the set of arguments { (x,y) : ψ(x,y,t) = c_0 } • Idea: define a function ψ(x,y,t) so that at any time, γ(t) = { (x,y) : ψ(x,y,t) = 0 } • there are many such ψ • ψ has many other level sets, more or less parallel to γ • only γ has a meaning for segmentation, not any other level set of ψ

Level Set Framework [Figure: grid of sampled values of ψ(x,y,t), negative inside the front γ(t), zero on it, and positive outside.] Usual choice for ψ: signed distance to the front γ(0): ψ(x,y,0) = -d(x,y,γ) if (x,y) is inside the front, 0 if on the front, and d(x,y,γ) if outside the front.

Level Set • Segmentation with LS: • Initialise the front γ(0) • Compute ψ(x,y,0) • Iterate: • ψ(x,y,t+1) = ψ(x,y,t) + ∆ψ(x,y,t) • until convergence • Mark the front γ(t_end)
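The iteration can be sketched for the simplest possible case: a front expanding at constant speed. Everything specific here is an assumption for illustration: the update is taken as Δψ = -Δt · F · |∇ψ| with a constant F (no image or curvature influence), gradients use central differences rather than the upwind schemes normally preferred for stability, and the loop runs a fixed number of steps instead of testing convergence.

```python
import numpy as np

def evolve_front(psi, speed, dt=0.5, steps=6):
    """Iterate psi(t+1) = psi(t) + delta_psi with delta_psi = -dt * F * |grad psi|."""
    psi = psi.astype(float).copy()
    for _ in range(steps):
        gy, gx = np.gradient(psi)
        psi = psi - dt * speed * np.hypot(gx, gy)
    return psi

# Initialise the front as a circle of radius 10 and compute psi(x, y, 0)
# as the signed distance (negative inside, positive outside).
yy, xx = np.mgrid[0:61, 0:61]
psi0 = np.hypot(xx - 30, yy - 30) - 10.0

psi_end = evolve_front(psi0, speed=1.0, dt=0.5, steps=6)

# Mark the front: the region enclosed by the zero level set.
area_start = np.count_nonzero(psi0 < 0)
area_end = np.count_nonzero(psi_end < 0)
```

With unit speed and a total time of 3, the zero level set should move outward by roughly 3 pixels, so the enclosed area grows from about π·10² to about π·13².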

Write down the equation for ψ! • Equation 17 p162 expresses ψ(x,y,t+1) - ψ(x,y,t) as a product of influences. Terms labelled on the slide: • spatial derivative of ψ • smoothing “force” depending on the local curvature (contour influence) • extension of the speed function k_I (image influence) • constant “force” (balloon pressure)

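Corners Given an image I, denote the image gradient by $\nabla I = (I_x, I_y)^{\top}$. For each point p and a neighborhood Q around p, form the matrix $C = \begin{bmatrix} \sum_Q I_x^2 & \sum_Q I_x I_y \\ \sum_Q I_x I_y & \sum_Q I_y^2 \end{bmatrix}$. C is symmetric with two non-negative eigenvalues. The eigenvalues give the edge strengths and the eigenvectors give the edge directions.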

Examples • Perfectly uniform • Step edge • Ideal corner Eigenvectors and eigenvalues of C

Algorithm Detecting Corners Inputs: image I and two parameters, the threshold on the smaller eigenvalue and the sub-window size. • Compute the image gradients over I. • For each image point p, form the matrix C over a neighborhood Q of p. • Compute the second (smaller) eigenvalue of C. • If it exceeds the threshold, save p into a list, L. • Sort L in decreasing order of the smaller eigenvalue. • Scanning the sorted list top to bottom: for each current point p, delete all points appearing further on the list which belong to the neighborhood of p.
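The steps above can be sketched directly in NumPy. The names `detect_corners`, `tau` (threshold) and `n` (half-width of the square neighborhood, so the sub-window is (2n+1) x (2n+1)) are assumptions for illustration, and gradients are taken with central differences without prior smoothing.

```python
import numpy as np

def detect_corners(image, tau, n=2):
    """Return (row, col) corners whose smaller eigenvalue of C exceeds tau."""
    Iy, Ix = np.gradient(image.astype(float))
    h, w = image.shape
    found = []
    for y in range(n, h - n):
        for x in range(n, w - n):
            wx = Ix[y - n:y + n + 1, x - n:x + n + 1]
            wy = Iy[y - n:y + n + 1, x - n:x + n + 1]
            C = np.array([[np.sum(wx * wx), np.sum(wx * wy)],
                          [np.sum(wx * wy), np.sum(wy * wy)]])
            lam2 = np.linalg.eigvalsh(C)[0]  # eigenvalues ascend: [0] is smaller
            if lam2 > tau:
                found.append((lam2, y, x))
    found.sort(reverse=True)                 # decreasing order of lam2
    kept = []
    for lam2, y, x in found:
        # Keep p only if it is outside the neighborhood of every stronger
        # corner already kept (equivalent to deleting later list entries).
        if all(max(abs(y - ky), abs(x - kx)) > n for _, ky, kx in kept):
            kept.append((lam2, y, x))
    return [(y, x) for _, y, x in kept]

# Bright square on a dark background: four ideal corners.
square = np.zeros((20, 20))
square[6:14, 6:14] = 1.0
corners = detect_corners(square, tau=1.0, n=2)
```

Along the straight sides of the square the gradient has a single direction, so the smaller eigenvalue is zero and those points are rejected; only near the four corners are both eigenvalues large.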

Slight Detour (Corners and object recognition) Corner descriptor: formed from the intensity values in a small neighborhood of the corner point. Treat these corners and their descriptors as words and each image as a document. Use document analysis techniques to analyze the images. Want to know more? CIS 6930 next semester.

Camera Calibration Each camera can be considered as a function: a function that takes each 3D point to a point in the 2D image plane. (x, y, z) ------ > (X, Y) Camera calibration is about finding (or approximating) this function. The difficulty of the problem depends on the assumed form of this function, e.g., perspective model, radial distortion of the lens.

Calibration Procedure • Calibration Target: Two perpendicular planes with a chessboard pattern. • We know the 3D positions of the corners with respect to a coordinate system defined on the target. • Place a camera in front of the target and locate the corresponding corners in the image. This gives us the correspondences. • Recover the equation that describes the imaging projection and the camera’s internal parameters. At the same time, also recover the relative orientation between the camera and the target (pose).

Camera Frame to World Frame Rotation matrix

Homogeneous Coordinates Let $C = -R^{\top} T$

Perspective Projection Using homogeneous coordinates, the perspective projection becomes linear.
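A minimal sketch of this linearity, under assumptions that are not taken from the slides: a pinhole camera with focal length `f` and no other internal parameters, and the world-to-camera convention X_cam = R · X_world + t. The function names are illustrative.

```python
import numpy as np

def projection_matrix(f, R, t):
    """3 x 4 matrix M = K [R | t] for a pinhole camera (assumed convention)."""
    K = np.array([[f, 0.0, 0.0],
                  [0.0, f, 0.0],
                  [0.0, 0.0, 1.0]])
    return K @ np.hstack([R, t.reshape(3, 1)])

def project(M, points_3d):
    """Project N x 3 world points to N x 2 image points."""
    homog = np.hstack([points_3d, np.ones((len(points_3d), 1))])  # (X, Y, Z, 1)
    uvw = homog @ M.T                  # the linear step in homogeneous coordinates
    return uvw[:, :2] / uvw[:, 2:3]    # the only nonlinear step: divide by w

R = np.eye(3)                          # camera axes aligned with world axes
t = np.array([0.0, 0.0, 5.0])          # world origin is 5 units in front
M = projection_matrix(2.0, R, t)
world_pts = np.array([[1.0, 1.0, 0.0],
                      [0.0, 0.0, 5.0]])
image_pts = project(M, world_pts)
# First point: camera coordinates (1, 1, 5), so x = y = 2 * 1 / 5 = 0.4.
```

Calibration then amounts to estimating the entries of M from known 3D-to-2D correspondences, since each correspondence gives linear constraints on M.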