Sequential Learning

Sequential Learning. James B. Elsner and Thomas H. Jagger, Department of Geography, Florida State University. Some material based on notes from a one-day short course on Bayesian modeling and prediction given by David Draper (http://www.ams.ucsc.edu/~draper).

The conditional probability of an event B in relationship to an event A is the probability that event B occurs given that A has already occurred.
P(B|A): conditional probability of B given A.
P(B|A) = P(A and B) / P(A)
P(A and B): probability of events A and B both occurring.
Imagine that A refers to “customer buys product A” and B refers to “customer buys product B”. P(A|B) would then read as the “probability that a customer will buy product A given that they have bought product B.” If A tends to occur when B occurs, then knowing that B has occurred allows you to assign a higher probability to A's occurrence than in a situation in which you did not know that B occurred.
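To make the definition concrete, here is a minimal Python sketch that computes P(A|B) from a table of joint counts. The customer counts are hypothetical, invented only for illustration; they are not data from the slides.

```python
from fractions import Fraction

# Hypothetical counts of 100 customers, cross-classified by
# (bought product A, bought product B).
counts = {
    (True, True): 20,    # bought A and B
    (True, False): 10,   # bought A only
    (False, True): 20,   # bought B only
    (False, False): 50,  # bought neither
}
total = sum(counts.values())

def prob(event):
    """Probability of the (a, b) cells for which event(a, b) is true."""
    return Fraction(sum(n for k, n in counts.items() if event(*k)), total)

p_a = prob(lambda a, b: a)
p_b = prob(lambda a, b: b)
p_a_and_b = prob(lambda a, b: a and b)

# Definition of conditional probability: P(A|B) = P(A and B) / P(B).
p_a_given_b = p_a_and_b / p_b

print(p_a, p_a_given_b)  # 3/10 1/2
```

With these made-up counts, learning that a customer bought B raises the probability that they bought A from 3/10 to 1/2, which is the situation the paragraph describes.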

I have two children. At least one is a boy. What is the probability that I have two boys?

Event D = {At least one is a boy}
Event C = {Both are boys}
P(C|D) = ?

| First child | Second child | # boys | Probability |
|---|---|---|---|
| B1 (½) | B2 (½) | 2 | ¼ |
| B1 (½) | G2 (½) | 1 | ¼ |
| G1 (½) | B2 (½) | 1 | ¼ |
| G1 (½) | G2 (½) | 0 | ¼ |

The first three rows make up D, so P(D) = ¾.

P(C|D) = P(C and D) / P(D) = P(both are boys) / P(at least one is a boy) = ¼ / ¾ = 1/3

The answer is counterintuitive to some degree. I think there are at least two reasons why.
• Semantics. Mistaking event D (at least one is a boy) for a particular child being a boy. For example, conditioning on B1 instead of D gives
  P(C|B1) = P(B1 and C) / P(B1) = P(both boys) / P(first boy) = ¼ / ½ = ½.
• Confusion as to why we need to assign a probability to our data. Event D (at least one is a boy) appears as a statement of fact that needs no probability assignment.
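Both conditionings can be checked by brute-force enumeration. This is a minimal sketch, assuming (as the table does) that each child is independently a boy or a girl with probability ½, so the four birth orders are equally likely.

```python
from fractions import Fraction
from itertools import product

# Sample space: (first child, second child), four equally likely outcomes.
outcomes = list(product("BG", repeat=2))

def prob(event):
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))

def cond(event, given):
    # P(event | given) = P(event and given) / P(given)
    return prob(lambda o: event(o) and given(o)) / prob(given)

both_boys = lambda o: o == ("B", "B")   # event C
at_least_one_boy = lambda o: "B" in o   # event D
first_is_boy = lambda o: o[0] == "B"    # event B1

print(cond(both_boys, at_least_one_boy))  # 1/3
print(cond(both_boys, first_is_boy))      # 1/2
```

The only difference between the two answers is the conditioning event, which is exactly the semantic trap described in the first bullet.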

What happens if A contains no information about B? That would mean P(B|A) = P(B). It follows that
P(B) = P(B and A) / P(A).
Multiplying both sides by P(A) gives
P(A) P(B) = P(B and A).
This equation defines what we mean for two events to be independent. So, when A contains no information about B, the two events are independent. If I tell you A happened and this information does not change what You think about B, then it seems appropriate to say that A and B are independent.

Random sampling with and without replacement.
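Sampling with and without replacement gives a direct test of the product rule P(A and B) = P(A) P(B). The sketch below assumes a hypothetical urn of 2 red and 3 blue balls (not from the slides), draws two balls in order, and compares P(both red) with P(first red) × P(second red) by exact enumeration.

```python
from fractions import Fraction
from itertools import permutations, product

balls = ["R", "R", "B", "B", "B"]  # hypothetical urn: 2 red, 3 blue

def draws(replace):
    idx = range(len(balls))
    # Ordered pairs of ball indices; every pair is equally likely.
    pairs = product(idx, repeat=2) if replace else permutations(idx, 2)
    return [(balls[i], balls[j]) for i, j in pairs]

def check_independence(replace):
    """Return (P(both red), P(first red) * P(second red))."""
    space = draws(replace)
    n = len(space)
    p_first_red = Fraction(sum(a == "R" for a, _ in space), n)
    p_second_red = Fraction(sum(b == "R" for _, b in space), n)
    p_both_red = Fraction(sum(a == "R" and b == "R" for a, b in space), n)
    return p_both_red, p_first_red * p_second_red

print(check_independence(replace=True))   # (Fraction(4, 25), Fraction(4, 25))
print(check_independence(replace=False))  # (Fraction(1, 10), Fraction(4, 25))
```

With replacement the two sides agree, so the draws are independent; without replacement the first draw carries information about the second and the product rule fails.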

There are many circumstances in which you would like to know the probability of an event but cannot calculate it directly. You may be able to find it if you know its probability under some conditions: the desired probability is a weighted average of the various conditional probabilities.

Example: You are in a chess tournament and you will play your next game against either J or M, depending on results from other games. Suppose your probability of beating J is 7/10, but only 2/10 for beating M. You assess your probability of playing J at ¼. How likely is it that you will win your next game?

P(W|J) = 7/10   P(W|not J) = 2/10   P(J) = ¼   P(not J) = ¾

We want P(W), the unconditional probability of winning. By the Law of Total Probability,
P(W) = P(W|J) P(J) + P(W|not J) P(not J) = (7/10)(1/4) + (2/10)(3/4) = 0.175 + 0.15 = 0.325.
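The same weighted average in a few lines of Python, using exact fractions so the result can be compared with the hand calculation. This is only a sketch of the arithmetic; the variable names are illustrative.

```python
from fractions import Fraction

p_win_given_j = Fraction(7, 10)
p_win_given_m = Fraction(2, 10)   # "not J" means playing M
p_j = Fraction(1, 4)
p_m = 1 - p_j

# Law of total probability: weight each conditional by its probability.
p_win = p_win_given_j * p_j + p_win_given_m * p_m
print(p_win, float(p_win))  # 13/40 0.325
```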

As we saw last time, Bayes’ theorem (or rule) indicates how probabilities change in the light of evidence. It is arguably the most important tool in statistics, wherein the evidence is usually data. Bayes’ theorem is derived from the definition of conditional probability. As we’ve seen:
P(A|B) = P(A and B) / P(B)   (1)
But we can also write:
P(B|A) = P(B and A) / P(A)
Multiplying through by P(A) yields:
P(A) P(B|A) = P(B and A)   (2)
Since P(A and B) = P(B and A), we can write eq (2) as:
P(A) P(B|A) = P(A and B)   (3)
Then substituting eq (3) into eq (1) yields
P(A|B) = P(A) P(B|A) / P(B)
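A quick numerical check of the derivation: starting from a hypothetical joint distribution for two binary events (numbers chosen only for illustration), P(A|B) computed from definition (1) agrees with P(A) P(B|A) / P(B).

```python
from fractions import Fraction

# Hypothetical joint probabilities for two binary events (A, B); they sum to 1.
joint = {
    (True, True): Fraction(1, 8),
    (True, False): Fraction(3, 8),
    (False, True): Fraction(1, 4),
    (False, False): Fraction(1, 4),
}

p_a = sum(p for (a, _), p in joint.items() if a)
p_b = sum(p for (_, b), p in joint.items() if b)
p_a_and_b = joint[(True, True)]

p_a_given_b = p_a_and_b / p_b      # eq (1): the definition
p_b_given_a = p_a_and_b / p_a      # the definition with roles swapped
bayes = p_a * p_b_given_a / p_b    # Bayes' theorem

assert p_a_given_b == bayes
print(p_a_given_b)  # 1/3
```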

Suppose you tell me you won your next chess game. This is the “evidence”. Who was your opponent? Recall these probabilities:
P(W|J) = 7/10   P(W|not J) = 2/10   P(J) = ¼   P(not J) = ¾
So, without conditioning on the evidence, you are three times as likely to have played M as J. Now I learn you won, and I want to find P(J|W). Since you are more likely to win playing J than M, it seems reasonable to expect P(J|W) to be bigger than P(J). Bayes’ rule verifies that it is bigger:
P(J|W) = P(W|J) P(J) / P(W)
We expand the denominator using the law of total probability.

So P(J|W) = P(W|J) P(J) / [P(W|J) P(J) + P(W|not J) P(not J)]. This applies for any events W and J, in any setting! In our example,
P(J|W) = (7/10)(1/4) / [(7/10)(1/4) + (2/10)(3/4)] = (7/40) / (13/40) = 7/13 ≈ 54%.
Bayes’ rule relates inverse probabilities, giving P(J|W) in terms of P(W|J). The conventional terminology for P(J|W) is the posterior probability of J given W, and for P(J) the prior probability of J, since it applies before (or without conditioning on) the information that W occurred.
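Because the expanded formula holds for any event and its complement, it can be wrapped in a small helper. The function name `posterior_binary` is hypothetical, introduced only for this sketch.

```python
from fractions import Fraction

def posterior_binary(prior_h, lik_h, lik_not_h):
    """P(H|E) for a hypothesis H and its complement, via Bayes' rule with
    the denominator expanded by the law of total probability."""
    num = lik_h * prior_h
    return num / (num + lik_not_h * (1 - prior_h))

# Chess example: H = "played J", E = "won".
p_j_given_w = posterior_binary(Fraction(1, 4), Fraction(7, 10), Fraction(2, 10))
print(p_j_given_w, round(float(p_j_given_w), 2))  # 7/13 0.54
```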

Bayesian inference is often put forth as a prescriptive framework for hypothesis testing. Using this framework, it is standard to replace P(A | B) with P(H | E), where H stands for hypothesis and E stands for evidence. Bayes’ rule then looks like this:
P(H | E) = P(E | H) P(H) / P(E)
In words, the formula says that the posterior probability of a hypothesis given the evidence, P(H | E), is equal to the likelihood of the evidence given the hypothesis, P(E | H), multiplied by the prior probability of the hypothesis, P(H), and divided by the probability of the evidence, P(E).

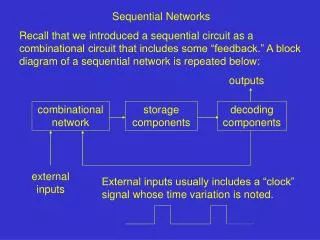

The terms prior and posterior emphasize the sequential nature of the learning process: P(H) is your uncertainty assessment before the data arrives (your prior). Your prior is updated multiplicatively on the probability scale by the likelihood P(E | H). Writing Bayes’ Theorem both for H and for (not H) and dividing one by the other (P(E) cancels) gives another version, Bayes’ Theorem in odds form:
P(H | E) / P(not H | E) = [P(H) / P(not H)] × [P(E | H) / P(E | not H)]
posterior odds = prior odds × Bayes’ factor
Other names for the Bayes’ factor are the data odds and the likelihood ratio, since this factor measures the relative plausibility of the evidence given H and given (not H).

Thus for our chess example, we have
P(J|W) / P(not J|W) = [P(J) / P(not J)] × [P(W|J) / P(W|not J)]
Again, recall these probabilities:
P(W|J) = 7/10   P(W|not J) = 2/10   P(J) = ¼   P(not J) = ¾
The prior odds in favor of J are ¼ / ¾ = 1/3, and the Bayes factor in favor of J is (7/10) / (2/10) = 7/2, so the posterior odds in favor of J are 1/3 × 7/2 = 7/6. This translates to a probability of 7/(7 + 6) = 7/13 ≈ 54%.
Bayesian calculator.
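The odds-form calculation can be sketched the same way. The helpers `odds` and `prob_from_odds` are illustrative names, not part of any library; the point is that the odds route reproduces the 7/13 obtained from the probability form.

```python
from fractions import Fraction

def odds(p):
    """Convert a probability to odds in favor."""
    return p / (1 - p)

def prob_from_odds(o):
    """Convert odds in favor back to a probability: for / (for + against)."""
    return o / (1 + o)

prior_odds = odds(Fraction(1, 4))                  # 1/3
bayes_factor = Fraction(7, 10) / Fraction(2, 10)   # 7/2
posterior_odds = prior_odds * bayes_factor         # 7/6
print(posterior_odds, prob_from_odds(posterior_odds))  # 7/6 7/13
```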

Applying Bayes’ rule to the HIV example requires additional information about ELISA, obtained by screening the blood of people with known HIV status:
sensitivity = P(ELISA positive | HIV positive)
specificity = P(ELISA negative | HIV negative)
These are called ELISA’s operating characteristics. They are rather good: sensitivity of about 0.95 and specificity of about 0.98. Thus you might well expect that P(this patient HIV positive | ELISA positive) would be close to 1.

Here the updating produces a surprising result. The Bayes factor comes out as
B = sensitivity / (1 − specificity) = 0.95 / 0.02 = 47.5,
which sounds like strong evidence for this patient being HIV positive. But the prior odds are considerably stronger the other way. With a prior probability P(A) = 0.01 that this patient is HIV positive,
prior odds = P(A) / [1 − P(A)] = 0.01 / 0.99 ≈ 0.0101,
that is, 99 to 1 against this patient being HIV positive. Multiplying the prior odds by the Bayes factor gives posterior odds of 0.0101 × 47.5 ≈ 0.4798 to 1 for HIV. To turn odds into a probability, we write
P(HIV positive | data) = for / (for + against) = 0.4798 / 1.4798 ≈ 0.32 (32%).
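A minimal sketch of the same update in floating point, assuming the prior of 0.01 implied by the 99-to-1 odds. It computes the posterior through the odds form and cross-checks it against Bayes’ rule in probability form.

```python
sensitivity = 0.95
specificity = 0.98
prior = 0.01  # prior P(HIV positive), i.e. odds of 99 to 1 against

prior_odds = prior / (1 - prior)                 # about 0.0101
bayes_factor = sensitivity / (1 - specificity)   # about 47.5
posterior_odds = prior_odds * bayes_factor       # about 0.4798
posterior = posterior_odds / (1 + posterior_odds)

# Cross-check with Bayes' rule in probability form.
direct = sensitivity * prior / (
    sensitivity * prior + (1 - specificity) * (1 - prior)
)
print(round(posterior, 2), round(direct, 2))  # 0.32 0.32
```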

The reason Your posterior probability that Your patient is HIV positive is only 32%, in light of the strong evidence from ELISA, is that ELISA was designed to have a vastly better false negative rate than false positive rate. Out of 10,000 people screened at this prevalence (100 HIV positive, 9,900 HIV negative), ELISA gives 95 true positives, 5 false negatives, 9,702 true negatives, and 198 false positives, so
P(HIV positive | ELISA negative) = 5/9707 ≈ 0.00052, or 1 in 1941,
in comparison to
P(HIV negative | ELISA positive) = 198/293 ≈ 0.68, or about 2 in 3.
This in turn is because ELISA’s developers judged it far worse to tell someone who is HIV positive that they are not than the other way around (reasonable when using ELISA for blood bank screening, for instance).
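The two rates can be reproduced by building the expected counts for a hypothetical screened population of 10,000 people with 1% prevalence, 95% sensitivity and 98% specificity. Exact fractions keep the counts whole.

```python
from fractions import Fraction

n = 10_000
prevalence = Fraction(1, 100)
sensitivity = Fraction(95, 100)
specificity = Fraction(98, 100)

hiv_pos = n * prevalence             # 100
hiv_neg = n - hiv_pos                # 9900
true_pos = hiv_pos * sensitivity     # 95
false_neg = hiv_pos - true_pos       # 5
true_neg = hiv_neg * specificity     # 9702
false_pos = hiv_neg - true_neg       # 198

# P(HIV positive | ELISA negative) and P(HIV negative | ELISA positive)
p_pos_given_neg_test = false_neg / (false_neg + true_neg)   # 5/9707
p_neg_given_pos_test = false_pos / (false_pos + true_pos)   # 198/293
print(p_pos_given_neg_test, round(float(p_pos_given_neg_test), 5))
print(p_neg_given_pos_test, round(float(p_neg_given_pos_test), 2))
```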

The false positive rate would make widespread screening for HIV based only on ELISA a truly bad idea. Formalizing the consequences of the two types of error in diagnostic screening would require quantifying misclassification costs, which shifts the focus from (scientific) inference (the acquisition of knowledge for its own sake: Is this patient really HIV positive?) to decision making (putting that knowledge to work to answer a public policy or business question, e.g.: What use of ELISA and Western Blot would yield the optimal screening strategy?) Examples from your areas of interest.

From which bowl is the cookie? Suppose there are two bowls full of cookies. Bowl #1 has 10 chocolate chip and 30 plain cookies, while bowl #2 has 20 of each. Our friend Fred picks a bowl at random, and then picks a cookie at random. We may assume there is no reason to believe Fred treats one bowl differently from another, likewise for the cookies. The cookie turns out to be a plain one. How probable is it that Fred picked it out of bowl #1?
Intuitively, it seems clear that the answer should be more than a half, since there are more plain cookies in bowl #1. The precise answer is given by Bayes' theorem. Let H1 correspond to bowl #1, and H2 to bowl #2. It is given that the bowls are identical from Fred's point of view, thus P(H1) = P(H2), and the two must add up to 1, so both are equal to 0.5. The datum D is the observation of a plain cookie. From the contents of the bowls, we know that P(D | H1) = 30/40 = 0.75 and P(D | H2) = 20/40 = 0.5. Bayes' theorem then gives
P(H1 | D) = P(D | H1) P(H1) / [P(D | H1) P(H1) + P(D | H2) P(H2)] = (0.75 × 0.5) / (0.75 × 0.5 + 0.5 × 0.5) = 0.375 / 0.625 = 0.6.
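The cookie problem generalizes naturally to any set of mutually exclusive, exhaustive hypotheses: multiply each prior by its likelihood, then normalize by the sum, which is P(D) by the law of total probability. The `posterior` function below is a hypothetical helper written for this sketch.

```python
from fractions import Fraction

priors = {"bowl1": Fraction(1, 2), "bowl2": Fraction(1, 2)}
# Likelihood of drawing a plain cookie from each bowl.
lik_plain = {"bowl1": Fraction(30, 40), "bowl2": Fraction(20, 40)}

def posterior(priors, likelihoods):
    """Posterior over mutually exclusive, exhaustive hypotheses."""
    unnorm = {h: priors[h] * likelihoods[h] for h in priors}
    evidence = sum(unnorm.values())  # P(D), by the law of total probability
    return {h: w / evidence for h, w in unnorm.items()}

post = posterior(priors, lik_plain)
print(post["bowl1"], post["bowl2"])  # 3/5 2/5
```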

A Bayesian model for the number of strong hurricanes. Handout.

In the courtroom
• Bayesian inference can be used to coherently assess additional evidence of guilt in a court setting.
• Let G be the event that the defendant is guilty.
• Let E be the event that the defendant's DNA matches DNA found at the crime scene.
• Let p(E | G) be the probability of seeing event E assuming that the defendant is guilty. (Usually this would be taken to be unity.)
• Let p(G | E) be the probability that the defendant is guilty given the DNA match event E.
• Let p(G) be the probability that the defendant is guilty, based on the evidence other than the DNA match.
• Bayesian inference tells us that if we can assign a probability p(G) to the defendant's guilt before we take the DNA evidence into account, then we can revise this probability to the conditional probability p(G | E), since
  p(G | E) = p(G) p(E | G) / p(E)
• Suppose, on the basis of other evidence, a juror decides that there is a 30% chance that the defendant is guilty. Suppose also that the forensic evidence is that the probability that a person chosen at random would have DNA matching that at the crime scene is 1 in a million, or 10⁻⁶.
• The event E can occur in two ways. Either the defendant is guilty (with prior probability 0.3) and thus his DNA is present with probability 1, or he is innocent (with prior probability 0.7) and he is unlucky enough to be one of the 1 in a million matching people.
• Thus the juror could coherently revise his opinion to take into account the DNA evidence as follows:
  p(G | E) = (0.3 × 1.0) / (0.3 × 1.0 + 0.7 × 10⁻⁶) = 0.99999766667.
• In the United Kingdom, Bayes' theorem was explained by an expert witness to the jury in the case of Regina versus Dennis John Adams. The case went to appeal, and the Court of Appeal gave its opinion that the use of Bayes' theorem was inappropriate for jurors.
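The juror's revision is the same two-hypothesis Bayes calculation as before. The sketch below uses a hypothetical function `p_guilty_given_match`, with defaults taken from the example: p(E | G) = 1 and a random-match probability of 10⁻⁶.

```python
def p_guilty_given_match(prior_guilt, p_match_if_guilty=1.0, random_match_prob=1e-6):
    """p(G | E): guilty and matching, versus innocent but a chance match."""
    num = prior_guilt * p_match_if_guilty
    return num / (num + (1 - prior_guilt) * random_match_prob)

print(f"{p_guilty_given_match(0.3):.11f}")  # 0.99999766667
```

Changing `prior_guilt` shows how strongly the conclusion still depends on the juror's assessment of the other evidence.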