Instruction Based Memory Distance Analysis and its Application to Optimization

This paper presents an instruction-based approach to analyzing memory distance, motivated by the widening gap between processor and memory speeds, often referred to as the "memory wall." Static compiler analysis is largely limited to regular array references. The approach therefore predicts memory distance from training runs, for both integer and floating-point codes. The predictions are used to estimate miss rates, identify critical instructions, and guide load speculation. The results show that memory distance is predictable beyond regular scientific codes, and they give insight into memory access patterns and prediction accuracy.

Presentation Transcript

Instruction Based Memory Distance Analysis and its Application to Optimization • Changpeng Fang, Steve Carr, Soner Önder, Zhenlin Wang

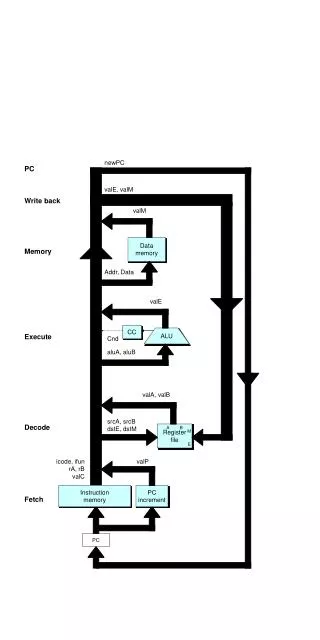

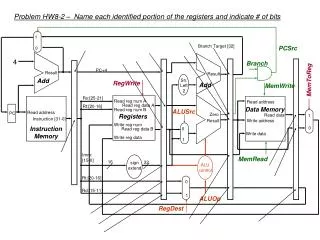

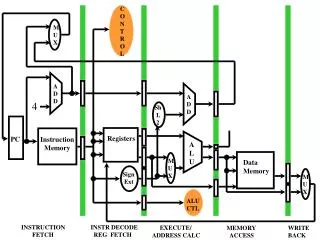

Motivation • Widening gap between processor and memory speed • memory wall • Static compiler analysis has limited capability • handles regular array references only • index arrays and integer code are largely out of reach • Reuse distance prediction across program inputs • number of distinct memory locations accessed between two references to the same memory location • applicable to more than just regular scientific code • locality as a function of data size • predictable on a whole-program and per-instruction basis for scientific codes

Motivation • Memory distance • a dynamic, quantifiable distance, measured in memory references, between two accesses to the same memory location • reuse distance • access distance • value distance • Is memory distance predictable across both integer and floating-point codes? • predict miss rates • predict critical instructions • identify instructions for load speculation

Related Work • Reuse distance • Mattson et al. ’70 • Sugumar and Abraham ’94 • Beyls and D’Hollander ’02 • Ding and Zhong ’03 • Zhong, Dropsho and Ding ’03 • Shen, Zhong and Ding ’04 • Fang, Carr, Önder and Wang ’04 • Marin and Mellor-Crummey ’04 • Load speculation • Moshovos and Sohi ’98 • Chrysos and Emer ’98 • Önder and Gupta ’02

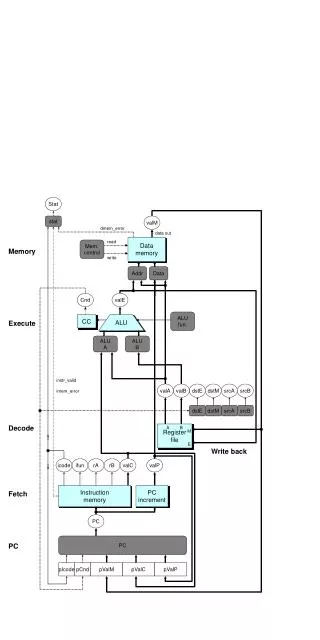

Background • Memory distance • can use any granularity (cache line, address, etc.) • either forward or backward • represented as a pattern • Represent memory distance as a pattern • divide consecutive ranges into intervals • we use powers of 2 up to 1K and then 1K intervals • Data size • the largest reuse distance for an input set • characterize reuse distance as a function of the data size • Given two sets of patterns for two runs, can we predict a third set of patterns given its data size?
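
A minimal Python sketch of the interval layout described above follows. It makes three assumptions about boundaries. Interval 0 holds distance 0. Interval k covers [2^(k-1), 2^k) up to 1K. Every further 1K of distance gets its own interval. The exact boundary placement is our assumption rather than the authors' definition, and the names `interval_index` and `interval_bounds` are hypothetical.

```python
def interval_index(distance: int) -> int:
    """Map a non-negative memory distance to its interval number.

    Assumed layout: interval 0 = [0, 1); interval k = [2**(k-1), 2**k)
    for k = 1..10 (powers of two up to 1K); then one interval per 1K.
    """
    if distance < 0:
        raise ValueError("memory distance must be non-negative")
    if distance < 1024:
        # bit_length gives 0 for 0, 1 for 1, 2 for 2..3, ..., 10 for 512..1023
        return distance.bit_length()
    # 1024..2047 -> 11, 2048..3071 -> 12, and so on
    return 10 + distance // 1024


def interval_bounds(index: int) -> tuple[int, int]:
    """Return the half-open range [low, high) covered by an interval."""
    if index < 0:
        raise ValueError("interval index must be non-negative")
    if index == 0:
        return (0, 1)
    if index <= 10:
        return (1 << (index - 1), 1 << index)
    low = (index - 10) * 1024
    return (low, low + 1024)


if __name__ == "__main__":
    for d in (0, 3, 700, 1024, 3000):
        print(d, interval_index(d), interval_bounds(interval_index(d)))
```

Fine-grained intervals for short distances and fixed 1K intervals beyond that keep the per-instruction histogram small while still separating short, cache-resident reuses.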

Background • Let $d_i^1$ be the distance of the ith bin in the first pattern and $d_i^2$ be that of the second pattern. Given the data sizes $s_1$ and $s_2$, we can fit the memory distances using $d_i^k = c_i + e_i \cdot f_i(s_k)$ for $k = 1, 2$ • Given $c_i$, $e_i$, and $f_i$, we can predict the memory distance of another input set with its data size
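
The per-bin fit can be sketched as follows. The sketch assumes that each bin follows $d = c + e \cdot f(s)$, where the fitting function $f$ is chosen in advance (for example linear or square root in the data size). Two training points then determine $c$ and $e$ exactly. `fit_bin` and `predict_bin` are hypothetical names. The handling of the degenerate case $f(s_1) = f(s_2)$ is our own choice.

```python
import math
from typing import Callable


def fit_bin(d1: float, s1: float, d2: float, s2: float,
            f: Callable[[float], float]) -> tuple[float, float]:
    """Solve d = c + e * f(s) through (s1, d1) and (s2, d2); return (c, e)."""
    x1, x2 = f(s1), f(s2)
    if x1 == x2:
        # f cannot separate the two runs (e.g. equal data sizes): treat the
        # distance as constant and use the mean of the two observations.
        return (d1 + d2) / 2.0, 0.0
    e = (d2 - d1) / (x2 - x1)
    c = d1 - e * x1
    return c, e


def predict_bin(c: float, e: float, f: Callable[[float], float], s: float) -> float:
    """Predicted distance of this bin for an input with data size s."""
    return c + e * f(s)


def linear(s: float) -> float:
    return s


if __name__ == "__main__":
    c, e = fit_bin(250, 100, 450, 200, linear)
    print(c, e, predict_bin(c, e, linear, 400))       # linear pattern
    c, e = fit_bin(30, 100, 50, 400, math.sqrt)
    print(c, e, predict_bin(c, e, math.sqrt, 900))    # square-root pattern
```

For example, with $f(s) = s$, the training points $(100, 250)$ and $(200, 450)$ give $e = 2$ and $c = 50$. A run with data size 400 is then predicted to have distance 850.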

Instruction Based Memory Distance Analysis • How can we represent the memory distance of an instruction? • For each active interval, we record 4 words of data • min, max, mean, frequency • Some locality patterns cross interval boundaries • merge adjacent intervals, i and i + 1, if • merging process stops when a minimum frequency is found • needed to make reuse distance predictable • The set of merged intervals make up memory distance patterns
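
The per-interval bookkeeping can be sketched as below. The sketch assumes one profile per static instruction and the powers-of-two-then-1K interval layout from the Background slide. It records exactly the four values listed (min, max, mean, frequency) and updates the mean incrementally. The class names and instruction addresses are hypothetical. The interval-merging step is not shown.

```python
from collections import defaultdict
from dataclasses import dataclass


def interval_index(distance: int) -> int:
    """Assumed layout: powers of two up to 1K, then 1K-wide intervals."""
    return distance.bit_length() if distance < 1024 else 10 + distance // 1024


@dataclass
class IntervalStats:
    """The four words recorded per active interval."""
    minimum: int
    maximum: int
    mean: float
    frequency: int

    def add(self, distance: int) -> None:
        self.minimum = min(self.minimum, distance)
        self.maximum = max(self.maximum, distance)
        self.frequency += 1
        # incremental mean: no running sum has to be stored
        self.mean += (distance - self.mean) / self.frequency


class InstructionProfile:
    """Memory-distance histogram of one static instruction."""

    def __init__(self) -> None:
        self.intervals: dict[int, IntervalStats] = {}

    def record(self, distance: int) -> None:
        idx = interval_index(distance)
        stats = self.intervals.get(idx)
        if stats is None:
            self.intervals[idx] = IntervalStats(distance, distance, float(distance), 1)
        else:
            stats.add(distance)


# one profile per instruction address (hypothetical PCs below)
profiles = defaultdict(InstructionProfile)
for pc, d in [(0x400, 4), (0x400, 5), (0x400, 7), (0x400, 600), (0x404, 2000)]:
    profiles[pc].record(d)
```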

What do we do with patterns? • Verify that we can predict patterns given two training runs • coverage • accuracy • Predict miss rates for instructions • Predict loads that may be speculated

Prediction Coverage • Prediction coverage indicates the percentage of instructions whose memory distance can be predicted • appears in both training runs • access pattern appears in both runs and memory distance does not decrease with increase in data size (spatial locality) • same number of intervals in both runs • Called a regular pattern • For each instruction, we predict its ith pattern by • curve fitting the ith pattern of both training runs • applying the fitting function to construct a new min, max and mean for the third run • Simple, fast prediction
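
A sketch of the regular-pattern test and the prediction step follows. It rests on three assumptions:

* run 1 has the smaller data size;
* "does not decrease" is checked on each pattern's mean;
* one fitting function `f` is shared by min, max, and mean.

`Pattern`, `is_regular`, and `predict_patterns` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence


@dataclass
class Pattern:
    minimum: float
    maximum: float
    mean: float


def is_regular(p1: Optional[Sequence[Pattern]], p2: Optional[Sequence[Pattern]]) -> bool:
    """p1: patterns from the smaller training input, p2: from the larger one.
    None (or empty) means the instruction did not appear in that run."""
    if not p1 or not p2:
        return False
    if len(p1) != len(p2):
        return False
    # assumption: "does not decrease" is checked on each pattern's mean
    return all(b.mean >= a.mean for a, b in zip(p1, p2))


def predict_patterns(p1: Optional[Sequence[Pattern]], s1: float,
                     p2: Optional[Sequence[Pattern]], s2: float,
                     s3: float, f: Callable[[float], float]) -> Optional[List[Pattern]]:
    """Curve-fit the ith pattern of both runs and evaluate it at data size s3.
    Returns None when the instruction is not covered (pattern not regular)."""
    if not is_regular(p1, p2):
        return None
    x1, x2, x3 = f(s1), f(s2), f(s3)

    def fit(d1: float, d2: float) -> float:
        if x1 == x2:
            return (d1 + d2) / 2.0
        e = (d2 - d1) / (x2 - x1)
        return d1 + e * (x3 - x1)  # equals c + e * f(s3) with c = d1 - e * f(s1)

    return [Pattern(fit(a.minimum, b.minimum), fit(a.maximum, b.maximum), fit(a.mean, b.mean))
            for a, b in zip(p1, p2)]
```

Each new min, max, and mean comes from two arithmetic operations per pattern, which matches the slide's point that prediction is simple and fast.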

Prediction Accuracy • An instruction’s memory distance is correctly predicted if all of its patterns are predicted correctly • predicted and observed patterns fall in the same interval • or, given two patterns A and B such that $B.min \le A.max \le B.max$

Experimental Methodology • Use 11 CFP2000 and 11 CINT2000 benchmarks • others don’t compile correctly • Use ATOM to collect reuse distance statistics • Use test and train data sets for training runs • Evaluation based on dynamic weighting • Report reuse distance prediction accuracy • value and access very similar
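
One reading of "dynamic weighting" is that each static instruction is weighted by its execution count; this is our assumption, not a definition stated on the slide. Under that reading, coverage and accuracy become weighted fractions, as in the following sketch. `weighted_fraction` and the instruction names are hypothetical.

```python
from typing import Iterable, Mapping, Optional


def weighted_fraction(exec_counts: Mapping[str, int], selected: set[str],
                      population: Optional[Iterable[str]] = None) -> float:
    """Fraction of dynamic executions, among `population` (default: all
    instructions), that belong to instructions in `selected`."""
    pcs = list(exec_counts if population is None else population)
    total = sum(exec_counts[pc] for pc in pcs)
    if total == 0:
        return 0.0
    return sum(exec_counts[pc] for pc in pcs if pc in selected) / total


counts = {"ld1": 90, "ld2": 10, "st1": 100}   # hypothetical execution counts
covered = {"ld1", "ld2"}                      # instructions with regular patterns
correct = {"ld1"}                             # covered and correctly predicted
coverage = weighted_fraction(counts, covered)            # 100 / 200
accuracy = weighted_fraction(counts, correct, covered)   # 90 / 100
```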

Coverage issues • Reasons for no coverage • instruction does not appear in at least one of the training runs • reuse distance in the test run is larger than in the train run • number of patterns differs between the two training runs

Prediction Details • Other patterns • 183.equake has 13.6% square root patterns • 200.sixtrack, 186.crafty all constant (no data size change) • Low coverage • 189.lucas – 31% of static memory operations do not appear in training runs • 164.gzip – the test reuse distance greater than train reuse distance • cache-line alignment

Miss Rate Prediction • Predict a miss for a reference if the backward reuse distance is greater than the cache size. • neglects conflict misses • Accurate miss rate prediction
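
The following is a minimal sketch of this rule on an address trace. It assumes a fully associative view of the cache, with capacity measured in cache lines. It also treats first-time accesses (no previous reference) as misses. The backward reuse distance is computed naively here, in quadratic time, purely for illustration. The function names are hypothetical.

```python
from typing import Hashable, List, Optional, Sequence


def backward_reuse_distances(trace: Sequence[Hashable]) -> List[Optional[int]]:
    """For each reference, the number of distinct addresses touched since the
    previous reference to the same address (None for a first reference).
    Naive O(n^2) version, for illustration only."""
    last_seen: dict = {}
    distances: List[Optional[int]] = []
    for i, addr in enumerate(trace):
        j = last_seen.get(addr)
        distances.append(None if j is None else len(set(trace[j + 1:i])))
        last_seen[addr] = i
    return distances


def predicted_miss_rate(distances: Sequence[Optional[int]], cache_lines: int) -> float:
    """Predict a miss when the backward reuse distance exceeds the cache size.
    First references count as misses; conflict misses are ignored."""
    if not distances:
        return 0.0
    misses = sum(1 for d in distances if d is None or d > cache_lines)
    return misses / len(distances)


trace = ["A", "B", "C", "A", "B", "B"]            # cache-line addresses
dists = backward_reuse_distances(trace)
print(dists, predicted_miss_rate(dists, cache_lines=1))
```

For the trace A B C A B B, the distances are None, None, None, 2, 2, 0. A one-line cache is therefore predicted to miss 5 of the 6 references, and a two-line cache 3 of 6.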

Miss Rate Prediction Methodology • Three miss-rate prediction schemes • TCS – test cache simulation • Use the actual miss rates from running the program on the test data as the predicted miss rates for the reference data • RRD – reference reuse distance • Use the actual reuse distance of the reference data set to predict the miss rate for the reference data set • An upper bound on using reuse distance • PRD – predicted reuse distance • Use the predicted reuse distance for the reference data set to predict the miss rate

Critical Instructions • Given reuse distance for an instruction • Can we determine which instructions are critical in terms of cache performance? • An instruction is critical if it is in the set of instructions that generate the most L2 cache misses • Those top miss-rate instructions whose cumulative total misses account for 95% of the misses in a program • Use the execution frequency of one training run to determine the relative number of misses contributed by each instruction • Compare the actual critical instructions with the predicted ones • Use cache configuration 2
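
The selection of critical instructions can be sketched as follows. Two assumptions apply. Each instruction's miss count is estimated as its predicted miss rate times its execution frequency from one training run. Instructions are then ranked by that estimated count. The comparison reports the fraction of actual critical instructions that the prediction also marks as critical. All names are hypothetical.

```python
from typing import Dict, Set


def critical_instructions(miss_rate: Dict[str, float], exec_freq: Dict[str, int],
                          coverage: float = 0.95) -> Set[str]:
    """Shortest prefix of instructions, ranked by estimated misses, whose
    cumulative misses reach `coverage` of the program total."""
    misses = {pc: rate * exec_freq.get(pc, 0) for pc, rate in miss_rate.items()}
    total = sum(misses.values())
    chosen: Set[str] = set()
    running = 0.0
    for pc, m in sorted(misses.items(), key=lambda kv: kv[1], reverse=True):
        if total == 0 or running >= coverage * total:
            break
        chosen.add(pc)
        running += m
    return chosen


def identified_fraction(actual: Set[str], predicted: Set[str]) -> float:
    """Share of the actual critical instructions that the prediction also finds
    (defined as 1.0 when there are no actual critical instructions)."""
    return len(actual & predicted) / len(actual) if actual else 1.0


rates = {"a": 0.5, "b": 0.1, "c": 0.0, "d": 0.9}    # hypothetical predicted miss rates
freq = {"a": 100, "b": 100, "c": 1000, "d": 10}     # hypothetical execution counts
print(critical_instructions(rates, freq))
```

Weighting by execution frequency matters here: instruction "d" has the highest miss rate but executes rarely, so it contributes fewer misses than "a".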

Miss Rate Discussion • PRD performs better than TCS when data size is a factor • TCS performs better when data size doesn’t change much and there are conflict misses • PRD is much better at identifying the critical instructions than TCS • these instructions should be targets of optimization

Memory Disambiguation • Load speculation • Can a load safely be issued prior to a preceding store? • Use memory distance to predict the likelihood that a store to the same address has not finished • Access distance • The number of memory operations between a store to and a load from the same address • Correlated to instruction distance and window size • Use only two runs • If access distance is not constant, use the access distance of the larger of the two data sets as a lower bound on access distance
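
A sketch of access distance on a memory trace follows. It assumes each trace entry is an (operation, address) pair. It also assumes a load's access distance is measured to the most recent preceding store to the same address. The second helper applies the lower-bound rule from the slide. All names are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple


def access_distances(trace: Sequence[Tuple[str, int]]) -> List[Optional[int]]:
    """For each load, the number of memory operations between it and the most
    recent store to the same address (None if no such store precedes it)."""
    last_store: dict = {}
    result: List[Optional[int]] = []
    for i, (op, addr) in enumerate(trace):
        if op == "store":
            last_store[addr] = i
        elif op == "load":
            j = last_store.get(addr)
            result.append(None if j is None else i - j - 1)
        else:
            raise ValueError(f"unknown operation {op!r}")
    return result


def access_distance_bound(d_small_input: int, d_large_input: int) -> Tuple[int, bool]:
    """Combine the two training runs: a constant distance is used as is;
    otherwise the larger input's distance serves as a lower bound.
    Returns (distance, is_lower_bound)."""
    if d_small_input == d_large_input:
        return d_small_input, False
    return d_large_input, True


trace = [("store", 0x10), ("load", 0x20), ("store", 0x20), ("load", 0x10), ("load", 0x20)]
print(access_distances(trace))
```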

When to Speculate • Definitely “no” • access distance less than threshold • Definitely “yes” • access distance greater than threshold • Threshold lies between intervals • compute predicted mis-speculation frequency (PMSF) • speculate if PMSF < 5% • When the threshold does not intersect any interval • PMSF is the total of the frequencies that lie below the threshold • Otherwise
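
The decision procedure can be sketched as below. PMSF is taken as the fraction of total frequency that lies below the threshold, and the 5% limit comes from the slide. The slide does not state the formula for a threshold that falls inside an interval. For that case, this sketch assumes distances are uniformly distributed within the interval; that choice is our assumption, not the authors' method. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Interval:
    """One access-distance pattern of a load: range and frequency."""
    minimum: int
    maximum: int
    frequency: float


def predicted_misspeculation_frequency(intervals: Sequence[Interval], threshold: int) -> float:
    """Fraction of a load's dynamic instances whose access distance is predicted
    to fall below the threshold (the store may not have finished)."""
    total = sum(iv.frequency for iv in intervals)
    if total == 0:
        raise ValueError("no access-distance data for this load")
    below = 0.0
    for iv in intervals:
        if iv.maximum < threshold:
            below += iv.frequency                      # interval entirely below
        elif iv.minimum < threshold:
            # ASSUMPTION: the threshold cuts this interval; take a uniform share
            below += iv.frequency * (threshold - iv.minimum) / (iv.maximum - iv.minimum)
    return below / total


def should_speculate(intervals: Sequence[Interval], threshold: int, limit: float = 0.05) -> bool:
    if all(iv.maximum < threshold for iv in intervals):
        return False                                   # definitely "no"
    if all(iv.minimum >= threshold for iv in intervals):
        return True                                    # definitely "yes"
    return predicted_misspeculation_frequency(intervals, threshold) < limit
```

A load with no recorded intervals falls into the first branch and is not speculated, which is the conservative choice.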

Value-based Prediction • Memory dependence only if addresses and values match • store a1, v1; store a2, v2; store a3, v3; load a4, v4 • the load can move ahead of the third store if a1 = a2 = a3 = a4, v2 = v3 and v1 ≠ v2 • The access distance of a load to the first store in a sequence of stores storing the same value is called the value distance
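
Access distance and value distance can be contrasted on a small trace. This sketch assumes trace entries of the form (operation, address, value). It treats a sequence of stores as consecutive stores to the same address that write the same value. The function name is hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Entry = Tuple[str, int, int]  # (operation, address, value)


def access_and_value_distances(
        trace: Sequence[Entry]) -> List[Tuple[Optional[int], Optional[int]]]:
    """For each load, return (access distance, value distance).

    Access distance: memory operations between the load and the most recent
    store to its address. Value distance: memory operations between the load
    and the first store of the latest run of stores to that address that all
    wrote the same value.
    """
    last_store: dict = {}   # addr -> index of latest store
    run_start: dict = {}    # addr -> (index of first store in run, value)
    result: List[Tuple[Optional[int], Optional[int]]] = []
    for i, (op, addr, value) in enumerate(trace):
        if op == "store":
            last_store[addr] = i
            start = run_start.get(addr)
            if start is None or start[1] != value:
                run_start[addr] = (i, value)   # a new value begins a new run
        elif op == "load":
            if addr not in last_store:
                result.append((None, None))
            else:
                result.append((i - last_store[addr] - 1, i - run_start[addr][0] - 1))
        else:
            raise ValueError(f"unknown operation {op!r}")
    return result


# The slide's example: a1 = a2 = a3 = a4, v1 != v2, v2 = v3.
example = [("store", 0xA, 1), ("store", 0xA, 2), ("store", 0xA, 2), ("load", 0xA, 2)]
print(access_and_value_distances(example))  # [(0, 1)]
```

In the slide's example, the load has access distance 0 but value distance 1. It therefore only has to follow the second store, not the third.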

Experimental Design • SPEC CPU2000 programs • SPEC CFP2000 • 171.swim, 172.mgrid, 173.applu, 177.mesa, 179.art, 183.equake, 188.ammp, 301.apsi • SPEC CINT2000 • 164.gzip, 175.vpr, 176.gcc, 181.mcf, 186.crafty, 197.parser, 253.perlbmk, 300.twolf • Compile with gcc 2.7.2 -O3 • Comparison • Access distance, value distance • Store set with 16KB table, also with values • Perfect disambiguation

Summary • Over 90% of memory operations can have their reuse distance predicted, with 97% and 93% accuracy for floating-point and integer programs, respectively • We can accurately predict miss rates for floating-point and integer codes • We can identify 92% of the instructions that cause 95% of the L2 misses • Access- and value-distance-based memory disambiguation is competitive with the best hardware techniques without requiring a hardware table

Future Work • Develop a prefetching mechanism that uses the identified critical loads. • Develop an MLP (memory-level parallelism) system that uses critical loads and access distance. • Path-sensitive memory distance analysis • Apply memory distance to working-set-based cache optimizations • Apply access distance to EPIC-style architectures for memory disambiguation.