Direct Volume Rendering

Direct Volume Rendering. Acknowledgement : Han-Wei Shen Lecture Notes 사용. Direct Volume Rendering. Direct : no conversion to surface geometry Three methods Ray-Casting Splatting 3D Texture-Based Method. Data Representation.

Direct Volume Rendering

E N D

Presentation Transcript

Direct Volume Rendering Acknowledgement : Han-Wei Shen Lecture Notes 사용

Direct Volume Rendering • Direct : no conversion to surface geometry • Three methods • Ray-Casting • Splatting • 3D Texture-Based Method

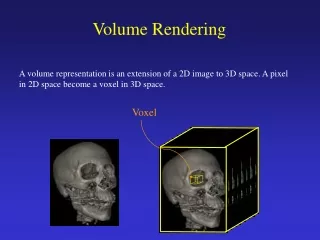

Data Representation • 3D volume data are represented by a finite number of cross sectional slices (hence a 3D raster) • On each volume element (voxel), stores a data value (if it uses only a single bit, then it is a binary data set. Normally, we see a gray value of 8 to 16 bits on each voxel.) N x 2D arraies = 3D array

A voxel is a cubic cell, which has a single value cover the entire cubic region A voxel is a data point at a corner of the cubic cell The value of a point inside the cell is determined by interpolation Data Representation (2) What is a Voxel? – Two definitions

Basic Idea Based on the idea of ray tracing • Trace from eat each pixel • as a ray into object space • Compute color value along • the ray • Assign the value to the • pixel

Viewing • Ray Casting • Where to position the volume and image plane • What is a ‘ray’ • How to march a ray

Viewing (1) 1. Position the volume Assuming the volume dimensions is w x w x w We position the center of the volume at the world origin Volume center = [w/2,w/2,w/2] (local space) Translate T(-w/2,-w/2,-w/2) (0,0,0) x (data to world matrix? world to data matrix ) y z

(0,0,0) x y z Viewing (2) 2. Position the image plane Assuming the distance between the image plane and the volume center is D, and initially the center of the image plane is (0,0,-D) Image plane

Viewing (3) 3. Rotate the image plane A new position of the image plane can be defined in terms of three rotation angle a,b,g with respect to x,y,z axes Assuming the original view vector is [0,0,1], then the new view vector g becomes: cosb 0 -sinb 1 0 0 cosg sing 0 g = [0,0,1]0 1 0 0 cosa sina -sing cosg 0 sinb 0 cosb 0 -sina cosa 0 0 1

E0 u0 E v v0 u + S0 S (0,0,0) x y z Viewing (4) B = [0,0,0] S0 = [0,0,-D] u0 = [1,0,0] v0 = [0,1,0] B Now, R: the rotation matrix S = B – D x g U = [1,0,0] x R V = [0,1,0] x R

Viewing (5) Image Plane: L x L pixels Then E = S – L/2 x u – L/2 x v So Each pixel (i,j) has coordinates P = E + i x u + j x v S + v u E R: the rotation matrix S = B – D x g U = [1,0,0] x R V = [0,1,0] x R We enumerate the pixels by changing i and j (0..L-1)

d p x x x x Q Viewing (6) 4. Cast rays Remember for each pixel on the image plane P = E + i x u + j x v and the view vector g = [0,0,1] x R So the ray has the equation: Q = P + k (d x g) d: the sampling distance at each step K = 0,1,2,…

Old Methods • Before 1988 • Did not consider transparency • did not consider sophisticated light transportation theory • were concerned with quick solutions • hence more or less applied to binary data non-binary data - require sophisticated classification/compositing methods!

Ray Tracing -> Ray Casting • “another” typical method from traditional graphics • Typically we only deal with primary rays -hence: ray-casting • a natural image-order technique • as opposed to surface graphics - how do we calculate the ray/surface intersection??? • Since we have no surfaces - we need to carefully step through the volume

Ray Casting • Stepping through the volume: a ray is cast into the volume, sampling the volume at certain intervals • The sampling intervals are usually equi-distant, but don’t have to be (e.g. importance sampling) • At each sampling location, a sample is interpolated / reconstructed from the grid voxels • popular filters are: nearest neighbor (box), trilinear (tent), Gaussian, cubic spline • Along the ray - what are we looking for?

Example: Using the nearest neighbor kernel Q = P + K x V (v=dxg) At each step k, Q is rounded off to the nearest voxel (like the DDA algorithm) Check if the voxel is on the boundary or not (compare against a threshold) If yes, perform shading In tuys’ paper

Basic Idea of Ray-casting Pipeline • Data are defined at the corners • of each cell (voxel) • The data value inside the • voxel is determined using • interpolation (e.g. tri-linear) • Composite colors and opacities • along the ray path • Can use other ray-traversal schemes as well c1 c2 c3

Ray Traversal Schemes Intensity Max Average Accumulate First Depth

Ray Traversal - First • First: extracts iso-surfaces (again!)done by Tuy&Tuy ’84 Intensity First Depth

Ray Traversal - Average • Average: produces basically an X-ray picture Intensity Average Depth

Ray Traversal - MIP • Max: Maximum Intensity Projectionused for Magnetic Resonance Angiogram Intensity Max Depth

Ray Traversal - Accumulate • Accumulate opacity while compositing colors: make transparent layers visible!Levoy ‘88 Intensity Accumulate Depth

1.0 Raycasting volumetric compositing color opacity object (color, opacity)

Raycasting Interpolationkernel volumetric compositing color opacity 1.0 object (color, opacity)

1.0 Raycasting Interpolationkernel volumetric compositing color c = c s s(1 - ) + c opacity = s (1 - ) + object (color, opacity)

Raycasting volumetric compositing color opacity 1.0 object (color, opacity)

Raycasting volumetric compositing color opacity 1.0 object (color, opacity)

Raycasting volumetric compositing color opacity 1.0 object (color, opacity)

Raycasting volumetric compositing color opacity 1.0 object (color, opacity)

Raycasting volumetric compositing color opacity object (color, opacity)

Volume RenderingPipeline Acquired values Data preparation Prepared values shading classification Voxel colors Voxel opacities Ray-tracing / resampling Ray-tracing / resampling Sample colors Sample opacities compositing Image Pixels

Common Components of General Pipeline • Interpolation/reconstruction • Classification or transfer function • Gradient/normal estimation for shading • Question: are normals also interpolated?

Shading and Classification • - Shading: compute a color(lighting) for each data point in the • volume • - Classification: Compute color and opacity for each data point • in the volume • Done by table lookup (transfer function) • Levoy preshaded the entire volume f(xi) C(xi), a(xi)

Shading Common shading model – Phong model For each sample, evaluate: C = ambient + diffuse + specular = constant +Ip Kd (N.L) + Ip Ks (N.H) Ip: emission color at the sample N: normal at the sample i n

Gradient/Normals (Levoy 1988) • Central difference • per voxel Y+1 y-1, z-1 X+1

Shading (Levoy 1988) • Phong Shading + Depth Cueing • Cp = color of parallel light source • ka / kd / ks = ambient / diffuse / specular light coefficient • k1, k2 = fall-off constants • d(x) = distance to picture plane • L = normalized vector to light • H = normalized vector for maximum highlight • N(xi) = surface normal at voxel xi

Compositing you can use ‘Front-to-Back’ Compositing formula Front-to-Back compositing: use ‘over’ operator C = backgrond ‘over’ C1 C = C ‘over’ C2 C = C ‘over’ C3 … Cout = Cin + C(x)*(1- ain); aout = ain + a(x) *(1-ain) c1 c2 c3

Classification Map from numerical values to visual attributes Color Transparency Transfer functions Color function: c(s) Opacity function: a(s) 21.05 27.05 24.03 20.05

Classification/Transfer Function • Maps raw voxel value into presentable entities: color, intensity, opacity, etc. Raw-data material (R, G, B, a, Ka, Kd, Ks, ...) • May require probabilistic methods (Drebin). Derive material volume from input. Estimate % of each material in all voxels. Pre-computed. AKA segmentation. • Often use look-up tables (LUT) to store the transfer function that are discovered

Levoy - Classification • Usually not only interested in a particular iso-surface but also in regions of “change” • Feature extraction - High value of opacity exists in regions of change • Transfer function (Levoy) - Saliency • Surface “strength”

Opacity function (1) • Goal: visualize voxels that have a selected threshold • value fv • - No intermediate geometry is extracted • - The idea is to assign voxels that have value fv the • maximum opacity (say a) • And then create a smooth transition for the surrounding • area from 1 to 0 • Levoy wants to maintain a constant thickness for the • transition area.

Opacity function (2) Maintain a constant isosurface thickness Can we assign opacity based on function value instead of distance? (local operation: we don’t know where the isosurface is) Yes – we can based on the value distance f – fv but we need to take into account the local gradient opacity = a opacity = 0

Opacity function (3) Assign opacity based on value difference (f-fv) and local gradient gradient: the value fall-off rate grad = Df/Ds Assuming a region has a constant gradient and the isosurface transition has a thickness R F = f(x) Then we interpolate the opacity opacity = a – a * ( fv-f(x))/ (grad * R) opacity = a F = fv opacity = 0 F = fv – grad * R thickness = R

DCH - Material Percentage V. • Probabilistic classifier • probability that a voxel has intensity I: • pi - percentage of material • Pi(I) - prob. that material i has value I • Pi(I) given through statistics/physics • pi then given by:

DCH - Classification • Like Levoy - assumes only two materials per voxel • that will lead to material percentage volumes • from them we conclude color/opacity: • where Ci=(aiRi, aiGi, aiBi, ai)

Levoy - Improvements • Levoy 1990 • front-to-back with early ray terminationa = 0.95 • hierarchical oct-tree data structure • skip empty cells efficiently