Partial Least Squares

Partial Least Squares. Very brief intro. Multivariate regression. The multiple regression approach creates a linear combination of the predictors that best correlates with the outcome

Partial Least Squares

E N D

Presentation Transcript

Partial Least Squares Very brief intro

Multivariate regression • The multiple regression approach creates a linear combination of the predictors that best correlates with the outcome • With principal components regression, we first create several linear combinations (equal to the number of predictors) and then use those composites in predicting the outcome instead of the original predictors • Components are independent • Helps with collinearity • Can use fewer of components relative to predictors while still retaining most of the predictor variance

Multiple Regression Note the bold, we are dealing with vectors and matrices X1 X2 Linear Composite Outcome X3 X4 Principal Components Regression Here T refers to our components, W and Q are coefficient vectors as B is above LinComp X1 LinComp X2 New Composite Outcome LinComp X3 LinComp X4

Partial Least Squares • Partial Least Squares is just like PC Regression except in how the component scores are computed • PC regression = weights are calculated from the covariance matrix of the predictors • PLS = weights reflect the covariance structure between predictors and response • While conceptually not too much of a stretch, it requires a more complicated iterative algorithm • Nipals and SIMPLS algorithms probably most common • Like in regression, the goal is to maximize the correlation between the response(s) and component scores

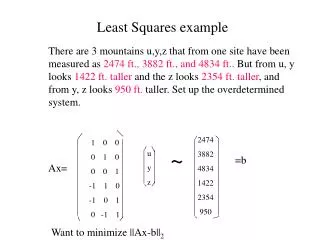

Example • Download the PCA R code again • Requires the pls package • Do consumer ratings of various beer aspects associate1 with their SES?

Multiple regression • All are statistically significant correlates of SES and almost all the variance is accounted for (98.7%) Coefficients: Estimate Std. Error t value Pr(>|t|) (Intercept) 0.534025 0.134748 3.963 0.000101 *** ALCOHOL -0.004055 0.001648 -2.460 0.014686 * AROMA 0.036402 0.001988 18.310 < 2e-16 *** COLOR 0.007610 0.002583 2.946 0.003578 ** COST -0.002414 0.001109 -2.177 0.030607 * REPUTAT 0.014460 0.001135 12.744 < 2e-16 *** SIZE -0.043639 0.001947 -22.417 < 2e-16 *** TASTE 0.036462 0.002338 15.594 < 2e-16 *** --- Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 Residual standard error: 0.2877 on 212 degrees of freedom (11 observations deleted due to missingness) Multiple R-squared: 0.987, Adjusted R-squared: 0.9866 F-statistic: 2305 on 7 and 212 DF, p-value: < 2.2e-16

PC Regression • For first 3 components • The first component accounts for 53.4% of the variance in the predictors, and only 33% of the variance in the outcome • With the second and third, the vast majority of the variance in the predictors and outcome is accounted for • Loadings breakdown according to a PCA for the predictors Data: X dimension: 220 7 Y dimension: 220 1 Fit method: svdpc Number of components considered: 3 TRAINING: % variance explained 1 comps 2 comps 3 comps X 53.38 85.96 92.19 SES 33.06 95.26 95.35 Loadings: Comp 1 Comp 2 Comp 3 COST -0.546 -0.185 SIZE -0.574 -0.333 ALCOHOL -0.534 -0.110 REPUTAT 0.246 -0.221 -0.890 AROMA 0.554 COLOR -0.120 0.568 -0.298 TASTE 0.519 Comp 1 Comp 2 Comp 3 SS loadings 1.000 1.000 1.000 Proportion Var 0.143 0.143 0.143 Cumulative Var 0.143 0.286 0.429

PLS Regression • For first 3 components • The first component accounts for 44.8% of the variance in the predictors (almost 10% less than PCR), and 90% of the variance in the outcome (a lot more than PCR) • The loadings are notably different compared to the PC regression Data: X dimension: 220 7 Y dimension: 220 1 Fit method: kernelpls Number of components considered: 3 TRAINING: % variance explained 1 comps 2 comps 3 comps X 44.81 85.89 89.72 SES 90.05 95.92 97.90 Loadings: Comp 1 Comp 2 Comp 3 COST -0.573 0.287 0.781 SIZE -0.542 0.365 -0.291 ALCOHOL -0.523 0.315 -0.359 REPUTAT -0.326 0.709 AROMA 0.234 0.450 COLOR 0.218 0.483 TASTE 0.236 0.410 0.146 Comp 1 Comp 2 Comp 3 SS loadings 1.062 1.024 1.353 Proportion Var 0.152 0.146 0.193 Cumulative Var 0.152 0.298 0.491

Comparison of coefficients Coefficients: Estimate (Intercept) 0.534 COST -0.002 SIZE -0.044 ALCOHOL -0.004 REPUTAT 0.014 AROMA 0.036 COLOR 0.008 TASTE 0.036 • MR • PCA • PLS (Intercept) 2.500 COST -0.022 SIZE -0.017 ALCOHOL -0.018 REPUTAT -0.000 AROMA 0.023 COLOR 0.024 TASTE 0.022 (Intercept) 0.964 COST -0.002 SIZE -0.034 ALCOHOL -0.017 REPUTAT 0.012 AROMA 0.027 COLOR 0.019 TASTE 0.031

Why PLS? • PLS can extends to multiple outcomes and allows for dimension reduction • Less restrictive in terms of assumptions than MR • Distribution free • No collinearity • Independence of observations not required • Unlike PCR it creates components with an eye to the predictor-DV relationship • Unlike Canonical Correlation, it maintains the predictive nature of the model • While similar interpretation is possible, depending on your research situation and goals, any may be viable analyses