Presentation Transcript

Slide 2: OUTLINE

Motivation

Concept indexing with WordNet synsets

Concept indexing in ATC

Experiments set-up

Summary of results & discussion

Updated results

Conclusions & current work

Slide 3: MOTIVATION

Most popular & effective model for thematic ATC (Automated Text Categorization)

IR-like text representation

ML feature selection, learning classifiers

Slide 4: MOTIVATION

Bag of Words

Binary

TF

TF*IDF

Stoplist

Stemming

Feature Selection
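The weighting schemes listed above can be sketched in a few lines. This is a minimal sketch, assuming documents are already tokenized (stop-listing and stemming would happen during tokenization) and assuming the common tf * log(N/df) variant of TF*IDF; the slides do not say which variant was used, and the function name `bag_of_words` is hypothetical.

```python
import math
from collections import Counter


def bag_of_words(docs, weighting="tfidf"):
    """Return one {term: weight} dict per tokenized document.

    weighting: "binary" (1 if the term occurs), "tf" (raw count)
    or "tfidf" (raw count * log(N / df)).
    """
    n_docs = len(docs)
    # Document frequency: number of documents containing each term.
    df = Counter(term for doc in docs for term in set(doc))
    vectors = []
    for doc in docs:
        tf = Counter(doc)
        if weighting == "binary":
            vec = {t: 1 for t in tf}
        elif weighting == "tf":
            vec = dict(tf)
        elif weighting == "tfidf":
            vec = {t: c * math.log(n_docs / df[t]) for t, c in tf.items()}
        else:
            raise ValueError(f"unknown weighting: {weighting}")
        vectors.append(vec)
    return vectors


docs = [["car", "engine", "car"], ["car", "wheel"], ["bank", "money"]]
print(bag_of_words(docs, "tf")[0])  # {'car': 2, 'engine': 1}
```

With this IDF variant, a term that occurs in every document gets weight zero under TF*IDF.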

Slide 5: MOTIVATION

Text representation requirements in thematic ATC

Semantic characterization of text content

Words convey an important part of the meaning

But we must deal with polysemy and synonymy

Must allow effective learning

Thousands to tens of thousands of attributes

→ noise (hurts effectiveness) & lack of efficiency

Slide 6: CONCEPT INDEXING WITH WORDNET SYNSETS

Using vectors of synsets instead of word stems

Ambiguous words mapped to correct senses

Synonyms mapped to same synsets
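A toy illustration of the idea: each (word, sense) pair is mapped to a synset identifier, so synonyms collapse into one feature while the two senses of an ambiguous word become two different features. The sense inventory and synset identifiers below are hypothetical (real WordNet synsets are identified by offsets), and the sense labels are assumed to be given, as in a hand-disambiguated corpus.

```python
from collections import Counter

# Hypothetical sense inventory: (word, sense number) -> synset identifier.
# Real WordNet synsets are identified by offsets; these IDs are made up.
SENSE_INVENTORY = {
    ("car", 1): "S_car",
    ("automobile", 1): "S_car",       # synonym: same synset as car#1
    ("bank", 1): "S_sloping_land",    # ambiguous word: two different synsets
    ("bank", 2): "S_depository",
    ("money", 1): "S_money",
}


def synset_vector(tagged_tokens):
    """Count synsets for a list of (word, sense) pairs (TF weighting)."""
    return Counter(SENSE_INVENTORY[(word.lower(), sense)] for word, sense in tagged_tokens)


doc_a = [("car", 1), ("bank", 1)]
doc_b = [("Automobile", 1), ("bank", 2), ("money", 1)]
print(synset_vector(doc_a))
print(synset_vector(doc_b))
```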

Slide 7: CONCEPT INDEXING WITH WORDNET SYNSETS

Considerable controversy in IR

Assumed potential for improving text representation

Mixed experimental results, ranging from

Very good [Gonzalo et al., 98] to bad [Voorhees, 98]

Recent review in [Stokoe et al., 03]

The problem: limited effectiveness of state-of-the-art WSD

But ATC is different!!!

Slide 8: CONCEPT INDEXING IN ATC

Apart from the potential...

We have much more information about ATC categories than about IR queries

The lack of WSD effectiveness may hurt less, thanks to term (feature) selection

But we have new problems!!!

Data sparseness & noise

Most terms are rare (Zipf's Law) → bad estimates

Categories with few documents → bad estimates, lack of information
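To make the sparseness point concrete, the sketch below computes the share of distinct terms that occur in at most one document of a toy corpus; any statistic for such a term rests on a single observation. The corpus and the function name are hypothetical.

```python
from collections import Counter


def rare_term_ratio(docs, max_df=1):
    """Fraction of distinct terms occurring in at most max_df documents."""
    df = Counter(term for doc in docs for term in set(doc))
    rare = sum(1 for count in df.values() if count <= max_df)
    return rare / len(df)


docs = [["car", "engine", "car"], ["car", "wheel"], ["bank", "money", "car"]]
print(rare_term_ratio(docs))  # 0.8: only "car" occurs in more than one document
```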

Slide 9: CONCEPT INDEXING IN ATC

Concept indexing helps solve both the IR problems and the new ATC problems

Text ambiguity in IR & ATC

Data sparseness & noise in ATC

Fewer indexing units of higher quality (after selection) → probably better estimates

Categories with few documents → why not enrich the representation with WordNet semantic relations?

Hyperonymy, meronymy, etc.
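One possible way to exploit hyperonymy, sketched under assumptions: each synset in a document also adds its count to its ancestors up to a fixed depth, so a document about cars and one about trucks end up sharing a "motor vehicle" feature. The hierarchy, the identifiers and the `depth` parameter are hypothetical; the slides only raise the idea.

```python
from collections import Counter

# Hypothetical hyperonym (is-a) links between made-up synset IDs.
HYPERNYM = {
    "S_car": "S_motor_vehicle",
    "S_truck": "S_motor_vehicle",
    "S_motor_vehicle": "S_vehicle",
}


def add_hypernyms(synset_counts, depth=1):
    """Copy synset_counts, adding each synset's ancestors up to `depth` levels.

    Every ancestor receives the count of the synset it was reached from.
    """
    enriched = Counter(synset_counts)
    for synset, count in synset_counts.items():
        current = synset
        for _ in range(depth):
            current = HYPERNYM.get(current)
            if current is None:
                break
            enriched[current] += count
    return enriched


doc_a = Counter({"S_car": 2})
doc_b = Counter({"S_truck": 1})
shared = set(add_hypernyms(doc_a)) & set(add_hypernyms(doc_b))
print(shared)  # {'S_motor_vehicle'}
```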

Slide 10: CONCEPT INDEXING IN ATC

Literature review

As in IR, mixed results, ranging from

Good [Fukumoto & Suzuki, 01] to bad [Scott, 98]

Notably, researchers use words in synsets instead of the synset codes themselves

Still lacking

Slide 11: EXPERIMENTS SETUP

Primary goal

Comparing terms vs. correct synsets as indexing units

Requires a perfectly disambiguated collection (SemCor)

Secondary goals

Comparing perfect WSD with simple methods

More scalability, less accuracy

Comparing terms with and without stemming and stop-listing

Nature of SemCor (genre + topic classification)

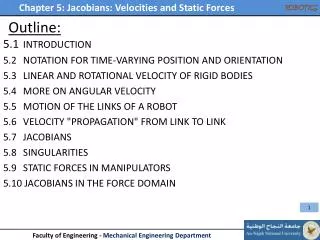

Slide 12: EXPERIMENTS SETUP

Overview of parameters

Binary classifiers vs. multi-class classifiers

Three concept indexing representations

Correct WSD (CD)

WSD by POS Tagger (CF)

WSD by corpus frequency (CA)
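The slides do not spell out how CF and CA choose a sense. The sketch below assumes both are most-frequent-sense heuristics: CF keeps only the senses whose part of speech matches the tag assigned by a POS tagger, while CA ignores the POS. CD needs no code, since it reads the correct sense from the annotated corpus. The sense inventory, the frequencies and the function names are hypothetical.

```python
# Hypothetical inventory: word -> list of (synset_id, pos, corpus_frequency).
# IDs and frequencies are made up for illustration.
SENSES = {
    "run": [("S_run_move", "v", 40), ("S_run_score", "n", 10), ("S_run_operate", "v", 25)],
    "bank": [("S_bank_money", "n", 20), ("S_bank_river", "n", 15), ("S_bank_tilt", "v", 2)],
}


def most_frequent(senses):
    """Return the synset ID with the highest corpus frequency."""
    return max(senses, key=lambda sense: sense[2])[0]


def sense_cf(word, pos):
    """CF (assumed): most frequent sense among those matching the tagger's POS.

    Falls back to all senses if none matches the tag.
    """
    candidates = [s for s in SENSES[word] if s[1] == pos]
    return most_frequent(candidates or SENSES[word])


def sense_ca(word):
    """CA (assumed): most frequent sense of the word, ignoring POS."""
    return most_frequent(SENSES[word])


print(sense_ca("run"), sense_cf("run", "n"))  # S_run_move S_run_score
```

The design trades accuracy for scalability, as the secondary goals note: neither heuristic needs sense-annotated text at indexing time.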

Slide 13: EXPERIMENTS SETUP

Overview of parameters

Four term indexing representations

Without stemming, without stoplist (BNN)

Without stemming, with stoplist (BNS)

With stemming, without stoplist (BSN)

With stemming, with stoplist (BSS)
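The four term representations differ only in two preprocessing switches. A minimal sketch, assuming whitespace tokenization, a tiny hypothetical stoplist and a toy suffix-stripping stemmer standing in for a real one such as Porter's; applying the stoplist before stemming is also an assumption.

```python
# Hypothetical stoplist and toy suffix-stripping stemmer; a real setup
# would use a full stoplist and a proper stemmer such as Porter's.
STOPLIST = {"the", "a", "of", "and", "in", "is"}
SUFFIXES = ("ing", "ies", "es", "s", "ed")


def toy_stem(word):
    """Strip the first matching suffix if at least 3 characters remain."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def index_terms(text, stem, stoplist):
    """Whitespace-tokenize, then optionally stop-list and stem."""
    tokens = text.lower().split()
    if stoplist:
        tokens = [t for t in tokens if t not in STOPLIST]
    if stem:
        tokens = [toy_stem(t) for t in tokens]
    return tokens


# The four term indexing representations from the slide.
CONFIGS = {
    "BNN": {"stem": False, "stoplist": False},
    "BNS": {"stem": False, "stoplist": True},
    "BSN": {"stem": True, "stoplist": False},
    "BSS": {"stem": True, "stoplist": True},
}

text = "The cars of the bank"
for name, cfg in CONFIGS.items():
    print(name, index_terms(text, **cfg))
```

With stemming, `cars` becomes `car`; with the stoplist, `the` and `of` are dropped.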

Slide 14: EXPERIMENTS SETUP

Levels of selection with Information Gain (IG)

No selection (NOS)

Top 1% (S01)

Top 10% (S10)

IG > 0 (S00)
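A sketch of IG-based selection at the four levels, assuming IG is computed for the binary feature "term occurs in the document" against the category labels, IG(t) = H(C) - [P(t) H(C|t) + P(not t) H(C|not t)], and assuming a percentage level keeps at least one term. Function names and the rounding rule are assumptions, not details from the slides.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (bits) of a list of category labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(docs, labels, term):
    """IG of the binary feature 'term occurs in the document' w.r.t. labels."""
    with_t = [y for d, y in zip(docs, labels) if term in d]
    without_t = [y for d, y in zip(docs, labels) if term not in d]
    n = len(labels)
    conditional = (len(with_t) / n) * entropy(with_t) + (len(without_t) / n) * entropy(without_t)
    return entropy(labels) - conditional


def select_terms(docs, labels, level):
    """Selected vocabulary for level NOS, S01 (top 1%), S10 (top 10%) or S00 (IG > 0)."""
    vocab = sorted({t for d in docs for t in d})
    if level == "NOS":
        return vocab
    ig = {t: information_gain(docs, labels, t) for t in vocab}
    ranked = sorted(vocab, key=lambda t: ig[t], reverse=True)  # ties stay alphabetical
    if level == "S00":
        # Treat floating-point noise around zero as zero.
        return [t for t in ranked if ig[t] > 1e-12]
    fraction = {"S01": 0.01, "S10": 0.10}[level]
    k = max(1, round(len(vocab) * fraction))  # assumption: keep at least one term
    return ranked[:k]


docs = [{"the", "car", "engine"}, {"the", "car", "wheel"},
        {"the", "bank", "money"}, {"the", "bank", "loan"}]
labels = ["auto", "auto", "finance", "finance"]
print(select_terms(docs, labels, "S00"))
```

A term that occurs in every document, such as `the` here, carries no information about the category and is dropped at S00.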

Slide 15: EXPERIMENTS SETUP

Learning algorithms

Naïve Bayes

kNN

C4.5

PART

SVMs

AdaBoost + Naïve Bayes

AdaBoost + C4.5

Slide 16: EXPERIMENTS SETUP

Evaluation metrics

F1 (harmonic mean of recall and precision)

Macroaverage

Microaverage

k-fold cross-validation (k = 10 in our experiments)
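The metrics can be sketched as follows. Macroaveraging computes F1 per category and takes the unweighted mean, so every category counts the same; microaveraging pools the true positive, false positive and false negative counts of all categories before computing a single F1, so frequent categories dominate. The fold splitter uses contiguous folds for simplicity; whether the experiments shuffled or stratified the folds is not stated. Function names and the example counts are hypothetical.

```python
def f1(tp, fp, fn):
    """F1 = 2PR / (P + R): the harmonic mean of precision P and recall R."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def macro_micro_f1(counts):
    """counts maps category -> (tp, fp, fn); returns (macro F1, micro F1)."""
    # Macroaverage: F1 per category, then the unweighted mean.
    macro = sum(f1(*c) for c in counts.values()) / len(counts)
    # Microaverage: pool the counts of all categories, then one F1.
    tp = sum(c[0] for c in counts.values())
    fp = sum(c[1] for c in counts.values())
    fn = sum(c[2] for c in counts.values())
    return macro, f1(tp, fp, fn)


def k_fold_indices(n, k=10):
    """Yield (train, test) index lists for k contiguous, near-equal folds."""
    start = 0
    for i in range(k):
        size = n // k + (1 if i < n % k else 0)
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, test
        start += size


counts = {"large": (90, 10, 10), "small": (1, 4, 4)}
macro, micro = macro_micro_f1(counts)
print(round(macro, 3), round(micro, 3))  # prints 0.55 0.867
```

With one large, accurate category and one small, poor one, the two averages diverge sharply, which is why both are reported.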

Slide 17: SUMMARY OF RESULTS & DISCUSSION

Overview of results

Slide 18: SUMMARY OF RESULTS & DISCUSSION

CD > C* weakly supports that accurate WSD is required

The BNN vs. B* comparison does not support that stemming & stop-listing are NOT required

Genre/topic orientation

Most importantly, the CD vs. B* comparison does not support that synsets are better indexing units than words (stemmed & stop-listed or not)

Slide 19: UPDATED RESULTS

Recent results combining synsets & words (no stemming, no stop-listing, binary problem)

NB → S00, C4.5 → S00, S01, S10

SVM → S01, ABNB → S00, S00, S10
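Combining both kinds of indexing units amounts to taking the union of the two feature spaces. A minimal sketch, assuming TF counts and hypothetical `w:` and `s:` prefixes that keep a word and a synset identifier from colliding; the slides do not describe how the combination was implemented.

```python
from collections import Counter


def combined_features(words, synsets):
    """TF features over the union of words and synsets.

    The hypothetical "w:" and "s:" prefixes keep the two feature spaces apart.
    """
    features = Counter("w:" + w for w in words)
    features.update("s:" + s for s in synsets)
    return features


print(combined_features(["bank", "money"], ["S_depository", "S_money"]))
```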

Slide 20: CONCLUSIONS & CURRENT WORK

Synsets are NOT a better representation, but they IMPROVE the bag-of-words representation

We are testing semantic relations (hyperonymy) on SemCor

More work is required on Reuters-21578

We will have to address WSD, initially with the approaches described in this work

Slide 21: THANK YOU!