Single Variable Regression

Single Variable Regression. Farrokh Alemi, Ph.D. Kashif Haqqi M.D. Additional Reading. For additional reading see Chapters 14 and 15 in Michael R. Middleton’s Data Analysis Using Excel, Duxbury Thomson Publishers, 2000.

Additional Reading • For additional reading see Chapters 14 and 15 in Michael R. Middleton’s Data Analysis Using Excel, Duxbury Thomson Publishers, 2000. • The example described in this lecture is based in part on Chapters 17 and 18 of Keller and Warrack’s Statistics for Management and Economics, Fifth Edition, Duxbury Thomson Learning, 2000. • Read any introductory statistics book about single and multiple variable regression.

Which Approach Is Appropriate When? • Choosing the right method for the data is the key statistical expertise that you need to have. • You might want to review a decision tool that we have organized for you to help you in choosing the right statistical method.

Do I Need to Know the Formulas? • You do not need to know the exact formulas. • You do need to know where they are in your reference book. • You do need to understand the concept behind them and the general statistical concepts embedded in the use of the formulas. • You do not need to be able to do correlation and regression by hand. You must be able to do them on a computer using Excel or other software.

Table of Contents • Objectives • Purpose of Regression • Correlation or Regression? • First Order Linear Model • Probabilistic Linear Relationship • Estimating Regression Parameters • Assumptions • Sum of Squares • Tests • Percent of Variation Explained • Example • Regression Analysis in Excel • Normal Probability Plot • Residual Plot • Goodness of Fit • ANOVA For Regression

Objectives • To learn the assumptions behind and the interpretation of single and multiple variable regression. • To use Excel to calculate regressions and test hypotheses.

Purpose of Regression • To determine whether values of one or more variables are related to the response variable. • To predict the value of one variable based on the value of one or more other variables. • To test hypotheses.

Correlation or Regression? • Use correlation if you are interested only in whether a relationship exists. • Use regression if you are interested in building a mathematical model that can predict the response variable. • Use regression if you are interested in the relative effectiveness of several variables in predicting the response variable.

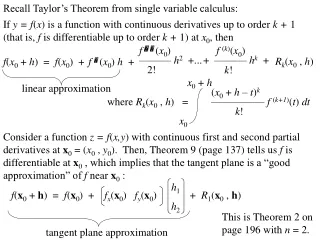

First Order Linear Model • A deterministic mathematical model between y and x: • $y = \beta_0 + \beta_1 x$ • $\beta_0$ is the intercept with the y axis, the value of y at which x = 0 • $\beta_1$ is the slope of the line, the ratio of rise divided by run in the figure. It measures the change in y for one unit of change in x.

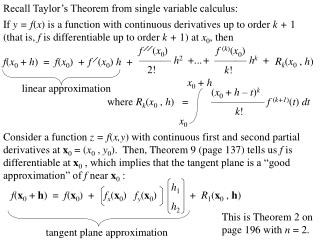

Probabilistic Linear Relationship • But the relationship between x and y is not always exact. Observations do not always fall on a straight line. • To accommodate this, we introduce a random error term referred to as epsilon: $y = \beta_0 + \beta_1 x + \varepsilon$ • The task of regression analysis then is to estimate the parameters $b_0$ and $b_1$ in the equation $\hat{y} = b_0 + b_1 x$ so that the difference between $y$ and $\hat{y}$ is minimized
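
To make the role of the error term concrete, here is a minimal Python sketch that generates observations from a line plus Normal noise. The function name `simulate`, the parameter values, and the x values are illustrative assumptions, not part of the lecture.

```python
import random

def simulate(beta0, beta1, xs, sigma, seed=0):
    """Draw y = beta0 + beta1 * x + epsilon, with epsilon ~ Normal(0, sigma)."""
    rng = random.Random(seed)  # fixed seed so the draw is repeatable
    return [beta0 + beta1 * x + rng.gauss(0.0, sigma) for x in xs]

xs = [1, 2, 3, 4, 5]                        # hypothetical x values
exact = simulate(2.0, 0.5, xs, sigma=0.0)   # no error: points lie on the line
noisy = simulate(2.0, 0.5, xs, sigma=1.0)   # with error: points scatter around it
```

With `sigma=0.0` the model reduces to the deterministic first order model; any positive `sigma` moves the observations off the line.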

Estimating Regression Parameters [Figure labels: Regression line, Residual] • Red dots show the observations • The solid line shows the estimated regression line • The distance between each observation and the solid line is called the residual • Minimize the sum of the squared residuals (differences between line and observations).
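
The minimization above has a well-known closed-form solution for a single x variable. The sketch below computes it in plain Python on a small made-up data set (the numbers are for illustration only and are not the lecture's data); `fit_line` and `sse` are hypothetical helper names.

```python
def fit_line(xs, ys):
    """Return (b0, b1) that minimize the sum of squared residuals."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b1 = sxy / sxx            # slope
    b0 = y_bar - b1 * x_bar   # intercept: the line passes through the means
    return b0, b1

def sse(xs, ys, b0, b1):
    """Sum of squared residuals for the line y_hat = b0 + b1 * x."""
    return sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(xs, ys))

xs = [1, 2, 3, 4, 5]   # made-up data
ys = [2, 4, 5, 4, 5]
b0, b1 = fit_line(xs, ys)      # approximately 2.2 and 0.6
best = sse(xs, ys, b0, b1)     # approximately 2.4
```

Nudging either coefficient away from these estimates, for example `sse(xs, ys, b0 + 0.1, b1)`, gives a larger sum of squared residuals.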

Assumptions • The dependent (response) variable is measured on an interval scale • The probability distribution of the error is Normal with mean zero • The standard deviation of the error is constant and does not depend on the values of x • The error term associated with any particular value of Y is independent of the error terms associated with other values of Y

Sum of Squares • Total variation in y = SSR (variation explained by the regression) + SSE (variation left in the residuals) • MSR divided by MSE is the F test statistic for the ability of the regression to explain the data
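
As an illustration of the decomposition, the following sketch computes the sums of squares and the F ratio for a one-variable fit. It uses a small made-up data set and a hypothetical helper `anova_for_line`; the degrees of freedom (1 for regression, n - 2 for error) are the usual ones for a single predictor.

```python
def anova_for_line(xs, ys):
    """Return SST, SSR, SSE and the F statistic for a one-predictor fit."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    b1 = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
          / sum((x - x_bar) ** 2 for x in xs))
    b0 = y_bar - b1 * x_bar
    fitted = [b0 + b1 * x for x in xs]
    sst = sum((y - y_bar) ** 2 for y in ys)               # total variation
    ssr = sum((f - y_bar) ** 2 for f in fitted)           # explained by the line
    sse = sum((y - f) ** 2 for y, f in zip(ys, fitted))   # left in the residuals
    msr = ssr / 1          # one predictor: 1 regression degree of freedom
    mse = sse / (n - 2)    # n - 2 error degrees of freedom
    return {"SST": sst, "SSR": ssr, "SSE": sse, "F": msr / mse}

table = anova_for_line([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])  # made-up data
```

For this data the total of 6 splits into 3.6 explained and 2.4 unexplained, and F = 3.6 / 0.8 = 4.5.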

Tests • The hypothesis that the regression equation does not explain variation in Y can be tested using the F test. • The hypothesis that the coefficient for x is zero can be tested using the t statistic. • The hypothesis that the intercept is 0 can be tested using the t statistic
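
A rough sketch of how the two t statistics are formed, using the standard textbook standard-error formulas for simple regression. The data and the helper name `t_statistics` are made up for illustration. The result would still need to be compared against a t table with n - 2 degrees of freedom, which this sketch does not do.

```python
import math

def t_statistics(xs, ys):
    """Return (t for intercept, t for slope) in a one-predictor regression."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b1 = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    b0 = y_bar - b1 * x_bar
    sse = sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(xs, ys))
    s = math.sqrt(sse / (n - 2))                     # standard error of estimate
    se_b1 = s / math.sqrt(sxx)                       # standard error of the slope
    se_b0 = s * math.sqrt(1 / n + x_bar ** 2 / sxx)  # standard error of the intercept
    return b0 / se_b0, b1 / se_b1

t_intercept, t_slope = t_statistics([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])
```

With a single x variable, the square of the slope's t statistic equals the F statistic from the ANOVA table, so the two tests agree.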

Percent of Variation Explained • $R^2$ is the coefficient of determination. • The minimum $R^2$ is zero. The maximum is 1. • $1 - R^2$ is the proportion of variation left unexplained. • If Y is not related to X, or is related in a non-linear fashion, then $R^2$ will be small. • Adjusted $R^2$ shows the value of $R^2$ after adjustment for degrees of freedom. It protects against obtaining an artificially high $R^2$ simply by increasing the number of variables in the model.
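
The two measures can be sketched as follows, assuming the usual definitions $R^2 = 1 - SSE/SST$ and adjusted $R^2 = 1 - (1 - R^2)(n - 1)/(n - k - 1)$ for n observations and k predictors. The data and fitted values are made up for illustration.

```python
def r_squared(ys, fitted):
    """Proportion of the variation in ys explained by the fitted values."""
    y_bar = sum(ys) / len(ys)
    sse = sum((y - f) ** 2 for y, f in zip(ys, fitted))
    sst = sum((y - y_bar) ** 2 for y in ys)
    return 1 - sse / sst

def adjusted_r_squared(r2, n, k):
    """Penalize R^2 for the number of predictors k, given n observations."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)

ys = [2, 4, 5, 4, 5]                 # made-up observations
fitted = [2.8, 3.4, 4.0, 4.6, 5.2]   # least-squares fit 2.2 + 0.6 * x for x = 1..5
r2 = r_squared(ys, fitted)               # approximately 0.6
adj = adjusted_r_squared(r2, n=5, k=1)   # approximately 0.467
```

Holding $R^2$ fixed and raising `k` lowers the adjusted value, which is the protection against adding variables that do not earn their place.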

Example • Is waiting time related to satisfaction ratings? • What will happen to satisfaction ratings if waiting time reaches 15 minutes?

Regression Analysis in Excel • Select tools • Select data analysis • Select regression analysis • Identify the x and y data of equal length • Ask for residual plots to test assumptions • Ask for normal probability plot to test assumption

Normal Probability Plot • Normal Probability Plot compares the percent of errors falling in particular bins to the percentage expected from Normal distribution. • If assumption is met then the plot should look like a straight line.
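
One common way to build the points for such a plot is to pair the sorted residuals with the matching quantiles of a standard Normal distribution. The sketch below does this with Python's standard library; the plotting positions (i + 0.5) / n and the residual values are assumptions for illustration, and Excel's own plot may be constructed differently.

```python
from statistics import NormalDist

def normal_plot_points(residuals):
    """Pair each sorted residual with its expected standard Normal quantile."""
    n = len(residuals)
    ordered = sorted(residuals)
    std_normal = NormalDist()  # mean 0, standard deviation 1
    return [(std_normal.inv_cdf((i + 0.5) / n), r)
            for i, r in enumerate(ordered)]

# Made-up residuals; each tuple is (theoretical quantile, observed residual).
points = normal_plot_points([-0.8, 0.6, 1.0, -0.6, -0.2])
```

Plotting the second element of each pair against the first should give roughly a straight line when the errors are close to Normal.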

Residual Plot • Tests that residuals have mean of zero and constant standard deviation • Tests that residuals are not dependent on values of x
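
When the model includes an intercept, least-squares residuals average exactly zero and have no linear association with x by construction, so the plot is mainly read for patterns such as curvature or a funnel shape. The sketch below, on made-up data with a hypothetical helper `residuals_for_line`, computes the residuals one would plot against x and confirms the two built-in properties.

```python
def residuals_for_line(xs, ys):
    """Fit a least-squares line and return the residual for each observation."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    b1 = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
          / sum((x - x_bar) ** 2 for x in xs))
    b0 = y_bar - b1 * x_bar
    return [y - (b0 + b1 * x) for x, y in zip(xs, ys)]

xs = [1, 2, 3, 4, 5]   # made-up data
ys = [2, 4, 5, 4, 5]
res = residuals_for_line(xs, ys)
mean_residual = sum(res) / len(res)                   # zero up to rounding
cross_product = sum(x * e for x, e in zip(xs, res))   # zero up to rounding
```

The pairs `zip(xs, res)` are what a residual plot displays; a band of roughly constant width around zero is consistent with the assumptions.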

Linear Equation • Satisfaction = 121.3 – 4.8 * Waiting time • At 15 minutes waiting time, satisfaction is predicted to be: • 121.3 – 4.8 * 15 = 49.3 using the rounded coefficients shown (the original slide reports 48.87, presumably computed from unrounded coefficients) • The t statistics for both the intercept and the waiting time coefficient are statistically significant. • The hypotheses that the coefficients are zero are rejected.
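
The prediction step can be written as a small function. The coefficients are the rounded values printed in the equation above; the function and constant names are illustrative.

```python
INTERCEPT = 121.3   # rounded intercept from the fitted equation
SLOPE = -4.8        # rounded change in satisfaction per minute of waiting

def predict_satisfaction(waiting_minutes):
    """Predicted satisfaction rating for a given waiting time in minutes."""
    return INTERCEPT + SLOPE * waiting_minutes

predicted = predict_satisfaction(15)   # about 49.3 with these rounded values
```

Small differences from a figure computed in Excel can arise because the printed coefficients are rounded.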

Goodness of Fit • 57% of variation in satisfaction ratings is explained by the equation • 43% of variation in satisfaction ratings is left unexplained

ANOVA For Regression • The regression mean square (MSR) is 347. • The mean square error (MSE) is 33. Note that the error term is called residuals in Excel. • The F statistic is about 10; the probability of observing a statistic this large by chance alone is 0.02. • The hypothesis that the MSR and MSE are equal is rejected. Significant variation is explained by regression.
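
As a quick check on the reported values, the F statistic is the ratio of the two mean squares:

```latex
F = \frac{\text{MSR}}{\text{MSE}} = \frac{347}{33} \approx 10.5
```

This agrees with the F statistic of roughly 10 reported in the ANOVA output.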

Take Home Lesson • Regression is based on the sum of squares (SS) approach, similar to ANOVA • Regression assumptions can be examined by looking at residuals • Several hypotheses can be tested using regression analysis