Incremental Network Programming for Wireless Sensors

Network Programming. Delivers the program code to multiple nodes over the air with a ... We extended TinyOS network programming implementation (XNP) for incremental update. ...

Incremental Network Programming for Wireless Sensors

E N D

Presentation Transcript

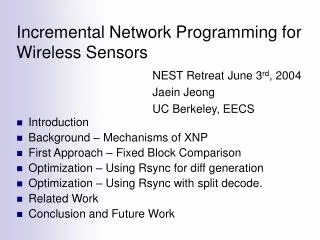

Jaein Jeong, UC Berkeley, EECS
NEST Retreat, June 3rd, 2004
• Introduction
• Background – Mechanisms of XNP
• First Approach – Fixed Block Comparison
• Optimization – Using Rsync for diff generation
• Optimization – Using Rsync with split decode
• Related Work
• Conclusion and Future Work

Introduction – Programming Wireless Sensors
• In-System Programming (ISP)
  • A sensor node is plugged into the serial or parallel port.
  • However, only one sensor node can be programmed at a time.
• Network Programming
  • Delivers the program code to multiple nodes over the air with a single transmission.
  • Saves the effort of programming each node individually.

Introduction – Network Programming for TinyOS
• Network programming for TinyOS (XNP)
  • Has been available since TinyOS release 1.1.
  • Originally made by Crossbow and modified by UCB.
  • Provides basic network programming capability.
  • Has some limitations:
    • No support for multihop delivery.
    • No support for incremental update.
• Goal:
  • Fast code delivery by incremental update.
  • Multihop delivery (Deluge (UCB), MOAP (UCLA)).

Background – Mechanisms of XNP
[Figure: XNP data flow. Host machine (network programming host program, SREC file) sends radio packets to the sensor node (network programming module, user application, external flash, boot loader, and program memory with a boot loader section and a user app section); steps are marked (1) to (3).]
• Host: sends the program code as download messages.
• Sensor node: stores the messages in the external flash.
• Sensor node: calls the boot loader, which copies the program code into the program memory.
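The three steps can be sketched in Python as a tiny data path: parse SREC records on the way in, keep them in a stand-in for external flash, then let a stand-in boot loader copy them into program memory. This is a minimal illustration, assuming the SREC file holds only 16-bit-address S1 data records (S0/S9 records are skipped); `parse_s1_record`, `store_in_flash`, and `boot_load`, and the dict/bytearray stand-ins, are hypothetical and not XNP's actual interfaces or message format.

```python
def parse_s1_record(line: str) -> tuple[int, bytes]:
    """Parse one Motorola S1 record into (address, data) after checking its checksum."""
    line = line.strip()
    if not line.startswith("S1"):
        raise ValueError("this sketch only handles S1 data records")
    raw = bytes.fromhex(line[2:])
    count = raw[0]  # bytes of address + data + checksum that follow
    if count != len(raw) - 1:
        raise ValueError("byte count does not match record length")
    # Checksum: ones' complement of the low byte of the sum of count, address, and data.
    if (~sum(raw[:-1])) & 0xFF != raw[-1]:
        raise ValueError("bad checksum")
    address = int.from_bytes(raw[1:3], "big")
    return address, raw[3:-1]


def store_in_flash(srec_lines: list[str]) -> dict[int, bytes]:
    """Node side (hypothetical): keep each download message in a dict standing in for external flash."""
    flash = {}
    for line in srec_lines:
        if line.strip().startswith("S1"):  # skip S0 header and S9 termination records
            address, data = parse_s1_record(line)
            flash[address] = data
    return flash


def boot_load(flash: dict[int, bytes], program_size: int) -> bytearray:
    """Boot loader stand-in: copy the stored code into a bytearray standing in for program memory."""
    program_memory = bytearray(b"\xff" * program_size)  # assume erased bytes read as 0xFF
    for address, data in flash.items():
        program_memory[address:address + len(data)] = data
    return program_memory


# Usage: one 2-byte S1 record at address 0, followed by an S9 termination record.
memory = boot_load(store_in_flash(["S10500000102F7", "S9030000FC"]), 4)
print(memory)  # bytearray(b'\x01\x02\xff\xff')
```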

First Approach – Fixed Block Comparison (Difference Generation)
• The host program generates the difference between the two program versions:
  • Compares the corresponding blocks of the two program images.
  • Sends “copy” for the matching blocks and “download” for the unmatched ones.
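A minimal sketch of this host-side comparison, assuming both images are flat byte strings that start at the same address. The tuple encoding of the messages and the name `fixed_block_diff` are hypothetical, not the XNP wire format.

```python
def fixed_block_diff(prev: bytes, new: bytes, block_size: int) -> list[tuple]:
    """Compare block k of `new` with block k of `prev` and emit one message per block."""
    script = []
    for offset in range(0, len(new), block_size):
        new_block = new[offset:offset + block_size]
        if new_block == prev[offset:offset + block_size]:
            script.append(("copy", offset, len(new_block)))  # node reuses its old bytes
        else:
            script.append(("download", offset, new_block))  # host sends the new bytes
    return script


print(fixed_block_diff(b"AAAABBBBCCCC", b"AAAAXBBBCCCC", 4))
# [('copy', 0, 4), ('download', 4, b'XBBB'), ('copy', 8, 4)]
```

A single changed byte turns its whole block into a download, which is why the block size matters.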

First Approach – Fixed Block Comparison (Storage Organization and Code Rebuild)
• The sensor node:
  • Keeps the new and previous sections in the external flash.
  • For “copy”, copies the records from the previous section to the new section.
  • For “download”, stores the code bytes in the new section.
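On the node side, the rebuild step can be sketched as follows, assuming the two sections are plain byte buffers and the messages use the same hypothetical `("copy", offset, length)` / `("download", offset, data)` tuples. `rebuild` is an illustrative name; the real implementation works on SREC records in external flash.

```python
def rebuild(prev_section: bytes, script: list[tuple], image_size: int) -> bytearray:
    """Build the new section from the previous section plus copy/download messages."""
    new_section = bytearray(image_size)
    for op, offset, arg in script:
        if op == "copy":
            # Fixed block comparison: a copied block sits at the same offset in both images.
            new_section[offset:offset + arg] = prev_section[offset:offset + arg]
        elif op == "download":
            new_section[offset:offset + len(arg)] = arg
        else:
            raise ValueError(f"unknown command: {op!r}")
    return new_section


script = [("copy", 0, 4), ("download", 4, b"XBBB"), ("copy", 8, 4)]
print(rebuild(b"AAAABBBBCCCC", script, 12))  # bytearray(b'AAAAXBBBCCCC')
```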

Experiment Setup
• Test applications
  • Wrote simple network-programmable applications, XnpBlink and XnpCount.
  • XnpBlink and XnpCount are modifications of the TinyOS applications Blink and CntToLedsAndRfm for network programming.
• Test scenarios
  • Case 1: Changing a constant (XnpBlink)
  • Case 2: Modifying an implementation file (XnpCount)
  • Case 3: Major change (XnpBlink to XnpCount)
  • Cases 4 and 5: Modifying a configuration file (XnpCount)

Experiment Setup – Test Scenarios
• Case 1: Changing a constant (XnpBlink)
• Case 2: Modifying an implementation file (XnpCount)

Experiment Setup – Test Scenarios
• Case 3: Major change (XnpBlink to XnpCount)
• Case 4: Modifying a configuration file (XnpCount)
  • Commented out the IntToLeds component.
• Case 5: Modifying a configuration file (XnpCount)
  • Commented out the IntToRfm component.

Code Comparison
• Compared the two program images using different block sizes.
• The level of sharing measured in bytes is much higher than that measured in blocks.
• However, a smaller block size means more copy messages, which increases the transmission time.
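The trade-off between sharing and message count can be reproduced on synthetic data. This sketch assumes one copy message per matching aligned block (no merging of neighbouring copies); the 64-byte image and the two single-byte edits are made up for illustration, and `sharing_by_block` is a hypothetical helper.

```python
def sharing_by_block(prev: bytes, new: bytes, block_size: int) -> tuple[int, int]:
    """Return (bytes shared, copy messages) when only identical, aligned blocks count as shared."""
    shared = copies = 0
    for offset in range(0, len(new), block_size):
        block = new[offset:offset + block_size]
        if block == prev[offset:offset + block_size]:
            shared += len(block)
            copies += 1  # assumption: one copy message per matching block
    return shared, copies


prev = bytes(range(64))
changed = bytearray(prev)
changed[10] ^= 0xFF  # two single-byte edits
changed[40] ^= 0xFF
new = bytes(changed)

for size in (32, 8, 1):
    print(size, sharing_by_block(prev, new, size))
# 32 (0, 0)
# 8 (48, 6)
# 1 (62, 62)
```

Shrinking the block recovers more shared bytes, but the number of copy messages grows with it.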

Results – Fixed Block Comparison
[Figure: Speed up for Cases 1 to 5; series: Estimated Speed Up (Fixed), Measured Speed Up (Fixed); y-axis: speed up, 0.00 to 7.00.]
• We compared the transmission time (T) with the non-incremental delivery time (Txnp).
• There is almost no speed up except when we modified a constant in the source code (Case 1).
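The speed up in these results is the ratio of the two delivery times, so a value above 1 means the incremental update finished faster than a full XNP transfer:

```latex
\text{speed up} = \frac{T_{xnp}}{T}
```

For example, a speed up of 2.0 means the incremental delivery took half as long as the non-incremental one.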

Results – Fixed Block Comparison
• In most cases (other than Case 1), much of the program code is sent as download messages rather than copy messages, which explains the limited speed up.

Optimizing Difference Generation Using the Rsync Algorithm
• We need an algorithm that can capture the shared data even when the program code is shifted.
• We use the Rsync algorithm to compare the two program images at arbitrary byte positions.
• Rsync was originally designed to transfer incremental updates of arbitrary binary files over a low-bandwidth Internet connection.
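A small experiment shows why a shift defeats fixed block comparison. Inserting one byte near the start moves every later byte, so no aligned block matches, yet almost every block still occurs somewhere in the new image. The data and the helpers `aligned_matches` and `found_anywhere` are hypothetical illustrations.

```python
def aligned_matches(prev: bytes, new: bytes, block_size: int) -> int:
    """Blocks of `new` equal to the block at the same offset in `prev` (fixed block comparison)."""
    return sum(
        1
        for off in range(0, len(new), block_size)
        if new[off:off + block_size] == prev[off:off + block_size]
    )


def found_anywhere(prev: bytes, new: bytes, block_size: int) -> int:
    """Blocks of `prev` that occur at any byte position of `new` (what a byte-level search finds)."""
    return sum(
        1
        for off in range(0, len(prev), block_size)
        if prev[off:off + block_size] in new
    )


prev = bytes(range(256))
shifted = prev[:4] + b"\x00" + prev[4:]  # insert one byte at offset 4

print(aligned_matches(prev, shifted, 16))  # 0
print(found_anywhere(prev, shifted, 16))   # 15
```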

Difference Generation Using the Rsync Algorithm (Diff Generation)
(1) Build the hash table for the previous image.
• Calculate the checksum pair (checksum, hash) for each block.
• The checksum is for fast matching.
• The hash is for more accurate matching.
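Step (1) can be sketched with rsync's weak checksum (two 16-bit sums) as the fast check and a cryptographic hash as the accurate one. This is an illustration under stated assumptions: MD5 stands in for the strong hash (classic rsync used MD4), only full blocks are indexed, and `build_hash_table` is a hypothetical name, not the host program's actual code.

```python
import hashlib

MOD = 1 << 16


def weak_checksum(block: bytes) -> int:
    """rsync-style weak checksum: a = sum of bytes, b = position-weighted sum, both mod 2^16."""
    n = len(block)
    a = sum(block) % MOD
    b = sum((n - i) * x for i, x in enumerate(block)) % MOD
    return a + (b << 16)


def strong_hash(block: bytes) -> bytes:
    """Stand-in strong hash (MD5); classic rsync used MD4."""
    return hashlib.md5(block).digest()


def build_hash_table(prev: bytes, block_size: int) -> dict[int, list[tuple[bytes, int]]]:
    """Map weak checksum -> [(strong hash, offset)] for each full block of the previous image."""
    table: dict[int, list[tuple[bytes, int]]] = {}
    for off in range(0, len(prev) - block_size + 1, block_size):
        block = prev[off:off + block_size]
        table.setdefault(weak_checksum(block), []).append((strong_hash(block), off))
    return table


table = build_hash_table(bytes(range(32)), 8)
print(len(table))  # 4 blocks, 4 distinct weak checksums
```

The dictionary keyed by the weak checksum lets the scan reject most positions with one lookup, computing the strong hash only on a hit.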

Difference Generation Using the Rsync Algorithm (Diff Generation)
(2) Scan the current image and find the matching blocks.
• Calculate the checksum for the block starting at each byte.
• Calculate the hash only if the checksum matches.
• If the block doesn't match, move to the next byte and recalculate the checksum.
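Putting steps (1) and (2) together gives a compact rsync-style diff generator. "Recalculate the checksum" is done here with rsync's rolling update, which costs O(1) per byte instead of rehashing the whole block. Assumptions: MD5 stands in for the strong hash, and the script format (`("copy", prev_offset, length)` and `("download", data)`, applied in order) and the names `rsync_diff` and `apply_script` are hypothetical, not the format used in this work.

```python
import hashlib

MOD = 1 << 16


def weak_parts(block: bytes) -> tuple[int, int]:
    """(a, b) halves of the rsync-style weak checksum, each mod 2^16."""
    n = len(block)
    a = sum(block) % MOD
    b = sum((n - i) * x for i, x in enumerate(block)) % MOD
    return a, b


def strong_hash(block: bytes) -> bytes:
    return hashlib.md5(block).digest()  # stand-in strong hash


def rsync_diff(prev: bytes, new: bytes, block_size: int) -> list[tuple]:
    """Emit ('copy', prev_offset, length) and ('download', data) commands that rebuild `new` in order."""
    # Step (1): hash table over the full, non-overlapping blocks of the previous image.
    table: dict[int, list[tuple[bytes, int]]] = {}
    for off in range(0, len(prev) - block_size + 1, block_size):
        a, b = weak_parts(prev[off:off + block_size])
        table.setdefault(a + (b << 16), []).append((strong_hash(prev[off:off + block_size]), off))

    # Step (2): slide a window over the current image one byte at a time.
    script: list[tuple] = []
    pending = bytearray()  # unmatched bytes waiting to be sent as a download
    i = 0
    a = b = None
    while i + block_size <= len(new):
        if a is None:
            a, b = weak_parts(new[i:i + block_size])
        src = None
        candidates = table.get(a + (b << 16))
        if candidates:  # compute the strong hash only when the weak checksum matches
            h = strong_hash(new[i:i + block_size])
            src = next((off for hh, off in candidates if hh == h), None)
        if src is not None:
            if pending:
                script.append(("download", bytes(pending)))
                pending.clear()
            script.append(("copy", src, block_size))
            i += block_size
            a = None  # the next window starts fresh after a matched block
        else:
            out_byte = new[i]
            pending.append(out_byte)
            if i + block_size < len(new):  # roll the checksum forward by one byte
                in_byte = new[i + block_size]
                a = (a - out_byte + in_byte) % MOD
                b = (b - block_size * out_byte + a) % MOD
            i += 1
    pending.extend(new[i:])  # tail shorter than one block
    if pending:
        script.append(("download", bytes(pending)))
    return script


def apply_script(prev: bytes, script: list[tuple]) -> bytes:
    """Rebuild the current image from the previous image and the script."""
    out = bytearray()
    for cmd in script:
        if cmd[0] == "copy":
            _, src, length = cmd
            out += prev[src:src + length]
        else:
            out += cmd[1]
    return bytes(out)


prev = bytes(range(200))
new = prev[:50] + b"XYZ" + prev[50:]  # 3 bytes inserted: everything after them shifts
script = rsync_diff(prev, new, 16)
assert apply_script(prev, script) == new
print(sum(c[2] for c in script if c[0] == "copy"))  # 176 bytes reused via copy
```

Unlike the fixed block comparison, the shifted blocks after the insertion are still found and sent as copies.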

Difference Generation Using Rsync (Rebuilding the Code Image)
• Storage organization
  • Two sections (current and previous) in the external flash memory.
• Building the program image using the difference
  • Download: data bytes are written to the current program section.
  • Copy: lines of the previous section are copied to the current section.
  • Copy blocks are sized as multiples of SREC lines to avoid additional flash memory reads and writes caused by partial SREC lines.
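The cost of partial SREC lines can be illustrated with simple arithmetic: count how many line-sized units a write covers fully and how many it only partly covers. This sketch assumes a hypothetical 16-byte SREC line and a model in which every partially covered line needs an extra read-modify-write; `lines_touched` is an illustrative helper.

```python
def lines_touched(offset: int, length: int, line_size: int = 16) -> tuple[int, int]:
    """(full, partial) SREC-sized lines touched when writing `length` bytes at `offset`."""
    if length <= 0:
        return 0, 0
    end = offset + length
    full = partial = 0
    for line in range(offset // line_size, (end - 1) // line_size + 1):
        start = line * line_size
        covered = min(start + line_size, end) - max(start, offset)
        if covered == line_size:
            full += 1
        else:
            partial += 1  # in this model, needs an extra read-modify-write
    return full, partial


print(lines_touched(16, 48))  # (3, 0): aligned, whole-line copy
print(lines_touched(8, 32))   # (1, 2): same length, misaligned
```

Keeping copy blocks at whole-line sizes and boundaries drives the partial count to zero.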

Results – Using Rsync
[Figure: Speed up for Cases 1 to 5; series: Estimated Speed Up (Fixed), Measured Speed Up (Fixed), Estimated Speed Up (Rsync), Measured Speed Up (Rsync); y-axis: speed up, 0.00 to 7.00.]
• Performance is better than with Fixed Block Comparison.
• Speed up of 2 to 2.5 for a small change:
  • 2.5 for adding a few lines to the implementation file (Case 2).
  • 2.0 for commenting out IntToLeds in the configuration file (Case 4).
• Speed up is still limited for big changes:
  • Major change (Case 3).
  • Commenting out IntToRfm (Case 5).

Problems – Using Rsync
• Sends many copy messages even when the two program images are very similar.
  • The maximum block size of a copy message is limited.
  • This bounds the running time of a copy message.
• Inefficient handling of missing packets.
  • The script commands are not stored.
  • The node checks the rebuilt program image for verification.
  • If a copy message is lost, the node requests retransmission of each missing record rather than of the copy message itself.
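The first problem follows directly from the size limit: a long run of identical code must be split into many bounded copy messages. In this sketch, the 256-byte cap, the 8 KB run, the message tuple `("copy", src, dst, length)`, and the name `split_copy` are all hypothetical values chosen for illustration.

```python
def split_copy(src: int, dst: int, length: int, max_copy: int) -> list[tuple[str, int, int, int]]:
    """Break one long run of shared bytes into copy messages of at most `max_copy` bytes."""
    messages = []
    done = 0
    while done < length:
        n = min(max_copy, length - done)
        messages.append(("copy", src + done, dst + done, n))
        done += n
    return messages


# A hypothetical 8 KB run of unchanged code with a 256-byte cap per copy message:
print(len(split_copy(0, 0, 8192, 256)))  # 32
print(split_copy(0, 0, 600, 256))
# [('copy', 0, 0, 256), ('copy', 256, 256, 256), ('copy', 512, 512, 88)]
```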

Optimizing Difference Delivery
(1) The network programming module first stores the difference script.

Optimizing Difference Delivery
(2) Then it starts building the program image only after the host program sends a decode message.
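The store-then-decode idea can be modeled as a small receiver that files numbered script commands and rebuilds only when asked. In this model, a lost command is identified by its sequence number and can be re-requested as a single message before decoding starts. The class `ScriptReceiver`, its methods, the sequence numbering, and the command tuples are hypothetical, not the module's actual interface.

```python
class ScriptReceiver:
    """Hypothetical node-side model: store numbered script commands, then decode on request."""

    def __init__(self, total: int):
        self.total = total
        self.commands: dict[int, tuple] = {}

    def on_script_msg(self, seq: int, command: tuple) -> None:
        self.commands[seq] = command  # store only; a resent duplicate simply overwrites

    def missing(self) -> list[int]:
        return [seq for seq in range(self.total) if seq not in self.commands]

    def on_decode(self, prev: bytes) -> bytes:
        """Build the new image from the stored script once every command has arrived."""
        if self.missing():
            raise RuntimeError("cannot decode: script incomplete")
        out = bytearray()
        for seq in range(self.total):
            op, *args = self.commands[seq]
            if op == "copy":
                src, length = args
                out += prev[src:src + length]
            else:
                out += args[0]
        return bytes(out)


receiver = ScriptReceiver(total=3)
receiver.on_script_msg(0, ("copy", 0, 4))
receiver.on_script_msg(2, ("copy", 4, 4))       # message 1 was lost
print(receiver.missing())                       # [1]
receiver.on_script_msg(1, ("download", b"XY"))  # host resends only that command
print(receiver.on_decode(b"ABCDEFGH"))          # b'ABCDXYEFGH'
```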

Results – Using Rsync with decode
[Figure: Speed up for Cases 1 to 5; series: Fixed Block Comparison, Rsync, Rsync + decode; y-axis: speed up, 0.00 to 10.00.]
• The performance of Rsync with the decode command is similar to that of plain Rsync.
• However, the performance for changing a constant has improved (speed up of 9.0).

Related Work
• Wireless sensor network programming
  • XNP: network programming for TinyOS.
  • MOAP: multihop network programming by UCLA.
  • Deluge: multihop network programming by UC Berkeley.
  • Reijers et al.: algorithms for updating the binary image; updates code at the instruction level.
  • Kapur et al.: incremental network programming based on (4).
  • Maté and Trickle: network programming of virtual machine code.
• Outside wireless sensor networks
  • Rsync and LBFS: mechanisms for distributing the new version of a file over a low-bandwidth network.

Conclusion
• We extended the TinyOS network programming implementation (XNP) for incremental update.
• The host program generates the program difference using the Rsync algorithm and sends the difference to the sensor node.
• The sensor node stores the program difference in its external flash memory and rebuilds the new program image from the difference and the previous image.
• We obtained a speed up of 2 to 2.5 for small changes in the source code.

Future Work
• Combine incremental program update with multihop delivery (Deluge or MOAP).
• Study nesC compiler support to further increase the level of sharing.