Chapter 19 Clustering Analysis

Content
• Similarity coefficient
• Hierarchical clustering analysis
• Dynamic clustering analysis
• Ordered sample clustering analysis

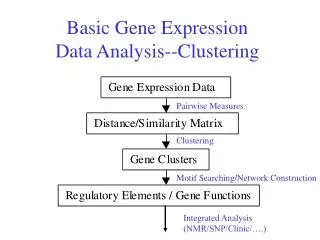

Discriminant analysis: given individuals known with certainty to come from two or more populations, it derives a discriminant model that allocates further individuals to the correct population. Clustering analysis: a statistical method for grouping objects whose categories are not known in advance. It is used when there is no a priori hypothesis, and it seeks the most appropriate classification using mathematical statistics and the information collected. It has become the method of first choice for uncovering the vast amount of information in genetic data. Both are multivariate statistical methods for studying classification.

Clustering analysis is an exploratory statistical method. According to its aim, it falls into two major types. Let m denote the number of variables (i.e. indexes) and n the number of cases (i.e. samples):
(1) R-type clustering, also called index clustering: classifies the m indexes, aiming to reduce the dimension of the indexes and to choose typical ones.
(2) Q-type clustering, also called sample clustering: classifies the n samples to find what they have in common.

The key question for both R-type and Q-type clustering is how to define, that is, how to quantify, similarity. The first step of clustering is therefore to define a measure of similarity between two indexes or two samples: the similarity coefficient.

§ 1 Similarity Coefficient

1. Similarity coefficient of R-type clustering
Suppose there are m variables X1, X2, …, Xm. R-type clustering usually uses the absolute value of the simple correlation coefficient to define the similarity coefficient between variables:

$$C_{ij} = |r_{ij}|$$

where $r_{ij}$ is the simple correlation coefficient between $X_i$ and $X_j$. The larger the absolute value, the more similar the two variables. Similarly, the Spearman rank correlation coefficient can be used to define the similarity coefficient for non-normal variables. When all the variables are qualitative, the contingency coefficient is preferable.
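A minimal sketch of the R-type similarity matrix, assuming NumPy and a data array with samples in rows and variables in columns. The function names `r_type_similarity` and `spearman_similarity` are hypothetical. The Spearman version uses simple ordinal ranks and does not average tied values, which a full implementation would do.

```python
import numpy as np

def r_type_similarity(data):
    """C[i, j] = |r_ij| for the columns (variables) of an n x m data array."""
    r = np.corrcoef(np.asarray(data, float), rowvar=False)  # m x m Pearson correlations
    return np.abs(r)

def spearman_similarity(data):
    """|Spearman rank correlation| between columns; ties get ordinal (not averaged) ranks."""
    data = np.asarray(data, float)
    ranks = np.argsort(np.argsort(data, axis=0), axis=0).astype(float)
    return np.abs(np.corrcoef(ranks, rowvar=False))

# Example: X2 = 2 * X1 exactly, so C[0, 1] = 1
data = [[1, 2, 3], [2, 4, 1], [3, 6, 2], [4, 8, 5]]
C = r_type_similarity(data)
```

Taking the absolute value means a strong negative correlation counts as just as similar as a strong positive one, which suits the goal of finding redundant indexes.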

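2. Similarity coefficients commonly used in Q-type clustering
Regard the n cases as n points in an m-dimensional space. The distance between two points can be used to define the similarity coefficient: the smaller the distance, the more similar the two samples. For samples $i$ and $j$ with values $X_{ik}$ and $X_{jk}$ on variable $k$ ($k = 1, \dots, m$):

(1) Euclidean distance
$$d_{ij} = \sqrt{\sum_{k=1}^{m} (X_{ik} - X_{jk})^2}$$

(2) Manhattan distance (absolute distance)
$$d_{ij} = \sum_{k=1}^{m} |X_{ik} - X_{jk}|$$

(3) Minkowski distance
$$d_{ij} = \left(\sum_{k=1}^{m} |X_{ik} - X_{jk}|^{q}\right)^{1/q} \qquad (19\text{-}5)$$

The Minkowski distance reduces to the absolute (Manhattan) distance when q = 1 and to the Euclidean distance when q = 2. The Euclidean distance is intuitive and simple to compute, but it ignores the correlations among the variables. That is why the Mahalanobis distance was introduced.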
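The three distances differ only in the exponent q, so a minimal sketch can express all of them through one Minkowski function. This assumes NumPy; the function names are hypothetical.

```python
import numpy as np

def minkowski(x, y, q=2.0):
    """Minkowski distance between two samples: (sum_k |x_k - y_k|^q)^(1/q)."""
    diff = np.abs(np.asarray(x, float) - np.asarray(y, float))
    return float(np.sum(diff ** q) ** (1.0 / q))

def euclidean(x, y):
    return minkowski(x, y, q=2.0)   # Minkowski with q = 2

def manhattan(x, y):
    return minkowski(x, y, q=1.0)   # Minkowski with q = 1 (absolute distance)

print(euclidean([0, 0], [3, 4]), manhattan([0, 0], [3, 4]))  # 5.0 7.0
```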

(4) Mahalanobis distance
$$d_{ij} = \mathbf{X}'\mathbf{S}^{-1}\mathbf{X}$$
where $\mathbf{X} = (X_{i1} - X_{j1}, X_{i2} - X_{j2}, \dots, X_{im} - X_{jm})'$ and $\mathbf{S}$ is the sample covariance matrix of the m variables. When $\mathbf{S}$ is the identity matrix, the Mahalanobis distance equals the square of the Euclidean distance. All four distances apply to quantitative variables; qualitative and ordinal variables must be quantified before use.
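A minimal sketch of the Mahalanobis distance as defined above (no square root), assuming NumPy; the function name is hypothetical. In practice `S` would be the sample covariance matrix, for example `np.cov(data, rowvar=False)`.

```python
import numpy as np

def mahalanobis_d(x, y, S):
    """d_ij = X' S^{-1} X with X = x - y and S the m x m sample covariance matrix.

    As in the text, no square root is taken, so with S = I this equals the
    squared Euclidean distance.
    """
    diff = np.asarray(x, float) - np.asarray(y, float)
    # solve S z = X rather than inverting S explicitly
    return float(diff @ np.linalg.solve(np.asarray(S, float), diff))

# With S = I the result is the squared Euclidean distance 3^2 + 4^2
print(mahalanobis_d([0, 0], [3, 4], np.eye(2)))  # 25.0
```

Solving the linear system instead of forming $\mathbf{S}^{-1}$ is the usual numerically safer choice; the result is the same.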

§ 2 Hierarchical Clustering Analysis

Hierarchical clustering analysis is one of the most commonly used methods for grouping similar samples or variables. The procedure is as follows:
1) At the beginning, each sample (or variable) forms its own cluster, i.e. each cluster contains a single sample (or variable). Compute the similarity coefficient matrix among the clusters, which consists of the similarity coefficients between samples (or variables). This matrix is symmetric.
2) Merge the two clusters with the largest similarity coefficient (smallest distance or largest correlation coefficient) into a new cluster, and compute the similarity coefficients between the new cluster and the other clusters. Repeat step 2 until all samples (or variables) have been merged into one cluster.
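The two steps above can be sketched in plain Python on a distance matrix. The cluster-distance rule is passed in as a function `linkage(D, A, B)`; both `agglomerate` and `min_distance_linkage` are hypothetical names, and recomputing every cluster distance from `D` at each step is chosen for clarity, not speed.

```python
def agglomerate(D, linkage):
    """Hierarchical clustering on an n x n symmetric distance matrix D.

    linkage(D, A, B) returns the distance between clusters A and B
    (lists of sample indices). Returns the merge history as
    (cluster_a, cluster_b, distance) tuples.
    """
    clusters = [[i] for i in range(len(D))]   # step 1: every sample is its own cluster
    history = []
    while len(clusters) > 1:
        # step 2: find the pair of clusters with the smallest distance
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = linkage(D, clusters[a], clusters[b])
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        history.append((clusters[a], clusters[b], d))
        merged = clusters[a] + clusters[b]
        del clusters[b]            # b > a, so delete b first to keep index a valid
        del clusters[a]
        clusters.append(merged)    # repeat step 2 with the new cluster
    return history

def min_distance_linkage(D, A, B):
    """Cluster distance = smallest pairwise distance (one possible choice)."""
    return min(D[i][j] for i in A for j in B)

D = [[0, 1, 4],
     [1, 0, 2],
     [4, 2, 0]]
for a, b, d in agglomerate(D, min_distance_linkage):
    print(a, b, d)
# [0] [1] 1
# [2] [0, 1] 2
```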

The calculation of similarity coefficients between clusters

Every step of hierarchical clustering requires the similarity coefficients between clusters. When each of the two clusters contains only one sample or variable, the similarity coefficient between the clusters equals that between the two samples or variables, computed as in § 1. When a cluster contains more than one sample or variable, many methods can be used; five are listed below. Let $G_p$ and $G_q$ denote the two clusters, containing $n_p$ and $n_q$ samples (or variables) respectively.

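1. The maximum similarity coefficient method
Since $G_p$ and $G_q$ contain $n_p$ and $n_q$ samples (or variables), there are $n_p \times n_q$ similarity coefficients between the two clusters, and only the largest is taken as the similarity coefficient of the two clusters:
$$r_{pq} = \max_{i \in G_p,\, j \in G_q} r_{ij}$$
Note: the smallest distance corresponds to the largest similarity coefficient, so with distances $D_{pq} = \min_{i \in G_p,\, j \in G_q} d_{ij}$.

2. The minimum similarity coefficient method
The similarity coefficient between clusters is the smallest of the $n_p \times n_q$ coefficients:
$$r_{pq} = \min_{i \in G_p,\, j \in G_q} r_{ij}, \qquad D_{pq} = \max_{i \in G_p,\, j \in G_q} d_{ij}$$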
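These two rules are what many software packages call single linkage and complete linkage. A minimal sketch in plain Python, with hypothetical function names and clusters given as lists of indices into a similarity matrix `R` or distance matrix `D`:

```python
def max_similarity(R, A, B):
    """Maximum similarity coefficient method: r_pq = max r_ij over i in A, j in B."""
    return max(R[i][j] for i in A for j in B)

def min_distance(D, A, B):
    """Same method on distances: D_pq = min d_ij (the closest pair)."""
    return min(D[i][j] for i in A for j in B)

def min_similarity(R, A, B):
    """Minimum similarity coefficient method: r_pq = min r_ij."""
    return min(R[i][j] for i in A for j in B)

def max_distance(D, A, B):
    """Same method on distances: D_pq = max d_ij (the farthest pair)."""
    return max(D[i][j] for i in A for j in B)

D = [[0, 1, 4], [1, 0, 2], [4, 2, 0]]
print(min_distance(D, [0, 1], [2]), max_distance(D, [0, 1], [2]))  # 2 4
```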

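3. The center of gravity method (used only in sample clustering)
The center of gravity of a cluster is the vector of index means within it, and the distance between two clusters is the distance between their centers of gravity:
$$D_{pq} = d(\bar{\mathbf{X}}_p, \bar{\mathbf{X}}_q)$$
where $\bar{\mathbf{X}}_p$ and $\bar{\mathbf{X}}_q$ are the mean vectors of $G_p$ and $G_q$.

4. Cluster equilibration method (used only in sample clustering)
The squared distance between two clusters is the average of the squared distances between all pairs of samples, one from each cluster:
$$D_{pq}^2 = \frac{1}{n_p n_q} \sum_{i \in G_p} \sum_{j \in G_q} d_{ij}^2$$
Cluster equilibration is one of the better hierarchical clustering methods because it makes full use of the information on every individual within a cluster.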
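A minimal sketch of both rules on raw sample data, assuming NumPy, Euclidean distance between samples, and clusters given as lists of row indices (function names hypothetical). `average_sq_distance` returns $D_{pq}^2$ itself, matching the formula above.

```python
import numpy as np

def centroid_distance(X, A, B):
    """Center of gravity method: Euclidean distance between the cluster mean vectors."""
    X = np.asarray(X, float)
    return float(np.linalg.norm(X[A].mean(axis=0) - X[B].mean(axis=0)))

def average_sq_distance(X, A, B):
    """Cluster equilibration: D_pq^2 = mean of d_ij^2 over i in A, j in B."""
    X = np.asarray(X, float)
    diffs = X[A][:, None, :] - X[B][None, :, :]   # n_p x n_q x m pairwise differences
    return float(np.mean(np.sum(diffs ** 2, axis=2)))

X = [[0, 0], [2, 0], [0, 3]]
print(average_sq_distance(X, [0, 1], [2]))  # 11.0
```

Here the squared distances from sample 2 to samples 0 and 1 are 9 and 13, so their average is 11.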

5. Sum of squares of deviations method
Also called Ward's method, it is used only in sample clustering. It borrows the basic idea of analysis of variance: a sound classification makes the sum of squares of deviations within clusters small and that between clusters large. Suppose the samples have been classified into g clusters, including $G_p$ and $G_q$. The sum of squares of deviations of the $n_t$ samples in cluster $G_t$ is
$$S_t = \sum_{i \in G_t} (\mathbf{X}_i - \bar{\mathbf{X}}_t)'(\mathbf{X}_i - \bar{\mathbf{X}}_t)$$
where $\bar{\mathbf{X}}_t$ is the mean vector of $G_t$. The pooled sum of squares of deviations of all g clusters is $S = \sum_{t=1}^{g} S_t$. If $G_p$ and $G_q$ are merged into $G_r$, g-1 clusters remain, and the increment of the pooled sum of squares of deviations,
$$D_{pq}^2 = S_r - S_p - S_q,$$
is defined as the squared distance between the two clusters. Obviously, when each of the n samples forms its own cluster, the pooled sum of squares of deviations is 0.
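A minimal sketch of the Ward increment, assuming NumPy and clusters given as Python lists of row indices (so `A + B` is their union); the function names are hypothetical.

```python
import numpy as np

def ss_dev(X, idx):
    """S_t: sum of squared deviations of the samples in one cluster from their mean vector."""
    Xt = np.asarray(X, float)[idx]
    return float(np.sum((Xt - Xt.mean(axis=0)) ** 2))

def ward_increment(X, A, B):
    """D_pq^2 = S_r - S_p - S_q: growth of the pooled within-cluster SS if A and B merge."""
    return ss_dev(X, A + B) - ss_dev(X, A) - ss_dev(X, B)

X = [[0, 0], [2, 0], [0, 3]]
print(ward_increment(X, [0], [1]))  # 2.0
```

A useful check: the increment also equals $\frac{n_p n_q}{n_p + n_q}\lVert \bar{\mathbf{X}}_p - \bar{\mathbf{X}}_q \rVert^2$, so for clusters {0, 1} and {2} above it is $\frac{2}{3} \times 10 = 20/3$.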

Sample 19-1 Four variables were measured on 3454 adult women: height (X1), length of legs (X2), waistline (X3) and chest circumference (X4). The correlation matrix of the four variables was computed first. Try to cluster the 4 indexes using hierarchical clustering. This is a case of R-type (index) clustering. We take the absolute value of the simple correlation coefficient as the similarity coefficient, and use the maximum similarity coefficient method to calculate the similarity coefficients between clusters.

The clustering procedure is as follows:
(1) Each index forms its own cluster: G1={X1}, G2={X2}, G3={X3}, G4={X4}, giving 4 clusters in total.
(2) Merge the two clusters with the largest similarity coefficient into a new cluster. Here G1 and G2 (similarity coefficient 0.852) are merged into G5={X1, X2}. Then calculate the similarity coefficients among G5, G3 and G4 to obtain the similarity matrix of G3, G4 and G5.

(3) Merge G3 and G4 into G6={X3, X4}, since their similarity coefficient (0.732) is now the largest. Compute the similarity coefficient between G6 and G5.
(4) Finally, G5 and G6 are merged into one cluster G7={X1, X2, X3, X4}, which contains all the original indexes.

Picture 19-1 Hierarchical dendrogram of the 4 indexes (leaves: height, length of legs, waistline, chest circumference)

Draw the hierarchical dendrogram (picture 19-1) according to the clustering process. As the picture indicates, a classification into two clusters is preferable: {X1, X2} and {X3, X4}. That is, the length indexes form one cluster and the circumference indexes form the other.

Sample 19-2 Table 19-1 lists the mean energy expenditure and sugar expenditure of six athletes in four athletic items. To provide corresponding dietary standards that help improve performance, cluster the athletic items using hierarchical clustering.

We choose the Minkowski distance in this example, and use the minimum similarity coefficient method to calculate the distances between clusters. To reduce the effect of the variables' units, the variables are standardized before analysis:
$$X_i' = \frac{X_i - \bar{X}_i}{S_i}$$
where $\bar{X}_i$ and $S_i$ are the sample mean and standard deviation of $X_i$. The transformed data are listed in table 19-1.
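A minimal sketch of the column-wise standardization, assuming NumPy and that $S_i$ is the usual sample standard deviation with denominator n-1 (`ddof=1`); the function name is hypothetical.

```python
import numpy as np

def standardize(X):
    """Column-wise X' = (X - mean) / S, with S the sample SD (denominator n - 1)."""
    X = np.asarray(X, float)
    return (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

Z = standardize([[1, 10], [2, 20], [3, 30]])
```

After the transform every column has mean 0 and standard deviation 1, so a variable measured in large units no longer dominates the distance.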

The clustering process: (1) Compute the similarity coefficient matrix (i.e. the distance matrix) of the 4 samples. The distance between weight loading crouching and pull-ups can be worked out using formula (19-3), and the distance between weight loading crouching and push-ups likewise. Computing all pairwise distances in the same way gives the distance matrix.

(2) The distance between G2 and G4 is the smallest, so G2 and G4 are merged into a new cluster G5={G2, G4}. Compute the distances between G5 and the other clusters using the minimum similarity coefficient method, formula (19-8), to obtain the distance matrix of G1, G3 and G5.
(3) Merge G1 and G5 into a new cluster G6={G1, G5}, and compute the distance between G6 and G3.
(4) Finally, merge G3 and G6 into G7={G3, G6}. All four items have now been merged into one cluster.

Draw the hierarchical dendrogram according to the clustering process (chart 19-2). Based on the dendrogram and professional knowledge, the items should be sorted into two clusters: {G1, G2, G4} and {G3}. Physical energy expenditure is much higher in weight loading crouching, pull-ups and sit-ups, so improved dietary standards may be needed for these items during training.

Analysis of clustering examples

Different definitions of the similarity coefficient, between samples and between clusters, lead to different clustering results. Professional knowledge, as well as the choice of clustering method, is important for interpreting a clustering analysis.

Sample 19-3 Twenty-seven petroleum pitch workers and pyro-furnacemen were surveyed on age, length of service and smoking. In addition, serum P21, serum P53, peripheral blood lymphocyte SCE, the number of chromosomal aberrations and the number of cells with chromosomal aberrations were measured in these workers (table 19-3). (P21 multiple = P21 detection value / mean P21 of the control group.) Please classify the 27 workers using hierarchical clustering.

This example applies three methods to cluster the data: the minimum similarity coefficient method based on Euclidean distance, the cluster equilibration method, and the sum of squares of deviations method. The results are shown in charts 19-3, 19-4 and 19-5. All variables were standardized before analysis.

Chart 19-3 Hierarchical dendrogram of the 27 petroleum pitch workers and pyro-furnacemen (minimum similarity coefficient method)

Chart 19-4 Hierarchical dendrogram of the 27 petroleum pitch workers and pyro-furnacemen (cluster equilibration method)

Chart 19-5 Hierarchical dendrogram of the 27 petroleum pitch workers and pyro-furnacemen (sum of squares of deviations method)

The three clustering results differ, which shows that different methods perform differently. The differences become more pronounced as the number of variables grows, so it is advisable to select effective variables, such as P21 and P53 in this example, before clustering. Reading the dendrograms closely yields further information.

Based on professional knowledge, the result of the cluster equilibration method is the most reasonable; the classification is recorded in the last column of the table. Workers {10, 20, 23} form one class and all others form the other class. Researchers found that workers {10, 20, 23} are at high risk of cancer. In the dendrogram of the sum of squares of deviations method, workers {10, 20, 23, 8, 16, 26} cluster together, suggesting that workers 8, 16 and 26 may also be at high risk.

§ 3 Dynamic Clustering Analysis

When there are many samples to classify, hierarchical clustering needs a lot of storage for the similarity coefficient matrix and is computationally inefficient. Moreover, once a sample has been assigned to a cluster, the assignment cannot be changed. To overcome these shortcomings, statisticians proposed dynamic clustering, which is more efficient and adjusts the classification as clustering proceeds.

The principle of dynamic clustering is as follows: first, select several representative samples, called cohesion points, as the cores of the classes; second, assign the remaining samples to the classes; then adjust the cores of the classes until the classification is reasonable. The most common dynamic clustering method is k-means, which is efficient and simple in principle, and gives results even when the number of samples is large. However, the number of classes must be specified before analysis. Professional knowledge sometimes suggests it, but often it does not.
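The text does not spell out the k-means steps, so the sketch below assumes the common iterative version: k distinct samples chosen at random as the initial cohesion points, assignment by Euclidean distance, and centers recomputed as cluster means until they stop moving. It assumes NumPy; `k_means`, `n_iter` and `seed` are hypothetical names.

```python
import numpy as np

def k_means(X, k, n_iter=100, seed=0):
    """Return (labels, centers) for k clusters of the rows of X."""
    X = np.asarray(X, float)
    rng = np.random.default_rng(seed)
    # initial cohesion points: k distinct samples picked at random (one of several seeding rules)
    centers = X[rng.choice(len(X), size=k, replace=False)].copy()
    for _ in range(n_iter):
        # assign each sample to the nearest center (squared Euclidean distance)
        d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        # move each center to the mean of its members; keep the old center if a class is empty
        new_centers = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                                for j in range(k)])
        if np.allclose(new_centers, centers):
            break                      # cores no longer move: classification is stable
        centers = new_centers
    return labels, centers

X = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]
labels, centers = k_means(X, 2)
```

Unlike the hierarchical procedure, a sample can switch classes between iterations, which is the adjustment the text describes. Results can depend on the initial cohesion points, so trying several seeds is common.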

§ 4 Ordered Sample Clustering Analysis

The clustering methods described so far are for unordered samples. Another kind of data, such as developmental data by age or incidence rates across years or locations, is ordered in time or space; such data are called ordinal (ordered) data. The classification must respect this order and must not break it, hence the name ordinal clustering.
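The text does not name a specific algorithm for ordered samples. One widely used option is Fisher's optimal partition method, which splits the ordered sequence into k contiguous segments so that the total within-segment sum of squares (the segment "diameter") is as small as possible. The dynamic-programming sketch below illustrates that choice under these assumptions; it uses NumPy, the function names are hypothetical, and it requires 1 <= k <= n.

```python
import numpy as np

def segment_ss(x, i, j):
    """Diameter D(i, j): sum of squared deviations of samples i..j (inclusive) from their mean."""
    seg = x[i:j + 1]
    return float(np.sum((seg - seg.mean(axis=0)) ** 2))

def fisher_partition(x, k):
    """Split ordered samples into k contiguous segments; return each segment's start index."""
    x = np.asarray(x, float)
    if x.ndim == 1:
        x = x[:, None]                      # treat a single variable as an n x 1 array
    n = len(x)
    INF = float("inf")
    # loss[g][j]: smallest total diameter when samples 0..j form g segments
    loss = [[INF] * n for _ in range(k + 1)]
    start = [[0] * n for _ in range(k + 1)]   # start index of the last segment
    for j in range(n):
        loss[1][j] = segment_ss(x, 0, j)
    for g in range(2, k + 1):
        for j in range(g - 1, n):
            for s in range(g - 1, j + 1):     # the last segment is s..j; order is never broken
                total = loss[g - 1][s - 1] + segment_ss(x, s, j)
                if total < loss[g][j]:
                    loss[g][j], start[g][j] = total, s
    # backtrack the segment boundaries from the end of the sequence
    starts, j = [], n - 1
    for g in range(k, 1, -1):
        s = start[g][j]
        starts.append(s)
        j = s - 1
    starts.append(0)
    return starts[::-1]

print(fisher_partition([1, 1, 1, 5, 5, 5, 9, 9, 9], 3))  # [0, 3, 6]
```

Because every candidate class is a run of consecutive samples, the original order is preserved by construction.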

Attentions

1. Clustering analysis is an exploratory method. Its results must be interpreted together with professional knowledge, and different clustering methods should be tried to obtain reasonable results.
2. Preprocess the variables: remove useless variables that vary little and those with too many missing values. In general, apply a standardization or range transform to eliminate the effects of units and of differing coefficients of variation.
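The range transform mentioned in point 2 maps each variable onto [0, 1]. A minimal sketch, assuming NumPy and the usual definition $X' = (X - X_{min}) / (X_{max} - X_{min})$; the function name is hypothetical.

```python
import numpy as np

def range_transform(X):
    """Column-wise X' = (X - min) / (max - min), so each variable lies in [0, 1].

    Assumes no column is constant (max > min); such columns carry no
    information for clustering and would be removed beforehand.
    """
    X = np.asarray(X, float)
    lo, hi = X.min(axis=0), X.max(axis=0)
    return (X - lo) / (hi - lo)

R = range_transform([[1, 10], [3, 30], [2, 20]])
```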

3. A reasonable classification shows clear differences between classes and small differences within them. After classification, analysis of variance (for a single variable) or multivariate analysis of variance (for multiple variables) can be used to test for statistical differences between classes.
4. Fuzzy clustering analysis, neural network clustering analysis and other methods specific to genetic data are not introduced here; please consult related references.
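For the single-variable case in point 3, a minimal pure-Python sketch of the one-way ANOVA F statistic across the classes found by clustering (function name hypothetical; it computes F only, without a p-value). In practice `scipy.stats.f_oneway` returns the same F statistic together with its p-value.

```python
def one_way_anova_f(groups):
    """F = (SS_between / (k - 1)) / (SS_within / (n - k)) for one variable across k classes."""
    all_values = [v for g in groups for v in g]
    n, k = len(all_values), len(groups)
    grand_mean = sum(all_values) / n
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, means) for v in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

print(one_way_anova_f([[1, 2, 3], [4, 5, 6]]))  # 13.5
```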