Hierarchical Dirichlet Processes

This presentation explores multi-task learning through Hierarchical Dirichlet Processes (HDP) for clustering in related groups. The goal is to share clusters among multiple clustering problems using a nonparametric Bayesian approach. It discusses the fundamentals of Dirichlet Processes, their properties via the Chinese Restaurant Process, and the construction of an HDP wherein groups share mixture components while maintaining unique group-specific combinations. The method automates the determination of the number of mixture components, enhances cluster discovery across and within groups, and illustrates experimental results showcasing its effectiveness.

Presentation Transcript

Sharing Clusters Among Related Groups: Hierarchical Dirichlet Processes. Y. W. Teh, M. I. Jordan, M. J. Beal & D. M. Blei, NIPS 2004. Presented by Yuting Qi, ECE Dept., Duke Univ., 08/26/05

Overview • Motivation • Dirichlet Processes • Hierarchical Dirichlet Processes • Inference • Experimental results • Conclusions

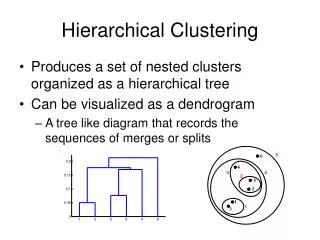

Motivation • Multi-task learning: clustering • Goal: • Share clusters among multiple related clustering problems (model-based). • Approach: • Hierarchical; • Nonparametric Bayesian; • DP Mixture Model: learn a generative model over the data, treating the classes as hidden variables;

Dirichlet Processes • Let (Θ, B) be a measurable space, G0 be a probability measure on the space, and α be a positive real number. • A Dirichlet process is the distribution of a random probability measure G over (Θ, B) such that, for all finite partitions (A1,…,Ar) of Θ, (G(A1),…,G(Ar)) ~ Dir(αG0(A1),…,αG0(Ar)). • G ~ DP(α, G0) if G is a random probability measure with distribution given by the Dirichlet process. • Draws G from a DP are discrete with probability one, so samples from G are generally not distinct: G = Σk βk δӨk, where Өk ~ G0 and the weights βk are random and depend on α. • Properties:
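
The discrete form G = Σk βk δӨk can be illustrated with a truncated stick-breaking construction. This is a minimal sketch, not something taken from the slides: stick-breaking is the standard construction of DP weights, while the truncation level, the standard normal base measure G0, and the function name `stick_breaking_dp` are assumptions made only for illustration.

```python
import random

def stick_breaking_dp(alpha, base_sampler, truncation=100, rng=None):
    """Approximate draw G = sum_k beta_k * delta(theta_k) from DP(alpha, G0).

    The infinite sum is cut off at `truncation` atoms (an approximation).
    """
    rng = rng or random.Random()
    weights, atoms = [], []
    remaining = 1.0  # length of the stick that has not been assigned yet
    for _ in range(truncation):
        v = rng.betavariate(1.0, alpha)   # fraction broken off the remaining stick
        weights.append(remaining * v)     # beta_k
        atoms.append(base_sampler(rng))   # theta_k ~ G0
        remaining *= 1.0 - v
    weights[-1] += remaining  # fold leftover mass into the last atom
    return weights, atoms

# Hypothetical usage: G0 is a standard normal (assumed, not from the slides).
rng = random.Random(0)
weights, atoms = stick_breaking_dp(
    alpha=2.0, base_sampler=lambda r: r.gauss(0.0, 1.0), truncation=50, rng=rng
)
```

Smaller values of α concentrate the mass on a few atoms, while larger values spread it over many, which is the sense in which the weights βk depend on α.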

Chinese Restaurant Processes • CRP (the Pólya urn scheme) • Let Φ1,…,Φi-1 be random variables that are i.i.d. given G and distributed according to G; let Ө1,…,ӨK be the distinct values taken on by Φ1,…,Φi-1, and let nk be the number of Φi’ = Өk, 0<i’<i. This slide is from “Chinese Restaurants and Stick-Breaking: An Introduction to the Dirichlet Process”, NLP Group, Stanford, Feb. 2005.
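
Under the standard CRP rule, customer i joins existing table k with probability nk/(i-1+α) and opens a new table with probability α/(i-1+α). The sketch below simulates that seating process; the concentration symbol `alpha` and the function name `crp_assignments` are illustrative assumptions, not notation from the slide.

```python
import random

def crp_assignments(n, alpha, rng=None):
    """Seat n customers by the Chinese Restaurant Process.

    Returns the table index of each customer and the table sizes n_k.
    """
    rng = rng or random.Random()
    assignments = []
    counts = []  # counts[k] = n_k, number of customers at table k
    for _ in range(n):
        # Existing table k has weight n_k; a new table has weight alpha.
        weights = counts + [alpha]
        k = rng.choices(range(len(weights)), weights=weights)[0]
        if k == len(counts):
            counts.append(1)   # open a new table
        else:
            counts[k] += 1     # join an existing table
        assignments.append(k)
    return assignments, counts

assignments, counts = crp_assignments(n=100, alpha=1.0, rng=random.Random(0))
```

The number of occupied tables grows slowly with n, which is why the number of clusters does not have to be fixed in advance.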

DP Mixture Model • One of the most important applications of the DP: a nonparametric prior distribution on the components of a mixture model. • Why not apply the DP directly to density estimation? Because G is discrete.

HDP – Problem statement • We have J groups of data, {Xj}, j=1,…, J. For each group, Xj={xji}, i=1, …, nj. • In each group, Xj={xji} are modeled with a mixture model. The mixing proportions are specific to the group. • Different groups share the same set of mixture components (the underlying clusters), but each group is a different combination of the mixture components. • Goal: • Discover the distribution of the mixture components within a group; • Discover the distribution of the mixture components across groups;

HDP - General representation • G0: the global probability measure, G0 ~ DP(r, H); r is the concentration parameter and H is the base measure. • Gj: the probability distribution for group j, Gj ~ DP(α, G0). • Φji: the hidden parameters of the distribution F(Φji) corresponding to xji. • The overall model is: G0 | r, H ~ DP(r, H); Gj | α, G0 ~ DP(α, G0); Φji | Gj ~ Gj; xji | Φji ~ F(Φji). • Two-level DPs.

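HDP - General representation • G0 is discrete, G0 = Σk βk δӨk, so it places non-zero mass only on the atoms {Өk}; thus every Gj is supported on the same atoms, Gj = Σk πjk δӨk, with group-specific weights πjk. • Ө1, Ө2, … are i.i.d. random variables distributed according to H.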
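
A minimal sketch of how shared atoms with group-specific weights can arise, under a finite truncation that the slides do not use: the global weights β come from a stick-breaking draw with concentration r, truncated to K atoms, and for that truncated G0 each group's weights follow Dirichlet(αβ1,…,αβK). The helper names `gem_weights` and `dirichlet`, the truncation level K, and the parameter values are assumptions for illustration.

```python
import random

def gem_weights(r, truncation, rng):
    """Truncated stick-breaking weights beta_1..beta_K with concentration r."""
    weights, remaining = [], 1.0
    for _ in range(truncation - 1):
        v = rng.betavariate(1.0, r)
        weights.append(remaining * v)
        remaining *= 1.0 - v
    weights.append(remaining)  # the last atom absorbs the leftover mass
    return weights

def dirichlet(concentrations, rng):
    """Dirichlet draw obtained by normalising independent Gamma draws."""
    # The small floor guards against a zero shape parameter (numerical safeguard).
    draws = [rng.gammavariate(max(c, 1e-12), 1.0) for c in concentrations]
    total = sum(draws)
    return [d / total for d in draws]

rng = random.Random(0)
K, J = 10, 3            # truncation level and number of groups (assumed values)
r, alpha = 1.0, 5.0     # concentration parameters (assumed values)
beta = gem_weights(r, K, rng)                                   # global weights
pi = [dirichlet([alpha * b for b in beta], rng) for _ in range(J)]  # per-group weights
```

Every group reuses the same K atoms, but each row of `pi` weights them differently; larger α keeps the group weights closer to the global weights `beta`.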

HDP-CR franchise • First level: within each group, a DP mixture • Φj1,…,Φji-1 are i.i.d. random variables distributed according to Gj; let Ѱj1,…, ѰjTj be the values taken on by Φj1,…,Φji-1, and let njt be the number of Φji’ = Ѱjt, 0<i’<i. • Second level: across groups, sharing components • The base measure of each group is a draw from a DP: Ѱjt | G0 ~ G0, G0 ~ DP(r, H). • Let Ө1,…, ӨK be the values taken on by Ѱj1,…, ѰjTj, and let mk be the number of Ѱjt = Өk over all j, t.
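
The two levels can be simulated as a Chinese restaurant franchise: customers choose tables within their own restaurant (group), and each new table chooses a dish (shared component) from a menu common to all restaurants. This is a sketch of the prior only, with no data likelihood; the function name `cr_franchise` and the parameter values are illustrative assumptions.

```python
import random

def cr_franchise(group_sizes, alpha, r, rng=None):
    """Simulate table and dish assignments in a Chinese restaurant franchise.

    Returns, per group, the dish index k_jt of each table and the table
    sizes n_jt, plus the global counts m_k of tables serving each dish.
    """
    rng = rng or random.Random()
    dish_counts = []    # m_k: number of tables (over all groups) serving dish k
    table_dishes = []   # table_dishes[j][t] = k_jt
    table_counts = []   # table_counts[j][t] = n_jt
    for n_j in group_sizes:
        dishes_j, counts_j = [], []
        for _ in range(n_j):
            # First level: existing table t has weight n_jt, a new table alpha.
            weights = counts_j + [alpha]
            t = rng.choices(range(len(weights)), weights=weights)[0]
            if t == len(counts_j):
                # Second level: the new table picks dish k with weight m_k,
                # or a brand-new dish with weight r.
                dish_weights = dish_counts + [r]
                k = rng.choices(range(len(dish_weights)), weights=dish_weights)[0]
                if k == len(dish_counts):
                    dish_counts.append(0)
                dish_counts[k] += 1
                dishes_j.append(k)
                counts_j.append(1)
            else:
                counts_j[t] += 1
        table_dishes.append(dishes_j)
        table_counts.append(counts_j)
    return table_dishes, table_counts, dish_counts

table_dishes, table_counts, dish_counts = cr_franchise(
    group_sizes=[200, 200, 200], alpha=1.0, r=1.0, rng=random.Random(0)
)
```

Because all groups draw dishes from the same `dish_counts` menu, a dish introduced in one group can reappear in another, which is how components end up shared.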

HDP-CR franchise Integrating out G0 • Values of Φji are shared among groups.
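
For reference, the CR franchise conditionals in their standard form, as given in the Teh et al. paper, are written below in this deck's notation (α and r as the two concentration parameters, $\theta_k$ for Өk). Treat this as a reference sketch: the first line integrates out Gj, and the second integrates out G0.

```latex
\Phi_{ji} \mid \Phi_{j1},\dots,\Phi_{j,i-1},\alpha,G_0
  \;\sim\; \sum_{t=1}^{T_j} \frac{n_{jt}}{i-1+\alpha}\,\delta_{\Psi_{jt}}
  \;+\; \frac{\alpha}{i-1+\alpha}\,G_0

\Psi_{jt} \mid \Psi_{11},\Psi_{12},\dots,\Psi_{21},\dots,\Psi_{j,t-1},r,H
  \;\sim\; \sum_{k=1}^{K} \frac{m_k}{\sum_{k'} m_{k'} + r}\,\delta_{\theta_k}
  \;+\; \frac{r}{\sum_{k'} m_{k'} + r}\,H
```

Since the counts $m_k$ are pooled over all groups, a value $\theta_k$ that is popular in one group is more likely to be chosen in every other group.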

Inference - MCMC • Gibbs sampling of the posterior in the CR franchise: • Instead of dealing directly with Φji & Ѱjt to get p(Φ, Ѱ|X), p(t, k, Ө|X) is obtained by sampling t, k, Ө, where • t={tji}, tji is the index of the table that Φji is associated with, Φji=Ѱjtji. • k={kjt}, kjt is the index of the value Өk that Ѱjt takes, Ѱjt=Өkjt. • Given the prior distribution from the CR franchise, the posterior is sampled iteratively: • Sampling t: • Sampling k: • Sampling Ө:

Experiments on the synthetic data • Cluster membership (from the figure): Group 1: [1, 2, 3, 7]; Group 2: [3, 4, 5, 7]; Group 3: [5, 6, 1, 7]. • Data description: • We have three groups of data; • Each group is a Gaussian mixture; • Different groups can share the same clusters; • Each cluster has 50 2-D data points, and the features are independent;
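
A data set with this structure could be generated roughly as follows. The group-to-cluster assignment and the 50 points per cluster come from the slide; the cluster centres (placed on a circle) and the standard deviations are hypothetical values chosen only so the sketch runs, since the slide does not list them.

```python
import math
import random

# Cluster membership per group, as listed on the slide.
GROUP_CLUSTERS = {1: [1, 2, 3, 7], 2: [3, 4, 5, 7], 3: [5, 6, 1, 7]}

# Hypothetical cluster parameters: centres on a circle of radius 4 and a
# common per-feature standard deviation of 0.5 (not from the slides).
CLUSTER_PARAMS = {
    k: ((4.0 * math.cos(2 * math.pi * k / 7), 4.0 * math.sin(2 * math.pi * k / 7)),
        (0.5, 0.5))
    for k in range(1, 8)
}

def make_groups(points_per_cluster=50, rng=None):
    """Return {group: [(x, y, cluster_label), ...]} with independent features."""
    rng = rng or random.Random()
    data = {}
    for j, clusters in GROUP_CLUSTERS.items():
        points = []
        for k in clusters:
            (mx, my), (sx, sy) = CLUSTER_PARAMS[k]
            for _ in range(points_per_cluster):
                # Independent features: each coordinate is drawn separately.
                points.append((rng.gauss(mx, sx), rng.gauss(my, sy), k))
        data[j] = points
    return data

data = make_groups(rng=random.Random(0))
```

Cluster 7 appears in all three groups, and clusters 1, 3 and 5 each appear in two, so the groups overlap without being identical.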

Experiments on the synthetic data • HDP definition: here, F(xji|φji) is a Gaussian distribution, φji={μji, σji}; each φji takes the value of one of θk={μk, σk}, k=1, 2, …. μ ~ N(m, σ/β), σ⁻¹ ~ Gamma(a, b), i.e., H is a Normal-Gamma joint distribution. m, β, a, b are given hyperparameters. • Goal: • Model each group as a Gaussian mixture; • Model the cluster distribution over groups;
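
Drawing one component θ = {μ, σ} from this Normal-Gamma base measure can be sketched as below, for a single feature dimension. Two readings are assumed here because the slide does not state them: σ is treated as a variance (so σ⁻¹ is a precision), and Gamma(a, b) is taken to mean shape a and rate b. The hyperparameter values are placeholders.

```python
import math
import random

def sample_normal_gamma(m, beta, a, b, rng):
    """Draw (mu, sigma) with sigma^-1 ~ Gamma(a, b) and mu ~ N(m, sigma / beta).

    Assumes sigma is a variance and b is a rate parameter.
    """
    # random.gammavariate takes (shape, scale), so rate b becomes scale 1/b.
    precision = rng.gammavariate(a, 1.0 / b)
    sigma = 1.0 / precision
    # random.gauss takes a standard deviation, hence the square root.
    mu = rng.gauss(m, math.sqrt(sigma / beta))
    return mu, sigma

rng = random.Random(0)
# Placeholder hyperparameters (not from the slides).
components = [sample_normal_gamma(m=0.0, beta=0.1, a=2.0, b=1.0, rng=rng)
              for _ in range(5)]
```

For the 2-D data with independent features, the same draw would be repeated once per feature dimension.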

Experiments on the synthetic data • Results on Synthetic Data • Global distribution: estimated over all groups, together with the corresponding mixing proportions. The number of components is open-ended; only some of them are shown here.

Experiments on the synthetic data • Mixture within each group: the number of components in each group is also open-ended; only some of them are shown here.

Conclusions & discussions • This hierarchical Bayesian method can automatically determine the appropriate number of mixture components. • A set of DPs is coupled via a shared base measure to achieve component sharing among groups. • The DPs serve as nonparametric priors; this is not nonparametric density estimation.