Belief Propagation on Markov Random Fields




Presentation Transcript

Belief Propagation on Markov Random Fields
Aggeliki Tsoli

Outline
• Graphical Models
• Markov Random Fields (MRFs)
• Belief Propagation
MLRG

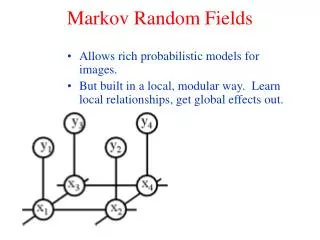

Graphical Models
• Diagrams
  • Nodes: random variables
  • Edges: statistical dependencies among random variables
• Advantages:
  • Better visualization
    • conditional independence properties
    • design of new models
  • Factorization

Types of Graphical Models
• Directed
  • causal relationships
  • e.g. Bayesian networks
• Undirected
  • no constraints imposed on causality of events (“weak dependencies”)
  • e.g. Markov Random Fields (MRFs)

Example MRF Application: Image Denoising
[Figure: original (binary) image next to a noisy image, e.g. with 10% noise]
• Question: How can we retrieve the original image given the noisy one?

MRF formulation
• Nodes
  • For each pixel i:
    • $x_i$: latent variable (value in original image)
    • $y_i$: observed variable (value in noisy image)
  • $x_i, y_i \in \{0, 1\}$
[Figure: graph with observed nodes y1, y2, …, yi, …, yn and latent nodes x1, x2, …, xi, …, xn]

MRF formulation
• Edges
  • $x_i$, $y_i$ of each pixel i are correlated
    • local evidence function $\phi(x_i, y_i)$
    • E.g. $\phi(x_i, y_i) = 0.9$ if $x_i = y_i$ and $\phi(x_i, y_i) = 0.1$ otherwise (10% noise)
  • Neighboring pixels tend to have similar values
    • compatibility function $\psi(x_i, x_j)$
[Figure: MRF graph with nodes y1, …, yn and x1, …, xn]
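
For binary pixels, both functions fit in 2×2 tables. The sketch below is only an illustration: the local evidence values (0.9 / 0.1) come from the slide, while the compatibility values (0.8 / 0.2) and the names `phi`, `psi`, `y_i` are assumptions chosen for the example.

```python
import numpy as np

# Local evidence phi(x_i, y_i): rows index the latent value x_i,
# columns index the observed value y_i.
# Values from the slide: 0.9 when x_i == y_i, 0.1 otherwise (10% noise).
phi = np.array([[0.9, 0.1],
                [0.1, 0.9]])

# Compatibility psi(x_i, x_j): rows index x_i, columns index x_j.
# ASSUMED values (not given in the slides): neighbors agree with weight 0.8.
psi = np.array([[0.8, 0.2],
                [0.2, 0.8]])

# Evidence for x_i = 0 and x_i = 1 when the noisy pixel is observed as y_i = 1.
y_i = 1
evidence = phi[:, y_i]
print(evidence)  # [0.1 0.9]
```

Storing the functions as arrays indexed by the variable values means that fixing an observation is a single column lookup.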

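MRF formulation
• Question: What are the marginal distributions for $x_i$, $i = 1, \dots, n$?
$$P(x_1, x_2, \dots, x_n) = \frac{1}{Z} \prod_{(ij)} \psi(x_i, x_j) \prod_i \phi(x_i, y_i)$$
  where the first product runs over pairs (ij) of neighboring pixels and Z is the normalization constant
[Figure: MRF graph with nodes y1, …, yn and x1, …, xn]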
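
For a handful of pixels the marginals can be read off the joint distribution directly by enumerating every configuration. This is a minimal sketch of that brute-force baseline, not part of the slides: the 3-pixel chain, the observations `y`, and the compatibility values in `psi` are all assumptions made for the example.

```python
import itertools
import numpy as np

phi = np.array([[0.9, 0.1], [0.1, 0.9]])  # phi[x_i, y_i], values from the slides
psi = np.array([[0.8, 0.2], [0.2, 0.8]])  # psi[x_i, x_j], ASSUMED values

def unnormalized_p(x, y, edges):
    """Product of psi over neighboring pairs times product of phi over nodes."""
    p = 1.0
    for i, j in edges:
        p *= psi[x[i], x[j]]
    for i in range(len(x)):
        p *= phi[x[i], y[i]]
    return p

def brute_force_marginals(y, edges):
    """Exact marginals by enumerating all 2^n configurations (tiny n only)."""
    n = len(y)
    marg = np.zeros((n, 2))
    for x in itertools.product([0, 1], repeat=n):
        p = unnormalized_p(x, y, edges)
        for i in range(n):
            marg[i, x[i]] += p
    # Every row sums to Z, so dividing by the row sum applies the 1/Z factor.
    return marg / marg.sum(axis=1, keepdims=True)

y = [1, 1, 0]             # hypothetical noisy observations
edges = [(0, 1), (1, 2)]  # hypothetical 3-pixel chain
marginals = brute_force_marginals(y, edges)
print(marginals)
```

The enumeration costs 2^n evaluations, which is why a message-passing scheme is needed for real images.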

Belief Propagation
• Goal: compute the marginals of the latent nodes of the underlying graphical model
• Attributes:
  • iterative algorithm
  • message passing between neighboring latent variable nodes
• Question: Can it also be applied to directed graphs?
• Answer: Yes, but here we will apply it to MRFs

Belief Propagation Algorithm
1. Select random neighboring latent nodes $x_i$, $x_j$
2. Send message $m_{ij}$ from $x_i$ to $x_j$
3. Update belief about marginal distribution at node $x_j$
4. Go to step 1, until convergence
• How is convergence defined?
[Figure: latent nodes xi, xj with observed nodes yi, yj; message mij sent from xi to xj]

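Step 2: Message Passing
• Message $m_{ij}$ from $x_i$ to $x_j$: what node $x_i$ thinks about the marginal distribution of $x_j$
$$m_{ij}(x_j) = \sum_{x_i} \phi(x_i, y_i)\, \psi(x_i, x_j) \prod_{k \in N(i) \setminus j} m_{ki}(x_i)$$
• Messages are initially uniformly distributed
[Figure: nodes xi, xj with observed nodes yi, yj; N(i)\j marks the neighbors of xi other than xj]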
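
The message update can be sketched as a small function over 2-element vectors. This is one possible reading of the formula, not the presenter's code: `send_message`, the dictionary layout of `messages` and `neighbors`, the values in `psi`, and the final normalization (a numerical convenience that only rescales the message) are assumptions.

```python
import numpy as np

phi = np.array([[0.9, 0.1], [0.1, 0.9]])  # phi[x_i, y_i], values from the slides
psi = np.array([[0.8, 0.2], [0.2, 0.8]])  # psi[x_i, x_j], ASSUMED values

def send_message(i, j, y, messages, neighbors):
    """Compute m_ij(x_j): sum over x_i of phi * psi * incoming messages except from j."""
    prod = phi[:, y[i]].copy()            # phi(x_i, y_i) as a vector over x_i
    for k in neighbors[i]:
        if k != j:                        # k ranges over N(i) without j
            prod = prod * messages[(k, i)]
    m = psi.T @ prod                      # m[x_j] = sum_{x_i} psi[x_i, x_j] * prod[x_i]
    return m / m.sum()                    # ASSUMPTION: normalize to keep values bounded

# Hypothetical two-pixel graph: x_0 and x_1 are neighbors, observed y = [1, 0].
y = [1, 0]
neighbors = {0: [1], 1: [0]}
messages = {(0, 1): np.full(2, 0.5), (1, 0): np.full(2, 0.5)}  # uniform start
m01 = send_message(0, 1, y, messages, neighbors)
print(m01)  # approximately [0.26 0.74]
```

Here node 0 observed y = 1, so its message tells node 1 that x_1 = 1 is the more likely value.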

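Step 3: Belief Update
• Belief $b(x_j)$: what node $x_j$ thinks its marginal distribution is
$$b(x_j) = k\, \phi(x_j, y_j) \prod_{q \in N(j)} m_{qj}(x_j)$$
  where k is a normalization constant
[Figure: node xj with observed node yj and neighbors N(j)]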
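
The belief update multiplies the local evidence by every incoming message and rescales. A minimal sketch, assuming the same array layout as before; the function name `belief`, the example message values, and the two-node graph are hypothetical.

```python
import numpy as np

phi = np.array([[0.9, 0.1], [0.1, 0.9]])  # phi[x_j, y_j], values from the slides

def belief(j, y, messages, neighbors):
    """Compute b(x_j) = k * phi(x_j, y_j) * product of incoming messages m_qj(x_j)."""
    b = phi[:, y[j]].copy()
    for q in neighbors[j]:                # q ranges over all neighbors N(j)
        b = b * messages[(q, j)]
    return b / b.sum()                    # k is 1 / b.sum(), so b sums to one

# Hypothetical setup: node 1 observed y_1 = 0 and received one message from node 0.
y = [1, 0]
neighbors = {1: [0]}
messages = {(0, 1): np.array([0.26, 0.74])}
b1 = belief(1, y, messages, neighbors)
print(b1)
```

In this example the node's own evidence (y_1 = 0) outweighs the neighbor's message, so the belief still favors x_1 = 0.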

Belief Propagation Algorithm
1. Select random neighboring latent nodes $x_i$, $x_j$
2. Send message $m_{ij}$ from $x_i$ to $x_j$
3. Update belief about marginal distribution at node $x_j$
4. Go to step 1, until convergence
[Figure: latent nodes xi, xj with observed nodes yi, yj; message mij sent from xi to xj]
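
The four steps can be put together roughly as follows. This is a sketch under several assumptions that the slides leave open: pairs are visited in a random order once per sweep (rather than one random pair at a time), convergence is declared when no message changes by more than `tol` during a sweep, messages are normalized, and beliefs are computed once at the end, which gives the same result because a belief depends only on the current messages. The values in `psi` and all names are hypothetical.

```python
import random
import numpy as np

phi = np.array([[0.9, 0.1], [0.1, 0.9]])  # phi[x_i, y_i], values from the slides
psi = np.array([[0.8, 0.2], [0.2, 0.8]])  # psi[x_i, x_j], ASSUMED values

def belief_propagation(y, edges, tol=1e-6, max_sweeps=100, seed=0):
    rng = random.Random(seed)
    n = len(y)
    neighbors = {i: [] for i in range(n)}
    for i, j in edges:
        neighbors[i].append(j)
        neighbors[j].append(i)
    directed = [(i, j) for i in range(n) for j in neighbors[i]]
    messages = {e: np.full(2, 0.5) for e in directed}  # uniform initial messages

    for _ in range(max_sweeps):
        rng.shuffle(directed)                 # step 1: random order of pairs (x_i, x_j)
        max_change = 0.0
        for i, j in directed:                 # step 2: send message m_ij
            prod = phi[:, y[i]].copy()
            for k in neighbors[i]:
                if k != j:
                    prod = prod * messages[(k, i)]
            m = psi.T @ prod
            m = m / m.sum()
            max_change = max(max_change, float(np.abs(m - messages[(i, j)]).max()))
            messages[(i, j)] = m
        if max_change < tol:                  # step 4: ASSUMED convergence test
            break

    beliefs = np.zeros((n, 2))                # step 3: beliefs from final messages
    for j in range(n):
        b = phi[:, y[j]].copy()
        for q in neighbors[j]:
            b = b * messages[(q, j)]
        beliefs[j] = b / b.sum()
    return beliefs

beliefs = belief_propagation(y=[1, 1, 0], edges=[(0, 1), (1, 2)])
print(beliefs)  # one row per pixel: [b(x=0), b(x=1)]
```

A denoised pixel value can then be read off as the more probable entry of each row, e.g. `beliefs.argmax(axis=1)`.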

Example: compute the belief at node 1
[Figure: Fig. 12 (Yedidia et al.), nodes 1, 2, 3, 4 with messages m21, m32, m42]
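
Written out with the message and belief formulas from Steps 2 and 3, the example unrolls as below. The graph structure is an assumption read off the message labels in the figure: node 1 is connected only to node 2, and node 2 is also connected to nodes 3 and 4.

```latex
\begin{align*}
b(x_1)      &= k\, \phi(x_1, y_1)\, m_{21}(x_1) \\
m_{21}(x_1) &= \sum_{x_2} \phi(x_2, y_2)\, \psi(x_2, x_1)\, m_{32}(x_2)\, m_{42}(x_2) \\
m_{32}(x_2) &= \sum_{x_3} \phi(x_3, y_3)\, \psi(x_3, x_2) \\
m_{42}(x_2) &= \sum_{x_4} \phi(x_4, y_4)\, \psi(x_4, x_2)
\end{align*}
```

Nodes 3 and 4 have no neighbors other than node 2, so their messages contain no incoming-message product; substituting them back gives the belief at node 1 as a nested sum over $x_2$, $x_3$, $x_4$.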

Does graph topology matter?
• The BP procedure is the same!
• Performance on graphs with loops
  • Failure to converge or to predict accurate beliefs [Murphy, Weiss, Jordan 1999]
  • Success at
    • decoding for error-correcting codes [Frey and Mackay 1998]
    • computer vision problems where the underlying MRF is full of loops [Freeman, Pasztor, Carmichael 2000]
[Figure: two graph topologies shown side by side for comparison]
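
One way to see the effect of topology is to run the same BP code on a loop-free chain and on a triangle and compare both against brute-force marginals. BP is exact on loop-free graphs, while on a graph with loops the beliefs are in general only approximations. The sketch below is illustrative only: the graphs, the observations, the values in `psi`, and the fixed number of sweeps are assumptions.

```python
import itertools
import numpy as np

phi = np.array([[0.9, 0.1], [0.1, 0.9]])  # phi[x_i, y_i], values from the slides
psi = np.array([[0.8, 0.2], [0.2, 0.8]])  # psi[x_i, x_j], ASSUMED values

def exact_marginals(y, edges):
    """Brute-force marginals by enumerating all configurations."""
    n = len(y)
    marg = np.zeros((n, 2))
    for x in itertools.product([0, 1], repeat=n):
        p = np.prod([psi[x[i], x[j]] for i, j in edges])
        p *= np.prod([phi[x[i], y[i]] for i in range(n)])
        for i in range(n):
            marg[i, x[i]] += p
    return marg / marg.sum(axis=1, keepdims=True)

def bp_beliefs(y, edges, sweeps=200):
    """BP with a fixed number of sweeps (ASSUMED stopping rule, for simplicity)."""
    n = len(y)
    nbrs = {i: [] for i in range(n)}
    for i, j in edges:
        nbrs[i].append(j)
        nbrs[j].append(i)
    msgs = {(i, j): np.full(2, 0.5) for i in nbrs for j in nbrs[i]}
    for _ in range(sweeps):
        for i, j in list(msgs):
            prod = phi[:, y[i]].copy()
            for k in nbrs[i]:
                if k != j:
                    prod = prod * msgs[(k, i)]
            m = psi.T @ prod
            msgs[(i, j)] = m / m.sum()
    b = np.array([phi[:, y[j]] * np.prod([msgs[(q, j)] for q in nbrs[j]], axis=0)
                  for j in range(n)])
    return b / b.sum(axis=1, keepdims=True)

y = [1, 1, 0]                    # hypothetical observations
chain = [(0, 1), (1, 2)]         # loop-free
triangle = chain + [(0, 2)]      # one loop
gap_chain = np.abs(bp_beliefs(y, chain) - exact_marginals(y, chain)).max()
gap_loop = np.abs(bp_beliefs(y, triangle) - exact_marginals(y, triangle)).max()
print("max error on chain:   ", gap_chain)
print("max error on triangle:", gap_loop)
```

On the chain the error should be at the level of floating-point round-off; the size of the error on the triangle depends on the chosen potentials.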

How long does it take?
• No explicit discussion in the paper
• In my opinion, it depends on
  • the number of nodes in the graph
  • the graph topology
• There is work on improving the running time of BP (for specific applications)
  • Next time?

Questions?