Markov Random Fields & Conditional Random Fields



John Winn, MSR Cambridge

Road map • Markov Random Fields • What they are • Uses in vision/object recognition • Advantages • Difficulties • Conditional Random Fields • What they are • Further difficulties

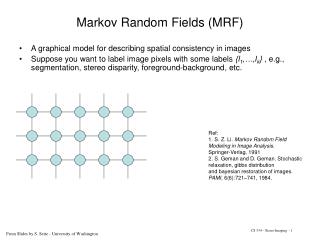

Markov Random Fields [Figure: undirected graph over variables X1, X2, X3, X4, with factors labelled 12, 23 and 234]
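To make the factorisation in the diagram concrete, here is a minimal sketch. It assumes the labels 12, 23 and 234 denote potentials over (X1, X2), (X2, X3) and (X2, X3, X4), and that every variable is binary. The functions `psi12`, `psi23` and `psi234` and their values are made up for illustration and are not from the talk. The joint distribution is the normalised product of the potentials; brute-force enumeration works here only because the model is tiny.

```python
from itertools import product

# Hypothetical potentials (illustrative values only): each favours agreement.
def psi12(x1, x2):
    return 2.0 if x1 == x2 else 1.0

def psi23(x2, x3):
    return 2.0 if x2 == x3 else 1.0

def psi234(x2, x3, x4):
    return 3.0 if x2 == x3 == x4 else 1.0

def unnormalised(x):
    """Product of all potentials for one joint configuration."""
    x1, x2, x3, x4 = x
    return psi12(x1, x2) * psi23(x2, x3) * psi234(x2, x3, x4)

states = list(product([0, 1], repeat=4))      # all 2^4 joint configurations
Z = sum(unnormalised(x) for x in states)      # partition function
joint = {x: unnormalised(x) / Z for x in states}

# Marginal of X4, obtained by summing out the other variables.
p_x4 = [sum(p for x, p in joint.items() if x[3] == v) for v in (0, 1)]
print(Z, p_x4)
```

The sum defining `Z` runs over every joint configuration, which is what becomes infeasible for image-sized models.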

Examples of use in vision • Grid-shaped MRFs for pixel labelling e.g. segmentation • MRFs (e.g. stars) over part positions for pictorial structures/constellation models.

Advantages • Probabilistic model: • Captures uncertainty • No ‘irreversible’ decisions • Iterative reasoning • Principled fusing of different cues • Undirected model • Allows ‘non-causal’ relationships (soft constraints) • Efficient algorithms: inference is now practical for MRFs with millions of variables, so it can be applied to raw pixels.

Maximum Likelihood Learning • Gradient of the log-likelihood = sufficient statistics of data − expected model sufficient statistics
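The two labels on this slide annotate the terms of the log-likelihood gradient. As a sketch in assumed notation (the symbols $\theta$, $\phi$, $Z$ and the training examples $x^{(n)}$ are not from the slide), for an MRF written in exponential-family form:

```latex
p(x \mid \theta) = \frac{1}{Z(\theta)} \exp\!\big(\theta^{\top} \phi(x)\big),
\qquad
Z(\theta) = \sum_{x} \exp\!\big(\theta^{\top} \phi(x)\big)

\frac{\partial}{\partial \theta} \, \frac{1}{N} \sum_{n=1}^{N} \log p\big(x^{(n)} \mid \theta\big)
= \underbrace{\frac{1}{N} \sum_{n=1}^{N} \phi\big(x^{(n)}\big)}_{\text{sufficient statistics of data}}
- \underbrace{\mathbb{E}_{p(x \mid \theta)}\big[\phi(x)\big]}_{\text{expected model sufficient statistics}}
```

The second term is an expectation under the current model, so every gradient evaluation requires inference.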

Difficulty I: Inference • Exact inference intractable except in a few cases e.g. small models • Must resort to approximate methods • Loopy belief propagation • MCMC sampling • Alpha expansion (MAP solution only)
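As an illustration of the MCMC option, here is a minimal Gibbs sampler sketch for a small grid-shaped MRF. It assumes an Ising-style pairwise model with labels in {-1, +1} and a single coupling strength `beta`. The model, the function name `gibbs_sweep` and all parameter values are illustrative assumptions, not taken from the talk.

```python
import math
import random

def gibbs_sweep(grid, beta, rng):
    """One in-place Gibbs sweep over a grid of +/-1 labels (Ising-style prior)."""
    h, w = len(grid), len(grid[0])
    for i in range(h):
        for j in range(w):
            # Sum of the 4-connected neighbours (missing neighbours count as 0).
            s = 0
            if i > 0:
                s += grid[i - 1][j]
            if i < h - 1:
                s += grid[i + 1][j]
            if j > 0:
                s += grid[i][j - 1]
            if j < w - 1:
                s += grid[i][j + 1]
            # p(x_ij = +1 | neighbours) when the energy is -beta * sum of x_i * x_j.
            p_plus = 1.0 / (1.0 + math.exp(-2.0 * beta * s))
            grid[i][j] = 1 if rng.random() < p_plus else -1
    return grid

rng = random.Random(0)
grid = [[rng.choice([-1, 1]) for _ in range(8)] for _ in range(8)]
for _ in range(50):
    gibbs_sweep(grid, beta=0.8, rng=rng)
print("\n".join("".join("+" if v > 0 else "-" for v in row) for row in grid))
```

Each update touches only one variable and its neighbours, which keeps a sweep cheap, but many sweeps may be needed before the samples are representative.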

Difficulty II: Learning • Gradient descent – vulnerable to local minima • Slow – must perform expensive inference at each iteration. • Can stop inference early… • Contrastive divergence • Piecewise training + variants • Need fast + accurate methods
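The idea of stopping inference early can be sketched with CD-1 contrastive divergence: the expected model statistics are replaced by statistics measured after a single Gibbs sweep started at the training data. The example below assumes a toy one-parameter Ising-style chain; the helper names (`edge_stat`, `gibbs_sweep`, `cd1_update`), the synthetic data and the learning rate are all hypothetical choices for illustration.

```python
import math
import random

def edge_stat(x):
    """Sufficient statistic: sum of products of neighbouring labels on a chain."""
    return sum(a * b for a, b in zip(x, x[1:]))

def gibbs_sweep(x, beta, rng):
    """One Gibbs sweep over a chain of +/-1 labels; returns a new list."""
    x = list(x)
    n = len(x)
    for i in range(n):
        s = (x[i - 1] if i > 0 else 0) + (x[i + 1] if i < n - 1 else 0)
        p_plus = 1.0 / (1.0 + math.exp(-2.0 * beta * s))
        x[i] = 1 if rng.random() < p_plus else -1
    return x

def cd1_update(data, beta, lr, rng):
    """One CD-1 step: data statistics minus statistics after a single sweep."""
    pos = sum(edge_stat(x) for x in data) / len(data)
    neg = sum(edge_stat(gibbs_sweep(x, beta, rng)) for x in data) / len(data)
    return beta + lr * (pos - neg)

rng = random.Random(0)

# Synthetic training data: samples drawn with a hypothetical true coupling.
true_beta, n = 0.7, 30
data = []
for _ in range(100):
    x = [rng.choice([-1, 1]) for _ in range(n)]
    for _ in range(20):
        x = gibbs_sweep(x, true_beta, rng)
    data.append(x)

beta = 0.0
for _ in range(100):
    beta = cd1_update(data, beta, lr=0.01, rng=rng)
print(beta)
```

The update uses a noisy, biased estimate of the likelihood gradient, which is the price paid for not running the sampler to convergence at every iteration.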

Difficulty III: Large cliques • For images, we want to look at patches not pairs of pixels. Therefore would like to use large cliques. • Cost of inference (memory and CPU) typically exponential in clique size. • Example: Field of Experts, Black + Roth • Training: contrastive divergence, over a week on a cluster of 50+ machines • Test: Gibbs sampling, very slow?
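The exponential cost is easy to see by counting entries in a dense potential table, which is the naive representation of a clique over discrete variables. The helper `clique_table_entries` below is a hypothetical name, and the state counts (2 for binary labels, 256 for 8-bit grey levels) are illustrative assumptions.

```python
def clique_table_entries(num_states, clique_size):
    """Number of entries in a dense potential table over one clique."""
    return num_states ** clique_size

# Clique sizes: pairs, triples, 2x2 patches, 3x3 patches, 5x5 patches.
for size in (2, 3, 4, 9, 25):
    binary = clique_table_entries(2, size)
    grey = clique_table_entries(256, size)
    print(f"clique size {size:2d}: {binary} entries (binary), {grey} entries (256 levels)")
```

A pairwise potential over 256 grey levels already needs 65,536 entries, and the count is multiplied by 256 for every extra pixel in the clique.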

Other MRF issues… • Local minima when performing inference in high-dimensional latent spaces • MRF models often require making inaccurate independence assumptions about the observations.

Conditional Random Fields (Lafferty et al., 2001) [Figure: undirected graph over variables X1, X2, X3, X4, with factors labelled 12, 23 and 234 and an additional node I]
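A CRF models the labels conditioned on the observation instead of modelling both jointly. As a sketch, assuming that I denotes the observed image and that the labels 12, 23 and 234 denote potentials over (X1, X2), (X2, X3) and (X2, X3, X4), each of which may now also depend on I:

```latex
p(x_1, x_2, x_3, x_4 \mid I)
= \frac{1}{Z(I)} \,
\psi_{12}(x_1, x_2, I) \,
\psi_{23}(x_2, x_3, I) \,
\psi_{234}(x_2, x_3, x_4, I)

Z(I) = \sum_{x_1, x_2, x_3, x_4}
\psi_{12}(x_1, x_2, I) \,
\psi_{23}(x_2, x_3, I) \,
\psi_{234}(x_2, x_3, x_4, I)
```

The normaliser $Z(I)$ depends on the image, so it has to be recomputed for each new observation.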

Examples of use in vision • Grid-shaped CRFs for pixel labelling (e.g. segmentation), using boosted classifiers.

Difficulty IV: CRF Learning • Gradient of the conditional log-likelihood = sufficient statistics of labels given the image − expected sufficient statistics given the image
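The two labels on this slide annotate the terms of the conditional log-likelihood gradient. As a sketch in assumed notation (the symbols $\theta$, $\phi$ and the training pairs $(x^{(n)}, I^{(n)})$ are not from the slide), for a CRF of the form $p(x \mid I, \theta) \propto \exp\big(\theta^{\top} \phi(x, I)\big)$:

```latex
\frac{\partial}{\partial \theta} \sum_{n=1}^{N} \log p\big(x^{(n)} \mid I^{(n)}, \theta\big)
= \sum_{n=1}^{N} \Big[
\underbrace{\phi\big(x^{(n)}, I^{(n)}\big)}_{\text{sufficient statistics of labels given the image}}
- \underbrace{\mathbb{E}_{p(x \mid I^{(n)}, \theta)}\big[\phi\big(x, I^{(n)}\big)\big]}_{\text{expected sufficient statistics given the image}}
\Big]
```

Unlike the MRF case, the expectation is conditioned on each training image, so one gradient evaluation requires a separate inference run for every image in the training set.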

Difficulty V: Scarcity of labels • CRF is a conditional model – needs labels. • Labels are expensive + increasingly hard to define. • Labels are also inherently lower dimensional than the data and hence support learning fewer parameters than generative models.