Abductive Logic Programming (ALP) and its Application in Agents and Multi-agent Systems

Abductive Logic Programming (ALP) and its Application in Agents and Multi-agent Systems
Fariba Sadri, Imperial College London
ICCL Summer School, Dresden, August 2008
Fariba Sadri ICCL 08 ALP

Contents
• ALP recap
• ALP and agents
• Abductive planning via abductive event calculus (AEC)
• Dynamic planning with AEC
• Abductive proof procedures: IFF and CIFF
• Hierarchical planning
• Reactivity
• Plan repair
• Dialogues and negotiation

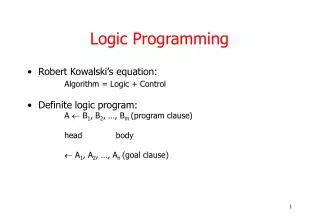

Abductive Logic Programming: Recap from Bob Kowalski’s Slides
Abductive logic programs <P, A, IC> have three components:
• P is a normal logic program.
• A is a set of abducible predicates.
• IC is a set of integrity constraints.
Often, ICs are expressed as conditionals:
If A1 & ... & An then B
A1 & ... & An → B
or as denials:
not (A1 & ... & An & not B)
A1 & ... & An & not B → false
Normally, P is not allowed to contain any clauses whose conclusion contains an abducible predicate. (This restriction can be made without loss of generality.)

ALP Semantics: Recap from Bob Kowalski’s Slides
Given an abductive logic program <P, A, IC>, an abductive answer for a goal G is a set Δ of ground atoms in terms of the abducible predicates such that:
G holds in P ∪ Δ
IC holds in P ∪ Δ, or P ∪ Δ ∪ IC is consistent.

Abduction is normally used to explain observations: Recap from Bob Kowalski’s Slides
Program P:
Grass is wet if it rained
Grass is wet if the sprinkler was on
The sun was shining
Abducible predicates A: it rained, the sprinkler was on
Integrity constraint: it rained & the sun was shining → false
Observation: Grass is wet
Two potential explanations: it rained, the sprinkler was on
The only explanation that satisfies the integrity constraint is the sprinkler was on.
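The grass example can be checked by brute force. The following is a minimal sketch, assuming a propositional program without negation and integrity constraints written as denials; the names `consequences` and `explanations` are illustrative and are not part of any ALP system mentioned in these slides.

```python
from itertools import combinations

# Definite clauses of P as (head, body) pairs; all atoms are propositional.
PROGRAM = [
    ("grass_wet", ["rained"]),
    ("grass_wet", ["sprinkler_on"]),
    ("sun_shining", []),
]
ABDUCIBLES = ["rained", "sprinkler_on"]
# Each integrity constraint is a denial: these atoms must not all hold together.
DENIALS = [["rained", "sun_shining"]]


def consequences(program, delta):
    """Least model of the program extended with the abduced atoms in delta."""
    facts = set(delta)
    changed = True
    while changed:
        changed = False
        for head, body in program:
            if head not in facts and all(atom in facts for atom in body):
                facts.add(head)
                changed = True
    return facts


def explanations(observation):
    """Minimal sets of abducibles that entail the observation and satisfy the denials."""
    found = []
    for size in range(len(ABDUCIBLES) + 1):
        for delta in combinations(ABDUCIBLES, size):
            if any(set(prev) <= set(delta) for prev in found):
                continue  # a smaller explanation is already contained in delta
            model = consequences(PROGRAM, delta)
            consistent = not any(all(a in model for a in d) for d in DENIALS)
            if observation in model and consistent:
                found.append(delta)
    return found


print(explanations("grass_wet"))
```

Enumerating subsets is exponential in the number of abducibles, so this only illustrates the definition of an abductive answer; proof procedures such as IFF search for answers in a goal-directed way instead.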

ALP and Agents
Some references for two ALP-based agent models:
• R.A. Kowalski, F. Sadri, From logic programming towards multi-agent systems. Annals of Mathematics and Artificial Intelligence, Volume 25, pages 391-419 (1999). Later developments of this model appear in later papers by Bob Kowalski.
• A. Kakas, P. Mancarella, F. Sadri, K. Stathis, F. Toni, Computational logic foundations of KGP agents. Journal of Artificial Intelligence Research. To appear.

ALP and Agents
Agents can be seen as ALPs:
• Logic programs represent beliefs (more elaborate beliefs than Agent0 or AgentSpeak)
• Abducibles represent observations and actions
• Integrity constraints represent:
  • Condition-action rules for reactivity
  • Plan repair rules
  • Communication policies
  • Obligations and prohibitions

ALP and Agents
Abductive logic programs are used for:
• Planning
• Reactivity
• Plan repair
• Negotiation

ALP and Agents: A small planning example
P:
have(X) if buy(X)
have(X) if borrow(X)
A: buy, borrow, register (actions)
IC:
buy(X) & no-money → false
buy(tv) → register(tv)
Goal: have(tv)
Δ1: buy(tv) & register(tv) (Plan 1)
Δ2: borrow(tv) (Plan 2)
If P also includes no-money, then the only solution (the only plan) is Δ2: borrow(tv).

ALP for Planning: Abductive Event Calculus (AEC)
Some references:
Original event calculus:
• R.A. Kowalski and M.J. Sergot (1986) A logic-based calculus of events. New Generation Computing, 4(1), 67-95.
Abductive event calculus:
• P. Mancarella, F. Sadri, G. Terreni, F. Toni (2004) Planning partially for situated agents. In Leite, Torroni (eds.), Computational Logic in Multi-agent Systems, CLIMA V, Lecture Notes in Computer Science, Springer, 230-248.
• Also papers by M. Shanahan.

ALP for Planning: Abductive Event Calculus (AEC)
Domain-independent rules:
holds_at(F,T2) ← happens(A,T1), T1<T2, initiates(A,T1,F), ¬clipped(T1,F,T2)
holds_at(F,T) ← initially(F), 0≤T, ¬clipped(0,F,T)
clipped(T1,F,T2) ← happens(A,T), terminates(A,T,F), T1≤T<T2

ALP for Planning: Abductive Event Calculus (AEC)
holds_at(¬F,T2) ← happens(A,T1), T1<T2, terminates(A,T1,F), ¬declipped(T1,F,T2)
holds_at(¬F,T) ← initially(¬F), 0≤T, ¬declipped(0,F,T)
declipped(T1,F,T2) ← happens(A,T), initiates(A,T,F), T1≤T<T2

AEC: Domain-dependent rules
Example:
initially(¬have(money))
initiates(buy(X), T, have(X)) ← X≠money
initiates(borrow(X), T, have(X))
terminates(buy(X), T, have(money))
precondition(buy(X), have(money))

AEC: Domain-dependent rules
Another example. Actions can be communicative actions: tell(Ag1, Ag2, Content, D)
initially(no-info(tr-ag, arrival(tr101)))
initiates(tell(X, tr-ag, inform(Q,I), D), T, have-info(tr-ag,Q,I)) ← holds_at(trustworthy(X),T)
terminates(tell(X, tr-ag, inform(Q,I), D), T, no-info(tr-ag,Q)) ← holds_at(trustworthy(X),T)
precondition(tell(tr-ag, X, inform(Q,I), D), have-info(tr-ag,Q,I))

AEC
• Abducible: happens
• Domain-independent integrity constraints:
holds_at(F,T) & holds_at(¬F,T) → false
happens(A,T) & precondition(A,P) → holds_at(P,T)
• and any domain-dependent integrity constraints, for example:
holds_at(open-shop, T) → T≥9 & T≤18
happens(tell(a, Ag, accept(R), D), T) & happens(tell(a, Ag, refuse(R), D), T) → false
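For a fully ground narrative, the positive holds_at and clipped rules and the precondition integrity constraint can be evaluated directly. The sketch below assumes integer time points and the buy/borrow domain from the earlier example; the tuple encoding of events and fluents and the helper `preconditions_ok` are illustrative choices, not taken from the slides.

```python
# An event is (action, object, time); a fluent is ("have", object).

def initiates(action, obj):
    """Fluents initiated by an action, following the buy/borrow domain rules."""
    if action == "buy" and obj != "money":
        return [("have", obj)]
    if action == "borrow":
        return [("have", obj)]
    return []


def terminates(action, obj):
    """Buying anything uses up the money."""
    return [("have", "money")] if action == "buy" else []


def precondition(action, obj):
    """Buying requires having money."""
    return [("have", "money")] if action == "buy" else []


def clipped(narrative, t1, fluent, t2):
    """True if some event terminates the fluent at a time T with t1 <= T < t2."""
    return any(
        t1 <= t < t2 and fluent in terminates(action, obj)
        for action, obj, t in narrative
    )


def holds_at(narrative, fluent, t2):
    """Positive holds_at; this domain has no positive initially facts."""
    return any(
        t1 < t2
        and fluent in initiates(action, obj)
        and not clipped(narrative, t1, fluent, t2)
        for action, obj, t1 in narrative
    )


def preconditions_ok(narrative):
    """Integrity constraint: happens(A,T) & precondition(A,P) -> holds_at(P,T)."""
    return all(
        holds_at(narrative, p, t)
        for action, obj, t in narrative
        for p in precondition(action, obj)
    )


plan = [("borrow", "money", 9), ("buy", "pc", 10)]
print(holds_at(plan, ("have", "pc"), 11), preconditions_ok(plan))
```

An abductive planner works in the opposite direction: it starts from a holds_at goal and abduces the happens atoms, whereas this sketch only checks a narrative that is already given.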

ALP with Constraints
• Notice that AEC has times and constraint predicates: T1<T2, T1≤T2, T1=T2, etc.
• We can extend the notion of abductive answer to cater for these, in the spirit of Constraint Logic Programming (CLP).
• The extension assumes a structure R consisting of:
  • a domain D(R),
  • a set of constraint predicates, and
  • an assignment of relations on D(R) for each such constraint predicate.

ALP with Constraints: Semantics
Given an ALP with constraints <P, A, IC, R>, an abductive answer for a goal G is a pair Δ = (D, C) such that D is in terms of the abducible predicates, and for all groundings σ of the variables in G, D, C such that σ satisfies C (according to R):
Gσ holds in P ∪ Dσ
IC holds in P ∪ Dσ, or P ∪ Dσ ∪ IC is consistent.

Back to AEC
Given AEC and a goal G
holds_at(g1, T1) & holds_at(g2, T2) & … & holds_at(gn, Tn)
an answer for G is a partially ordered plan for achieving G. That is, an answer for G is a pair Δ = (As, TC) where
As is a set of happens atoms,
TC is a set of temporal constraints, and
Δ is an abductive answer to G wrt AEC with constraints.

Example
Domain-dependent part:
initially(¬have(money))
initiates(buy(X), T, have(X)) ← X≠money
initiates(borrow(X), T, have(X))
terminates(buy(X), T, have(money))
precondition(buy(X), have(money))
happens(buy(X), T) → T≥9 & T≤18

Example cntd.
Goal: holds_at(have(pc), T) & T<12
Some answers (plans):
Δ1 = (happens(borrow(pc), T1), T1<T<12)
Δ2 = (happens(borrow(money), T1), happens(buy(pc), T2), T1<T2, T2≥9, T2<T<12)
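An answer with constraints stands for all of its groundings that satisfy the constraint set. As a rough illustration, the sketch below enumerates the groundings of the second plan's temporal constraints (T1<T2, T2≥9, T2<T<12), assuming that time points are integers in a bounded range; both the integer domain and the bounds are assumptions made for this example.

```python
from itertools import product


def groundings(lo, hi):
    """All (T1, T2, T) in [lo, hi] satisfying T1 < T2, T2 >= 9 and T2 < T < 12."""
    return [
        (t1, t2, t)
        for t1, t2, t in product(range(lo, hi + 1), repeat=3)
        if t1 < t2 and t2 >= 9 and t2 < t < 12
    ]


solutions = groundings(0, 12)
print(len(solutions))
print(solutions[:3])
```

A constraint solver, as used in CIFF, would keep the constraints symbolic and only check their satisfiability rather than enumerate every grounding.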

Example cntd.
Goal: holds_at(have(pc), T) & holds_at(have(tv), T)
How many answers (plans) are there?
Some answers are:
Δ1 = (happens(borrow(pc), T1), happens(borrow(tv), T2), T1<T, T2<T)
Δ2 = (happens(borrow(money), T1), happens(buy(pc), T2), happens(borrow(money), T3), happens(buy(tv), T4), T1<T2, T2≥9, T2≤18, T2<T3, T3<T4, T4≥9, T4≤18, T4<T)

Agent Cycle
[Figure: the agent cycle of Observe, Reason and Act. The agent holds goals and an ALP; it exchanges messages and observations with the environment and sees the effects of its actions.]

An ALP agent cycle with explicit time
To cycle at time T:
• record any observations at time T,
• resume thinking, giving priority to forward reasoning with the new observations,
• evaluate to false any alternatives containing sub-goals that are not marked as observations but are atomic actions to be performed at an earlier time,
• select sub-goals that are not marked as observations from among those that are atomic actions to be performed at times consistent with the current time,
• attempt to perform the selected actions,
• record the success or failure of the performed actions and mark the records as observations,
• cycle at time T+n, where n is small.
Note that selecting an action involves both selecting an alternative branch of the search space and prioritising conjoint subgoals.
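One pass of this cycle can be sketched as follows. This is a heavily simplified illustration, not the KGP or Kowalski-Sadri implementation: plans are already ground lists of (action, time) pairs, the "thinking" step is omitted, the first surviving alternative is always chosen, and `perform` is a hypothetical callback standing in for the environment.

```python
def cycle(now, alternatives, observations, new_observations, perform):
    """One pass of the agent cycle at time `now`.

    alternatives: list of plans, each a list of (action, time) pairs still to do.
    observations: list of recorded (fact, time) pairs, extended in place.
    perform: callable(action) -> bool, a stand-in for acting on the environment.
    Returns the alternatives that survive this pass.
    """
    # Record any observations made at time `now`.
    observations.extend((obs, now) for obs in new_observations)

    # An alternative with an unexecuted action scheduled in the past is false.
    alive = [plan for plan in alternatives
             if all(time >= now for _, time in plan)]

    if alive:
        # Select the actions due now from the first surviving alternative.
        due = [(action, time) for action, time in alive[0] if time == now]
        for action, _ in due:
            # Attempt the action and record the outcome as an observation.
            outcome = "succeeded" if perform(action) else "failed"
            observations.append(((action, outcome), now))
        alive[0] = [step for step in alive[0] if step not in due]
    return alive


log = []
plans = [[("borrow_pc", 1)], [("borrow_money", 2), ("buy_pc", 3)]]
print(cycle(2, plans, log, ["shop_open"], lambda action: True))
print(log)
```

In this run the first plan is dropped because its only action was due at time 1 and was never executed, which corresponds to the "evaluate to false" step of the cycle.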

Agent Cycle
Now consider using AEC in an agent cycle. We will have:
• Observations
• Plan execution
• Interleaved planning and plan execution
So we have to extend the AEC theory for this dynamic setting.

(Dynamic) AEC: Bridge Rules
Bridge rules for connecting the AEC theory to observations:
holds_at(F,T2) ← observed(F,T1), T1≤T2, ¬clipped(T1,F,T2)
holds_at(¬F,T2) ← observed(¬F,T1), T1≤T2, ¬declipped(T1,F,T2)
happens(A,T) ← executed(A,T)
happens(A,T) ← observed(_, A[T], _)
happens(A,T) ← assume_happens(A,T)
clipped(T1,F,T2) ← observed(¬F,T), T1≤T<T2
declipped(T1,F,T2) ← observed(F,T), T1≤T<T2

(Dynamic) AEC
Abducible: assume_happens
As well as abducibles we can also have a set of observables. These are fluents (or fluent literals) that can only be proved by being observed. The agent cannot plan to achieve them. In the KGP architecture they are called sensing goals. E.g. shop is open, it is raining.

Observations
In general observations can involve:
• Observable predicates
• Abducible predicates
• Defined predicates, in which case the observation may be explained through abductions.

(Dynamic) AEC
Modify the set of domain-independent integrity constraints:
holds_at(F,T) & holds_at(¬F,T) → false
assume_happens(A,T) & precondition(A,P) → holds_at(P,T)
assume_happens(A,T) & ¬executed(A,T) & time_now(T’) → T>T’

Example
Domain-dependent part:
initially(¬have(money))
initiates(buy(X), T, have(X)) ← X≠money
initiates(borrow(X), T, have(X))
terminates(buy(X), T, have(money))
precondition(buy(X), have(money))
precondition(buy(X), open-shop) (open-shop is an observable)

Example cntd.
Goal: holds_at(have(pc), T) & T<12
The agent can consider two alternative plans: to borrow a pc, or to buy a pc. Then an observation ¬open-shop (maybe a notice saying the shop is closed all day or until further notice) makes the agent focus on the first plan.

Proof Procedures for Abductive Logic Programs (with constraints)
Some references:
CIFF: Endriss U., Mancarella P., Sadri F., Terreni G., Toni F.:
• Abductive logic programming with CIFF: system description.
• The CIFF proof procedure for abductive logic programming with constraints.
Both in Proceedings of JELIA 2004.

Proof Procedures
• Endriss U., Mancarella P., Sadri F., Terreni G., Toni F., The CIFF proof procedure for abductive logic programming with constraints: theory, implementation and experiments. Forthcoming.
• A-System: Kakas A., Van Nuffelen B., Denecker M., A-system: problem solving through abduction. In Proceedings of the 17th International Joint Conference on Artificial Intelligence, 2001, 591-596.

Proof Procedure: CIFF
• Builds on earlier work by Fung and Kowalski: The IFF proof procedure for abductive logic programming. Journal of Logic Programming, 1997.
• CIFF adds constraint satisfaction and a few other features for efficiency and extended applicability.
• Here we review the IFF proof procedure.

IFF
Roughly speaking: given an ALP <P, A, IC>, IFF reasons forwards with IC and backwards with the selective completion of P (wrt non-abducibles).

IFF: Selective Completion
Example of selective completion (wrt non-abducibles):
P:
have(X) if buy(X)
have(X) if borrow(X)
A: buy, borrow (actions)
IC: buy(X) & no-money → false
Selective completion of P is:
have(X) iff buy(X) or borrow(X)
no-money iff false
i.e. the abducibles are open predicates, the rest are completed. We can also designate the observables as open predicates.

IFF: Backward reasoning (Unfolding)
Backward reasoning (unfolding) uses iff-definitions to reduce atomic goals (and observations) to disjunctions of conjunctions of sub-goals.
E.g. a goal have(pc) will be unfolded to buy(pc) or borrow(pc).
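Selective completion and a single unfolding step can be sketched for the have/buy/borrow example. This is a minimal illustration assuming unary predicates that all share one argument; the list-of-lists encoding of a disjunction of conjunctions and the function names are assumptions made here, not the data structures of the IFF or CIFF systems.

```python
# Clauses of P as (head predicate, list of body predicates), all over one argument X.
CLAUSES = [("have", ["buy"]), ("have", ["borrow"])]
ABDUCIBLES = {"buy", "borrow"}
ALL_PREDICATES = {"have", "buy", "borrow", "no_money"}


def selective_completion(clauses, abducibles, predicates):
    """Map each non-abducible predicate to its iff-definition (a list of disjuncts)."""
    definitions = {}
    for head, body in clauses:
        definitions.setdefault(head, []).append(body)
    # A non-abducible predicate with no clauses is completed to false (no disjuncts).
    return {p: definitions.get(p, []) for p in predicates if p not in abducibles}


def unfold(pred, arg, completion):
    """Replace a defined atom by the disjunction of its instantiated bodies."""
    if pred not in completion:
        return [[(pred, arg)]]  # open predicate: the atom is left as it is
    return [[(b, arg) for b in body] for body in completion[pred]]


completion = selective_completion(CLAUSES, ABDUCIBLES, ALL_PREDICATES)
print(unfold("have", "pc", completion))
```

An empty list of disjuncts plays the role of false, so unfolding no_money in this encoding yields an empty disjunction.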

IFF: Forward reasoning (Propagation)
Forward reasoning (propagation) tests and actively maintains consistency and the integrity constraints by matching a new observation or new atomic goal p with a condition of an implicational goal p & q → r to derive the new implicational goal q → r.
q can be reduced to subgoals by backward reasoning (unfolding) or can be removed by forward reasoning (propagation).
r is added as a new goal (after p & q has been removed). r can then trigger forward reasoning or can be reduced to sub-goals. r is (in general) a disjunction of conjunctions of sub-goals.

IFF: Forward reasoning (Propagation)
In the example propagation will give:
[buy(pc) & (no-money → false)] or borrow(pc).
Suppose now we observe no-money. Another propagation step will give:
[buy(pc) & false] or borrow(pc).

IFF: Some Other Inference Rules
• Logical equivalences replace a subformula by another formula which is both logically equivalent and simpler. These include the following equivalences used as rewrite rules:
G & true iff G
G & false iff false
D or true iff true
D or false iff D
[true → D] iff D
[false → D] iff true
• Splitting uses distributivity to replace a formula of the form (D or D') & G by (D & G) or (D' & G).
• Factoring unifies two atomic subgoals P(t) & P(s), replacing them by the equivalent formula [P(t) & s=t] or [P(t) & P(s) & s≠t].
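The logical-equivalence rewrite rules can be applied mechanically. The sketch below assumes a small formula encoding chosen for illustration: atoms are strings, truth values are Python booleans, and compound formulas are tuples ("and", f, g), ("or", f, g) or ("implies", f, g). It covers only the six equivalences listed, not splitting or factoring.

```python
def simplify(f):
    """Apply the true/false equivalences bottom-up to a formula tree."""
    if not isinstance(f, tuple):
        return f  # an atom (str) or a truth value (bool)
    op, left, right = f[0], simplify(f[1]), simplify(f[2])
    if op == "and":
        if left is False or right is False:
            return False      # G & false iff false
        if left is True:
            return right      # G & true iff G
        if right is True:
            return left
    elif op == "or":
        if left is True or right is True:
            return True       # D or true iff true
        if left is False:
            return right      # D or false iff D
        if right is False:
            return left
    elif op == "implies":
        if left is True:
            return right      # [true -> D] iff D
        if left is False:
            return True       # [false -> D] iff true
    return (op, left, right)


# The formula reached after observing no-money in the propagation example.
formula = ("or", ("and", "buy(pc)", False), "borrow(pc)")
print(simplify(formula))
```

Applied to [buy(pc) & false] or borrow(pc), the rules remove the failed disjunct and leave borrow(pc) as the only remaining plan.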

IFF: Negation
Two versions:
• Negation re-writing: ¬p is re-written as p → false.
• Combining negation re-writing and negation as failure (NAF): some negative literals are marked. These are evaluated using special inference rules that provide the effect of NAF.

IFF: Search Space
The search space is represented by a logical formula, e.g.
[happens(borrow(money), T1) & happens(buy(pc), T2) & T1<T2 & happens(borrow(tv), T3) & (precondition(buy(pc), P) → holds_at(P, T2))]
or
[happens(borrow(pc), T) & happens(borrow(tv), T’)]
Each disjunct is analogous to an alternative branch of a Prolog-like search space.

Notice - 1
• Propagation together with unfolding allows ECA (event-condition-action) or condition-action rule type of behaviour:
E & C → A
E & C → G
• E: trigger via propagation
• C: evaluate via propagation or unfolding
• A or G: fires the action or goal

Notice - 2
Factoring allows repeating actions at a later stage if an earlier attempt has not been effective.
Scenario: e-shopping. The agent logs on with one credit card and chooses an item, then the card fails because there are not enough funds.
Given:
happens(logon(Card), T) & happens(logon(visa), 5) & Rest(Card)
where happens(logon(Card), T) is a planned action and happens(logon(visa), 5) is a recorded observation, factoring obtains:
[happens(logon(Card), T) & Card=visa & T=5 & Rest(Card)]
or
[happens(logon(Card), T) & happens(logon(visa), 5) & (T≠5 or Card≠visa) & Rest(Card)]

Notice - 2 cntd.
• So if Rest(Card) fails with Card=visa & T=5, the 2nd disjunct allows further attempts:
  • either to log on with a different card at a later time,
  • or to log on with visa at a later time.
• Notice also that any work done in Rest(Card) in the first attempt is saved and available in the 2nd disjunct, for example the choice of item to buy.

Semantics
• Let D be a conjunction of definitions in iff-form.
• Let G be a goal, i.e. a conjunction of literals.
• Let IC be a set of integrity constraints.
• Let O be a conjunction of positive and negative observations, including actions successfully or unsuccessfully performed by the agent.
Δ_G & Δ_O is an answer iff all the below hold:
• Δ_G and Δ_O are both conjunctions of formulae in the abducible and constraint (e.g. =) (or observable) predicates
• D & Δ_G & Δ_O |= G
• D & Δ_O |= O
• D & Δ_G & Δ_O |= IC

AEC for Hierarchical Planning
• So far you have seen AEC formalised for planning from first principles.
• This may not be ideal in an agent setting.
• AEC lends itself to formalising plan libraries, macro-actions, and hierarchical and progressive planning.
• We will look at some of these through examples.

AEC for Hierarchical Planning
A reference: M. Shanahan, An abductive event calculus planner. The Journal of Logic Programming, Vol 44, 2000, 207-239.

AEC for Hierarchical Planning: Example (from Shanahan’s paper)
Robot mail delivery domain
Primitive actions:
initiates(pickup(P), T, got(P)) ← holds_at(in(R),T), holds_at(in(P,R),T)
initiates(putdown(P), T, in(P,R)) ← holds_at(in(R),T), holds_at(got(P),T)
initiates(gothrough(D), T, in(R1)) ← holds_at(in(R2),T), connects(D,R2,R1)
terminates(gothrough(D), T, in(R)) ← holds_at(in(R),T)

AEC for Hierarchical Planning: Example cntd.
Compound action:
happens(shiftPack(P,R1,R2,R3), T1, T6) ←
happens(goToRoom(R1,R2), T1, T2),
¬clipped(T2, in(R2), T3),
¬clipped(T1, in(P,R2), T3),
happens(pickup(P), T3),
happens(goToRoom(R2,R3), T4, T5),
¬clipped(T3, got(P), T6),
¬clipped(T5, in(R3), T6),
happens(putdown(P), T6),
T2<T3<T4, T5<T6

AEC for Hierarchical Planning: Example cntd.
[Figure: shiftPack(P, R1, R2, R3) decomposed into the sequence goToRoom(R1,R2), pickup(P), goToRoom(R2,R3), putdown(P), with the effect of each step maintained until the next step.]